We often discuss how powerful Large Language Models (LLMs) are, yet we hardly know what goes on inside them. They are black boxes to most of us, and even to their creators.

Today, Google DeepMind punched a sizable hole in that box with the launch of Gemma Scope 2.

This isn’t just a model update; it’s an enormous suite of “interpretability tools” that helps researchers visualize the internal decision-making of the entire Gemma 3 family.


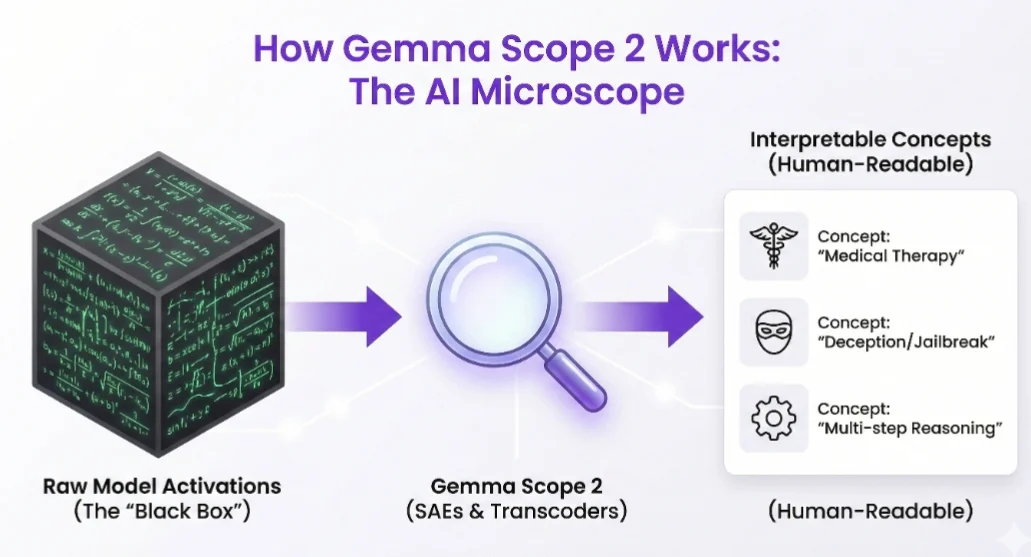

If Gemma 3 is the engine, Gemma Scope 2 is both the see-through hood and the diagnostic computer.

The “Largest Ever” Open Release

Introducing Gemma Scope 2

🤗Largest open release of interpretability tools (over 1 trillion parameters trained!)

🔬Works as a microscope to analyze all Gemma 3 models' internal activations

🗣️Advanced tools for analyzing chat behaviors

Omar Sanseviero (@osanseviero), December 19, 2025

The scale of this release is awe-inspiring. Google says that developing these tools required storing around 110 petabytes of data and training over 1 trillion parameters in total, just for interpretability.

That makes it the largest open-source release of interpretability tools by an AI lab to date. It spans every size in the Gemma 3 range, from the tiny 270M-parameter model to the mighty 27B variant.

A “Microscope” for Neural Networks

A Google spokesperson has described Gemma Scope 2 as a “microscope” for language models. But how does it actually work?

It is built on state-of-the-art techniques such as sparse autoencoders (SAEs) and transcoders. Think of them as translators perched on top of the model’s “neurons.”

They take the raw, messy numerical activations inside the AI and translate them into human-understandable concepts, such as “this feature activates when the model is thinking about cancer therapy” or “this feature responds to a lie.”
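
To make the “translator” idea concrete, here is a toy sparse autoencoder in plain Python. It is a minimal sketch under simplifying assumptions, not Gemma Scope 2’s code: the class and function names, the dimensions, and the random weights are all hypothetical, and it uses a plain ReLU with an L1 sparsity penalty (the original Gemma Scope used a JumpReLU variant). The encoder maps one activation vector into a much wider space where only a few features switch on, and the decoder rebuilds the activation from those features.

```python
import random

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def rand_matrix(rows, cols, rng, scale=0.1):
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]

class SparseAutoencoder:
    """Toy SAE: maps a d_model activation to d_sae (wider) sparse features and back."""

    def __init__(self, d_model, d_sae, seed=0):
        rng = random.Random(seed)
        self.W_enc = rand_matrix(d_sae, d_model, rng)   # one row per feature
        self.b_enc = [0.0] * d_sae
        self.W_dec = rand_matrix(d_model, d_sae, rng)   # column i = feature i's direction
        self.b_dec = [0.0] * d_model

    def encode(self, x):
        # Center on the decoder bias, project into the wide feature space,
        # and keep only positive activations (ReLU) so most features stay at zero.
        centered = [xi - bi for xi, bi in zip(x, self.b_dec)]
        pre = matvec(self.W_enc, centered)
        return [max(0.0, p + b) for p, b in zip(pre, self.b_enc)]

    def decode(self, f):
        # Rebuild the activation as a weighted sum of feature directions.
        return [r + b for r, b in zip(matvec(self.W_dec, f), self.b_dec)]

    def loss(self, x, l1_coeff=1e-3):
        # Reconstruction error plus an L1 penalty that pushes features toward sparsity.
        f = self.encode(x)
        x_hat = self.decode(f)
        mse = sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)
        return mse + l1_coeff * sum(f)  # f >= 0, so sum(f) is its L1 norm

def top_features(f, k=3):
    """Indices of the k most active features (the ones a researcher would try to label)."""
    active = [i for i, v in enumerate(f) if v > 0.0]
    return sorted(active, key=lambda i: f[i], reverse=True)[:k]

sae = SparseAutoencoder(d_model=8, d_sae=32)
activation = [0.3, -1.2, 0.8, 0.0, 2.1, -0.5, 0.7, 1.0]  # made-up activation vector
features = sae.encode(activation)
print(top_features(features))
```

In a real SAE the weights are trained on enormous numbers of activations, and researchers then inspect which inputs make each feature fire before attaching a label such as “cancer therapy.” The random weights here only illustrate the shapes and the data flow.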


Key Technical Upgrades:

- Full Coverage: Unlike the previous version, this suite covers every layer of the Gemma 3 models, not just a selection.

- Complex Wiring: It also includes skip-transcoders and cross-layer transcoders, which let researchers trace how a “thought” develops across multiple layers of the model.

- Matryoshka Training: The team used a training technique known as Matryoshka training, which helps the tools surface more useful concepts and fixes shortcomings found in the previous version.
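
The transcoder and Matryoshka ideas can be sketched together in the same toy style. This is an illustrative assumption, not Google’s implementation: the skip-transcoder here reads an MLP layer’s input and predicts that layer’s output from sparse features plus a linear skip path, and the Matryoshka-style loss sums reconstruction errors over nested prefixes of the feature list, so the first few features must do useful work on their own. All names, sizes, and prefix lengths are hypothetical, and cross-layer transcoders (whose features write to several later layers) are not shown.

```python
import random

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def rand_matrix(rows, cols, rng, scale=0.1):
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]

class SkipTranscoder:
    """Toy skip-transcoder: approximates an MLP layer's input -> output map
    with sparse features plus a linear skip connection."""

    def __init__(self, d_model, d_features, seed=0):
        rng = random.Random(seed)
        self.W_enc = rand_matrix(d_features, d_model, rng)
        self.b_enc = [0.0] * d_features
        self.W_dec = rand_matrix(d_model, d_features, rng)
        self.b_dec = [0.0] * d_model
        self.W_skip = rand_matrix(d_model, d_model, rng)  # linear path around the features

    def features(self, mlp_in):
        # ReLU keeps features non-negative and mostly zero.
        pre = matvec(self.W_enc, mlp_in)
        return [max(0.0, p + b) for p, b in zip(pre, self.b_enc)]

    def predict(self, mlp_in, f, m=None):
        # Use only the first m features (all of them when m is None).
        m = len(f) if m is None else m
        f_prefix = f[:m] + [0.0] * (len(f) - m)
        dec = matvec(self.W_dec, f_prefix)
        skip = matvec(self.W_skip, mlp_in)
        return [d + s + b for d, s, b in zip(dec, skip, self.b_dec)]

def matryoshka_loss(tc, mlp_in, mlp_out, prefix_sizes, l1_coeff=1e-3):
    """Sum of MSE terms, one per nested prefix of the feature dictionary."""
    f = tc.features(mlp_in)
    total = 0.0
    for m in prefix_sizes:
        pred = tc.predict(mlp_in, f, m)
        total += sum((p - y) ** 2 for p, y in zip(pred, mlp_out)) / len(mlp_out)
    return total + l1_coeff * sum(f)  # f >= 0, so sum(f) is its L1 norm

tc = SkipTranscoder(d_model=4, d_features=8)
mlp_in = [0.5, -1.0, 0.25, 2.0]   # made-up MLP input
mlp_out = [0.1, 0.2, -0.3, 0.4]   # made-up MLP output to imitate
print(matryoshka_loss(tc, mlp_in, mlp_out, prefix_sizes=[2, 4, 8]))
```

Summing over prefixes is what gives the method its name: like nested Russian dolls, each smaller dictionary sits inside the larger one, which encourages early features to capture broad concepts and later ones to refine them.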

Why This Matters: Debugging the “Black Box”

This isn’t just academic. As models grow, they exhibit “emergent behaviors”: abilities that were never explicitly programmed into them. Sometimes that’s a good thing (such as picking up new scientific knowledge), and sometimes it’s dangerous (such as susceptibility to jailbreaking or manipulation).

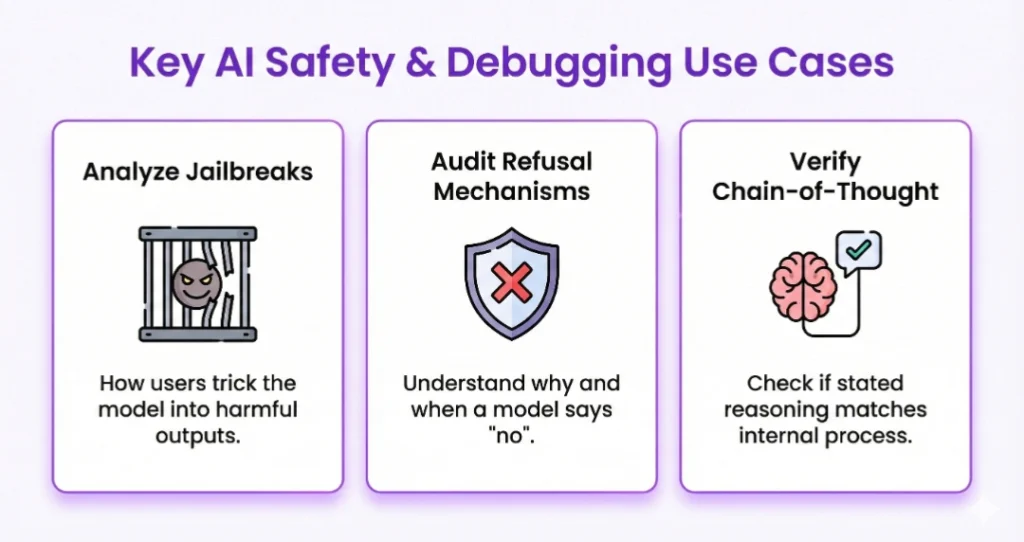

Gemma Scope 2 has specific functionality for investigating chatbot behaviors, allowing researchers to study:

- Jailbreaks: How users fool the model.

- Refusal Mechanisms: Where and why a model says “no.”

- Chain-of-Thought Faithfulness: Whether a model’s internal reasoning is consistent with what it actually communicates to the user.

By opening up these tools, Google is essentially crowdsourcing the safety audit of its latest models.