Xiaomi MiMo V2 Flash: Just when we thought the “open vs. closed” debate in AI was starting to settle down, Xiaomi threw a spanner in the works.

The company has officially launched and open-sourced MiMo-V2-Flash, a foundation model that not only chases down the pack leaders but, on some benchmarks, beats them at the tape.

This is not a small experimental release. Xiaomi has released a huge 309-billion-parameter beast that moves at the speed of a lightweight model, courtesy of a novel architectural trick.

It’s fast, powerful, and built specifically for the complex reasoning and coding tasks that individual developers have been struggling with.

The Active Secret: 309B Intelligence, 15B Cost

The headline spec is a bit confusing at first glance: 309B total parameters, yet only 15B active. What does that mean?

MiMo-V2-Flash is built on a Mixture-of-Experts (MoE) architecture. Think of it as a vast library of 309 billion parameters grouped into specialized “experts.” When the model produces, say, the word “forest,” only the roughly 15 billion most relevant parameters are activated.

- The Result: You get the deep knowledge of a gigantic model with the inference speed (and cost) of a much smaller one.

- Hybrid Attention: It uses a new attention mechanism that combines “Sliding Window” attention with full “Global” attention at a ratio of 5:1. This lets it handle an ultra-long 256k-token context window without eating up all your GPU memory.
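To make the “only 15B active” idea concrete, here is a minimal sketch of top-k expert routing, the general technique behind MoE layers. It is a toy illustration, not Xiaomi's implementation: the expert count (8), the number of active experts (2), the router scores, and the function names `route_top_k` and `moe_layer` are all assumptions chosen for readability.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Return (expert_index, weight) pairs for the k highest-scoring experts."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)  # renormalise over the chosen experts
    return [(i, probs[i] / norm) for i in top]

def moe_layer(x, experts, router_scores, k):
    """Run only the selected experts and mix their outputs; the rest stay idle."""
    chosen = route_top_k(router_scores, k)
    return sum(weight * experts[i](x) for i, weight in chosen)

# Hypothetical toy setup: 8 tiny "experts", 2 active per token.
experts = [lambda x, scale=s: scale * x for s in range(1, 9)]
scores = [0.1, 2.0, -1.0, 0.5, 3.0, 0.0, -0.5, 1.0]  # made-up router scores

chosen = route_top_k(scores, k=2)
output = moe_layer(1.0, experts, scores, k=2)

# Figures quoted in the article: 309B total parameters, 15B active per token.
active_fraction = 15 / 309

print("experts used:", [i for i, _ in chosen])
print("share of parameters active per token: {:.1%}".format(active_fraction))
```

The point of the sketch is that the six unselected experts are never executed for this token, which is why compute per token tracks the active parameter count rather than the total. Using the article's figures, that is a little under 5% of the model per token.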

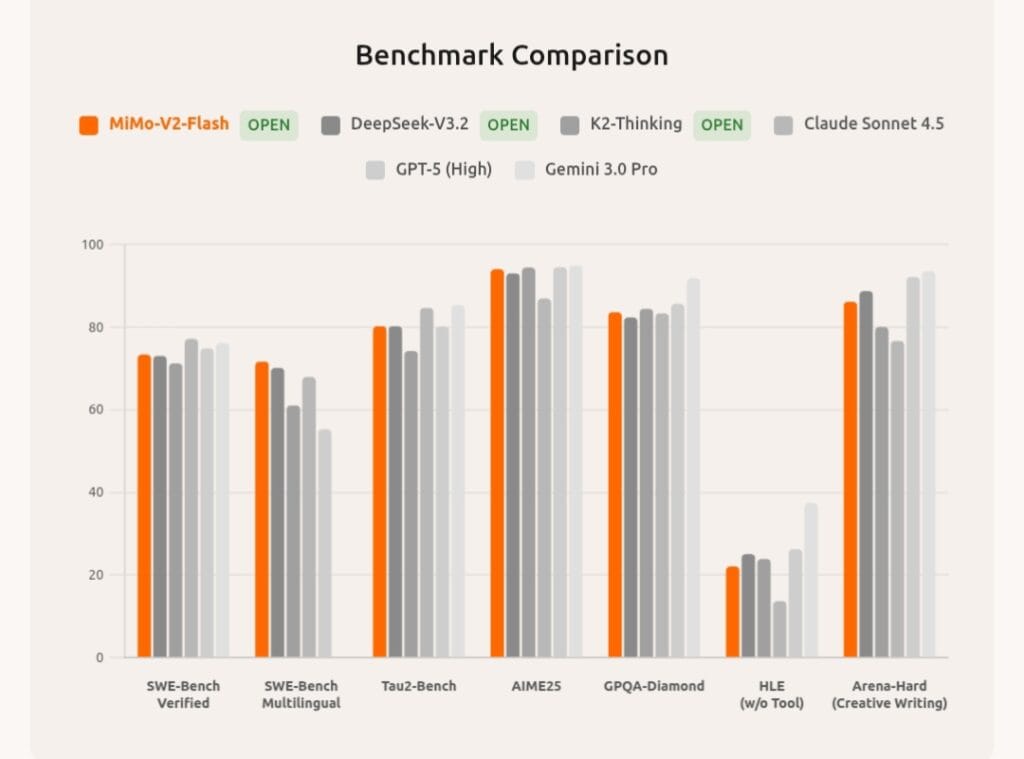

Benchmarks: A New Coding & Math Heavyweight

Xiaomi is marketing this as a special-purpose model for “Reasoning, Coding, and Agentic” tasks. I’ve looked at the numbers, and they support the claim.

- Math (AIME 2025): It achieves 94.1% accuracy, ranking in the top two among open-source models and surpassing well-known giants such as DeepSeek-V3.2 (93.1%).

- Science (GPQA-Diamond): It achieves 83.7%, suggesting it can handle PhD-level science questions.

- Coding (SWE-bench Verified): This is the big one. It achieves 73.4%, ranking #1 among all open-source models and landing on par with top-tier closed models.

Vibe Coding & The Hybrid Brain

What makes MiMo-V2-Flash special is not so much the model itself as how seamlessly it fits into modern workflows. It has a “Hybrid Thinking Mode,” which lets users choose whether the model “thinks” deeply (for complex logic) or answers quickly (for fast-paced tasks).

It is also designed specifically for vibe coding, the AI-assisted programming workflow. It integrates nicely with scaffolding tools such as Claude Code, Cursor, and Cline. It also cleanly separates structural markup from presentation details and can produce functional HTML pages in a single click.

With a baseline price of $0.10 per million tokens, Xiaomi isn’t just releasing a highly capable model at a low price; it is trying to commoditize high-end intelligence.
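For a rough sense of scale, the quoted price can be turned into a back-of-the-envelope estimate. This is only a sketch: it assumes the $0.10 baseline rate applies uniformly to every token counted, treats “256k” as 256,000 tokens, and uses a hypothetical helper named `estimate_cost_usd`. Real pricing may distinguish input from output tokens.

```python
PRICE_PER_MILLION_TOKENS_USD = 0.10  # baseline price quoted in the article

def estimate_cost_usd(tokens, price_per_million=PRICE_PER_MILLION_TOKENS_USD):
    """Estimate cost in USD, assuming one flat per-token rate (an assumption)."""
    return tokens / 1_000_000 * price_per_million

# Assumed token counts for illustration only.
full_context_cost = estimate_cost_usd(256_000)        # one full 256k-token context
billion_token_cost = estimate_cost_usd(1_000_000_000)  # a billion tokens

print("one full context: ${:.4f}".format(full_context_cost))
print("one billion tokens: ${:.2f}".format(billion_token_cost))
```

Under these assumptions, filling the entire context window once costs under three cents, and a billion tokens comes to about $100.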