What is Alpamayo 1?

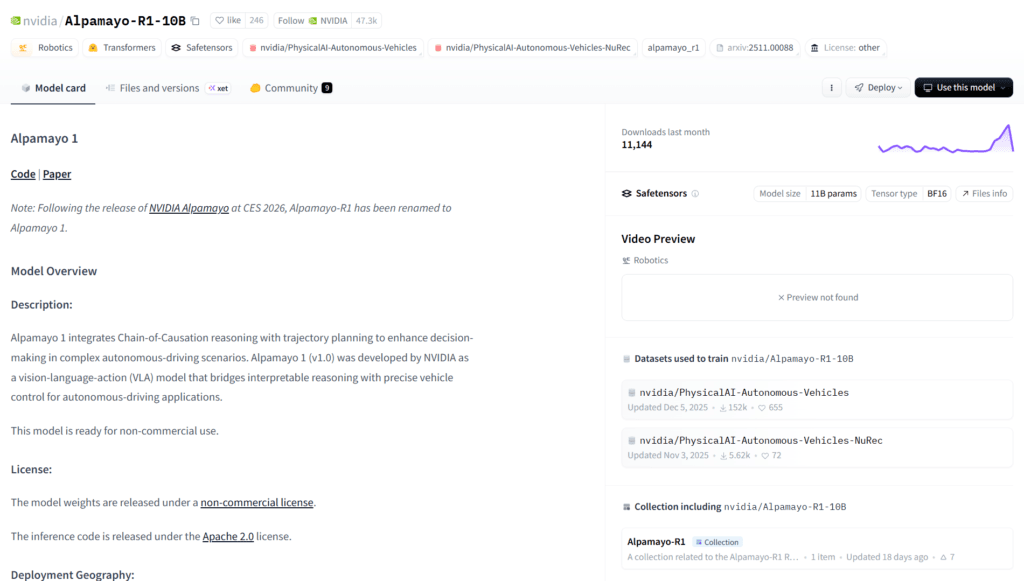

Alpamayo 1 (formerly Alpamayo-R1) is NVIDIA’s open vision-language-action (VLA) model for autonomous driving. It combines Chain-of-Causation reasoning with trajectory planning to handle complex driving scenarios.

When was Alpamayo 1 released?

The research paper was published on October 28, 2025. The full open weights and the surrounding ecosystem were released at CES 2026 in January 2026.

Is Alpamayo 1 free to use?

Yes, for research. The model weights are available on Hugging Face under a non-commercial license, and the inference code on GitHub is free to use for research.

What size is Alpamayo 1?

It is a 10-billion-parameter model that combines a Cosmos-Reason backbone with a diffusion-based trajectory decoder.

How can I run Alpamayo 1?

Download the weights from Hugging Face and run the GitHub inference scripts on an NVIDIA GPU with at least 24 GB of VRAM. For closed-loop testing, integrate the model with the AlpaSim simulator.
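The 24 GB requirement can be sanity-checked with simple arithmetic. The sketch below estimates only the memory needed to hold the weights. It assumes the checkpoint is loaded at 16-bit precision (2 bytes per parameter), which the source does not state; the helper name `weight_memory_gb` is made up for illustration.

```python
# Rough estimate of the GPU memory needed just to hold the model weights.
# Assumption: 16-bit weights (2 bytes per parameter). The precision of the
# released checkpoint is not stated in this article.

BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp16": 2, "int8": 1}


def weight_memory_gb(num_params: float, dtype: str = "bf16") -> float:
    """Return the weight footprint in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    params = 10e9  # 10 billion parameters, as stated in the article
    for dtype in ("fp32", "bf16", "int8"):
        print(f"{dtype}: {weight_memory_gb(params, dtype):.0f} GB")
```

Under that assumption the weights alone take about 20 GB, which leaves only a few gigabytes of a 24 GB card for activations and video inputs. At 32-bit precision the same weights would need about 40 GB.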

What license does Alpamayo 1 have?

The current release is licensed for non-commercial use only. NVIDIA has indicated that future models in the Alpamayo family may include commercial options.

What is the goal of Alpamayo 1?

Its goal is to enable transparent, reasoning-based Level 4 autonomous driving: the model explains its decisions in natural language while generating safe trajectories.

Who developed Alpamayo 1?

It was developed by the NVIDIA Research team, with contributions from many engineers, as part of NVIDIA’s Physical AI initiative.

Alpamayo 1

About This AI

Alpamayo 1 is NVIDIA’s pioneering open-source vision-language-action (VLA) model designed to advance reasoning-based autonomous driving.

It integrates Chain-of-Causation reasoning with trajectory prediction, enabling vehicles to perceive environments from video/sensor inputs, reason causally in natural language, and generate safe, precise driving actions.

The 10-billion-parameter model combines a Cosmos-Reason backbone (for interpretable reasoning) with a diffusion-based trajectory decoder (for real-time control).

Key strengths include handling of long-tail, complex scenarios and transparency through explainable reasoning traces. NVIDIA reports state-of-the-art results for reasoning, alignment, safety, latency, and trajectory quality, validated through open-loop metrics, closed-loop simulations, and real-world vehicle tests.
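To make "open-loop metrics" concrete, here is a minimal sketch of two metrics commonly used in trajectory prediction: average displacement error (ADE) and final displacement error (FDE). The article does not say which metrics NVIDIA used, so this is an illustrative example only, and the function name is hypothetical.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]  # (x, y) position in metres


def displacement_errors(pred: Sequence[Point], truth: Sequence[Point]) -> Tuple[float, float]:
    """Return (ADE, FDE) for two trajectories sampled at the same timestamps."""
    if len(pred) != len(truth) or not pred:
        raise ValueError("trajectories must be non-empty and equally long")
    # Euclidean distance between predicted and recorded position at each step.
    dists = [math.dist(p, t) for p, t in zip(pred, truth)]
    ade = sum(dists) / len(dists)  # mean error over the whole horizon
    fde = dists[-1]                # error at the final waypoint
    return ade, fde


if __name__ == "__main__":
    predicted = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
    recorded = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0)]
    print(displacement_errors(predicted, recorded))
```

Open-loop evaluation of this kind compares a predicted path against a logged human drive without letting the model's actions change the scene. Closed-loop simulation, by contrast, feeds the model's actions back into the environment.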

The initial paper appeared in October 2025, and the full open weights and ecosystem followed at CES 2026 in January 2026. The model is part of NVIDIA’s Alpamayo family, alongside the AlpaSim simulator and the Physical AI datasets.

Weights are available on Hugging Face under a non-commercial license, with inference code on GitHub for research and development.

Alpamayo 1 targets Level 4 autonomy research, offering a foundation for fine-tuning, distillation, reasoning evaluators, and auto-labeling in safe, transparent AV systems.

As an open toolchain, it accelerates physical AI progress by making human-like reasoning accessible to the AV community.

Key Features

- Chain-of-Causation Reasoning: Generates interpretable causal reasoning traces for every decision, improving transparency and safety auditing

- Vision-Language-Action Architecture: Processes video/sensor inputs, reasons in language, and outputs precise vehicle trajectories

- 10 Billion Parameters: Combines Cosmos-Reason backbone with diffusion trajectory decoder for high-fidelity control

- Real-Time Trajectory Generation: Produces dynamically feasible paths with low latency suitable for on-vehicle inference

- Long-Tail Scenario Handling: Excels in rare/complex driving situations through reasoning rather than pattern matching

- Open Weights and Inference: Safetensors on Hugging Face and open-source code on GitHub for research use

- Simulation Integration: Works with AlpaSim open-source simulator for closed-loop testing

- Dataset Support: Trained/evaluated with open Physical AI AV datasets for reproducible research

- Multi-Stage Training: Supervised fine-tuning plus RL for reasoning-action consistency and quality optimization

Price Plans

- Free ($0): Full open-source model weights, inference code, and related datasets available under non-commercial license for research and development

- Future Commercial (TBD): NVIDIA indicates upcoming models in the family may include commercial licensing options

Pros

- First open reasoning VLA for AV: Pioneers transparent, explainable decision-making in autonomous driving research

- State-of-the-art performance: Reported to lead in reasoning, trajectory quality, safety, alignment, and latency benchmarks

- Transparency and auditability: Reasoning traces allow developers to understand and debug decisions

- Open ecosystem: Includes model weights, inference scripts, simulator, and datasets for full toolchain

- Generalizable to long-tail cases: Reasoning enables handling rare scenarios beyond training data

- Foundation for future work: Suitable for fine-tuning, distillation, and reasoning evaluators; commercial adaptations depend on future licensing

- NVIDIA hardware optimization: Runs on NVIDIA GPUs with at least 24 GB of VRAM

Cons

- Non-commercial license: Weights restricted to research/non-commercial use; commercial options in future models

- High hardware requirements: 10B model needs powerful GPUs (24GB+ VRAM) for inference

- Research-focused: Not production-ready for consumer AV; aimed at developers and academia

- Complex setup: Requires familiarity with Hugging Face, GitHub code, and AV simulation pipelines

- No hosted inference: Must run locally or on own infrastructure; no easy cloud demo

- Early stage: Released late 2025/early 2026; community tools and fine-tunes still emerging

- Limited modalities: Primarily vision + language to action; may need extensions for full sensor fusion

Use Cases

- Autonomous driving research: Develop and evaluate reasoning-based AV algorithms in simulations and real tests

- Trajectory prediction: Generate safe, explainable paths in complex urban or edge-case scenarios

- Safety auditing: Use reasoning traces to analyze and verify decision logic for compliance

- Simulation testing: Pair with AlpaSim for closed-loop evaluation of reasoning models

- Fine-tuning and distillation: Adapt model to specific vehicle platforms or reduce size for on-board deployment

- Physical AI exploration: Advance vision-language-action models beyond driving to robotics

- Dataset creation: Leverage reasoning outputs for auto-labeling or synthetic data generation

Target Audience

- AV researchers and academics: Studying reasoning, safety, and long-tail generalization in driving AI

- Autonomous vehicle developers: Building next-gen systems with explainable decisions

- AI and robotics engineers: Experimenting with VLA models for physical world interaction

- NVIDIA ecosystem users: Leveraging DRIVE platform or GPUs for AV prototyping

- Simulation specialists: Combining with AlpaSim for high-fidelity testing

- Physical AI innovators: Exploring open toolchains for embodied intelligence

How To Use

- Download weights: Get the safetensors files from the Hugging Face repository nvidia/Alpamayo-R1-10B

- Clone repo: Run git clone https://github.com/NVlabs/alpamayo to get the inference scripts

- Install dependencies: Use provided requirements for PyTorch, transformers, diffusers, etc.

- Run inference: Load the model on a GPU with at least 24 GB of VRAM and pass in video frames plus optional commands

- Generate output: Model produces reasoning trace + trajectory prediction

- Test in simulation: Integrate with AlpaSim for closed-loop evaluation

- Fine-tune: Adapt using open datasets or custom driving data (research only)
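The first three steps above can be sketched as a short script that assembles the setup commands. The repository URL and model ID come from the steps above. The `requirements.txt` file name and the local directory names are assumptions, so check the repository's README for the actual install instructions. The `huggingface-cli download` command ships with the `huggingface_hub` package. The inference step is left out on purpose, because the script names are not given in this article.

```python
import shlex
from typing import List

REPO_URL = "https://github.com/NVlabs/alpamayo"  # from the steps above
MODEL_ID = "nvidia/Alpamayo-R1-10B"              # from the steps above


def setup_commands(workdir: str = "alpamayo", weights_dir: str = "weights") -> List[List[str]]:
    """Build the commands for cloning, installing, and downloading weights."""
    return [
        ["git", "clone", REPO_URL, workdir],
        # Assumption: the repo provides a requirements.txt at its top level.
        ["pip", "install", "-r", f"{workdir}/requirements.txt"],
        # Downloads every file in the model repo into weights_dir.
        ["huggingface-cli", "download", MODEL_ID, "--local-dir", weights_dir],
    ]


if __name__ == "__main__":
    # Dry run: print the commands instead of executing them.
    for cmd in setup_commands():
        print(shlex.join(cmd))
```

Printing the commands first makes it easy to review them before running anything; each list can then be passed to `subprocess.run(cmd, check=True)` to execute it.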

How we rated Alpamayo 1

- Performance: 4.8/5

- Accuracy: 4.7/5

- Features: 4.6/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 3.8/5

- Customization: 4.5/5

- Data Privacy: 5.0/5

- Support: 4.2/5

- Integration: 4.4/5

- Overall Score: 4.6/5
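The overall score is consistent with an unweighted mean of the nine category ratings, rounded to one decimal place. That this is how the score was derived is an assumption; the article does not describe the method. A quick check:

```python
from statistics import mean

# Category ratings copied from the list above.
ratings = {
    "Performance": 4.8,
    "Accuracy": 4.7,
    "Features": 4.6,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 3.8,
    "Customization": 4.5,
    "Data Privacy": 5.0,
    "Support": 4.2,
    "Integration": 4.4,
}

# Assumption: equal weight per category. 41.0 / 9 is roughly 4.56.
overall = round(mean(ratings.values()), 1)
print(overall)
```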

Alpamayo 1 integration with other tools

- Hugging Face: Model weights hosted for easy download and inference with transformers library

- GitHub: Open-source inference scripts and evaluation code available in NVlabs/alpamayo repo

- AlpaSim Simulator: Native compatibility with NVIDIA's open AV simulation framework for testing

- Physical AI Datasets: Trained and evaluated on open NVIDIA datasets for reproducible research

- NVIDIA GPUs: Optimized for high-VRAM NVIDIA hardware (e.g., A100/H100) for efficient inference

Best prompts optimised for Alpamayo 1

- N/A - Alpamayo 1 is a specialized vision-language-action model for autonomous driving that processes video/sensor inputs and generates trajectories with reasoning traces automatically; it does not use free-form text prompts like generative chat or image/video tools.

- N/A - Input consists of driving video frames, previous trajectories, and optional verbal commands; reasoning and actions are produced end-to-end without user-crafted prompts.

- N/A - This is a research AV model focused on perception-reasoning-action pipeline; no manual prompting interface exists for content generation.
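Because the model takes structured inputs rather than prompts, it can help to think of one inference call as a typed record going in and another coming out. The sketch below models the inputs and outputs described above as Python dataclasses. All class and field names are hypothetical and are not taken from the Alpamayo code base.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

Waypoint = Tuple[float, float]  # (x, y) in the ego-vehicle frame, metres


@dataclass
class DrivingInput:
    frame_paths: List[str]           # driving video frames, oldest first
    past_trajectory: List[Waypoint]  # previously driven waypoints
    command: Optional[str] = None    # optional verbal command


@dataclass
class DrivingOutput:
    reasoning_trace: str             # natural-language explanation
    trajectory: List[Waypoint]       # planned future waypoints


def validate(sample: DrivingInput) -> None:
    """Reject inputs that lack the two mandatory parts."""
    if not sample.frame_paths:
        raise ValueError("at least one video frame is required")
    if not sample.past_trajectory:
        raise ValueError("past trajectory must not be empty")


if __name__ == "__main__":
    sample = DrivingInput(["f0.png", "f1.png"], [(0.0, 0.0), (0.5, 0.0)])
    validate(sample)
    print(sample.command)  # None: no verbal command was given
```

The only free-text field on the input side is the optional command, which matches the point above that there is no prompt to craft.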

