What is BioNeMo Framework?

BioNeMo Framework is NVIDIA’s open-source suite for building, training, and adapting large biomolecular AI models for drug discovery and digital biology. It provides GPU-optimized training recipes and built-in support for multi-GPU parallelism.

Is BioNeMo free to use?

Yes. The core framework is free and open-source under the Apache 2.0 license; enterprise support and containers are available through an NVIDIA AI Enterprise license.

When was BioNeMo Framework released?

BioNeMo was first announced in September 2022. The current version, v2.7, was released on October 1, 2025, and updates are ongoing.

What models does BioNeMo support?

Supported models include ESM-2, Geneformer, CodonFM, Amplify, BioBERT, Evo2, Llama 3 variants, Vision Transformer, and others, provided through recipes and sub-packages.

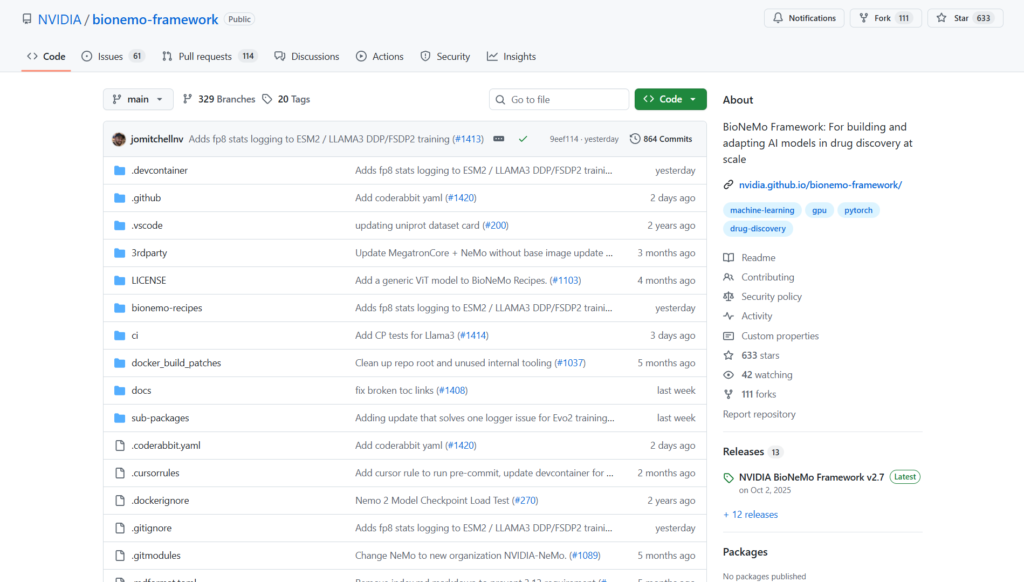

How do I get started with BioNeMo?

Clone the GitHub repository, install with pip or pull an NGC Docker container, and run the bionemo-recipes examples to start training.

What hardware is required for BioNeMo?

It is optimized for NVIDIA GPU clusters (multi-node setups are recommended) and runs on data-center GPUs such as the A100 and H100 for large-scale training.

Who uses BioNeMo Framework?

Biopharma researchers, computational biologists, AI scientists in drug discovery, and companies like Amgen and A-Alpha Bio.

Where can I find BioNeMo documentation?

The official documentation is at docs.nvidia.com/bionemo-framework; the source code is in the GitHub repository, and containers are in the NGC catalog.

BioNeMo

About This AI

BioNeMo Framework is an open-source suite from NVIDIA for accelerating the development, training, and adaptation of large-scale biomolecular AI models in digital biology and drug discovery.

It provides GPU-optimized tools, libraries, and recipes for training transformer-based models on biological data, with support for massive parallelism (FSDP and 5D) and NVIDIA TransformerEngine integration for high performance on clusters.
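
As a rough illustration of why sharding matters at cluster scale, the sketch below estimates per-GPU memory for model state (weights, gradients, and optimizer state) with and without full sharding. It assumes mixed-precision Adam at about 16 bytes per parameter and ignores activations, buffers, and framework overhead, so real usage will be higher. It is generic arithmetic, not a BioNeMo API.

```python
# Back-of-the-envelope model-state memory per GPU, with and without full sharding.
# Assumption: mixed-precision Adam, 16 bytes per parameter in total:
#   2 (BF16 weights) + 2 (BF16 grads) + 4 (FP32 master weights) + 8 (FP32 Adam m and v)
BYTES_PER_PARAM = 2 + 2 + 4 + 8


def model_state_gib(num_params: int, num_gpus: int = 1, sharded: bool = True) -> float:
    """Return per-GPU model-state memory in GiB, ignoring activations and overhead."""
    if num_params <= 0 or num_gpus <= 0:
        raise ValueError("num_params and num_gpus must be positive")
    total_bytes = num_params * BYTES_PER_PARAM
    # Plain data parallelism replicates all state; full sharding splits it evenly.
    per_gpu = total_bytes / num_gpus if sharded else total_bytes
    return per_gpu / 2**30


if __name__ == "__main__":
    params = 3_000_000_000  # e.g. a 3B-parameter protein language model
    for gpus in (1, 8, 64):
        print(f"{gpus:>3} GPUs: {model_state_gib(params, gpus):6.2f} GiB per GPU")
```

Under these assumptions a 3B-parameter model needs roughly 45 GiB of model state on a single GPU but well under 1 GiB per GPU when fully sharded across 64 GPUs, which is what lets FSDP-style training fit larger models.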

Key models include ESM-2 (protein BERT), Geneformer (single-cell), CodonFM, Amplify, BioBERT, Evo2, and more, with lightweight, portable examples in bionemo-recipes for customization.
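
ESM-2's "protein BERT" label refers to masked-language-model pretraining. Here is a minimal sketch of BERT-style masking over an amino-acid sequence, assuming the classic recipe: select about 15% of positions, then replace 80% of those with a mask token, 10% with a random residue, and leave 10% unchanged. The mask token string and the -100 ignore label are illustrative conventions, not BioNeMo's tokenizer; a real pipeline would operate on integer token IDs.

```python
import random
from typing import List, Optional, Tuple, Union

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard residues
MASK_TOKEN = "<mask>"  # illustrative token string, not BioNeMo's vocabulary
IGNORE_INDEX = -100    # PyTorch cross-entropy's default ignore_index


def mask_sequence(
    seq: str, mask_prob: float = 0.15, seed: Optional[int] = None
) -> Tuple[List[str], List[Union[str, int]]]:
    """Return (inputs, labels) for BERT-style masked-language-model training.

    labels[i] is the original residue at selected positions and IGNORE_INDEX elsewhere.
    """
    rng = random.Random(seed)
    inputs: List[str] = []
    labels: List[Union[str, int]] = []
    for residue in seq:
        if rng.random() < mask_prob:
            labels.append(residue)  # the model must recover this residue
            roll = rng.random()
            if roll < 0.8:
                inputs.append(MASK_TOKEN)               # 80%: replace with the mask token
            elif roll < 0.9:
                inputs.append(rng.choice(AMINO_ACIDS))  # 10%: replace with a random residue
            else:
                inputs.append(residue)                  # 10%: keep the original residue
        else:
            inputs.append(residue)
            labels.append(IGNORE_INDEX)  # excluded from the loss
    return inputs, labels


if __name__ == "__main__":
    inputs, labels = mask_sequence("MKTAYIAKQRQISFVKSHFSRQLEERLGLIEVQ", seed=0)
    print(inputs)
    print(labels)
```

Keeping some selected positions unchanged or randomized (rather than always masked) is the standard way to stop the model from relying on the mask token only ever appearing during training.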

Features focus on efficient data loading (bionemo-scdl, a single-cell data loader), in-training data processing, and scalable workflows for protein language models, DNA/RNA sequences, and chemistry applications.
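
Single-cell count matrices are extremely sparse, which is why dedicated loaders avoid dense arrays. The sketch below shows compressed sparse row (CSR) storage and constant-time slicing of one cell's nonzero genes. It is a conceptual illustration in plain Python and assumes nothing about bionemo-scdl's actual on-disk layout or API; a production loader would typically back these arrays with memory-mapped files.

```python
from typing import Dict, List, Sequence, Tuple


def to_csr(dense: Sequence[Sequence[float]]) -> Tuple[List[float], List[int], List[int]]:
    """Convert a cells x genes matrix to CSR arrays (values, col_idx, row_ptr)."""
    values: List[float] = []
    col_idx: List[int] = []
    row_ptr: List[int] = [0]
    for row in dense:
        for gene, count in enumerate(row):
            if count != 0:  # store only detected genes
                values.append(count)
                col_idx.append(gene)
        row_ptr.append(len(values))  # row i spans values[row_ptr[i]:row_ptr[i + 1]]
    return values, col_idx, row_ptr


def get_cell(values, col_idx, row_ptr, cell: int) -> Dict[int, float]:
    """Return {gene_index: count} for one cell without touching other rows."""
    start, end = row_ptr[cell], row_ptr[cell + 1]
    return dict(zip(col_idx[start:end], values[start:end]))


if __name__ == "__main__":
    counts = [
        [0, 3, 0, 0, 1],  # cell 0
        [0, 0, 0, 0, 0],  # cell 1 (no detected genes)
        [2, 0, 0, 5, 0],  # cell 2
    ]
    vals, cols, ptr = to_csr(counts)
    print(get_cell(vals, cols, ptr, 2))  # {0: 2, 3: 5}
```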

First released in 2022 and updated regularly since, BioNeMo is now at v2.7 (October 1, 2025), which adds new recipes such as CodonFM and Megatron/NeMo 5D-parallelism support on x86 and ARM.

Available via GitHub (Apache 2.0 license), NGC containers (nightly and release), and docs at docs.nvidia.com/bionemo-framework.

It enables researchers and biopharma teams to build domain-specific models faster, reducing the time and cost of drug discovery pipelines.

Enterprise access through NVIDIA AI Enterprise adds support and secure containers; the open-source version is free for community use.

Ideal for computational biologists, AI scientists in pharma, and developers needing scalable biomolecular AI training.

Key Features

- GPU-optimized training recipes: Pre-configured, high-performance recipes for ESM-2, Geneformer, CodonFM, and other biomolecular models

- Advanced parallelism support: Fully sharded data parallel (FSDP) and 5D parallelism (tensor, pipeline, context, and other dimensions) for cluster-scale training

- TransformerEngine integration: Supports FP8 and other reduced-precision formats for faster, more memory-efficient runs

- Modular bionemo-recipes: Lightweight, portable examples for easy customization and experimentation

- Efficient data loading: bionemo-scdl (single-cell data loading) and bionemo-webdatamodule for handling biological data, including in-training processing

- NeMo and Megatron-Core base: Leverages NVIDIA's ecosystem for large-model training stability

- Docker/NGC containers: Pre-built images (nightly/release) for x86 and ARM, simplifying deployment

- Documentation and examples: Detailed guides, VSCode devcontainer, and community-contributed notebooks

- Multi-domain support: Proteins, single-cell, DNA/RNA, chemistry, and more via specialized models
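
In Megatron-style parallelism, the model-parallel degrees multiply, and the GPUs left over form the data-parallel dimension. A minimal sketch of that bookkeeping follows, assuming data-parallel size = world size / (tensor × pipeline × context). Expert parallelism is deliberately left out to keep the arithmetic simple, and ParallelLayout is a hypothetical helper, not a NeMo or Megatron-Core class.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ParallelLayout:
    """Hypothetical container for model-parallel degrees (not a NeMo/Megatron class)."""

    tensor: int = 1    # splits each layer's weight matrices across GPUs
    pipeline: int = 1  # splits the stack of layers into sequential stages
    context: int = 1   # splits long sequences along the sequence dimension

    def data_parallel_size(self, world_size: int) -> int:
        """Return the number of model replicas, or raise if the layout does not fit."""
        model_parallel = self.tensor * self.pipeline * self.context
        if model_parallel <= 0 or world_size <= 0:
            raise ValueError("parallel degrees and world_size must be positive")
        if world_size % model_parallel != 0:
            raise ValueError(
                f"world_size={world_size} is not divisible by "
                f"tensor*pipeline*context={model_parallel}"
            )
        return world_size // model_parallel


if __name__ == "__main__":
    layout = ParallelLayout(tensor=4, pipeline=2, context=2)
    # 16 nodes x 8 GPUs = 128 GPUs, so 128 / (4 * 2 * 2) = 8 data-parallel replicas
    print(layout.data_parallel_size(128))
```

Checking divisibility up front catches mismatched cluster sizes before a long multi-node job is queued.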

Price Plans

- Free/Open-Source ($0): Full framework, recipes, models, and NGC containers available under Apache 2.0; no cost for community/research use

- NVIDIA AI Enterprise (Custom/Licensed): Paid enterprise support, secure/production containers, expert assistance, and integration with NVIDIA cloud services

Pros

- High scalability: Trains billion-parameter models on hundreds of GPUs efficiently

- Open-source and free: Apache 2.0 license with full code, weights, and recipes accessible to all

- Optimized for biology: Domain-specific tooling accelerates drug discovery workflows

- Active development: Frequent releases (e.g., v2.7 in Oct 2025) with new models and features

- Enterprise-ready options: NGC containers and NVIDIA AI Enterprise for production support

- Community contributions: Users adding notebooks and recipes (e.g., zero-shot protein design)

- Integration with NVIDIA stack: Seamless with DGX, Base Command, and cloud partners

Cons

- Requires GPU clusters: Best performance comes from multi-node setups; a single GPU is limiting for large models

- Setup complexity: Involves Docker, Git submodules, and many dependencies, which makes for a steep learning curve for beginners

- Enterprise features are paid: Full support and secure containers require an NVIDIA AI Enterprise license

- No hosted service: Self-managed, with no simple web UI for non-technical users

- Focus on training: Built primarily for model development, with less emphasis on inference and deployment

- Hardware dependency: Relies on NVIDIA GPUs for optimal acceleration

- Documentation still evolving: Some features (e.g., certain parallelism modes) are still a work in progress

Use Cases

- Foundation-model fine-tuning: Fine-tune ESM-2 on proprietary protein data or Geneformer on proprietary single-cell data

- Drug candidate prediction: Build models for molecular property prediction or protein design

- Genomics and single-cell analysis: Train on DNA/RNA sequences or scRNA-seq data for insights

- Biopharma R&D acceleration: Scale AI workflows on GPU clusters to shorten discovery timelines

- Research experimentation: Customize recipes for new biological modalities or tasks

- Collaborative development: Use containers for reproducible multi-team training

- Cloud integration: Deploy on AWS SageMaker or other platforms for hybrid setups
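
Models such as CodonFM work on coding DNA/RNA. As an assumption for illustration (the CodonFM name suggests codon-level tokens, but this listing does not confirm its tokenizer), here is a sketch that splits a coding sequence into in-frame triplets and maps each to an integer ID over the 64-codon vocabulary. The special tokens and ID layout are hypothetical.

```python
from itertools import product
from typing import Dict, List

BASES = "ACGT"
SPECIAL = {"<pad>": 0, "<unk>": 1}  # hypothetical special tokens
# All 64 codons in lexicographic order, with IDs offset past the special tokens.
CODON_TO_ID: Dict[str, int] = {
    "".join(codon): i + len(SPECIAL) for i, codon in enumerate(product(BASES, repeat=3))
}


def tokenize_cds(seq: str) -> List[int]:
    """Split a coding sequence into in-frame codons and map each to an ID.

    RNA input (U) is converted to DNA (T); codons containing other symbols
    (e.g. N) map to <unk>. A trailing partial codon is rejected.
    """
    seq = seq.upper().replace("U", "T")
    if len(seq) % 3 != 0:
        raise ValueError(f"length {len(seq)} is not a multiple of 3")
    return [
        CODON_TO_ID.get(seq[i : i + 3], SPECIAL["<unk>"])
        for i in range(0, len(seq), 3)
    ]


if __name__ == "__main__":
    print(tokenize_cds("AUGGCCUAA"))  # [16, 39, 50]
```

Tokenizing by codon rather than by nucleotide keeps each token aligned with one amino acid (or stop signal), at the cost of requiring in-frame input.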

Target Audience

- Computational biologists: Researchers building biomolecular AI models

- Drug discovery teams: Biopharma scientists accelerating pipelines with AI

- AI/ML engineers in life sciences: Scaling training on GPU infrastructure

- Academic and open-source contributors: Experimenting with open recipes

- Enterprise biopharma developers: Using licensed version for production

- Students and educators: Learning large-scale bio-AI training

How To Use

- Clone repo: git clone --recursive https://github.com/NVIDIA/bionemo-framework

- Install dependencies: pip install -r requirements.txt or use NGC Docker container

- Run quick start: Use a bionemo-recipes example, such as python train_ddp.py for ESM-2

- Customize model: Modify configs in recipes for your dataset and hyperparameters

- Train on cluster: Launch multi-node jobs with SLURM or NGC batch support

- Evaluate: Use built-in tools to test model performance on benchmarks

- Deploy inference: Export to Hugging Face or use NeMo inference endpoints
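
The cluster-launch step typically wraps a recipe script with PyTorch's torchrun launcher. The sketch below builds such a command for one node from SLURM's standard SLURM_NNODES and SLURM_NODEID environment variables. The rendezvous address, port, and GPUs per node are assumptions to adapt to your cluster, and train_ddp.py is the ESM-2 example script mentioned above, invoked here without any recipe-specific arguments.

```python
import os
import shlex
from typing import List, Mapping, Optional


def build_torchrun_cmd(
    script: str,
    master_addr: str,
    gpus_per_node: int = 8,
    master_port: int = 29500,
    env: Optional[Mapping[str, str]] = None,
) -> List[str]:
    """Build a torchrun command for this node of a multi-node SLURM job."""
    env = os.environ if env is None else env
    nnodes = int(env.get("SLURM_NNODES", "1"))    # total nodes in the allocation
    node_rank = int(env.get("SLURM_NODEID", "0"))  # this node's index, 0-based
    return [
        "torchrun",
        f"--nnodes={nnodes}",
        f"--nproc_per_node={gpus_per_node}",
        f"--node_rank={node_rank}",
        f"--master_addr={master_addr}",
        f"--master_port={master_port}",
        script,
    ]


if __name__ == "__main__":
    # Hypothetical head-node hostname; in practice derive it from SLURM_JOB_NODELIST.
    cmd = build_torchrun_cmd("train_ddp.py", master_addr="node001")
    print(shlex.join(cmd))  # run once per node, e.g. via srun
```

Passing the environment in explicitly keeps the function testable outside a SLURM allocation.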

How we rated BioNeMo

- Performance: 4.9/5

- Accuracy: 4.7/5

- Features: 4.8/5

- Cost-Efficiency: 4.9/5

- Ease of Use: 4.0/5

- Customization: 4.9/5

- Data Privacy: 4.8/5

- Support: 4.5/5

- Integration: 4.7/5

- Overall Score: 4.7/5
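
The overall 4.7 is consistent with an unweighted mean of the nine category scores. The listing does not state its weighting, so treat the equal weights below as an assumption.

```python
# Category scores from the ratings above; equal weighting is an assumption.
ratings = {
    "Performance": 4.9, "Accuracy": 4.7, "Features": 4.8,
    "Cost-Efficiency": 4.9, "Ease of Use": 4.0, "Customization": 4.9,
    "Data Privacy": 4.8, "Support": 4.5, "Integration": 4.7,
}
overall = sum(ratings.values()) / len(ratings)
print(f"{overall:.2f} -> {overall:.1f}")  # 4.69 -> 4.7
```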

BioNeMo integration with other tools

- NVIDIA NGC: Pre-built containers (nightly/release) for easy deployment on GPU clusters

- Hugging Face: Model pushing/export and compatibility with community ecosystems

- NeMo and Megatron-Core: Core foundation for parallelism and large-model training

- TransformerEngine: FP8 acceleration and precision optimizations

- Cloud Platforms: Compatible with AWS SageMaker, Google Cloud, and DGX Cloud via containers
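
TransformerEngine's FP8 speedups rest on per-tensor scaling: values are multiplied by a scale so that their largest magnitude (amax) fits the narrow FP8 range. The simplified sketch below mirrors the scale computation of delayed scaling, assuming the E4M3 format (largest finite value 448) and a scale of (fp8_max / amax) / 2**margin over a short amax history. It does not emulate FP8 rounding, and DelayedScaler is a hypothetical class, not TransformerEngine code.

```python
from collections import deque
from typing import Iterable, List

FP8_E4M3_MAX = 448.0    # largest finite E4M3 value
FP8_E5M2_MAX = 57344.0  # largest finite E5M2 value


class DelayedScaler:
    """Track recent amax values and derive a per-tensor FP8 scale (simplified)."""

    def __init__(self, fp8_max: float = FP8_E4M3_MAX, history_len: int = 16, margin: int = 0):
        self.fp8_max = fp8_max
        self.margin = margin
        self.history = deque(maxlen=history_len)  # oldest amax values drop off automatically

    def update(self, values: Iterable[float]) -> None:
        """Record the absolute maximum of the latest tensor."""
        self.history.append(max((abs(v) for v in values), default=0.0))

    def scale(self) -> float:
        """Scale from the largest amax in the history; 1.0 if nothing recorded yet."""
        amax = max(self.history, default=0.0)
        if amax == 0.0:
            return 1.0
        return (self.fp8_max / amax) / (2 ** self.margin)

    def quantize_range(self, values: Iterable[float]) -> List[float]:
        """Scale values and clip them to the representable range (no FP8 rounding)."""
        s = self.scale()
        return [max(-self.fp8_max, min(self.fp8_max, v * s)) for v in values]


if __name__ == "__main__":
    scaler = DelayedScaler()
    scaler.update([0.5, -2.0, 1.0])  # amax recorded from an earlier step
    print(scaler.scale())
    print(scaler.quantize_range([1.0, 4.0]))
```

In delayed scaling the scale applied at a step comes from amax values recorded at earlier steps, so in a training loop you would call scale() before update() for the current tensor; values that outgrow the history are clipped, which the margin parameter helps guard against.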

Best prompts optimised for BioNeMo

- Not applicable: BioNeMo Framework is a training and development toolkit for biomolecular AI models, not a prompt-based generative tool like ChatGPT or a text-to-video generator. Users build, train, and fine-tune models through code, configuration files, recipes, Python scripts, and the command line; there is no user-facing prompting interface.
