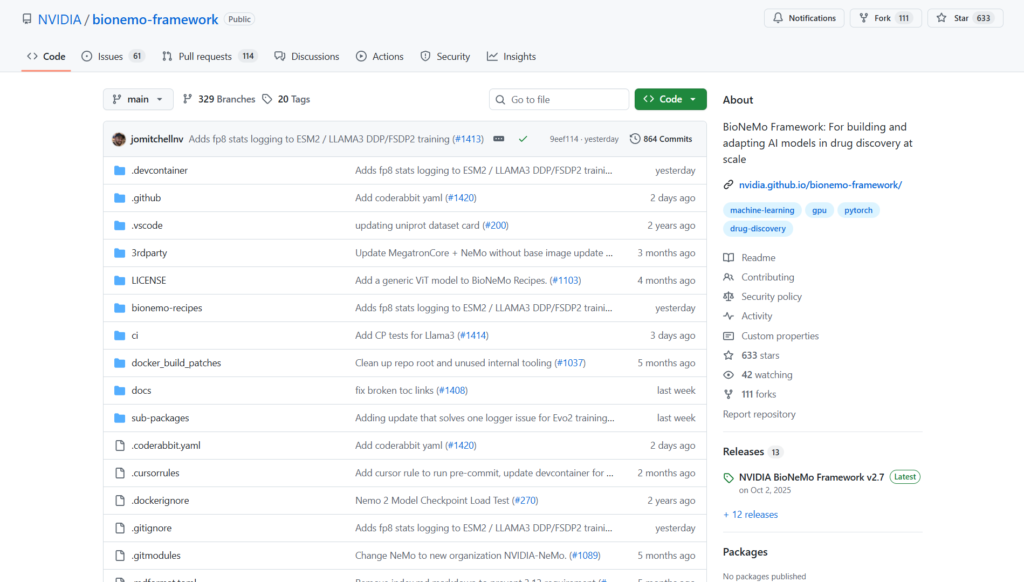

What is BioNeMo Framework?

BioNeMo Framework is NVIDIA’s open-source suite for building, training, and adapting large biomolecular AI models for drug discovery and digital biology. It provides GPU-optimized training recipes and support for multi-GPU parallelism.

Is BioNeMo free to use?

Yes. The core framework is free and open source under the Apache 2.0 license. Enterprise support and containers are available through an NVIDIA AI Enterprise license.

When was BioNeMo Framework released?

BioNeMo was first announced in September 2022. The current version, v2.7, was released on October 1, 2025, and the project continues to receive updates.

What models does BioNeMo support?

Supported models include ESM-2, Geneformer, CodonFM, AMPLIFY, BioBERT, Evo 2, Llama 3 variants, and Vision Transformer, with more available through recipes and sub-packages.

How do I get started with BioNeMo?

Clone the GitHub repository, install the packages with pip or use an NGC Docker container, and run the bionemo-recipes examples to start training.
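Before working through the steps above, a quick local check can save time. The sketch below uses only the Python standard library and is not part of BioNeMo: the helper name `preflight` is hypothetical, and it assumes that installed BioNeMo packages share the top-level `bionemo` namespace and that the NVIDIA driver puts `nvidia-smi` on the PATH.

```python
import importlib.util
import shutil
import sys


def preflight(package: str = "bionemo") -> dict:
    """Report a few facts worth checking before installing or running BioNeMo.

    Hypothetical helper, not a BioNeMo API. Assumes the framework's
    sub-packages share the top-level namespace given by `package`.
    """
    return {
        # Interpreter version as "major.minor.micro"
        "python": ".".join(str(part) for part in sys.version_info[:3]),
        # True if the NVIDIA driver's CLI is on PATH (a rough sign a driver is installed)
        "nvidia_smi": shutil.which("nvidia-smi") is not None,
        # True if the top-level package can be found in this environment
        "package_installed": importlib.util.find_spec(package) is not None,
    }


if __name__ == "__main__":
    for key, value in preflight().items():
        print(f"{key}: {value}")
```

A `False` for `package_installed` only means the packages are not yet installed in the current environment, which is expected before the pip step or outside an NGC container.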

What hardware is required for BioNeMo?

BioNeMo is optimized for NVIDIA GPUs, and multi-node clusters are recommended for large-scale training. It runs on data-center GPUs such as the A100 and H100.
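To see which GPUs a node actually exposes before launching a large run, the sketch below parses the CSV output of `nvidia-smi --query-gpu=name,memory.total --format=csv,noheader,nounits`, which reports total memory in MiB. The helper names `parse_gpu_csv` and `list_gpus` are hypothetical and not part of BioNeMo, and the script makes no claim about minimum memory requirements.

```python
import shutil
import subprocess
from typing import List, Tuple


def parse_gpu_csv(text: str) -> List[Tuple[str, int]]:
    """Parse `name, memory_mib` lines from nvidia-smi CSV output (no header, no units)."""
    gpus = []
    for line in text.strip().splitlines():
        if not line.strip():
            continue
        # rsplit keeps any comma inside a GPU name from breaking the memory field
        name, memory = line.rsplit(",", 1)
        gpus.append((name.strip(), int(memory.strip())))
    return gpus


def list_gpus() -> List[Tuple[str, int]]:
    """Return visible GPUs as (name, memory_mib), or [] if nvidia-smi is missing or fails."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=name,memory.total", "--format=csv,noheader,nounits"],
        capture_output=True,
        text=True,
        check=False,
    )
    if result.returncode != 0:
        return []
    return parse_gpu_csv(result.stdout)


if __name__ == "__main__":
    gpus = list_gpus()
    if not gpus:
        print("No NVIDIA GPUs detected.")
    for index, (name, memory_mib) in enumerate(gpus):
        print(f"GPU {index}: {name}, {memory_mib} MiB")
```

Parsing is kept separate from the subprocess call so it can be checked against sample output on machines without a GPU.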

Who uses BioNeMo Framework?

Users include biopharma researchers, computational biologists, and AI scientists working in drug discovery, as well as companies such as Amgen and A-Alpha Bio.

Where can I find BioNeMo documentation?

The official documentation is at docs.nvidia.com/bionemo-framework. The GitHub repository and the NGC catalog (for containers) are also useful resources.