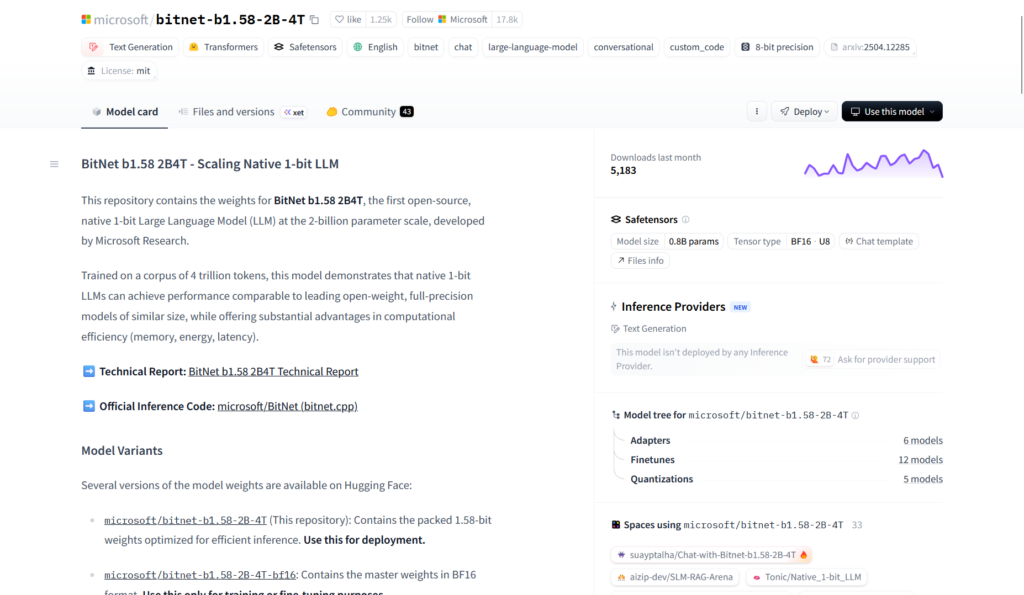

What is BitNet?

BitNet (bitnet.cpp) is Microsoft’s open-source inference framework for 1-bit LLMs such as BitNet b1.58. It provides fast, lossless inference on CPUs and GPUs, with large speed and energy savings over full-precision inference.

When was BitNet released?

The bitnet.cpp framework was first released on October 17, 2024, with ongoing updates through 2025-2026 including new kernels and optimizations.

Is BitNet free to use?

Yes. It is completely free and open-source under the MIT license, with full code available on GitHub and no fees for building or running locally.

What models does BitNet support?

Primarily BitNet b1.58 series (e.g., 2B-4T, 3B, large variants) in GGUF format from Hugging Face, with support for other 1-bit LLMs.

What hardware is needed for BitNet?

Runs efficiently on standard CPUs (x86/ARM) and GPUs (CUDA); a single CPU can run a 100B-scale BitNet b1.58 model at 5-7 tokens/second.

How much faster is BitNet than full-precision inference?

Achieves 2.37x to 6.17x speedups on x86 CPUs and 1.37x to 5.07x on ARM CPUs. Energy use drops by 55-82% overall (roughly 72-82% on x86 and 55-70% on ARM).

Where can I download BitNet models?

Model weights (e.g., BitNet b1.58-2B-4T) are hosted on Hugging Face; framework code and build instructions on GitHub.

What license does BitNet use?

MIT License, which allows free use, modification, and commercial deployment, provided the copyright and license notice are retained.

BitNet

About This AI

BitNet is Microsoft’s official inference framework (bitnet.cpp) for 1-bit Large Language Models, specifically optimized for BitNet b1.58 series with ternary weights (-1, 0, +1).
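To make the ternary weights concrete, here is a minimal sketch of how a list of full-precision weights could be mapped to -1, 0, +1. It assumes the absmean scheme described for BitNet b1.58 (divide by the mean absolute value, round, then clip). The function name `absmean_ternary` and the sample values are illustrative assumptions, and this is not code from bitnet.cpp, which loads weights that are already ternary.

```python
import math


def absmean_ternary(weights, eps=1e-8):
    """Map floats to {-1, 0, +1} using one shared scale (assumed absmean scheme)."""
    # Shared scale: the mean absolute value of the weights.
    scale = sum(abs(w) for w in weights) / len(weights)
    # Divide by the scale, round to the nearest integer, then clip to [-1, 1].
    ternary = [max(-1, min(1, round(w / (scale + eps)))) for w in weights]
    return ternary, scale


# Hypothetical sample weights, chosen only for illustration.
weights = [0.9, -0.05, -1.4, 0.3, 0.02, -0.6]
ternary, scale = absmean_ternary(weights)

print(ternary)                    # [1, 0, -1, 1, 0, -1]
print(round(scale, 3))            # 0.545
print(round(math.log2(3), 2))     # 1.58
```

The last line shows where the "1.58" in the model name comes from: a weight with three possible values carries log2(3) bits of information.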

It enables fast, lossless inference on CPUs (x86 and ARM) and GPUs, delivering significant speedups and energy savings compared to full-precision models.

Key highlights include support for models such as BitNet b1.58-2B-4T (2.4B parameters, trained on 4T tokens) and the ability to run models of up to 100B scale on a single CPU at speeds comparable to human reading (5-7 tokens/second).

The framework uses custom kernels with lookup tables, parallel implementations, and embedding quantization for performance boosts (1.15x to 2.1x additional speedup in latest updates).
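The lookup-table idea can be sketched in a few lines. Because each weight is -1, 0, or +1, a small group of activations has only a handful of possible weighted sums, so those sums can be computed once and then fetched by weight pattern for every output row. The sketch below is a toy illustration under that assumption: the function names, the group size of 2, and the dictionary-based tables are hypothetical, and the real bitnet.cpp kernels operate on packed integers with SIMD instructions rather than Python dictionaries.

```python
from itertools import product


def build_tables(activations, group=2):
    """For each group of activations, precompute every ternary-weighted sum."""
    tables = []
    for start in range(0, len(activations), group):
        chunk = activations[start:start + group]
        table = {}
        for pattern in product((-1, 0, 1), repeat=len(chunk)):
            total = 0.0
            for w, a in zip(pattern, chunk):
                # Ternary weights need only add, subtract, or skip.
                if w == 1:
                    total += a
                elif w == -1:
                    total -= a
            table[pattern] = total
        tables.append(table)
    return tables


def lut_matvec(weight_rows, activations, group=2):
    """Matrix-vector product that uses table lookups instead of multiplies."""
    tables = build_tables(activations, group)  # built once, reused by every row
    outputs = []
    for row in weight_rows:
        acc = 0.0
        for i, table in enumerate(tables):
            key = tuple(row[i * group:(i + 1) * group])
            acc += table[key]
        outputs.append(acc)
    return outputs


# Hypothetical inputs for illustration.
weight_rows = [[1, 0, -1, 1], [-1, -1, 0, 1]]
activations = [2.0, 3.0, 5.0, 7.0]
result = lut_matvec(weight_rows, activations)
print(result)  # [4.0, 2.0]
```

The tables cost 3^group entries per group of activations, and that cost is amortized across all rows of the weight matrix, which is where the saving comes from when the matrix has many rows.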

It dramatically reduces memory footprint, energy consumption (55-82% savings), and latency while maintaining comparable quality to full-precision LLMs of similar size.

Released in October 2024 with ongoing optimizations (latest in January 2026), it is built on llama.cpp foundations and supports Hugging Face models in GGUF format.

Ideal for edge devices, local deployment, low-power hardware, and developers seeking efficient LLM inference without massive GPUs.

Fully open-source under MIT license with easy build instructions, demo, and community contributions driving adoption in local AI and research.

Key Features

- Fast CPU inference: 2.37x to 6.17x speedup on x86, 1.37x to 5.07x on ARM with energy reductions up to 82%

- Lossless 1.58-bit support: Optimized kernels for ternary weights (-1,0,1) without quality loss

- GPU acceleration: CUDA support for even higher throughput on compatible hardware

- Single-CPU large model running: Handles 100B-scale BitNet models at 5-7 tokens/second

- Lookup table kernels: Replace the multiplications in ternary matrix multiplication with table lookups for large efficiency gains

- Parallel kernel optimizations: Configurable tiling and embedding quantization for extra speed

- Hugging Face integration: Direct support for GGUF-converted BitNet models from HF

- Easy build and run: CMake-based compilation with Python wrappers for inference

- Demo and benchmarking: Included examples and performance tracking tools

- MIT open-source license: Full code available for modification and commercial use

Price Plans

- Free ($0): Fully open-source under MIT license with complete code, weights support, and no usage fees; build and run locally forever

- Cloud/Enterprise (Custom): Potential future hosted inference via Azure or partners (not yet available)

Pros

- Extreme efficiency: Runs large LLMs on everyday CPUs with low power and memory use

- Significant speed/energy wins: Up to 6x faster and 82% less energy than full-precision

- Open and accessible: Free MIT license, easy local setup for developers and researchers

- Edge/local deployment ready: Enables private, offline AI on laptops, phones, embedded devices

- Active development: Frequent updates with new kernels and optimizations

- Strong community: 27.6k GitHub stars show high interest and adoption

- Future-proof potential: Paves way for ultra-low-bit LLMs on consumer hardware

Cons

- Requires compilation: Needs building from source with CMake/Clang for best performance

- Limited to supported models: Optimized for BitNet b1.58 series; other 1-bit LLMs may need conversion

- Hardware dependent: Best results on modern CPUs/GPUs; older hardware slower

- Setup complexity: Involves dependencies, submodules, and environment configuration

- No hosted version: Purely local/offline; no cloud API or web demo

- Model size constraints: Even with ternary weights, very large models still need substantial RAM

- Early ecosystem: Fewer pre-converted models and integrations compared to llama.cpp
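The RAM point in the list above can be put into rough numbers. The following back-of-the-envelope sketch estimates weight storage only and assumes ternary weights are packed at 2 bits each; that packing width, the function name, and the 100B parameter count used as input are assumptions for illustration. Activations, the KV cache, embeddings, and per-tensor scale factors are ignored, so real usage will be higher.

```python
def weight_memory_gib(num_params, bits_per_weight):
    """Approximate weight storage in GiB (weights only, no runtime overhead)."""
    return num_params * bits_per_weight / 8 / 2**30


params = 100e9  # a 100B-scale model, as mentioned in this article

fp16_gib = weight_memory_gib(params, 16)
packed_gib = weight_memory_gib(params, 2)      # assumed 2-bit packing
ideal_gib = weight_memory_gib(params, 1.58)    # information-theoretic floor

print(f"fp16 weights:         {fp16_gib:.1f} GiB")    # about 186.3 GiB
print(f"2-bit packed ternary: {packed_gib:.1f} GiB")  # about 23.3 GiB
print(f"ideal 1.58-bit:       {ideal_gib:.1f} GiB")
```

Under these assumptions a 100B-scale model drops from far beyond typical desktop memory to a size that fits on a well-equipped workstation, but it is still well over 20 GiB of RAM for the weights alone.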

Use Cases

- Local/private LLM inference: Run models offline on laptops or edge devices without cloud dependency

- Low-power AI applications: Deploy on battery-powered hardware, IoT, or mobile for real-time tasks

- Research and experimentation: Test 1-bit quantization effects and efficiency in AI studies

- Cost-sensitive deployments: Reduce GPU needs for inference in startups or education

- Embedded systems: Integrate into robotics, autonomous devices, or custom hardware

- Developer tools: Build fast local assistants or code helpers on standard machines

Target Audience

- AI developers and researchers: Exploring low-bit LLMs and efficient inference techniques

- Edge computing engineers: Building on-device AI without heavy hardware

- Local AI enthusiasts: Running powerful models privately on personal computers

- Startups and indie devs: Minimizing cloud costs for LLM-powered products

- Academic institutions: Teaching/training with resource-efficient models

- Embedded/IoT teams: Adding intelligence to low-power devices

How To Use

- Clone repo: git clone --recursive https://github.com/microsoft/BitNet.git

- Set up environment: conda create -n bitnet-cpp python=3.9; conda activate bitnet-cpp; pip install -r requirements.txt

- Download model: Get a GGUF build from Hugging Face (e.g., microsoft/bitnet-b1.58-2B-4T) into a local model directory

- Build framework: Run python setup_env.py -md <model-dir> -q <quant-type> (for example, -q i2_s) to compile kernels for that model

- Run inference: python run_inference.py -m <path-to-model.gguf> -p "Your prompt here"

- Benchmark performance: Use included scripts to test speed/energy on your hardware

- Integrate in apps: Use C++/Python APIs for custom projects or llama.cpp compatibility
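For scripting the run step from the list above, a small helper can assemble the command line before handing it to `subprocess`. This is a sketch under stated assumptions: the helper name and the model path are hypothetical, and the optional `-n` (tokens to generate), `-t` (threads), and `-cnv` (chat mode) flags are assumed from the repository's usage text, so confirm them with `python run_inference.py --help` before relying on them.

```python
import shlex


def build_inference_command(model_path, prompt, n_predict=None,
                            threads=None, conversation=False):
    """Assemble an argument list for run_inference.py (flags are assumptions)."""
    cmd = ["python", "run_inference.py", "-m", model_path, "-p", prompt]
    if n_predict is not None:
        cmd += ["-n", str(n_predict)]   # assumed: number of tokens to generate
    if threads is not None:
        cmd += ["-t", str(threads)]     # assumed: CPU thread count
    if conversation:
        cmd.append("-cnv")              # assumed: interactive chat mode
    return cmd


# Hypothetical model path; substitute the GGUF file produced by your build.
cmd = build_inference_command(
    "models/example/model.gguf", "Your prompt here", n_predict=64, threads=4
)
print(shlex.join(cmd))

# To actually run it from the repository root:
#   import subprocess
#   subprocess.run(cmd, check=True)
```

Passing the arguments as a list rather than a single string avoids shell quoting problems when the prompt contains spaces or quotes.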

How we rated BitNet

- Performance: 4.9/5

- Accuracy: 4.7/5

- Features: 4.6/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.1/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.4/5

- Integration: 4.7/5

- Overall Score: 4.7/5

BitNet integration with other tools

- Hugging Face: Direct support for GGUF-converted BitNet models downloaded from HF repos

- llama.cpp ecosystem: Built upon llama.cpp foundations for broad compatibility and extensions

- Local hardware: Optimized for CPU (x86/ARM) and GPU (CUDA); no external cloud needed

- Custom applications: C++/Python APIs allow embedding inference in apps, servers, or agents

- Developer tools: Works with VS Code, Jupyter, or any Python/C++ environment for testing

Best prompts optimised for BitNet

- N/A - BitNet is an inference framework for running 1-bit LLMs, not a prompt-based generative tool. It executes pre-trained models such as BitNet b1.58 on your hardware, so use any prompt compatible with the loaded model (e.g., Llama-style chat templates).
