What is BitNet?

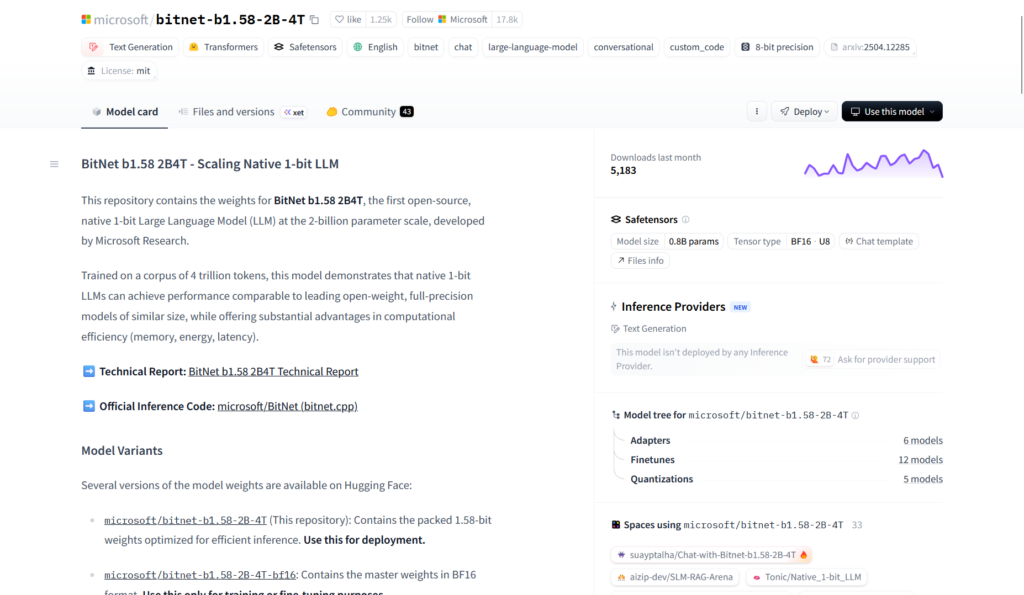

BitNet is Microsoft’s family of 1-bit LLMs, such as BitNet b1.58. Its official open-source inference framework, bitnet.cpp, provides optimized kernels for fast, lossless inference on CPUs and GPUs, with substantial speed and energy savings.
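For context on the "b1.58" name: each weight in such a model takes one of three values (-1, 0, or +1), and three values carry log2(3) ≈ 1.58 bits. The sketch below illustrates the absmean rounding described for BitNet b1.58, in plain Python. The function name `absmean_ternary` and the sample weights are illustrative assumptions, not part of bitnet.cpp.

```python
import math

def absmean_ternary(weights, eps=1e-8):
    """Quantize a list of floats to {-1, 0, +1} with one shared scale.

    Divide by the mean absolute value, round to the nearest integer,
    then clip to the range [-1, 1].
    """
    scale = sum(abs(w) for w in weights) / len(weights) + eps
    ternary = [max(-1, min(1, round(w / scale))) for w in weights]
    return ternary, scale

# Three possible values per weight carry log2(3) bits of information.
bits_per_weight = math.log2(3)

weights = [0.9, -0.05, 0.4, -1.3, 0.02, 0.7]  # made-up sample values
ternary, scale = absmean_ternary(weights)
print(ternary)                    # [1, 0, 1, -1, 0, 1]
print(round(bits_per_weight, 2))  # 1.58
```

Small weights collapse to 0 and large ones saturate at ±1, which is why the matrix multiplications can be reduced to additions and subtractions.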

When was BitNet released?

The bitnet.cpp framework was first released on October 17, 2024. Updates have continued through 2025 and 2026, including new kernels and optimizations.

Is BitNet free to use?

Yes. BitNet is free and open source under the MIT License, with the full code available on GitHub. There are no fees for building or running it locally.

What models does BitNet support?

bitnet.cpp primarily supports the BitNet b1.58 series (e.g., 2B-4T, 3B, and larger variants) in GGUF format from Hugging Face, along with other 1-bit LLMs.

What hardware is needed for BitNet?

bitnet.cpp runs efficiently on standard CPUs (x86 and ARM) and on GPUs (CUDA). It can run a 100B-scale model on a single CPU at 5-7 tokens per second.
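A rough back-of-the-envelope calculation shows why a 100B-scale model can fit on a single machine. The sketch below counts weight storage only; it ignores activations, the KV cache, and any layers kept at higher precision. The 2-bits-per-weight figure is an assumption about how ternary weights might be packed, not a statement about a specific bitnet.cpp kernel.

```python
def weight_memory_gb(num_params, bits_per_weight):
    """Approximate weight storage in gigabytes (10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9

params = 100e9  # a 100B-parameter model

fp16_gb = weight_memory_gb(params, 16)       # 16-bit baseline, 200 GB
packed_gb = weight_memory_gb(params, 2)      # assumed 2-bit packing, 25 GB
floor_gb = weight_memory_gb(params, 1.58)    # ~1.58 bits per weight, about 19.75 GB

print(f"FP16:   {fp16_gb:.2f} GB")
print(f"2-bit:  {packed_gb:.2f} GB")
print(f"1.58b:  {floor_gb:.2f} GB")
```

Under these assumptions the ternary weights need roughly one eighth of the memory of a 16-bit model, which brings them within reach of ordinary system RAM.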

How much faster is BitNet than full-precision inference?

bitnet.cpp achieves speedups of 2.37x to 6.17x on x86 CPUs and 1.37x to 5.07x on ARM CPUs, with energy reductions of 55-82%.

Where can I download BitNet models?

Model weights (e.g., BitNet b1.58-2B-4T) are hosted on Hugging Face. The framework code and build instructions are on GitHub.
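As a rough illustration of fetching weights, the sketch below builds a `huggingface-cli download` command and prints it without running it. The repository id and target directory are assumptions for illustration; check the model card on Hugging Face for the exact names. Running the command for real assumes the Hugging Face CLI is installed.

```python
import shlex
import subprocess

# Assumed repository id and local directory; verify both before use.
REPO_ID = "microsoft/BitNet-b1.58-2B-4T-gguf"
LOCAL_DIR = "models/BitNet-b1.58-2B-4T"

def build_download_command(repo_id, local_dir):
    """Return the huggingface-cli invocation as an argument list."""
    return ["huggingface-cli", "download", repo_id, "--local-dir", local_dir]

def download(repo_id, local_dir, dry_run=True):
    """Print the command; execute it only when dry_run is False."""
    cmd = build_download_command(repo_id, local_dir)
    print(shlex.join(cmd))
    if not dry_run:
        subprocess.run(cmd, check=True)
    return cmd

# Dry run: prints the command and does not touch the network.
cmd = download(REPO_ID, LOCAL_DIR)
```

Calling `download(REPO_ID, LOCAL_DIR, dry_run=False)` would perform the actual download into the local directory.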

What license does BitNet use?

BitNet uses the MIT License, which allows free use, modification, and commercial deployment, provided the copyright and license notice are retained.