What is CodeGeeX2?

CodeGeeX2 is the second-generation multilingual code generation model from THUDM. It improves on the original CodeGeeX with better benchmark performance, faster inference, and support for over 100 programming languages.

When was CodeGeeX2 released?

CodeGeeX2 was released on July 24, 2023, as an upgrade to the original CodeGeeX model.

Is CodeGeeX2 free to use?

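Yes, it is free to use: the model weights are on Hugging Face and can be deployed locally. Note that the repository code is released under the Apache-2.0 license, while the weights fall under a separate model license that is open for academic research and asks commercial users to register.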

What languages does CodeGeeX2 support?

It supports over 100 programming languages, and it also handles prompts and comments written in natural languages other than English.

How can I use CodeGeeX2?

You can use it through the VS Code extension for real-time suggestions, through local inference with transformers, or through the web demo and Hugging Face Spaces.

Does CodeGeeX2 require internet?

Not for local use: once the weights are downloaded, the model runs fully offline. The web demo and the VS Code extension do need a connection.

How does CodeGeeX2 compare to newer models?

It was solid for its time and has good multilingual support, but newer code models such as DeepSeek-Coder outperform it on benchmarks.

Is CodeGeeX2 still maintained?

The repository is largely community-driven, and updates have been limited since the 2023 release, but the weights remain usable.

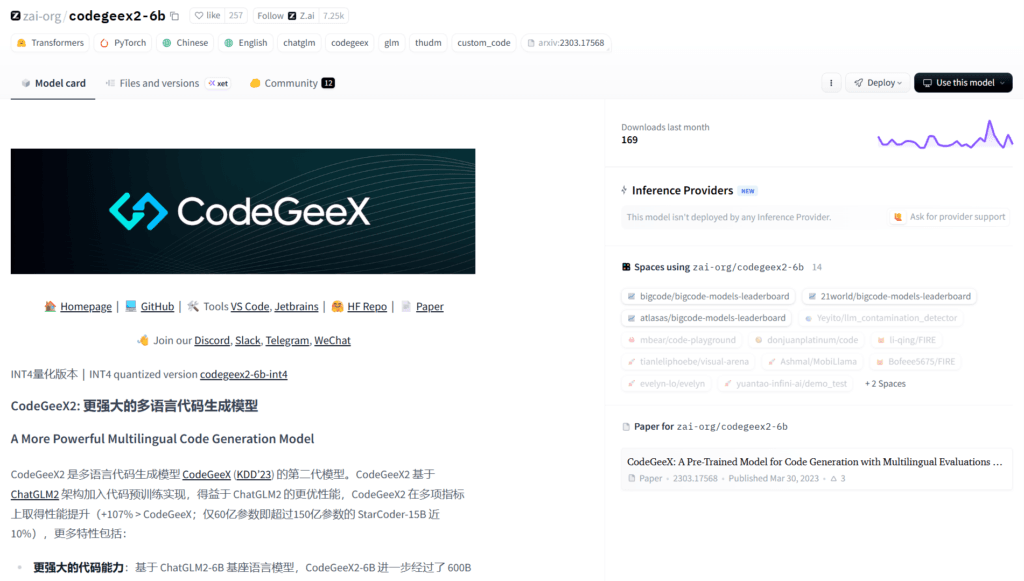

CodeGeeX2

About This AI

CodeGeeX2 is the second-generation multilingual code generation model from THUDM (Tsinghua University KEG), built upon the ChatGLM2-6B architecture and trained on vast code corpora.

It significantly improves over the original CodeGeeX with enhanced performance, faster inference, lighter footprint, and support for over 100 programming languages.

Key strengths include superior code completion, generation from natural language instructions, infilling, translation between languages, and handling complex programming tasks across domains.

The model performs well on benchmarks such as HumanEval-X (the multilingual extension of HumanEval) and MBPP, with substantially higher pass@1 scores than the original CodeGeeX in many languages.

It supports VS Code extension integration for real-time suggestions, web demo, API usage, and local deployment options.

Released on July 24, 2023, CodeGeeX2 became popular among developers for its openly available weights, strong multilingual capabilities, and efficiency on consumer hardware.

With community adoption via Hugging Face (variants such as the 6B model and quantized builds), thousands of GitHub stars, and integration in tools like Continue.dev or custom setups, it remains a practical open LLM for coding assistance.

Ideal for developers needing reliable, fast, multilingual code generation without heavy compute requirements.

Key Features

- Multilingual code generation: Supports over 100 programming languages with strong performance across them

- Code completion and infilling: Context-aware suggestions, fill-in-the-middle capability for partial code

- Natural language to code: Translate instructions or comments into functional code

- Code translation: Convert code between different languages accurately

- Fast inference: Lightweight architecture enables quick responses even on modest hardware

- VS Code extension: Real-time integration for IDE-based usage with autocomplete and chat

- Web demo and API: Online playground and endpoints for easy testing/integration

- Quantized variants: 4-bit/8-bit versions on Hugging Face for reduced memory use

- Open weights: Full model weights are published for fine-tuning and local use (code under Apache-2.0; weights under a separate model license)

- Improved benchmarks: Higher pass@1 on HumanEval-X, MBPP, and other multilingual evals vs CodeGeeX
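
The pass@1 figures mentioned above are a special case of the pass@k metric commonly used for code benchmarks. As a rough sketch (this is not CodeGeeX2's own evaluation code), the widely used unbiased estimator can be computed as follows, where `n` is the number of samples generated per problem, `c` is how many of them pass the unit tests, and `k` is the attempt budget. The function name `pass_at_k` is illustrative.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem: 1 - C(n-c, k) / C(n, k).

    n: samples generated, c: samples that passed, k: attempt budget.
    """
    if not 0 <= c <= n:
        raise ValueError("c must satisfy 0 <= c <= n")
    if not 1 <= k <= n:
        raise ValueError("k must satisfy 1 <= k <= n")
    # With fewer than k failing samples, every size-k subset contains a pass.
    if n - c < k:
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def mean_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average pass@k over problems; results holds (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)
```

For k = 1 the estimator reduces to the fraction of passing samples, c / n, which is why pass@1 is often read as "how often the first attempt is correct".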

Price Plans

- Free ($0): All features are available at no cost, with no paid tiers or subscriptions; the code is Apache-2.0 licensed, and commercial use of the weights requires registration under the model license

Pros

- Strong multilingual support: Excels in non-English programming languages and cross-language tasks

- Efficient and lightweight: Runs well on consumer GPUs compared to larger models

- Free and open: No cost for research or personal use; commercial use of the weights requires registering under the model license

- Easy integration: VS Code plugin, Hugging Face, and simple local setup

- Good performance gains: Significant improvements over original CodeGeeX in speed and quality

- Community ecosystem: GitHub repository, Hugging Face models, and third-party tools/extensions

Cons

- Smaller context window: Limited compared to modern 128K+ token models

- No longer cutting-edge: Outperformed by newer code models such as DeepSeek-Coder or CodeLlama variants

- Requires setup for local use: Needs GPU and dependencies for best performance

- Occasional hallucinations: Can generate incorrect or insecure code in edge cases

- Limited official support: Community-driven maintenance post-release

- Not a chat-tuned model: The base weights are completion-focused; conversational use relies on the IDE extension rather than a GPT-style chat model

Use Cases

- Code autocompletion: Real-time suggestions in VS Code or other IDEs

- Prototyping functions: Generate boilerplate or algorithms from natural language

- Code translation: Convert legacy code to modern languages or frameworks

- Learning programming: Explain and generate examples in multiple languages

- Debugging assistance: Suggest fixes or alternative implementations

- Multilingual projects: Work seamlessly in non-English codebases

- Custom fine-tuning: Adapt for domain-specific code generation

Target Audience

- Individual developers: Seeking free, local AI coding help without API costs

- Open-source contributors: Using/fine-tuning for community projects

- Students and learners: Practicing coding with multilingual support

- Researchers in code LLMs: Benchmarking or extending the model

- Teams with limited budget: Preferring offline/open alternatives to paid tools

- Non-English programmers: Benefiting from strong multilingual capabilities

How To Use

- Visit GitHub: Go to github.com/THUDM/CodeGeeX2 for README and installation guide

- Install VS Code extension: Search 'CodeGeeX' in VS Code marketplace or use provided link

- Local setup: Clone repo, install dependencies (transformers, torch), download weights from Hugging Face

- Run inference: Use the provided scripts or load the model through transformers (the checkpoint ships custom model code, so loading needs trust_remote_code=True)

- Prompt in IDE: Write partial code or natural language comment; accept suggestions

- Web demo: Try online playground if available on Hugging Face or official links

- Fine-tune: Use PEFT or full training scripts for custom datasets
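
The local setup and inference steps above can be sketched roughly as follows. This is a minimal sketch, not the official script: it assumes the weights live under the Hugging Face id `THUDM/codegeex2-6b`, that `transformers` and `torch` are installed, and that a CUDA GPU is available. The helper names (`load_model`, `complete`, `demo`) and the generation settings are illustrative choices.

```python
MODEL_ID = "THUDM/codegeex2-6b"  # assumed Hugging Face repo id for the 6B weights


def load_model(device: str = "cuda"):
    """Download (on first call) and load the tokenizer and model."""
    # Imported lazily so this file can be imported without transformers installed.
    from transformers import AutoModel, AutoTokenizer

    # The checkpoint ships its own model code, hence trust_remote_code=True.
    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID, trust_remote_code=True)
    model = AutoModel.from_pretrained(MODEL_ID, trust_remote_code=True)
    if device != "cpu":
        model = model.half()  # FP16 roughly halves GPU memory use
    return tokenizer, model.to(device).eval()


def complete(prompt: str, tokenizer, model, max_length: int = 256) -> str:
    """Complete `prompt` and return the decoded text (prompt included)."""
    inputs = tokenizer.encode(prompt, return_tensors="pt").to(model.device)
    # top_k=1 is an assumed setting that keeps sampling close to greedy decoding.
    outputs = model.generate(inputs, max_length=max_length, top_k=1)
    return tokenizer.decode(outputs[0])


def demo() -> None:
    """Example run; downloads several GB of weights on first use."""
    tokenizer, model = load_model()
    prompt = "# language: Python\n# write a bubble sort function\n"
    print(complete(prompt, tokenizer, model))
```

Calling `demo()` performs the download and prints one completion. After the first run the weights are cached locally, so later calls work without a network connection.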

How we rated CodeGeeX2

- Performance: 4.3/5

- Accuracy: 4.4/5

- Features: 4.2/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.5/5

- Customization: 4.6/5

- Data Privacy: 5.0/5

- Support: 4.1/5

- Integration: 4.4/5

- Overall Score: 4.4/5

CodeGeeX2 integration with other tools

- VS Code Extension: Official plugin for real-time code completion and chat in IDE

- Hugging Face Transformers: Load the weights and run inference with the transformers library

- Continue.dev: Compatible with open-source autocomplete frameworks

- Local Hardware: Runs offline on GPUs/CPUs with quantization support

- Custom Scripts: Integrate into Jupyter, CLI tools, or build pipelines
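
To judge whether the model fits on a given GPU or CPU, a back-of-the-envelope estimate of weight memory at different precisions can help. The sketch below assumes roughly 6 billion parameters (from the "6B" name) and counts weights only; activations, the KV cache, and framework overhead come on top, so real usage is higher. The function name is illustrative.

```python
def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Approximate memory needed for the weights alone, in GiB."""
    if num_params < 0 or bits_per_param <= 0:
        raise ValueError("num_params must be >= 0 and bits_per_param > 0")
    return num_params * bits_per_param / 8 / 2**30


NUM_PARAMS = 6e9  # assumption: about 6 billion parameters

for label, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    print(f"{label}: ~{weight_memory_gib(NUM_PARAMS, bits):.1f} GiB")
```

Under these assumptions the weights need about 11.2 GiB at FP16, 5.6 GiB at 8-bit, and 2.8 GiB at 4-bit, which is why the quantized variants matter on consumer hardware.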

Best prompts optimised for CodeGeeX2

- Write a Python function to implement quicksort with detailed comments and edge case handling.

- Convert this JavaScript array sorting code to TypeScript with proper typing: [insert code]

- Generate a React component for a todo list with add/delete/edit functionality and local storage.

- Explain and fix this buggy SQL query for joining users and orders tables: [insert query]

- Create a complete Flask API endpoint for user registration with JWT authentication and validation.
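
Because CodeGeeX2 is a completion model, prompts like those above tend to work better when written as code comments and preceded by a language tag. The helper below is a small sketch of that formatting. The tag layout (`# language: Python`) follows the style suggested upstream, but the comment-prefix table and the `build_prompt` name are assumptions made for illustration.

```python
# Comment markers for a few languages; extend as needed (illustrative subset).
COMMENT_PREFIX = {
    "Python": "#",
    "JavaScript": "//",
    "TypeScript": "//",
    "SQL": "--",
}


def build_prompt(language: str, instruction: str, code: str = "") -> str:
    """Format an instruction as a language-tagged comment block.

    Optional `code` (for example, a snippet to translate or fix) is
    appended verbatim after the comments.
    """
    prefix = COMMENT_PREFIX[language]
    lines = [f"{prefix} language: {language}"]
    # Each line of the instruction becomes its own comment line.
    lines += [f"{prefix} {line}" for line in instruction.strip().splitlines()]
    if code:
        lines.append(code.rstrip())
    return "\n".join(lines) + "\n"


print(build_prompt("Python", "Write a quicksort function with edge case handling"))
```

The returned string can be passed directly to the model as the text to complete; the trailing newline leaves the model positioned at the start of a fresh line of code.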

