What is FixRes?

FixRes is a method and open-source repo from Meta AI (Facebook Research) to fix the train-test resolution discrepancy in CNNs for better image classification accuracy at higher resolutions.

When was FixRes released?

The paper ‘Fixing the train-test resolution discrepancy’ was published at NeurIPS 2019, and the GitHub repo was released around the same time.

Is FixRes still maintained?

No, the repository was archived and made read-only on May 1, 2024; it is no longer actively updated.

What performance gains does FixRes provide?

It raises ImageNet Top-1 accuracy through resolution-aligned fine-tuning. For example, ResNet-50 reaches 79.0% at 384px versus a 77.0% baseline at 224px, a gain of 2 percentage points.

What models does FixRes support?

It works with various CNNs like ResNet-50, ResNeXt-101, PNASNet-5, and includes pre-trained FixRes weights for these on ImageNet.

How do I use FixRes?

Clone the GitHub repo, install PyTorch dependencies, download weights, and run provided scripts for evaluation, feature extraction, or fine-tuning.

Is FixRes free?

Yes. The code and models are open-source on GitHub with no usage fees; use is subject to the terms of the license included in the repository.

Is FixRes relevant in 2026?

Mostly for historical and educational purposes. It was influential for CNNs in 2019 but is less critical for modern vision transformers and foundation models.

FixRes

About This AI

FixRes is an open-source implementation from Facebook AI Research (now Meta AI) that reproduces the results of the 2019 NeurIPS paper ‘Fixing the train-test resolution discrepancy’.

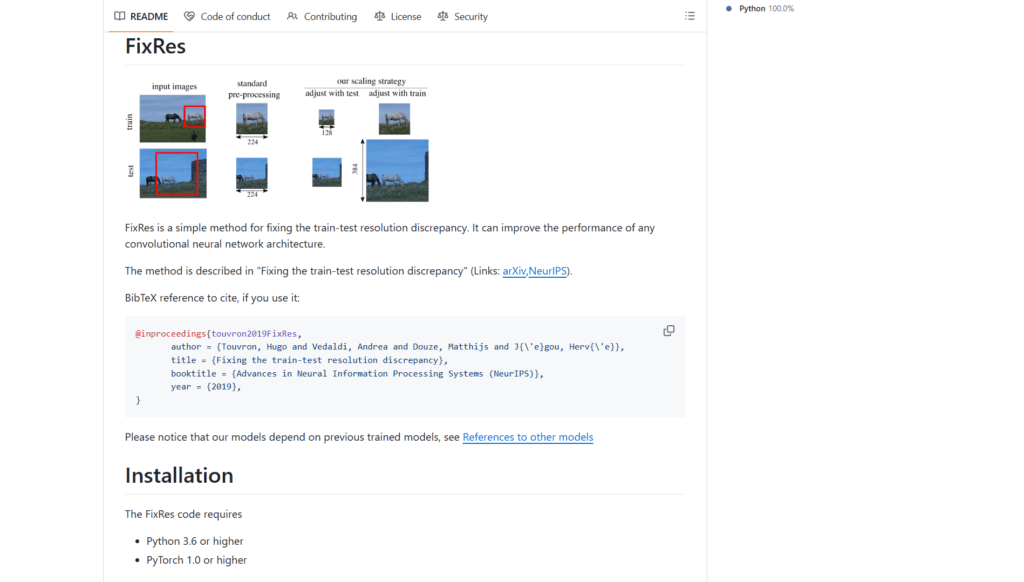

The method addresses a key issue in convolutional neural networks: the random-crop data augmentation used during training (RandomResizedCrop) makes objects appear larger during training than at test time, which leads to a performance gap.
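
To make the size discrepancy concrete, here is a small back-of-the-envelope model in Python. It is a sketch under simplifying assumptions of our own, not the paper's derivation: square images, square training crops whose area fraction s is drawn uniformly from [0.08, 1] (the default `scale` range of torchvision's RandomResizedCrop; its aspect-ratio jitter is ignored), and the usual test protocol where the center crop covers 0.875 of the resized image side (256 to 224). The function names `apparent_size_ratio` and `expected_ratio` are hypothetical.

```python
import math
import random


def apparent_size_ratio(k_train, k_test, crop_area_frac, resize_ratio=0.875):
    """Train/test ratio of an object's apparent size in pixels (simplified model).

    Assumptions: square images of side W, a square training crop covering
    `crop_area_frac` of the image area resized to k_train, and a test image
    resized to k_test / resize_ratio before center-cropping k_test.
    """
    train_zoom = k_train / math.sqrt(crop_area_frac)  # output pixels per image side (W cancels)
    test_zoom = k_test / resize_ratio
    return train_zoom / test_zoom


def expected_ratio(k_train, k_test, s_min=0.08, s_max=1.0, resize_ratio=0.875):
    """Closed-form mean of apparent_size_ratio for s ~ Uniform(s_min, s_max)."""
    # E[s ** -0.5] for uniform s is 2 * (sqrt(s_max) - sqrt(s_min)) / (s_max - s_min)
    mean_inv_sqrt = 2 * (math.sqrt(s_max) - math.sqrt(s_min)) / (s_max - s_min)
    return (k_train / k_test) * resize_ratio * mean_inv_sqrt


if __name__ == "__main__":
    print(round(expected_ratio(224, 224), 3))  # 1.364
    # Monte Carlo check of the closed form
    random.seed(0)
    samples = [apparent_size_ratio(224, 224, random.uniform(0.08, 1.0))
               for _ in range(100_000)]
    print(round(sum(samples) / len(samples), 2))
```

A ratio above 1 means objects look larger at training time. Because the ratio scales with `k_train / k_test`, raising the test resolution (for example, `expected_ratio(224, 288)`) moves it toward 1, which is the intuition for testing above the training resolution. The model ignores the shift in activation statistics that FixRes's fine-tuning step is meant to correct.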

FixRes proposes a straightforward, architecture-agnostic strategy: train at a lower resolution for efficiency, then fine-tune the classifier and the last batch-normalization layer before global pooling at the higher test resolution.

This enables strong performance at larger input sizes without full retraining, reducing training time while improving accuracy.

It also supports testing at multiple resolutions and averaging predictions for additional gains.
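
One way to average predictions across resolutions, sketched in PyTorch: the batch is resized to each test size with bilinear interpolation and the softmax outputs are averaged. The function name, the default sizes, and interpolating an already-cropped batch (rather than re-cropping each original image) are assumptions for illustration, not the repository's procedure.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


@torch.no_grad()
def multires_predict(model: nn.Module, images: torch.Tensor,
                     sizes=(224, 288, 384)) -> torch.Tensor:
    """Average softmax outputs over several square input resolutions."""
    model.eval()
    probs = []
    for s in sizes:
        resized = F.interpolate(images, size=(s, s), mode="bilinear",
                                align_corners=False)
        probs.append(F.softmax(model(resized), dim=1))
    return torch.stack(probs).mean(dim=0)


# Tiny stand-in classifier: global pooling lets it accept any input size.
toy = nn.Sequential(nn.Conv2d(3, 8, 3, padding=1), nn.ReLU(),
                    nn.AdaptiveAvgPool2d(1), nn.Flatten(), nn.Linear(8, 10))
out = multires_predict(toy, torch.randn(4, 3, 256, 256))
print(out.shape)  # torch.Size([4, 10])
```

Averaging probabilities keeps each row a valid distribution; any CNN ending in global pooling (such as torchvision's ResNets) can be passed in place of the toy model.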

The repository provides pre-trained models (ResNet-50, ResNeXt-101, PNASNet-5, etc.), evaluation scripts, feature extraction tools, fine-tuning code, and extracted ImageNet features for reproducibility.

Claimed gains include significant Top-1 accuracy improvements on ImageNet (e.g., ResNet-50 from 77.0% at 224px to 79.0% at 384px after FixRes fine-tuning).

Released in 2019 alongside the paper (arXiv:1906.06423), the repo was archived and made read-only in May 2024, indicating no active maintenance.

While influential in its time for resolution-aware training, it is now considered historical research in 2026, with modern vision models (transformers, etc.) handling resolutions differently.

It remains useful for academic reproduction, baseline comparisons, or understanding resolution effects in classic CNNs.

Key Features

- Resolution discrepancy fix: Fine-tune classifier at higher test resolution after low-res training

- Architecture agnostic: Works with any CNN (ResNet, ResNeXt, PNASNet, EfficientNet, etc.)

- Pre-trained FixRes models: Enhanced weights for ImageNet at various high resolutions

- Feature extraction: Scripts to extract embeddings/softmax from validation set

- Evaluation and fine-tuning scripts: Ready-to-run code for ImageNet testing and adaptation

- Multi-resolution testing: Average predictions across resolutions for extra accuracy

- Data augmentation support: Includes advanced transforms (CutMix, etc.) for better generalization

- Reproducibility tools: Provided extracted features and checkpoints for quick verification

Price Plans

- Free ($0): Fully open-source repository with code, pre-trained models, and scripts available on GitHub under the license included in the repository; no usage fees

Pros

- Significant accuracy gains: Boosts ImageNet Top-1 by roughly 1-3 percentage points at higher resolutions with minimal cost

- Computationally efficient: Only the classifier and final batch-norm layer are fine-tuned at high resolution, avoiding a full retrain

- Easy to apply: Simple method integrable into existing training pipelines

- Strong reproducibility: Detailed scripts, weights, and features for reproducing the paper's results

- Architecture independent: Improves various CNN backbones without architectural changes

- Influential research: Cited widely and shaped resolution-aware practices in CV

Cons

- Archived and unmaintained: Read-only since May 2024; no updates or bug fixes

- Outdated in 2026: Focuses on classic CNNs; less relevant for modern ViTs or foundation models

- Requires PyTorch setup: Dependencies and ImageNet data needed for running

- Limited scope: Primarily for classification; not for segmentation/detection

- No modern integrations: No API, web demo, or easy cloud use; local only

- Hardware demands: Fine-tuning at high res still needs GPU resources

- No active community: Low recent activity since archival

Use Cases

- Academic research reproduction: Verify and build upon the 2019 FixRes paper results

- Baseline for resolution studies: Compare modern methods against this classic fix

- CNN fine-tuning experiments: Apply the technique to custom classification tasks

- ImageNet benchmarking: Use provided features/models for quick evaluation

- Educational purposes: Teach resolution effects in deep learning courses

- Legacy CNN improvement: Boost older models on high-res datasets

Target Audience

- Computer vision researchers: Studying resolution impacts or reproducing old papers

- Students and educators: Learning CNN training tricks and augmentation effects

- ML engineers: Applying simple fixes to legacy classification pipelines

- Academic labs: Using as baseline in resolution-aware method comparisons

- Open-source enthusiasts: Forking/experimenting with archived Meta AI code

How To Use

- Clone repo: git clone https://github.com/facebookresearch/FixRes.git

- Install dependencies: pip install -r requirements.txt (PyTorch 1.0+, torchvision, etc.)

- Download models: Get pre-trained FixRes weights from repo links or releases

- Evaluate model: python main_evaluate_imnet.py --input-size 384 --architecture ResNet50 --weight-path ResNet50.pth

- Extract features: python main_extract.py --input-size 384 --architecture ResNet50 --weight-path ResNet50.pth --save-path output_dir

- Fine-tune: python main_finetune.py --input-size 384 --architecture ResNet50 --epochs 56 --batch 64

- Train from scratch: python main_resnet50_scratch.py --batch 64 --epochs <num_epochs> (replace <num_epochs> with the number of training epochs you want)

How we rated FixRes

- Performance: 4.2/5

- Accuracy: 4.5/5

- Features: 4.0/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 3.8/5

- Customization: 4.1/5

- Data Privacy: 5.0/5

- Support: 3.0/5

- Integration: 4.0/5

- Overall Score: 4.1/5

FixRes integration with other tools

- PyTorch Ecosystem: Built on PyTorch and torchvision for model definitions and transforms

- ImageNet Dataset: Designed for standard ImageNet training/evaluation pipelines

- Pretrained Models Repo: Compatible with pretrained-models.pytorch library for base weights

- Local Training Setup: Runs on standard GPU machines with CUDA; no cloud dependency

- Research Frameworks: Easily integrable into custom CV research codebases or Jupyter notebooks

Best prompts optimised for FixRes

- Not applicable: FixRes is a training method and code repo for fixing the resolution discrepancy in CNN image classification, not a prompt-based generative tool. It uses scripts and model weights rather than text prompts.
