What is FunctionGemma?

FunctionGemma is a specialized 270M-parameter model from Google, fine-tuned from Gemma 3 for reliable function calling, turning natural language into structured API/tool actions on edge devices.

When was FunctionGemma released?

It was released on December 18, 2025, as part of Google’s Gemma family updates.

Is FunctionGemma free to use?

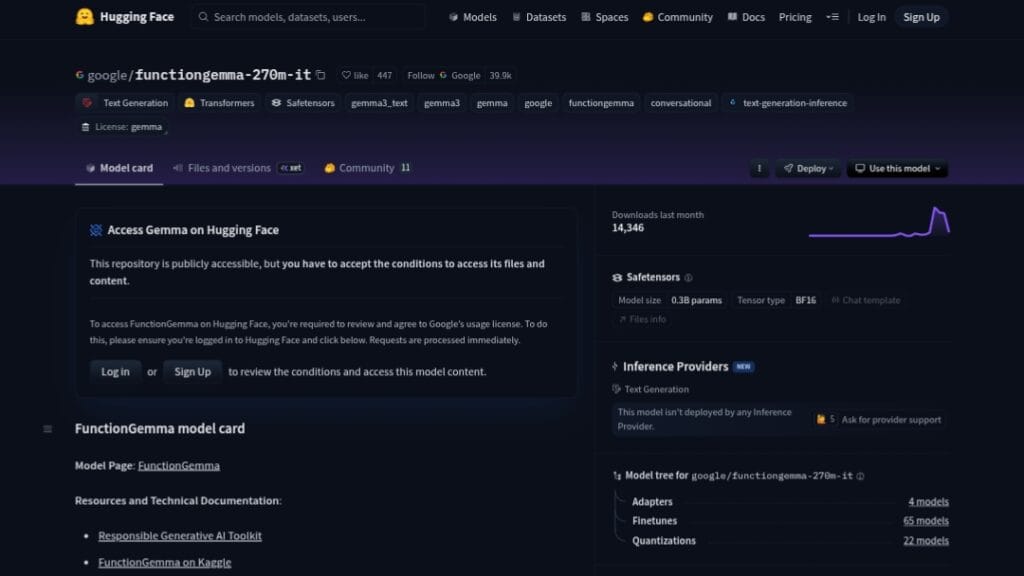

Yes. The model weights are openly available on Hugging Face and Kaggle under Google's Gemma terms of use, and there is no cost for download or local inference.

What devices can run FunctionGemma?

It is optimized for edge hardware like mobile phones, NVIDIA Jetson Nano, and low-power GPUs/CPUs for on-device, offline use.

How accurate is FunctionGemma for function calling?

Baseline accuracy is around 58 percent on Mobile Actions benchmarks, but fine-tuning can boost it significantly (e.g., to 85 percent).

Can I fine-tune FunctionGemma?

Yes, it is designed as a strong base for customization; Google provides cookbooks, datasets (e.g., Mobile Actions), and guides for fine-tuning.

What frameworks support FunctionGemma?

It works with Hugging Face Transformers, Ollama, LM Studio, vLLM, MLX, llama.cpp, Vertex AI, and other frameworks for inference and training.

What is the main use case for FunctionGemma?

Its main use case is building private, fast, on-device agents for mobile apps, games, IoT, or embedded systems that execute real-world actions from natural language commands.

FunctionGemma

About This AI

FunctionGemma is a specialized fine-tuned variant of Google’s Gemma 3 270M model, optimized specifically for reliable function calling and tool use.

Released on December 18, 2025, it translates natural language inputs into structured function calls and API actions, enabling lightweight, private, local AI agents that run efficiently on edge devices like mobile phones or NVIDIA Jetson Nano.

It supports unified action and chat generation, multi-turn reasoning, and command decomposition, and it handles complex schemas with longer tool definitions without performance degradation.

Key strengths include high customization potential via fine-tuning (e.g., raising accuracy from 58 percent to 85 percent on Mobile Actions benchmarks), a 256k-token vocabulary for efficient JSON and multilingual tokenization, and compatibility with a broad ecosystem of deployment and training tools.
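To put the quoted fine-tuning figures in perspective, the short calculation below works out what a move from 58 percent to 85 percent means in absolute and relative terms. It uses only the two numbers stated above; the variable names are illustrative, and the result says nothing about how the benchmark itself is scored.

```python
# Figures quoted in the text: Mobile Actions accuracy before and after fine-tuning.
baseline_acc = 0.58
finetuned_acc = 0.85

# Absolute improvement, expressed in percentage points.
absolute_gain = finetuned_acc - baseline_acc

# The same change viewed as a reduction in the error rate.
baseline_err = 1.0 - baseline_acc
finetuned_err = 1.0 - finetuned_acc
relative_error_reduction = (baseline_err - finetuned_err) / baseline_err

print(f"Absolute gain: {absolute_gain * 100:.0f} percentage points")
print(f"Error rate: {baseline_err * 100:.0f}% -> {finetuned_err * 100:.0f}%")
print(f"Relative error reduction: {relative_error_reduction * 100:.0f}%")
```

In other words, the quoted gain is 27 percentage points, which corresponds to roughly 64 percent fewer failed calls than the baseline.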

Designed for on-device privacy, low latency, and cost-effectiveness, it serves as a strong base for building specialized agents in mobile apps, games, or embedded systems.

With weights openly available on Hugging Face and Kaggle, it benefits from the Gemma family’s massive adoption (over 300 million downloads across the family in 2025).

Ideal for developers creating fast, offline-capable apps that execute real-world actions (calendar events, device controls, game commands) while maintaining data privacy and minimal compute requirements.

Key Features

- Native function calling: Translates natural language to structured API/tool calls with high reliability

- Unified action and chat: Generates both executable calls and natural language summaries/responses

- Multi-turn reasoning: Handles complex, decomposed commands across conversations

- Lightweight edge deployment: Runs efficiently on mobile CPUs, NPUs, GPUs, and low-power devices

- High customizability: Strong base for fine-tuning to specific domains or tools (e.g., Mobile Actions)

- 256k vocabulary: Efficient handling of JSON, long tool definitions, and multilingual inputs

- Broad ecosystem support: Compatible with Hugging Face, Unsloth, Keras, NeMo, LiteRT-LM, vLLM, MLX, llama.cpp, Ollama, and Vertex AI

- Privacy-focused: Fully on-device execution with no cloud dependency for core inference

- Low-latency performance: Optimized for real-time agentic applications on edge hardware
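The function-calling features listed above imply an application-side step: something has to take the structured call and run the matching code. The sketch below shows one way that step might look. It assumes the call arrives as JSON of the form `{"name": ..., "arguments": {...}}`, which is an illustrative format and not necessarily FunctionGemma's native output syntax; the tool functions and the `dispatch` helper are hypothetical, and no model is invoked.

```python
import json
from typing import Callable, Dict

# Hypothetical device actions; a real app would call platform APIs here.
def set_flashlight(on: bool) -> str:
    return "Flashlight turned on." if on else "Flashlight turned off."

def create_calendar_event(title: str, time: str) -> str:
    return f"Created event '{title}' at {time}."

# Registry mapping tool names to the Python callables that implement them.
TOOLS: Dict[str, Callable[..., str]] = {
    "set_flashlight": set_flashlight,
    "create_calendar_event": create_calendar_event,
}

def dispatch(call_json: str) -> str:
    """Run one structured call of the assumed form {"name": ..., "arguments": {...}}."""
    call = json.loads(call_json)
    name = call.get("name")
    if name not in TOOLS:
        return f"Unknown tool: {name}"
    try:
        return TOOLS[name](**call.get("arguments", {}))
    except TypeError as exc:  # missing or unexpected arguments
        return f"Bad arguments for {name}: {exc}"

# Stand-in for model output; hand-written for this sketch.
model_output = '{"name": "set_flashlight", "arguments": {"on": true}}'
print(dispatch(model_output))
```

Keeping the registry explicit means the model can only trigger functions the app has deliberately exposed, which matters when calls execute real device actions.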

Price Plans

- Free ($0): Model weights, code, and documentation are openly available on Hugging Face and Kaggle under Google's Gemma terms of use; no usage fees or subscriptions are required for download or local inference

Pros

- Extremely lightweight: 270M parameters enable fast, low-power on-device use

- Strong fine-tuning base: Domain-specific training can raise accuracy significantly (from 58 percent to 85 percent on Mobile Actions)

- Privacy and offline capable: Keeps data local, ideal for mobile/embedded agents

- Open-source accessibility: Free weights, code, and guides on Hugging Face/Kaggle

- Ecosystem compatibility: Works with major inference and training frameworks

- Cost-effective: No inference costs for local runs; leverages Gemma family momentum

- Edge AI focus: Addresses reliability bottlenecks in on-device function calling

Cons

- Small size trade-offs: Less general knowledge than larger models; best after fine-tuning

- Requires API definition: Needs clear tool schemas for optimal performance

- Zero-shot limitations: Baseline accuracy lower without customization (58 percent on benchmarks)

- Hardware dependent: Real-time use needs capable edge devices

- Recent release: Limited community fine-tunes/examples compared to older Gemma variants

- No hosted API: Intended for local or self-hosted use; any cloud deployment (e.g., through Vertex AI) has to be set up by the developer

- Prompt sensitivity: Tuned for structured outputs, so free-form requests may need prompt engineering and carefully written tool descriptions

Use Cases

- Mobile app agents: On-device voice commands to control phone features (calendar, flashlight, apps)

- Game AI: Natural language to in-game actions or NPC behaviors

- Embedded systems: IoT device control via spoken or typed instructions

- Offline productivity tools: Local task automation without internet

- Custom agent development: Fine-tune for domain-specific tool calling (e.g., business APIs)

- Edge robotics: Simple command-to-action mapping on low-power hardware

Target Audience

- Mobile developers: Building privacy-focused, on-device AI agents

- Edge AI engineers: Creating low-latency, offline function-calling models

- Game developers: Integrating natural language controls in apps/games

- Embedded/IoT teams: Adding voice/API agents to devices

- AI researchers: Fine-tuning small models for tool use and agents

- Open-source enthusiasts: Experimenting with Gemma family variants

How To Use

- Download model: Get weights from Hugging Face (google/functiongemma-270m-it) or Kaggle

- Set up environment: Install a compatible framework (e.g., Hugging Face Transformers, Ollama, LM Studio, or llama.cpp)

- Load and run: Use inference code to input natural language and receive structured function calls

- Define tools: Provide JSON schemas for available functions/APIs in prompts

- Fine-tune (optional): Use provided cookbooks/datasets (e.g., Mobile Actions) to specialize model

- Deploy on device: Integrate with Android/iOS via LiteRT-LM or edge hardware via NVIDIA tools

- Test locally: Try demos like Google AI Edge Gallery app or Physics Playground
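The download, load, and define-tools steps above can be sketched with Hugging Face Transformers as follows. This is a minimal sketch under stated assumptions: the `set_flashlight` tool and the `generate_call` helper are hypothetical, the model's own card may require a specific system or developer prompt and defines the exact output format, and access to the weights may require accepting terms on Hugging Face. The Transformers import is kept inside the function so the schema can be inspected without the library or the weights installed.

```python
import json

MODEL_ID = "google/functiongemma-270m-it"  # ID quoted in the steps above

# Hypothetical tool definition in the JSON-schema style that chat templates
# in Hugging Face Transformers accept through the `tools` argument.
flashlight_tool = {
    "type": "function",
    "function": {
        "name": "set_flashlight",
        "description": "Turn the device flashlight on or off.",
        "parameters": {
            "type": "object",
            "properties": {
                "on": {"type": "boolean", "description": "True to turn on."}
            },
            "required": ["on"],
        },
    },
}

def generate_call(prompt: str, max_new_tokens: int = 64) -> str:
    """Return the raw text the model generates for `prompt` (downloads weights)."""
    # Imported lazily so the schema above can be used without transformers.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForCausalLM.from_pretrained(MODEL_ID)
    messages = [{"role": "user", "content": prompt}]
    inputs = tokenizer.apply_chat_template(
        messages,
        tools=[flashlight_tool],
        add_generation_prompt=True,
        return_dict=True,
        return_tensors="pt",
    )
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Keep only the newly generated tokens, not the echoed prompt.
    new_tokens = output_ids[0][inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=False)

if __name__ == "__main__":
    # Printing the schema needs neither the model nor a network connection.
    print(json.dumps(flashlight_tool, indent=2))
```

Calling `generate_call("Turn on the flashlight")` would return the model's raw text, which the application then has to parse according to the format documented for the model.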

How we rated FunctionGemma

- Performance: 4.5/5

- Accuracy: 4.4/5

- Features: 4.6/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.3/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.5/5

- Integration: 4.7/5

- Overall Score: 4.6/5

FunctionGemma integration with other tools

- Hugging Face Transformers: Primary inference and fine-tuning support

- Ollama / LM Studio: Easy local running and testing on desktop

- Google AI Edge / LiteRT-LM: Optimized deployment on Android and edge devices

- Vertex AI: Cloud fine-tuning or hybrid workflows

- vLLM / MLX / llama.cpp: High-performance inference options

Best prompts optimised for FunctionGemma

- Create a calendar event for lunch with John tomorrow at 12:30 PM in San Francisco.

- Turn on the flashlight and set brightness to maximum.

- Search for nearby coffee shops and open the first one in maps.

- Send a text to Mom saying I'll be home late tonight.

- Play my workout playlist on Spotify and start at song 3.
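Prompts like these only become useful once the resulting call is checked before it is executed. The sketch below takes the calendar prompt from the list and shows one plausible structured call for it, together with a shallow validation against a tool schema. The schema, its field names, and the `validate_call` helper are all hypothetical, and the call is hand-written for illustration, not real model output.

```python
from typing import Any, Dict, List

# Hypothetical schema for a calendar tool; field names are illustrative only.
CREATE_EVENT_SCHEMA: Dict[str, Any] = {
    "name": "create_calendar_event",
    "parameters": {
        "type": "object",
        "properties": {
            "title": {"type": "string"},
            "date": {"type": "string"},
            "time": {"type": "string"},
            "location": {"type": "string"},
        },
        "required": ["title", "date", "time"],
    },
}

# Maps JSON-schema type names to Python types for a shallow check.
_PY_TYPES = {"string": str, "boolean": bool, "integer": int, "number": (int, float)}

def validate_call(call: Dict[str, Any], schema: Dict[str, Any]) -> List[str]:
    """Return a list of problems; an empty list means the call looks usable."""
    problems: List[str] = []
    if call.get("name") != schema["name"]:
        problems.append(f"unexpected tool name: {call.get('name')!r}")
    args = call.get("arguments", {})
    params = schema["parameters"]
    for field in params["required"]:
        if field not in args:
            problems.append(f"missing required argument: {field}")
    for key, value in args.items():
        spec = params["properties"].get(key)
        if spec is None:
            problems.append(f"unknown argument: {key}")
        elif not isinstance(value, _PY_TYPES[spec["type"]]):
            problems.append(f"wrong type for {key}")
    return problems

# One plausible call for "Create a calendar event for lunch with John tomorrow
# at 12:30 PM in San Francisco." (hand-written, not real model output).
call = {
    "name": "create_calendar_event",
    "arguments": {"title": "Lunch with John", "date": "tomorrow",
                  "time": "12:30 PM", "location": "San Francisco"},
}
print(validate_call(call, CREATE_EVENT_SCHEMA))  # prints []
```

A check of this kind is cheap to run on-device and gives the app a chance to ask the user for a missing detail instead of executing an incomplete action.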
