What is Gemini 4?

Gemini 4 is Google’s anticipated next AI model, projected for release in Q4 2026 or early 2027. It is expected to feature advanced multimodal capabilities, autonomous agents, and integrations with Google services for intelligent assistance.

How does Gemini 4 compare to Gemini 3?

It is expected to build on Gemini 3’s strengths with larger context windows, deep thinking as a standard mode, and added spatial reasoning, while keeping top-tier benchmark performance.

What is the pricing for Gemini?

Basic access is free, but Pro features start at $20/month via Google AI subscriptions, with API usage around $1.50 per million input tokens.
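
As a rough illustration of the API figure above, input cost scales linearly with token count. The sketch below assumes the approximate $1.50 per million input tokens quoted in this answer; the function name is hypothetical, and output-token pricing is not covered here, so it is left out.

```python
# Approximate rate quoted above; treat it as an assumption, not an official price.
INPUT_RATE_USD_PER_MILLION = 1.50


def estimate_input_cost(input_tokens: int,
                        rate: float = INPUT_RATE_USD_PER_MILLION) -> float:
    """Return the estimated USD cost for a given number of input tokens."""
    if input_tokens < 0:
        raise ValueError("input_tokens must be non-negative")
    return input_tokens / 1_000_000 * rate


if __name__ == "__main__":
    for tokens in (10_000, 1_000_000, 25_000_000):
        cost = estimate_input_cost(tokens)
        print(f"{tokens:>12,} input tokens ~ ${cost:,.2f}")
```

At that rate, the $20 monthly Pro fee is worth roughly 13.3 million input tokens of API usage.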

Does Gemini support multiple languages?

Yes, it supports over 100 languages for text and multimodal processing, making it accessible worldwide.

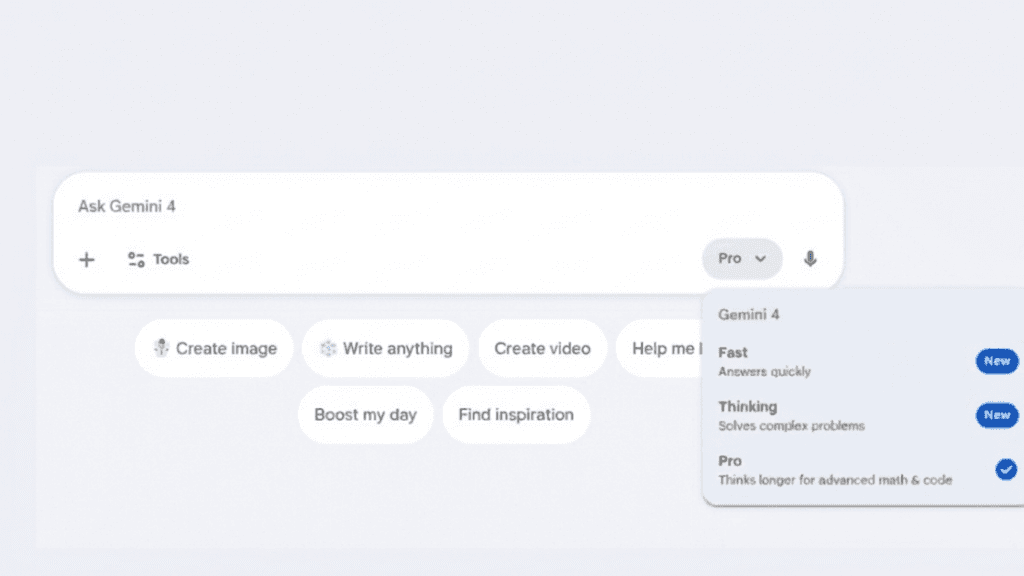

Gemini 4

Google’s Next-Generation Multimodal AI – Massive-Scale Reasoning, Autonomous Agents, and Deep Integration Across the Google Ecosystem

Last Updated: January 12, 2026

By Zelili AI

About This AI

Gemini 4 is Google’s anticipated flagship multimodal AI model, projected for release in Q4 2026 or early 2027, building on the Gemini 3 series with unprecedented scale and capabilities.

Speculative estimates put the model at over 100 trillion parameters. It is expected to offer enhanced multimodal processing of text, images, audio, video, and code, with context windows exceeding 1 million tokens for handling massive datasets or long conversations.
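
To get a feel for what a 1-million-token window means in practice, the sketch below checks whether a piece of text would fit. It assumes a rough heuristic of about 4 characters per token for English text, which is only an approximation and not Gemini's actual tokenizer; the function names and the 1,000,000 limit are illustrative.

```python
CONTEXT_LIMIT_TOKENS = 1_000_000  # the "1 million tokens" figure, used as a floor
CHARS_PER_TOKEN = 4               # rough heuristic for English text (assumption)


def estimate_tokens(text: str) -> int:
    """Estimate the token count from character length, rounding up."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(text: str, limit: int = CONTEXT_LIMIT_TOKENS) -> bool:
    """Return True if the estimated token count is within the limit."""
    return estimate_tokens(text) <= limit


if __name__ == "__main__":
    sample = "word " * 500_000  # about 2.5 million characters
    print(estimate_tokens(sample), fits_in_context(sample))
```

Under this heuristic, a 1-million-token window corresponds to roughly 4 million characters of plain English text.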

The model introduces autonomous agents for multi-step planning and execution, deep think mode for advanced reasoning, improved real-time perception, spatial/3D understanding, and proactive assistance through integrations with Gmail, Calendar, Maps, YouTube, Android devices, and Project Astra.

Expected strengths include PhD-level reasoning in math/science/coding, long-horizon planning, reduced hallucinations, and seamless ecosystem embedding for everyday productivity, creative work, and device control.

Access is expected through the Gemini app (free basic tier, paid tiers for advanced features), Google AI subscriptions (Pro/Ultra), and the Vertex AI API for developers and enterprises.

While details remain speculative until official launch, Gemini 4 aims to redefine AI as a universal assistant with action-oriented intelligence, positioning Google to lead in consumer and enterprise AI.

It builds on Gemini’s current base of 650 million+ monthly app users and 2 billion+ users of AI Overviews, promising even broader adoption through deeper Google product integration.

Key Features

- Massive parameter scale: Speculated over 100 trillion parameters for frontier-level intelligence

- Extended multimodal processing: Unified handling of text, images, audio, video, code, and spatial data

- Million-plus token context: Processes extremely long inputs for comprehensive analysis

- Autonomous agents: Multi-step planning, tool use, and proactive task execution

- Deep think mode: Advanced reasoning chains for complex math, science, and coding problems

- Real-time perception: Improved live understanding of video, audio, and environmental inputs

- Google ecosystem integration: Native ties to Gmail, Calendar, Maps, YouTube, Android, and Project Astra

- Reduced hallucinations: Enhanced factuality and epistemic humility in responses

- Spatial and 3D capabilities: Better handling of physical world understanding and visualization

- Proactive assistance: Anticipates needs and automates workflows across devices

Price Plans

- Free ($0): Basic access to Gemini app with limited prompts and lighter models

- Google AI Pro ($20/Month): Higher limits, access to advanced reasoning modes, Deep Research, and integrations

- Google AI Ultra ($250/Month): Highest limits, exclusive features like full Veo video generation, Deep Think, and priority access
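
For budgeting, the monthly prices above can be turned into yearly figures. This is a minimal sketch that assumes flat monthly billing with no annual discount, taxes, or regional pricing; the dictionary and function names are hypothetical.

```python
# Monthly prices in USD, taken from the plan list above.
PLANS_USD_PER_MONTH = {
    "Free": 0,
    "Google AI Pro": 20,
    "Google AI Ultra": 250,
}


def annual_cost(plan: str) -> int:
    """Return 12 months of the plan's listed monthly price."""
    return PLANS_USD_PER_MONTH[plan] * 12


if __name__ == "__main__":
    for name in PLANS_USD_PER_MONTH:
        print(f"{name}: ${annual_cost(name):,} per year")
```

On these numbers, Pro comes to $240 per year and Ultra to $3,000 per year, so Ultra costs 12.5 times as much as Pro.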

Pros

- Frontier multimodal excellence: Expected to lead in reasoning, planning, and cross-modal tasks

- Deep Google integration: Seamless with billions of users' daily tools for maximum utility

- Scalable access: Free basic tier plus affordable Pro/Ultra plans for advanced features

- Ecosystem advantage: Leverages Google's vast data and services for real-world relevance

- Future-proof design: Positioned for spatial AI, agents, and long-horizon intelligence

- Developer-friendly API: Vertex AI support for enterprise and custom applications

- Broad language support: Global coverage for multilingual users

Cons

- Speculative details: Features and release remain unconfirmed until official announcement

- Premium for full power: Advanced agents and high limits require paid Google AI Pro/Ultra

- Potential latency: Massive scale may increase response times for complex queries

- Privacy considerations: Deep integrations raise data usage concerns (opt-outs available)

- Competition pressure: Must outperform GPT-5 series and Claude 4 in benchmarks

- Hardware demands: On-device inference may require high-end Pixel or cloud reliance

- Gradual rollout: Features often launch in beta or select regions first

Use Cases

- Daily productivity: Automate emails, scheduling, research, and device control

- Complex reasoning: Solve advanced math, science, coding, and strategic planning

- Multimodal analysis: Process videos, images, documents for insights and summaries

- Creative workflows: Generate content, edit media, and prototype ideas with agents

- Enterprise automation: Build custom agents for business processes via API

- Personal assistance: Proactive help across Google apps and Android ecosystem

- Research and learning: Deep dives into topics with long-context understanding

Target Audience

- Everyday Google users: Seeking smarter search, assistance, and productivity

- Professionals and developers: Needing advanced reasoning and API access

- Students and researchers: For deep analysis and multimodal learning tools

- Creators and content makers: Generating/editing media with integrated AI

- Business teams: Automating workflows and decision-making

- Tech enthusiasts: Exploring frontier multimodal AI capabilities

How To Use

- Access Gemini app: Open gemini.google.com or Gemini mobile app (Android/iOS)

- Sign in: Use Google account for personalized experience and integrations

- Upgrade for Pro/Ultra: Subscribe via Google AI plans for advanced models and limits

- Prompt effectively: Use detailed queries; enable Deep Think for complex tasks

- Upload multimodal input: Add images, audio, video, or files for analysis

- Leverage agents: Ask for multi-step actions or proactive suggestions

- Integrate with Google services: Use in Gmail, Docs, Maps for contextual help

How we rated Gemini 4

- Performance: 4.9/5

- Accuracy: 4.8/5

- Features: 4.9/5

- Cost-Efficiency: 4.5/5

- Ease of Use: 4.7/5

- Customization: 4.6/5

- Data Privacy: 4.4/5

- Support: 4.7/5

- Integration: 4.9/5

- Overall Score: 4.8/5
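
As a quick sanity check, the nine category scores above can be averaged. The sketch assumes a simple unweighted mean, which is our simplification for illustration; the names are hypothetical.

```python
from statistics import mean

# Category scores out of 5, copied from the list above.
RATINGS = {
    "Performance": 4.9,
    "Accuracy": 4.8,
    "Features": 4.9,
    "Cost-Efficiency": 4.5,
    "Ease of Use": 4.7,
    "Customization": 4.6,
    "Data Privacy": 4.4,
    "Support": 4.7,
    "Integration": 4.9,
}


def average_rating(ratings: dict) -> float:
    """Return the unweighted mean of the scores, rounded to 2 decimals."""
    return round(mean(ratings.values()), 2)


if __name__ == "__main__":
    print(f"Unweighted mean: {average_rating(RATINGS)}/5")
```

The unweighted mean of the nine categories comes to 4.71, so the 4.8 overall score is not a plain average, and the weighting behind it is not specified here.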

Gemini 4 integration with other tools

- Gmail and Calendar: Direct integration for email drafting, scheduling, and meeting summaries

- Google Maps and YouTube: Real-time navigation help and video analysis/summarization

- Android Devices: On-device Gemini for voice commands, app control, and contextual assistance

- Vertex AI API: Enterprise-grade integration for custom apps and scalable workflows

- Google Workspace: Enhances Docs, Sheets, Slides with AI generation and analysis
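
For the Vertex AI route, a call from Python might look like the sketch below. It uses the `google-genai` SDK as available for current Gemini models; whether Gemini 4 will be served through the same interface is an assumption, and no Gemini 4 model ID exists yet, so the model name, project, and location are left as caller-supplied parameters. The function name `ask_gemini` is hypothetical.

```python
def ask_gemini(prompt: str, model: str, project: str, location: str) -> str:
    """Send one text prompt through Vertex AI and return the reply text."""
    # Imported lazily so this module loads even without the SDK installed.
    from google import genai  # pip install google-genai

    # vertexai=True routes the request through Vertex AI, using the
    # Google Cloud credentials configured in the environment.
    client = genai.Client(vertexai=True, project=project, location=location)
    response = client.models.generate_content(model=model, contents=prompt)
    return response.text or ""


# Example call (needs a Google Cloud project and credentials; fill in
# a model ID that is actually available to your project):
#
#   reply = ask_gemini(
#       "Summarize the key decisions in these meeting notes: ...",
#       model="<model-id>",
#       project="<your-gcp-project>",
#       location="<region>",
#   )
#   print(reply)
```

Keeping the model ID as a parameter means the same helper works with whichever Gemini model is available to you today and can be pointed at a newer one later.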

Best prompts optimised for Gemini 4

- Analyze this uploaded 30-minute meeting video: summarize key decisions, extract all action items with owners and deadlines, and suggest follow-up tasks in a prioritized list

- Plan a 7-day trip to Japan for a family of 4 on a $5000 budget: create detailed itinerary with flights, hotels, activities, and daily expenses breakdown

- Debug this Python code [paste code]: identify bugs, explain issues step-by-step, and provide fixed version with performance improvements

- Generate a professional business proposal based on this document [upload PDF]: structure with executive summary, problem statement, solution, pricing, and timeline

- Explain quantum entanglement like I'm 10 years old, then like a physics PhD student, using analogies and equations where appropriate
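
Prompts like the ones above are easy to reuse if you turn them into templates. The sketch below parameterizes the trip-planning prompt; the template and function names are hypothetical, and the wording is copied from the list above.

```python
TRIP_PROMPT = (
    "Plan a {days}-day trip to {destination} for a family of {travelers} "
    "on a ${budget} budget: create detailed itinerary with flights, hotels, "
    "activities, and daily expenses breakdown"
)


def build_trip_prompt(days: int, destination: str,
                      travelers: int, budget: int) -> str:
    """Fill the trip-planning template with concrete values."""
    if days <= 0 or travelers <= 0 or budget <= 0:
        raise ValueError("days, travelers, and budget must be positive")
    return TRIP_PROMPT.format(
        days=days, destination=destination,
        travelers=travelers, budget=budget,
    )


if __name__ == "__main__":
    print(build_trip_prompt(7, "Japan", 4, 5000))
    print(build_trip_prompt(5, "Portugal", 2, 3000))
```

The first call reproduces the Japan prompt from the list; changing the arguments gives the same structure for any other trip.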

Gemini 4 is poised to redefine multimodal AI with massive scale, autonomous agents, and deep Google ecosystem integration for proactive assistance. Its expected strengths in reasoning, long-context handling, and real-world utility make it a strong fit for productivity and creative work. While details remain speculative until launch, it promises to challenge the current leaders with seamless, action-oriented intelligence across devices.

About Author

Hi guys! We are a group of ML engineers by profession with years of experience exploring and building AI tools, LLMs, and generative technologies. We analyze new tools not just as users, but as people who understand their technical depth and real-world value. We know how overwhelming these tools can be for most people, which is why we break down complex AI concepts into simple, practical insights. Our goal is to help you discover these magical AI tools that actually save you time and make everyday work smarter, not harder. “We don’t just write about AI: we build, test, and simplify it for you.”