Gemini 4 is Google’s anticipated flagship multimodal AI model, projected for release in Q4 2026 or early 2027. It is expected to build on the Gemini 3 series with greater scale and broader capabilities.

Speculative estimates put the model at over 100 trillion parameters. It is expected to offer enhanced multimodal processing of text, images, audio, video, and code, with context windows exceeding 1 million tokens for handling massive datasets or long conversations.

The model is expected to introduce autonomous agents for multi-step planning and execution, a Deep Think mode for advanced reasoning, improved real-time perception, and spatial/3D understanding. It is also expected to offer proactive assistance through integrations with Gmail, Calendar, Maps, YouTube, Android devices, and Project Astra.

Expected strengths include PhD-level reasoning in math, science, and coding, long-horizon planning, reduced hallucinations, and seamless ecosystem embedding for everyday productivity, creative work, and device control.

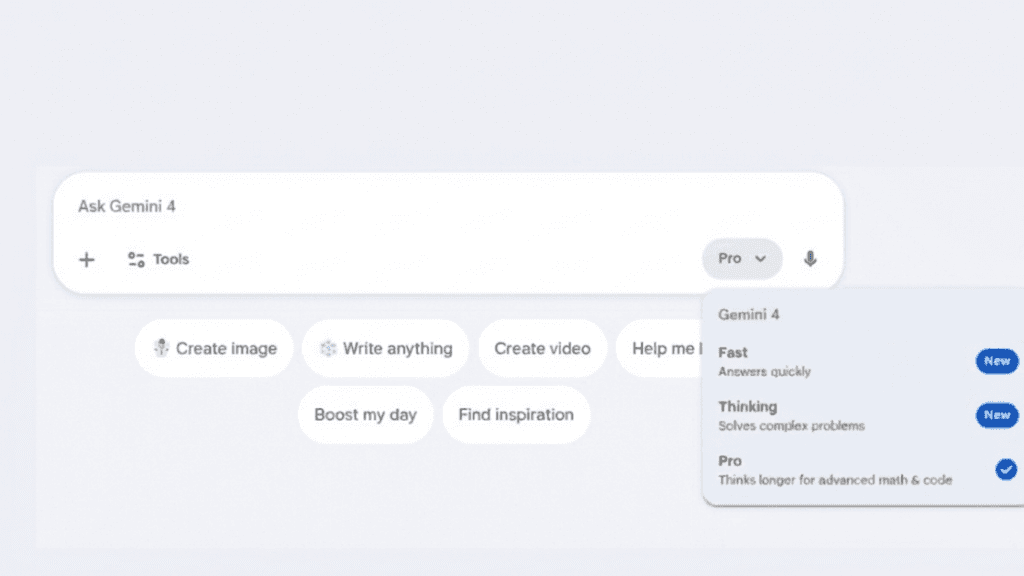

Access is expected through the Gemini app (a free basic tier, with paid tiers for advanced features), Google AI subscriptions (Pro/Ultra), and the Vertex AI API for developers and enterprises.

While details remain speculative until official launch, Gemini 4 aims to redefine AI as a universal assistant with action-oriented intelligence, positioning Google to lead in consumer and enterprise AI.

It builds on Gemini’s current base of more than 650 million monthly app users and more than 2 billion users of AI Overviews, and deeper Google product integration could broaden adoption further.

Gemini 4 is poised to redefine multimodal AI with massive scale, autonomous agents, and deep Google ecosystem integration for proactive assistance. Its expected strengths in reasoning, long-context handling, and real-world utility would make it well suited to productivity and creative work. While details remain speculative until launch, it promises to challenge current market leaders with seamless, action-oriented intelligence across devices.