What is Gemma Scope 2?

Gemma Scope 2 is an open-source interpretability suite from Google DeepMind, released December 19, 2025, featuring sparse autoencoders and transcoders to analyze internal activations and behaviors of Gemma 3 models (270M to 27B).

When was Gemma Scope 2 released?

It was officially released on December 19, 2025, with weights on Hugging Face, a technical paper, a blog post, and interactive demos made available shortly after.

Is Gemma Scope 2 free to use?

Yes, it is completely free and open-source with all weights, code, tutorials, and demos publicly available under permissive licenses for research and safety work.

What models does Gemma Scope 2 support?

It covers the full Gemma 3 family from 270M to 27B parameters, including pre-trained and instruction-tuned variants, with SAEs/transcoders for every layer.

How does Gemma Scope 2 help AI safety?

It enables tracing risks like jailbreaks, hallucinations, sycophancy, and bias by decomposing activations into interpretable features and analyzing reasoning paths.

Where can I try Gemma Scope 2?

You can explore the interactive demo at neuronpedia.org/gemma-scope-2, follow the Colab notebooks for tutorials, or download the weights from Hugging Face for local use.

What is new in Gemma Scope 2 compared to the original?

It adds coverage for Gemma 3 models, retrained SAEs/transcoders, skip-transcoders, cross-layer support, and broader safety-focused analysis capabilities.

Who should use Gemma Scope 2?

Primarily AI safety researchers, mechanistic interpretability experts, and teams auditing or aligning large language models like Gemma 3.

Gemma Scope 2

About This AI

Gemma Scope 2 is a groundbreaking open-source interpretability toolkit released by Google DeepMind on December 19, 2025, designed to provide researchers with unprecedented visibility into the internal workings of the Gemma 3 family of models.

It consists of a comprehensive suite of sparse autoencoders (SAEs) and transcoders trained across every layer and sub-layer of Gemma 3 models, ranging from 270M to 27B parameters.

These tools act as a ‘microscope’ for LLMs, decomposing dense internal activations into interpretable concepts or features, enabling analysis of emergent behaviors, auditing AI agents, debugging issues, and developing mitigations for risks like jailbreaks, hallucinations, sycophancy, and bias.
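To make the "microscope" idea concrete, here is a minimal NumPy sketch of how a sparse autoencoder decomposes activation vectors into features and reconstructs them. It assumes the JumpReLU architecture and the parameter names (`W_enc`, `b_enc`, `W_dec`, `b_dec`, `threshold`) used by the original Gemma Scope release; Gemma Scope 2's exact architecture may differ, so treat this as an illustration rather than the official implementation. The weights are random placeholders.

```python
import numpy as np


class JumpReLUSAE:
    """Minimal JumpReLU sparse autoencoder (illustrative sketch, not official code).

    Parameter names follow the original Gemma Scope convention; whether
    Gemma Scope 2 uses the same names is an assumption.
    """

    def __init__(self, W_enc, b_enc, W_dec, b_dec, threshold):
        self.W_enc = W_enc          # (d_model, d_sae): activations -> feature pre-activations
        self.b_enc = b_enc          # (d_sae,)
        self.W_dec = W_dec          # (d_sae, d_model): one decoder direction per feature
        self.b_dec = b_dec          # (d_model,)
        self.threshold = threshold  # (d_sae,): per-feature JumpReLU threshold (positive)

    def encode(self, x):
        """Dense activations (..., d_model) -> sparse feature activations (..., d_sae)."""
        pre = x @ self.W_enc + self.b_enc
        # JumpReLU: a feature fires only if its pre-activation exceeds its threshold.
        return np.where(pre > self.threshold, pre, 0.0)

    def decode(self, f):
        """Feature activations (..., d_sae) -> reconstructed activations (..., d_model)."""
        return f @ self.W_dec + self.b_dec

    def __call__(self, x):
        return self.decode(self.encode(x))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    d_model, d_sae = 16, 64  # toy sizes; real SAEs are far wider
    sae = JumpReLUSAE(
        W_enc=rng.normal(scale=0.3, size=(d_model, d_sae)),
        b_enc=np.zeros(d_sae),
        W_dec=rng.normal(scale=0.3, size=(d_sae, d_model)),
        b_dec=np.zeros(d_model),
        threshold=np.full(d_sae, 1.0),
    )
    x = rng.normal(size=(4, d_model))  # stand-in for 4 tokens' activations
    f = sae.encode(x)
    print("active features per token (L0):", (f > 0).sum(axis=-1))
    print("reconstruction MSE:", float(np.mean((sae(x) - x) ** 2)))
```

With random weights the reconstruction is meaningless; the point is the shape of the computation: a wide, mostly-zero feature vector sits between the encoder and the decoder.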

Key advancements over Gemma Scope (for Gemma 2) include retrained SAEs/transcoders on Gemma 3, support for skip-transcoders and cross-layer transcoders to better interpret multi-step computations and distributed algorithms, and coverage of the full model family for broader safety research.
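The difference between an SAE and a (skip-)transcoder is easiest to see in code. A transcoder reads an MLP block's input and is trained to predict that block's output through a sparse feature layer; a skip-transcoder adds a dense linear path (`W_skip` below) alongside the sparse one, so the features do not have to absorb the MLP's purely linear part. This NumPy sketch assumes a JumpReLU activation and illustrative parameter names; it shows only the forward pass and the reconstruction term of the loss, not training, and is not Gemma Scope 2's code.

```python
import numpy as np


def jumprelu(pre, threshold):
    """Zero out pre-activations at or below their per-feature threshold."""
    return np.where(pre > threshold, pre, 0.0)


class SkipTranscoder:
    """Forward pass of a (skip-)transcoder: MLP input -> predicted MLP output.

    With W_skip=None this reduces to a plain transcoder.
    """

    def __init__(self, W_enc, b_enc, W_dec, b_dec, threshold, W_skip=None):
        self.W_enc, self.b_enc = W_enc, b_enc  # (d_model, d_tc), (d_tc,)
        self.W_dec, self.b_dec = W_dec, b_dec  # (d_tc, d_model), (d_model,)
        self.threshold = threshold             # (d_tc,)
        self.W_skip = W_skip                   # (d_model, d_model) or None

    def __call__(self, mlp_in):
        f = jumprelu(mlp_in @ self.W_enc + self.b_enc, self.threshold)
        mlp_out_hat = f @ self.W_dec + self.b_dec
        if self.W_skip is not None:
            # Dense linear shortcut, computed from the same MLP input.
            mlp_out_hat = mlp_out_hat + mlp_in @ self.W_skip
        return mlp_out_hat, f


def transcoder_mse(model, mlp_in, mlp_out):
    """Reconstruction term of the training loss: error in predicting the true MLP output."""
    pred, _ = model(mlp_in)
    return float(np.mean((pred - mlp_out) ** 2))
```

In training, this MSE term would be combined with a sparsity penalty on the feature activations `f`; that part is omitted here.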

The suite empowers the AI safety community to trace potential risks across the entire ‘brain’ of the model, advancing mechanistic interpretability at scale.

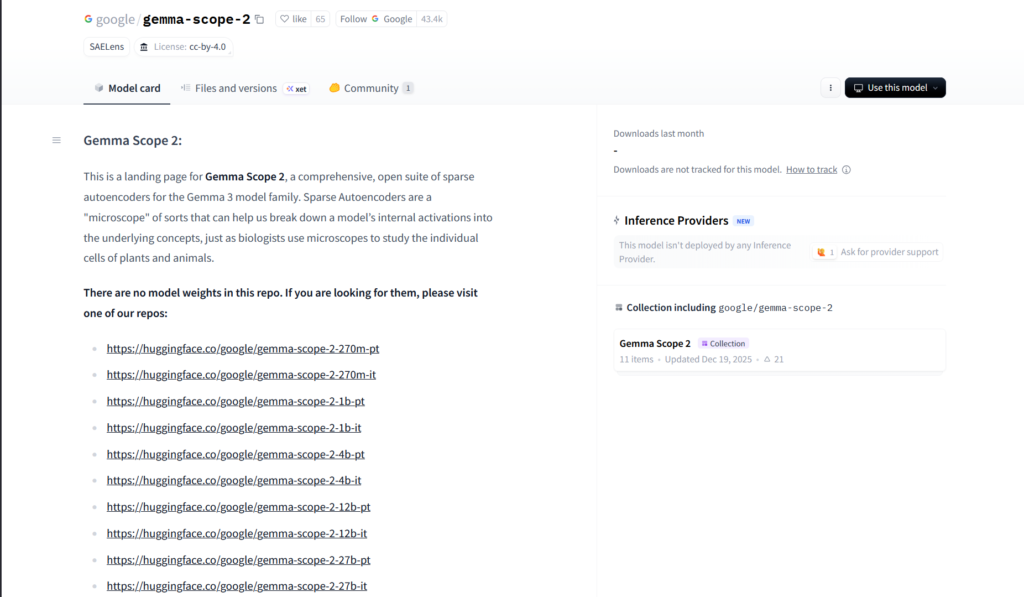

The suite is fully open under permissive licenses: weights are hosted on Hugging Face in separate repos per variant (e.g., gemma-scope-2-27b-pt/it), and interactive demos on Neuronpedia, Colab tutorials, a technical paper, and a blog post are also available.

As the largest open interpretability release by an AI lab to date, it accelerates transparent, safe AI development by making complex model behavior understandable and auditable.

Key Features

- Sparse Autoencoders (SAEs): Decompose model activations into interpretable features across all layers of Gemma 3 models

- Transcoders: Enable detailed analysis of internal computations and multi-step reasoning paths

- Skip-Transcoders and Cross-Layer Support: Improved handling of distributed algorithms and complex behaviors

- Full Gemma 3 Coverage: Trained on models from 270M to 27B parameters, pre-trained and instruction-tuned variants

- Interactive Demos: Explore features and activations via Neuronpedia platform

- Colab Tutorials: Step-by-step notebooks for loading, using, and training SAEs in JAX/PyTorch

- Technical Resources: Full report, blog post, and code for reproducing experiments

- Mechanistic Interpretability Focus: Trace risks like hallucinations, jailbreaks, and sycophancy at scale

- Open and Permissive Licensing: Weights and tools freely available for research and safety work
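The "Cross-Layer Support" feature refers to cross-layer transcoders (CLTs), in which a feature reads from one layer and can write to the MLP outputs of that layer and every later layer; this is what lets a single feature represent a computation spread across depth. The sketch below is a simplified forward pass under assumed shapes and names (per-pair decoder matrices stored in a dict, no decoder biases); it is not the Gemma Scope 2 implementation.

```python
import numpy as np


def jumprelu(pre, threshold):
    """Zero out pre-activations at or below their per-feature threshold."""
    return np.where(pre > threshold, pre, 0.0)


def clt_forward(resid, W_enc, b_enc, threshold, W_dec):
    """Simplified cross-layer transcoder forward pass.

    resid[l]                         : (batch, d_model) residual stream read at layer l
    W_enc[l], b_enc[l], threshold[l] : encoder for layer l's features
    W_dec[(src, dst)]                : (n_feat, d_model) decoder from layer-src features
                                       to layer-dst MLP output, defined for src <= dst
    Returns the predicted MLP output at every layer and the per-layer features.
    """
    n_layers = len(resid)
    feats = [jumprelu(resid[l] @ W_enc[l] + b_enc[l], threshold[l]) for l in range(n_layers)]
    outputs = []
    for dst in range(n_layers):
        out = np.zeros_like(resid[dst])
        # Every feature from this layer or an earlier one may write to layer dst.
        for src in range(dst + 1):
            out = out + feats[src] @ W_dec[(src, dst)]
        outputs.append(out)
    return outputs, feats
```

The triangular structure (`src <= dst`) is the key design choice: a feature's decoder weights can never reach back to earlier layers, which keeps the replacement consistent with the model's causal order.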

Price Plans

- Free ($0): Fully open-source suite with all SAEs, transcoders, weights, code, demos, and tutorials available on Hugging Face and Google resources; no fees or subscriptions

- Enterprise/Research (Custom): Potential premium support or cloud access through Google Cloud/DeepMind partnerships (not required for core use)

Pros

- Unprecedented scale: Largest open interpretability suite released by an AI lab, covering full Gemma 3 family

- Advanced techniques: Includes cutting-edge skip-transcoders and cross-layer methods for complex behavior analysis

- Community empowerment: Fully open resources accelerate AI safety research and transparency

- Practical tools: Interactive demos and tutorials make it accessible for researchers

- Safety impact: Enables auditing agents, debugging, and mitigating emergent risks

- Builds on proven work: Extends successful Gemma Scope for Gemma 2 with better coverage

- No cost barrier: Completely free for academic and safety-focused use

Cons

- Technical expertise required: Best suited for researchers familiar with mechanistic interpretability

- No end-user app: Primarily for advanced analysis, not casual or production use

- Compute-heavy: Loading and running on large Gemma 3 models needs significant hardware

- Recent release: Limited community examples, extensions, or adoption metrics yet

- Model-specific: Tailored to Gemma 3; not directly applicable to other architectures without adaptation

- Interpretation challenges: Even with these tools, making sense of the very large number of learned features remains complex

- No hosted inference: Requires local or cloud setup for full use
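The "compute-heavy" point can be quantified with back-of-the-envelope arithmetic. The sketch below estimates memory for the 27B model's weights in bfloat16 and for one JumpReLU-style SAE stored in float32. The hidden size of 5376 for Gemma 3 27B and the SAE width of 65,536 are assumptions chosen for illustration (check the model config and the widths actually released); activations, KV cache, and framework overhead are ignored.

```python
GIB = 2 ** 30


def model_weight_bytes(n_params, bytes_per_param=2):
    """Memory for model weights; 2 bytes/param corresponds to bfloat16."""
    return n_params * bytes_per_param


def sae_param_count(d_model, d_sae):
    """Parameters of a JumpReLU SAE: W_enc, W_dec, b_enc, threshold, b_dec."""
    return 2 * d_model * d_sae + 2 * d_sae + d_model


if __name__ == "__main__":
    n_params = 27e9   # Gemma 3 27B, approximate parameter count
    d_model = 5376    # assumed hidden size of Gemma 3 27B
    d_sae = 65_536    # hypothetical SAE width, for illustration only

    model_gib = model_weight_bytes(n_params) / GIB
    sae_gib = sae_param_count(d_model, d_sae) * 4 / GIB  # float32 = 4 bytes
    print(f"model weights (bf16): {model_gib:.1f} GiB")
    print(f"one SAE (fp32):       {sae_gib:.2f} GiB")
```

Under these assumptions the base model alone needs roughly 50 GiB, and each SAE adds about 2.6 GiB, so analyzing many layers of the largest model at once quickly calls for high-memory accelerators or offloading.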

Use Cases

- AI safety research: Analyze emergent behaviors, jailbreaks, hallucinations, and sycophancy in Gemma 3

- Mechanistic interpretability studies: Decompose activations into concepts and trace reasoning paths

- Model auditing and debugging: Inspect internal states for bias, misalignment, or failure modes

- Agent behavior analysis: Understand multi-step computations in AI agents built on Gemma 3

- Academic and open research: Reproduce experiments, extend SAEs, or develop new interpretability methods

- Risk mitigation development: Design interventions based on discovered features and circuits

- Community benchmarking: Compare interpretability across Gemma 3 sizes and variants
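The risk-mitigation use case usually starts from two simple operations on a discovered feature: steering (adding its decoder direction to the activations) and ablation (removing its contribution). A minimal NumPy sketch, assuming an SAE exposed as `encode`/`decode` functions and a decoder matrix with one row per feature; in a real experiment these would be applied inside the model's forward pass (for example via a PyTorch forward hook), which is not shown here.

```python
import numpy as np


def steer(resid, W_dec, feature_idx, alpha):
    """Push activations along one feature's unit-normalised decoder direction."""
    direction = W_dec[feature_idx] / np.linalg.norm(W_dec[feature_idx])
    return resid + alpha * direction


def ablate_feature(x, encode, decode, feature_idx):
    """Zero one feature while keeping the SAE's reconstruction error term,
    so the only change to x is that feature's decoded contribution."""
    f = encode(x)
    error = x - decode(f)  # the part of x the SAE fails to reconstruct
    f_ablated = f.copy()
    f_ablated[..., feature_idx] = 0.0
    return decode(f_ablated) + error
```

Keeping the error term is a deliberate choice: without it, the intervention would also inject the SAE's reconstruction error, confounding the effect of removing the single feature.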

Target Audience

- AI safety researchers: Studying and mitigating risks in large language models

- Mechanistic interpretability experts: Working on sparse autoencoders and feature analysis

- Academic institutions: Conducting open research on model internals

- AI alignment teams: Auditing and understanding emergent behaviors

- Independent developers/researchers: Experimenting with open interpretability tools

- Organizations building on Gemma 3: Ensuring transparency and safety in deployments

How To Use

- Access resources: Visit huggingface.co/google/gemma-scope-2 or the deepmind.google blog for links

- Download weights: Get specific model (e.g., gemma-scope-2-27b-pt) from Hugging Face repos

- Set up environment: Use the provided Colab notebooks or install the dependencies (JAX/PyTorch)

- Load SAE/transcoder: Follow tutorials to load and run on Gemma 3 activations

- Analyze features: Explore activations, visualize concepts, and trace behaviors

- Try interactive demo: Use Neuronpedia.org/gemma-scope-2 for browser-based exploration

- Extend research: Reproduce experiments or train custom SAEs with guides
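Once feature activations are available (the "Load SAE/transcoder" and "Analyze features" steps above), a common first analysis is to list each token's most active features and, for a given feature, the tokens that activate it most strongly; Neuronpedia-style dashboards are built around the latter view. The helper names below are hypothetical and operate on a plain `(n_tokens, d_sae)` array, such as the output of an SAE encoder.

```python
import numpy as np


def top_features_per_token(feature_acts, tokens, k=3):
    """For each token, the k most active features as (feature_id, activation) pairs.
    Features that did not fire (activation 0) are dropped."""
    results = []
    for tok, acts in zip(tokens, feature_acts):
        top = np.argsort(acts)[::-1][:k]
        results.append((tok, [(int(i), float(acts[i])) for i in top if acts[i] > 0]))
    return results


def max_activating_tokens(feature_acts, tokens, feature_id, k=5):
    """The k tokens (with positions) on which one feature fires most strongly."""
    col = feature_acts[:, feature_id]
    top = np.argsort(col)[::-1][:k]
    return [(int(pos), tokens[pos], float(col[pos])) for pos in top if col[pos] > 0]


if __name__ == "__main__":
    tokens = ["The", " cat", " sat"]
    acts = np.array([
        [0.0, 2.0, 0.0, 0.5],
        [3.0, 0.0, 1.0, 0.0],
        [0.0, 0.0, 4.0, 0.2],
    ])
    print(top_features_per_token(acts, tokens, k=2))
    print(max_activating_tokens(acts, tokens, feature_id=2))
```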

How we rated Gemma Scope 2

- Performance: 4.8/5

- Accuracy: 4.7/5

- Features: 4.9/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.3/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.5/5

- Integration: 4.7/5

- Overall Score: 4.8/5

Gemma Scope 2 integration with other tools

- Hugging Face: Model weights and collections hosted for easy download and community use

- Google Colab: Official notebooks for loading, running, and experimenting with SAEs/transcoders

- Neuronpedia: Interactive web demo for exploring and visualizing Gemma Scope 2 features

- JAX/PyTorch Frameworks: Native support for analysis and custom training of interpretability tools

- Gemma 3 Ecosystem: Direct compatibility with Gemma 3 models from Google/Kaggle/Hugging Face
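Of these integrations, Hugging Face is where the weights come from. The sketch below assumes that each SAE is stored as a NumPy `.npz` archive with the keys used by the original Gemma Scope (`W_enc`, `b_enc`, `W_dec`, `b_dec`, `threshold`); Gemma Scope 2 may use a different file format, key names, or directory layout, so check the repository's model card first. `hf_hub_download` is a real `huggingface_hub` function; the repo name comes from this page, while the file path is a placeholder you must fill in yourself.

```python
import numpy as np

# Assumed key names, taken from the original Gemma Scope release.
EXPECTED_KEYS = ("W_enc", "b_enc", "W_dec", "b_dec", "threshold")


def load_sae_params(path_or_file):
    """Load SAE parameters from a local .npz file into a dict of arrays."""
    with np.load(path_or_file) as data:
        missing = [k for k in EXPECTED_KEYS if k not in data.files]
        if missing:
            raise KeyError(f"missing SAE parameters: {missing}")
        return {k: data[k] for k in EXPECTED_KEYS}


def download_sae_params(repo_id, filename):
    """Fetch one parameter file from the Hugging Face Hub (cached locally) and load it."""
    # Imported here so load_sae_params works without huggingface_hub installed.
    from huggingface_hub import hf_hub_download

    local_path = hf_hub_download(repo_id=repo_id, filename=filename)
    return load_sae_params(local_path)


# Example (not run here; the filename is a placeholder, not a real path):
# params = download_sae_params("google/gemma-scope-2-27b-pt", "<path/to/params.npz>")
```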

Best prompts optimised for Gemma Scope 2

- N/A: Gemma Scope 2 is an interpretability toolkit that uses sparse autoencoders and transcoders to analyze Gemma 3 model internals, not a prompt-based generative tool; it works on model activations and features directly through code and notebooks rather than text prompts.
