What is Genmo?

Genmo is a research lab and platform building open-source frontier models for video generation, with Mochi 1 as its flagship text-to-video model for high-fidelity visual storytelling.

When was Mochi 1 released?

The Mochi 1 preview was released on October 22, 2024, as an open-source text-to-video model under the Apache 2.0 license.

Is Genmo free to use?

Yes. Mochi 1 is free and open source: you can run it locally, customize it, or test it in the playground without paying a license or subscription fee.

What resolution does Mochi 1 support?

The current preview generates 480p video; an HD (720p) upgrade was planned for late 2024 or later.

Who founded Genmo?

Genmo was founded in 2022 by brothers Paras Jain (CEO) and Ajay Jain (CTO), AI researchers from UC Berkeley and Google Brain.

How good is Mochi 1 compared to competitors?

It offers state-of-the-art motion quality and prompt adherence among open models, rivaling closed systems like Runway and Kling in preliminary evaluations.

Can I run Genmo models locally?

Yes. The full code and weights are available on GitHub and Hugging Face for local inference on compatible hardware.

What funding has Genmo raised?

Genmo raised approximately $28.4M to $30M in Series A funding in 2024, led by NEA.

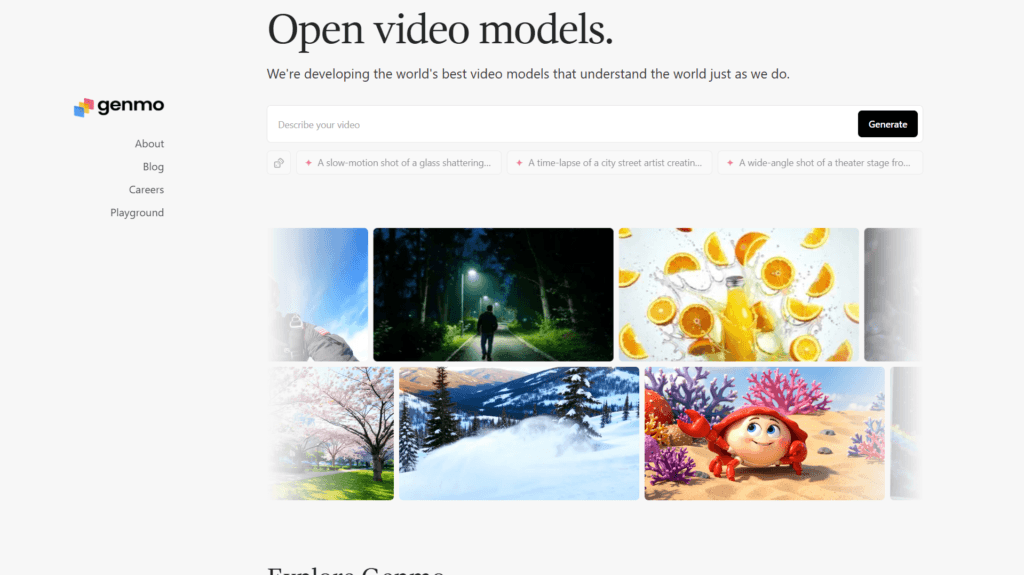

Genmo

About This AI

Genmo is a research lab and creative platform focused on building advanced open-source models for video generation to advance multimodal AGI.

Its flagship Mochi 1 is a cutting-edge open-source text-to-video model (preview released October 2024) that transforms natural language prompts into engaging, high-fidelity visual stories with superior motion quality and prompt adherence.

The model uses an asymmetric design for efficient deployment, supports 480p generation (with HD upgrades planned), and is licensed under Apache 2.0 for free individual and commercial use.

Users can run Mochi 1 locally from the GitHub repo, customize it through ComfyUI, or test it in the Genmo playground for quick experimentation.

The platform emphasizes democratizing high-quality video synthesis, enabling creators to generate content with minimal technical barriers through simple prompts.

Founded in 2022 by brothers Paras Jain (CEO) and Ajay Jain (CTO), Genmo raised a Series A of roughly $28.4M to $30M in 2024 from NEA and others.

It positions itself as a leader in open video AI, rivaling closed models like Runway and Kling with its focus on motion realism and accessibility.

It is ideal for filmmakers, content creators, researchers, and developers building AI-driven visual storytelling tools.

Key Features

- Text-to-Video Generation: Create high-fidelity videos from natural language prompts with strong motion and prompt adherence

- Open-Source Mochi 1 Model: Apache 2.0 licensed for free use, customization, and commercial applications

- Local and Playground Access: Run model via GitHub repo/ComfyUI or test instantly in Genmo playground

- Asymmetric Model Design: Efficient memory use for deployment while maintaining quality

- High Motion Fidelity: Superior physics and movement realism in generated videos

- Customizable Workflows: Fine-tuning support (e.g., LoRA) and integration with tools like ComfyUI

- Preview Capabilities: Generate 480p videos with planned HD/720p upgrades

- Research Focus: Advances in multimodal generative AI for video world understanding

Price Plans

- Free ($0): Full open-source access to Mochi 1 model, code, weights, and playground testing; no subscription required

- Potential Premium (Not specified): Future hosted or enhanced features may introduce paid options (none confirmed as of 2026)

Pros

- Fully open-source: Free model weights, code, and permissive license for broad innovation

- Strong motion quality: Competitive with closed leaders in visual storytelling and physics

- Accessible for creators: Simple prompt-based generation in the playground, with no local setup needed

- Community-driven: Supports local running, fine-tuning, and contributions via GitHub

- Efficient design: Lower deployment costs compared to many large video models

- Rapid evolution: Backed by strong funding and research team for ongoing improvements

- Commercial flexibility: Apache 2.0 allows full use in products or services

Cons

- Preview stage limitations: Current 480p; HD and advanced features still in development

- Requires hardware for local use: High-end GPU needed for smooth local inference

- Hosted free tier unclear: The playground may have usage limits, so heavy use favors a local or custom setup

- Recent release: User base and ecosystem integrations still growing

- Potential artifacts: Complex prompts may show inconsistencies in early previews

- Focused on video: Less emphasis on image-only or other modalities compared to multi-tool suites

Use Cases

- Video content creation: Generate short films, ads, or social clips from text ideas

- Creative storytelling: Turn scripts or concepts into visual prototypes quickly

- Research and experimentation: Fine-tune model or test new video AI techniques

- Game and animation prototyping: Create motion sequences or asset previews

- Marketing visuals: Produce engaging promotional videos without heavy production

- Educational content: Animate explanations or simulations for teaching

- Developer tools: Integrate into apps or build custom generative pipelines

Target Audience

- Content creators and filmmakers: Seeking fast AI video generation for ideas

- AI researchers and developers: Working on video models or open-source contributions

- Marketers and advertisers: Needing quick visual content for campaigns

- Animators and game devs: Prototyping motion and scenes

- Creative hobbyists: Experimenting with generative AI video

- Open-source enthusiasts: Running/customizing frontier models locally

How To Use

- Visit site: Go to genmo.ai and explore playground for quick testing

- Try playground: Enter text prompt in web interface to generate video preview

- Local setup: Clone the GitHub repo (github.com/genmoai/mochi) and install its dependencies

- Download model: Get weights from Hugging Face (genmo/mochi-1-preview)

- Run inference: Use quickstart script to generate videos from prompts

- Customize: Fine-tune with LoRA or integrate in ComfyUI workflows

- Experiment: Adjust parameters for motion quality or style
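The "Download model" step above can be sketched in Python. This is a minimal sketch, not Genmo's own tooling: it assumes the `huggingface_hub` package is installed and uses its `snapshot_download` function with the repo ID from the list above. The `weights_dir` helper and the `weights/` folder name are hypothetical choices made for illustration.

```python
from pathlib import Path

# Repo ID taken from the "Download model" step above.
REPO_ID = "genmo/mochi-1-preview"


def weights_dir(base: str = "weights") -> Path:
    """Return the local folder for the checkpoint (hypothetical layout)."""
    return Path(base) / REPO_ID.split("/")[-1]


def download_weights(base: str = "weights") -> Path:
    """Fetch every file in the model repo into weights_dir(base)."""
    # Imported lazily so weights_dir() works without the package installed.
    from huggingface_hub import snapshot_download  # pip install huggingface_hub

    target = weights_dir(base)
    target.mkdir(parents=True, exist_ok=True)
    snapshot_download(repo_id=REPO_ID, local_dir=str(target))
    return target
```

Calling `download_weights()` once should leave the files under `weights/mochi-1-preview`. The checkpoint is large, so expect the download to take a while and check free disk space first.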

How we rated Genmo

- Performance: 4.6/5

- Accuracy: 4.5/5

- Features: 4.7/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.4/5

- Customization: 4.8/5

- Data Privacy: 4.9/5

- Support: 4.3/5

- Integration: 4.5/5

- Overall Score: 4.7/5
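For readers who want to check the arithmetic, the snippet below computes the unweighted mean of the nine category scores listed above. The page does not say how the overall score is derived, so equal weighting is an assumption here. The plain average comes out slightly below the published 4.7, which suggests the overall score involves some weighting or editorial rounding.

```python
from statistics import mean

# Category scores copied from the list above (out of 5).
scores = {
    "Performance": 4.6,
    "Accuracy": 4.5,
    "Features": 4.7,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 4.4,
    "Customization": 4.8,
    "Data Privacy": 4.9,
    "Support": 4.3,
    "Integration": 4.5,
}

# Assumption: every category counts equally.
average = mean(scores.values())
print(f"Unweighted mean of {len(scores)} categories: {average:.2f}")
```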

Genmo integration with other tools

- Hugging Face: Model weights and inference support for easy download and community use

- ComfyUI: Direct integration for custom workflows and node-based generation

- GitHub Repository: Full code, demos, and community contributions for developers

- Local Hardware: Runs on personal GPUs; no cloud required for core model

- Third-Party Tools (Potential): Compatible with video editors like Premiere or DaVinci via exported clips
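For the Hugging Face route, one option is the `diffusers` library. The sketch below is an illustration under stated assumptions, not Genmo's documented quickstart: it assumes PyTorch, a recent `diffusers` release that includes `MochiPipeline`, and a CUDA GPU with enough memory. The function name `generate_clip`, the default frame count, and the frame rate are hypothetical choices.

```python
def generate_clip(prompt: str, out_path: str = "mochi.mp4",
                  num_frames: int = 84, fps: int = 30) -> str:
    """Generate one clip from a text prompt and write it to out_path."""
    if not prompt.strip():
        raise ValueError("prompt must not be empty")
    if num_frames < 1 or fps < 1:
        raise ValueError("num_frames and fps must be positive")

    # Heavy imports are deferred so input validation works without a GPU stack.
    import torch
    from diffusers import MochiPipeline
    from diffusers.utils import export_to_video

    pipe = MochiPipeline.from_pretrained(
        "genmo/mochi-1-preview", torch_dtype=torch.bfloat16
    )
    # Both calls trade generation speed for lower peak GPU memory.
    pipe.enable_model_cpu_offload()
    pipe.enable_vae_tiling()

    frames = pipe(prompt, num_frames=num_frames).frames[0]
    export_to_video(frames, out_path, fps=fps)
    return out_path
```

A call such as `generate_clip("A koi pond at dawn, petals falling")` would write `mochi.mp4` to the working directory. The first run downloads the model, and generation can take several minutes depending on the GPU.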

Best prompts optimised for Genmo

- A serene Japanese garden at cherry blossom season, petals gently falling into a koi pond, slow cinematic pan across traditional architecture, soft morning light, ultra realistic, 8k detail

- Epic space battle between starships in a nebula, lasers flashing, explosions in slow motion, dynamic camera tracking through debris, sci-fi cinematic style, high detail

- A cute animated cat playing piano in a cozy room, jazz music vibe, colorful cartoon style, smooth animation, whimsical and joyful

- Surreal dreamscape of floating islands connected by waterfalls, steampunk airships drifting by, golden hour lighting, fantasy art style, intricate details

- Realistic urban street scene in rainy Tokyo at night, neon reflections on wet pavement, people with umbrellas, atmospheric cyberpunk mood, cinematic quality
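The example prompts above share a loose pattern: a subject, some motion, a camera move, lighting, and style tags, joined by commas. The helper below only illustrates that pattern. `build_prompt` and its parameter names are hypothetical, and nothing here is an official Genmo prompt format.

```python
def build_prompt(subject: str, motion: str = "", camera: str = "",
                 lighting: str = "", style: str = "") -> str:
    """Join the non-empty prompt components with commas."""
    if not subject.strip():
        raise ValueError("a subject is required")
    parts = [subject, motion, camera, lighting, style]
    # Drop components that were left blank and trim stray whitespace.
    return ", ".join(p.strip() for p in parts if p.strip())


prompt = build_prompt(
    subject="A serene Japanese garden at cherry blossom season",
    motion="petals gently falling into a koi pond",
    camera="slow cinematic pan across traditional architecture",
    lighting="soft morning light",
    style="ultra realistic",
)
print(prompt)
```

Keeping the components separate makes it easy to vary one element, such as the camera move, while holding the rest of the scene fixed.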

