What is GLM-130B?

GLM-130B is an open bilingual (English and Chinese) 130 billion parameter pre-trained language model released by Tsinghua KEG in 2022, known for strong few-shot learning and bilingual capabilities.

When was GLM-130B released?

The project was conceived in late 2021, training started in May 2022, and the model was officially open-sourced on August 4, 2022.

Is GLM-130B free to use?

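Yes. The code and weights are openly released, with access through Hugging Face and GitHub, and there is no cost to download the model or run it locally. Note that the repository code is Apache-2.0 licensed while the weights fall under a separate model license, so review its terms before any commercial use.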

Who developed GLM-130B?

It was developed by Tsinghua University's Knowledge Engineering Group (KEG) with collaborators including Zhipu.AI.

What are the main strengths of GLM-130B?

Excellent bilingual performance, strong few-shot/zero-shot learning, and competitive results on benchmarks at the time of release.

Is GLM-130B still relevant in 2026?

It has been largely superseded by newer models such as GLM-4, Llama 3, and Qwen, but remains useful for research and bilingual studies.

How much hardware does GLM-130B require?

Full inference needs multiple high-end GPUs (e.g., 8x A100 40GB or equivalent); quantization or CPU offloading can reduce requirements.

Where can I try GLM-130B?

Check the Hugging Face Space zai-org/GLM-130B for a demo (it may be limited), or run the model locally with the project's inference code if you have the hardware.

GLM-130B

About This AI

GLM-130B is an open bilingual (English and Chinese) bidirectional dense pre-trained language model with 130 billion parameters, developed by Tsinghua KEG and collaborators.

Released in 2022 as part of the General Language Model (GLM) series, it was pre-trained using the GLM algorithm on a massive corpus of roughly 400 billion tokens (200 billion each for English and Chinese).

The model demonstrates strong few-shot learning across tasks such as question answering, sentiment analysis, and natural language inference, often matching or approaching the performance of comparable or larger closed models of the time, such as GPT-3 175B.

It supports bilingual understanding with high proficiency in both languages, making it suitable for cross-lingual applications and Chinese-dominant tasks where many Western models fall short.

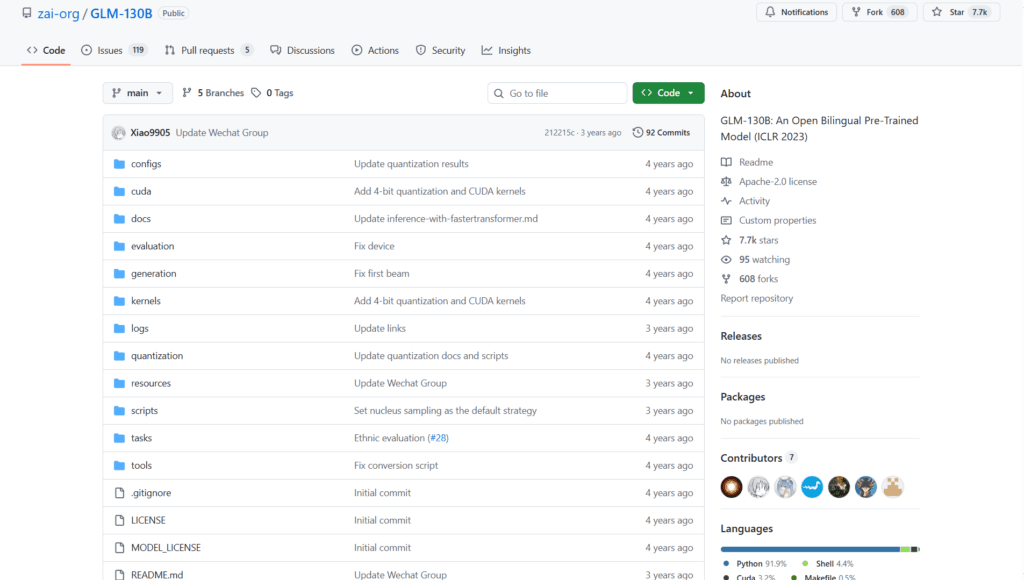

The project was conceived in late 2021, trained on sponsored clusters starting May 2022, and open-sourced under a permissive license with weights available on Hugging Face and GitHub.

While superseded by newer models in the GLM/ChatGLM series (like GLM-4), GLM-130B remains a landmark open model for its scale and bilingual focus, influencing subsequent research in large-scale pre-training.

It requires significant hardware (e.g., multiple high-end GPUs) for inference due to its size but can be quantized or run with optimizations.
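A back-of-the-envelope estimate shows where the hardware requirement comes from. The sketch below counts weight storage only; it ignores activations, attention caches, and framework overhead, so real usage is higher. The byte widths are the standard ones for each precision, not figures taken from the GLM-130B project, and the helper names are illustrative.

```python
import math

# Rough weight-only memory estimate for a 130B-parameter model.
# Assumption: activations, caches, and framework overhead are ignored.
PARAMS = 130e9  # parameter count stated above

BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, precision: str) -> float:
    """Return weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[precision] / 1e9


def min_gpus(params: float, precision: str, gpu_gb: float) -> int:
    """Smallest GPU count whose combined memory holds the weights."""
    return math.ceil(weight_memory_gb(params, precision) / gpu_gb)


for precision in BYTES_PER_PARAM:
    gb = weight_memory_gb(PARAMS, precision)
    gpus = min_gpus(PARAMS, precision, 40)
    print(f"{precision}: {gb:.0f} GB of weights, at least {gpus} x 40 GB GPUs")
```

At FP16 the weights alone come to about 260 GB, which is consistent with an 8 x 40 GB server (320 GB in total) being the usual reference setup: the remainder is headroom for everything the estimate leaves out.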

The Hugging Face Space (zai-org/GLM-130B) provides a demo interface, though it may be limited or archived given the model’s age and evolution to newer GLM versions.

Key Features

- Bilingual pre-training: Balanced high-quality training on English and Chinese corpora for strong performance in both languages

- 130 billion parameters: Dense bidirectional architecture enabling advanced few-shot and zero-shot learning

- General Language Model algorithm: Uses GLM pre-training objective for better masked modeling and generation

- Few-shot learning excellence: Competitive results on SuperGLUE, MMLU-like tasks, and cross-lingual benchmarks at release

- Open weights and code: Fully available on Hugging Face and GitHub for research and fine-tuning

- High Chinese proficiency: Superior handling of Chinese language tasks compared to many English-centric models

- Support for downstream tasks: Adaptable for QA, classification, translation, summarization, and more
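The "General Language Model algorithm" feature refers to autoregressive blank infilling: spans of the input are replaced with a mask token, and the model learns to regenerate those spans one token at a time. The following is a minimal sketch of how one such training example might be assembled. The token names (`[MASK]`, `[sop]`, `[eop]`), the helper name, and the hand-picked span positions are illustrative assumptions, and details such as random span sampling, span shuffling, and position encoding are left out.

```python
from typing import List, Tuple

MASK, SOP, EOP = "[MASK]", "[sop]", "[eop]"  # illustrative token names


def build_infilling_example(
    tokens: List[str], spans: List[Tuple[int, int]]
) -> Tuple[List[str], List[str], List[str]]:
    """Build (part_a, decoder_input, targets) for blank infilling.

    `spans` are sorted, non-overlapping (start, end) pairs, end exclusive.
    Part A is the corrupted text the model reads bidirectionally; the
    decoder input and targets describe the spans generated left to right.
    """
    part_a: List[str] = []
    decoder_input: List[str] = []
    targets: List[str] = []
    cursor = 0
    for start, end in spans:
        part_a.extend(tokens[cursor:start])
        part_a.append(MASK)  # each span collapses to a single mask token
        span = tokens[start:end]
        decoder_input.extend([SOP] + span)  # span shifted right by one
        targets.extend(span + [EOP])        # next-token prediction targets
        cursor = end
    part_a.extend(tokens[cursor:])
    return part_a, decoder_input, targets


tokens = "the capital of France is Paris".split()
part_a, dec_in, tgt = build_infilling_example(tokens, [(1, 2), (5, 6)])
print(part_a)  # corrupted context
print(dec_in)  # what the model is fed while generating the spans
print(tgt)     # what it is trained to predict at each step
```

The same mechanism covers both blank filling (short spans in the middle of the text) and ordinary generation (one long span at the end), which is why a single objective can serve both uses.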

Price Plans

- Free ($0): Fully open-source model weights, code, and documentation available on Hugging Face and GitHub; no costs for download or local use

- Cloud Inference (Variable): Third-party platforms may offer paid hosted inference; the project itself does not provide one

Pros

- Landmark open model: One of the first massive open bilingual LLMs, inspiring the GLM/ChatGLM family

- Strong bilingual capabilities: Excellent English-Chinese balance and cross-lingual transfer

- Open license: Code is Apache-2.0 licensed and free for research use; weights are covered by a separate model license, so confirm its terms before commercial fine-tuning or deployment

- Influential in Chinese AI: Helped advance open large model development in China

- Proven few-shot performance: Matched closed models of similar era on diverse benchmarks

Cons

- Outdated by 2026 standards: Superseded by newer models such as GLM-4, Llama, and Qwen, which offer better efficiency and capabilities

- Extremely resource-intensive: 130B size requires massive GPU memory for full inference

- Limited modern usage: Most users have migrated to smaller/faster successors like ChatGLM-6B or GLM-4

- No native chat tuning: Base pre-trained model; lacks instruction/chat fine-tuning of later versions

- Demo space may be inactive: Hugging Face Space (zai-org/GLM-130B) shows limited or no recent activity

- No recent updates: Original 2022 release with no major follow-up maintenance

Use Cases

- Bilingual research: Studying cross-lingual transfer and Chinese NLP with a large open model

- Fine-tuning experiments: Adapting for domain-specific tasks in English or Chinese

- Historical benchmarking: Comparing performance of 2022-era open LLMs

- Academic studies: Analyzing pre-training scaling laws and bilingual data effects

- Legacy applications: Maintaining or reproducing existing pipelines that were built around GLM-130B

Target Audience

- AI researchers in NLP: Studying large-scale bilingual pre-training and GLM architecture

- Chinese language model developers: Building upon early open bilingual foundations

- Academic institutions: Using for papers, theses, and experiments on open LLMs

- Open-source enthusiasts: Exploring historical milestones in massive open models

How To Use

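- Download from Hugging Face: Look for the model under THUDM/GLM-130B; the exact repository ID may differ (the demo Space is listed under zai-org)

- Install dependencies: Install PyTorch and the transformers library

- Load model: After `from transformers import AutoModel`, call `model = AutoModel.from_pretrained('THUDM/glm-130b', trust_remote_code=True)`; trust_remote_code=True is a load-time argument that lets the checkpoint's own model code run, and this assumes a transformers-compatible checkpoint is published under that ID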

- Run inference: Use pipeline or custom generation for text completion/filling

- Quantize if needed: Apply 8-bit or 4-bit quantization for reduced memory

- Fine-tune locally: Use PEFT or full fine-tuning on domain data

- Demo via space: Try the Hugging Face Space zai-org/GLM-130B if active
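The quantization step above trades precision for memory. As a rough illustration of the idea (not the project's actual kernels), the sketch below applies symmetric absmax quantization to one row of weights: values are scaled so the largest magnitude maps to the top of the integer range, rounded, and later rescaled. The function names are hypothetical, and plain Python lists stand in for GPU tensors.

```python
from typing import List, Tuple


def absmax_quantize(row: List[float], bits: int = 8) -> Tuple[List[int], float]:
    """Quantize one weight row to signed integers with a single scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for INT8, 7 for INT4
    largest = max(abs(w) for w in row)
    scale = largest / qmax if largest > 0 else 1.0
    # Round to the nearest integer step and clamp to the symmetric range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in row]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Map integers back to approximate floating-point weights."""
    return [q * scale for q in quantized]


row = [0.5, -1.0, 0.25, 0.0]
for bits in (8, 4):
    q, scale = absmax_quantize(row, bits)
    restored = dequantize(q, scale)
    worst = max(abs(a - b) for a, b in zip(row, restored))
    print(f"INT{bits}: {q}, max abs error {worst:.4f}")
```

Fewer bits mean a coarser grid and a larger rounding error per weight, which is the accuracy cost paid for the memory saving.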

How we rated GLM-130B

- Performance: 4.0/5

- Accuracy: 4.2/5

- Features: 3.8/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 3.5/5

- Customization: 4.5/5

- Data Privacy: 5.0/5

- Support: 3.8/5

- Integration: 4.2/5

- Overall Score: 4.1/5

GLM-130B integration with other tools

- Hugging Face Transformers: Loading via AutoModel with trust_remote_code=True, where a compatible checkpoint is available

- GitHub Repository: Original code, training details, and evaluation scripts from Tsinghua KEG

- Local Hardware: Runs on multi-GPU setups with frameworks like DeepSpeed or Megatron

- Research Frameworks: Compatible with PEFT, LoRA, and other fine-tuning libraries

- Older Demo Spaces: Hugging Face Space zai-org/GLM-130B for online testing if still functional

Best prompts optimised for GLM-130B

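- GLM-130B is a base pre-trained model, not instruction-tuned, so phrase prompts as text to complete rather than as instructions. For blank filling, the project documents a [MASK] token for short blanks and [gMASK] for left-to-right generation: The capital of France is [MASK].

- As a bilingual model, it can be prompted in Chinese: 北京是中国的___,请完成句子。 ("Beijing is China's ___, please complete the sentence.")

- For few-shot learning, keep every example in the same format and leave the last one open: Input: Apple is a ___ Output: fruit. Input: Car is a ___ Output: vehicle. Input: Dog is a ___ Output: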

- English continuation: Once upon a time in a faraway land, there lived a brave knight who...

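- Chinese generation: 请写一段关于秋天的优美描写:秋风萧瑟,落叶纷飞,... ("Please write a beautiful description of autumn: the autumn wind is bleak, fallen leaves swirl, ...")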
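Because the model continues text instead of following instructions, few-shot prompts tend to work best when every demonstration uses the same layout and the final line is left open. A small helper along these lines keeps the format consistent; the function name and the `Input:`/`Output:` labels are illustrative choices, not a format the model requires.

```python
from typing import List, Tuple


def build_few_shot_prompt(examples: List[Tuple[str, str]], query: str) -> str:
    """Format demonstrations identically and leave the last output open."""
    blocks = [f"Input: {text}\nOutput: {label}" for text, label in examples]
    blocks.append(f"Input: {query}\nOutput:")
    return "\n\n".join(blocks)


prompt = build_few_shot_prompt(
    [("Apple is a", "fruit"), ("Car is a", "vehicle")],
    "Dog is a",
)
print(prompt)
```

The returned string is passed to the model as-is, and whatever it generates after the final `Output:` is read as the answer.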
