What is GLM-130B?

GLM-130B is an open bilingual (English and Chinese) pre-trained language model with 130 billion parameters, released by Tsinghua KEG in 2022 and known for strong few-shot learning and bilingual capabilities.

When was GLM-130B released?

It was conceived in late 2021, training began in May 2022, and the model was officially open-sourced on August 4, 2022.

Is GLM-130B free to use?

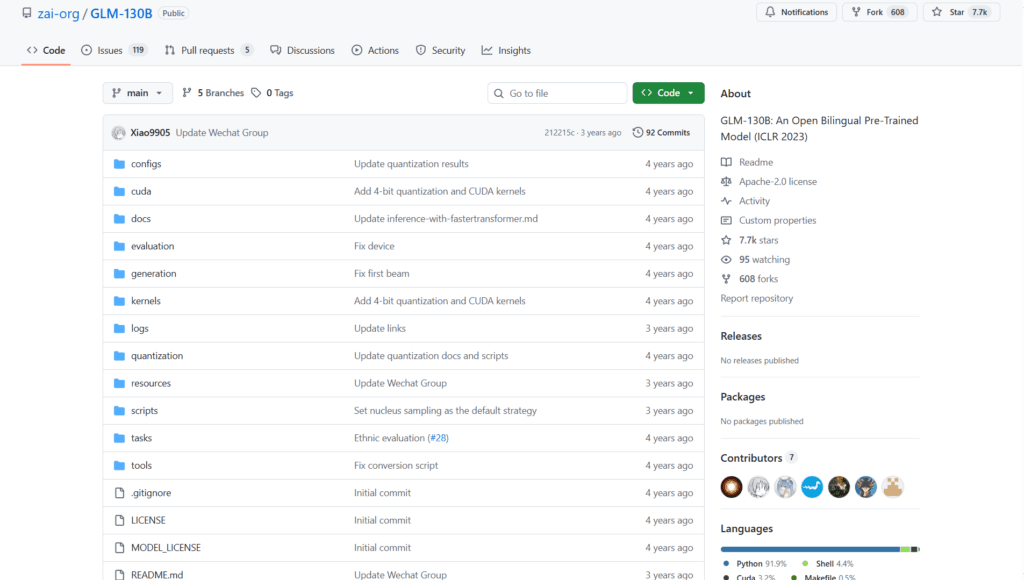

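Yes, there is no cost for download or local use. The code is open-source on GitHub, while the model weights are distributed under a separate model license that restricts some uses (notably commercial use), so review the license terms before deploying it.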

Who developed GLM-130B?

It was developed by Tsinghua University’s Knowledge Engineering Group (KEG) with collaborators including Zhipu.AI.

What are the main strengths of GLM-130B?

Excellent bilingual performance, strong few-shot/zero-shot learning, and competitive results on benchmarks at the time of release.

Is GLM-130B still relevant in 2026?

It has been largely superseded by newer models such as GLM-4, Llama 3, and Qwen, but remains useful for research and bilingual studies.

How much hardware does GLM-130B require?

Full-precision (FP16) inference needs multiple high-end GPUs (e.g., 8x A100 40GB or equivalent); INT8 or INT4 weight quantization or CPU offloading can reduce the requirements.
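
As a rough sanity check on those hardware numbers, the sketch below estimates the memory needed just to hold the weights at different precisions. It is back-of-the-envelope arithmetic, not a measurement: the helper name `weight_memory_gb` is hypothetical, the even split across 8 GPUs is an assumption, and activations, attention caches, and framework overhead are ignored, so real usage will be higher.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (10^9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


NUM_PARAMS = 130e9  # 130 billion parameters, taken from the model name
NUM_GPUS = 8        # assumption: weights split evenly across 8 GPUs

for label, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    total = weight_memory_gb(NUM_PARAMS, bits)
    per_gpu = total / NUM_GPUS
    print(f"{label}: ~{total:.0f} GB total, ~{per_gpu:.1f} GB per GPU")
```

At FP16 the weights alone come to about 260 GB, which is why a single GPU is not enough and why a node with 8x 40GB cards (320 GB in total) is a typical starting point; halving or quartering the bits per weight shrinks that footprint proportionally.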

Where can I try GLM-130B?

Check the Hugging Face Space zai-org/GLM-130B for a demo (availability may be limited), or run the model locally using the inference code from the official GitHub repository.