What is GPT-NeoX?

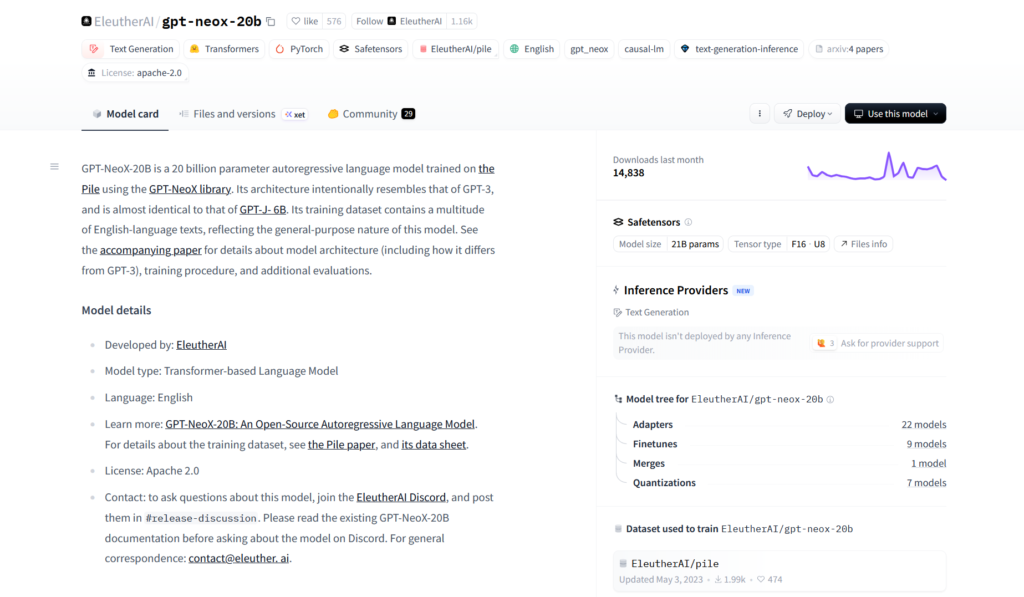

GPT-NeoX is an open-source large language model family from EleutherAI, named after EleutherAI's GPT-NeoX training library. Its flagship model, GPT-NeoX-20B, has 20 billion parameters and was built as a transparent alternative to GPT-3.

When was GPT-NeoX released?

EleutherAI announced GPT-NeoX-20B and released its weights in February 2022. The accompanying paper was published in April 2022.

Is GPT-NeoX free to use?

Yes. It is completely free and open-source under the Apache 2.0 license. The full weights are available on Hugging Face and the training code is on GitHub.

What dataset was GPT-NeoX trained on?

It was trained on The Pile, an 800 GB open-source dataset of diverse text curated by EleutherAI.

How do I run GPT-NeoX locally?

Load it with the Hugging Face transformers library in Python. It needs substantial GPU memory (about 40 GB for the fp16 weights alone), and quantization reduces that requirement.

What are the main strengths of GPT-NeoX?

Strong few-shot performance, code generation, reasoning, full reproducibility, and openly documented training make it well suited to research and local use.

Does GPT-NeoX support fine-tuning?

Yes, the full model, tokenizer, and training code are open, enabling fine-tuning on custom datasets.

How does GPT-NeoX compare to modern models?

It is outperformed by newer open models such as Llama 3 and Mistral 7B (some of them smaller than 20B), but it remains valuable for its transparency and ease of local deployment.

GPT-NeoX

About This AI

GPT-NeoX is an open-source autoregressive large language model family developed by EleutherAI, designed as a fully reproducible alternative to closed models like GPT-3.

The flagship model, GPT-NeoX-20B, has 20 billion parameters and was trained on The Pile (about 800 GB of diverse text). It uses a GPT-3-like decoder-only architecture with rotary positional embeddings, attention and feed-forward sublayers computed in parallel, and other changes aimed at training stability and efficiency.
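To make the rotary idea concrete, here is a minimal NumPy sketch (not code from the GPT-NeoX library). It rotates pairs of dimensions of a query or key vector by position-dependent angles, so the dot product between a rotated query and a rotated key depends only on their relative offset. The function names are hypothetical; the 25% rotary fraction mirrors the `rotary_pct` setting in the published GPT-NeoX-20B configuration, where the remaining dimensions pass through unrotated.

```python
import numpy as np

def rotary_embed(x: np.ndarray, positions: np.ndarray, base: float = 10000.0) -> np.ndarray:
    """Rotate x (shape: seq_len x dim, dim even) by position-dependent angles."""
    half = x.shape[-1] // 2
    # One frequency per dimension pair, from high frequency to low frequency
    inv_freq = base ** (-np.arange(half) / half)
    angles = positions[:, None] * inv_freq[None, :]  # shape: seq_len x half
    cos, sin = np.cos(angles), np.sin(angles)
    x1, x2 = x[:, :half], x[:, half:]
    # Standard 2-D rotation applied to each (x1[i], x2[i]) pair ("rotate half" layout)
    return np.concatenate([x1 * cos - x2 * sin, x1 * sin + x2 * cos], axis=-1)

def partial_rotary(x: np.ndarray, positions: np.ndarray, rotary_pct: float = 0.25) -> np.ndarray:
    """Rotate only the first rotary_pct of the dimensions; pass the rest through unchanged."""
    rot_dim = int(x.shape[-1] * rotary_pct)
    return np.concatenate([rotary_embed(x[:, :rot_dim], positions), x[:, rot_dim:]], axis=-1)

# Usage: the attention score depends on the offset between positions, not their absolute values
rng = np.random.default_rng(0)
q, k = rng.normal(size=(1, 64)), rng.normal(size=(1, 64))

def score(q_pos: int, k_pos: int) -> float:
    return float(partial_rotary(q, np.array([q_pos]))[0] @ partial_rotary(k, np.array([k_pos]))[0])

print(score(3, 1), score(10, 8))  # equal up to floating-point rounding
```

Because rotations preserve vector length, the embedding changes only angles, never magnitudes, which is part of why the scheme is stable in training.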

It performs well on few-shot learning, reasoning, code generation, and general knowledge tasks, with strong results on benchmarks such as LAMBADA (72.0 percent zero-shot accuracy), HellaSwag, and PIQA, and at release it was often competitive with or better than similarly sized proprietary models.

Its key strength is openness: the weights, tokenizer, training code (the GPT-NeoX library), and evaluation harness are all public, so researchers and developers can fine-tune, deploy, or extend the model freely.

Released in February 2022 under the Apache 2.0 license, it remains widely used for research, custom applications, and local inference, and as a base for further open models.

It is available on Hugging Face and loads directly with the transformers library. It supports text generation, continuation, classification, and more, with decoding controls such as temperature, top-k/top-p sampling, and repetition penalty.
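These decoding controls reshape the model's next-token distribution before a token is drawn. The pure-Python sketch below illustrates the usual definitions of temperature, top-k, top-p (nucleus) sampling, and a CTRL-style repetition penalty on a toy list of logits. It is a simplified illustration with hypothetical function names, not the transformers implementation.

```python
import math
import random

def apply_repetition_penalty(logits, previous_ids, penalty=1.2):
    """Make already-generated tokens less likely (penalty > 1 discourages repeats)."""
    out = list(logits)
    for i in set(previous_ids):
        # Shrink positive scores and push negative scores further down
        out[i] = out[i] * penalty if out[i] < 0 else out[i] / penalty
    return out

def sample_next_token(logits, temperature=1.0, top_k=0, top_p=1.0, rng=random):
    """Sample one token id after temperature scaling, top-k and top-p filtering."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Temperature < 1 sharpens the distribution; > 1 flattens it
    scaled = [x / temperature for x in logits]
    # Token ids ordered from most to least likely
    order = sorted(range(len(scaled)), key=lambda i: scaled[i], reverse=True)
    if top_k > 0:
        order = order[:top_k]  # keep only the k highest-scoring tokens
    # Softmax over the remaining candidates (subtract the max for numerical stability)
    m = scaled[order[0]]
    exps = [math.exp(scaled[i] - m) for i in order]
    total = sum(exps)
    # Top-p: keep the smallest prefix whose cumulative probability reaches top_p
    kept_ids, kept_probs, cum = [], [], 0.0
    for i, e in zip(order, exps):
        p = e / total
        kept_ids.append(i)
        kept_probs.append(p)
        cum += p
        if cum >= top_p:
            break
    # random.choices renormalizes the kept probabilities
    return rng.choices(kept_ids, weights=kept_probs, k=1)[0]

logits = [2.0, 1.0, 0.5, -1.0]
penalized = apply_repetition_penalty(logits, previous_ids=[0])
print(penalized)  # token 0's score drops from 2.0 to 2.0 / 1.2
print(sample_next_token(penalized, temperature=0.7, top_k=3, top_p=0.9, rng=random.Random(42)))
```

Setting `top_k=1` (or `top_p` near 0) reduces this to greedy decoding, which is a quick way to check the filtering logic.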

While newer models have surpassed it in scale and performance, GPT-NeoX-20B continues to serve as a foundational open LLM for academic work, efficient deployment on consumer hardware (with quantization), and community experimentation.

Key Features

- 20 billion parameters: Large-scale autoregressive transformer for high-capacity language understanding and generation

- Trained on The Pile: 800 GB diverse, high-quality dataset for broad domain coverage

- Rotary positional embeddings: Encode relative token positions directly in attention, a basis that later context-extension methods build on

- Parallel attention and feed-forward sublayers: Both read the same input in each block, which improves training throughput

- Full open-source stack: Model weights, tokenizer, training code (GPT-NeoX library), and eval harness released

- Few-shot and zero-shot learning: Strong performance on downstream tasks without fine-tuning

- Code generation capabilities: Competitive results on HumanEval and other programming benchmarks

- Text continuation and completion: High-quality generation with controllable sampling methods

- Hugging Face integration: Seamless loading and inference via transformers library

- Quantization support: Runs efficiently on consumer GPUs with 4-bit/8-bit versions available
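The parallel-attention feature listed above concerns how each transformer block wires its two sublayers. The NumPy sketch below contrasts a GPT-3-style sequential pre-LayerNorm block with a GPT-NeoX-style parallel block. The `attn` and `mlp` functions are hypothetical placeholders with random weights (not real attention), and learned LayerNorm parameters are omitted; only the residual wiring is meant to be illustrative.

```python
import numpy as np

rng = np.random.default_rng(0)
D = 8  # toy hidden size (hypothetical)

def layer_norm(x, eps=1e-5):
    # Normalize over the hidden dimension; learned scale and bias omitted
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

# Random weights for the placeholder sublayers
W_attn = rng.normal(size=(D, D)) / np.sqrt(D)
W_in = rng.normal(size=(D, 4 * D)) / np.sqrt(D)
W_out = rng.normal(size=(4 * D, D)) / np.sqrt(4 * D)

def attn(h):
    # Placeholder for the attention sublayer (real attention mixes positions)
    return np.tanh(h @ W_attn)

def mlp(h):
    # Placeholder feed-forward sublayer: expand, ReLU, project back
    return np.maximum(h @ W_in, 0.0) @ W_out

def sequential_block(x):
    # GPT-3 style: the MLP sees the output of the attention sublayer
    h = x + attn(layer_norm(x))
    return h + mlp(layer_norm(h))

def parallel_block(x):
    # GPT-NeoX style: both sublayers read the same input and their outputs are summed.
    # GPT-NeoX uses two separately parameterized LayerNorms here; without learned
    # parameters they coincide in this sketch.
    return x + attn(layer_norm(x)) + mlp(layer_norm(x))

x = rng.normal(size=(5, D))
print(np.abs(parallel_block(x) - sequential_block(x)).max())  # nonzero: the wirings differ
```

Because the two branches no longer depend on each other, their matrix multiplications can be fused or overlapped, which is where the throughput benefit comes from.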

Price Plans

- Free ($0): Fully open-source model weights and tokenizer on Hugging Face and training code on GitHub, all under Apache 2.0; no costs for download or local use

- Cloud Hosting (Variable): Run via paid cloud GPUs (e.g., RunPod, Vast.ai) or hosted APIs if third-party providers offer it

Pros

- Completely open and reproducible: Full transparency in weights, code, data, and training process

- Strong performance at 20B scale: Matched or beat many closed models of similar size upon release

- Community-driven: Backed by EleutherAI's commitment to open research and accessibility

- Versatile for research: Ideal base for fine-tuning, alignment studies, and domain adaptation

- Efficient inference options: Quantized versions enable local running on mid-range hardware

- Context-extension potential: Rotary embeddings are compatible with later techniques, such as position interpolation, for extending the context window

- Permissive license: Apache 2.0 allows commercial, research, and derivative use, subject only to its notice and attribution terms

Cons

- Outdated by 2026 standards: Smaller and weaker than modern 70B+ models such as Llama 3 70B or newer

- High VRAM requirements: Full 20B model needs significant GPU memory without heavy quantization

- Limited context length: Trained with a 2048-token context window; longer inputs require extension techniques or further fine-tuning

- No multimodal support: Pure text model; no vision or audio capabilities

- Training data cutoff: Knowledge limited to what The Pile contains (text collected up to about 2020); no real-time updates

- Slower inference than newer architectures: Lacks efficiency features of later designs such as mixture-of-experts (MoE)

- Fewer fine-tuned variants: Fewer community chat-tuned versions than newer open models

Use Cases

- Academic and AI research: Studying scaling laws, fine-tuning, alignment, or safety experiments

- Local LLM deployment: Run on personal hardware for privacy-focused chatbots or tools

- Code generation and assistance: Power IDE plugins or local coding helpers

- Text completion tasks: Creative writing, story generation, or data augmentation

- Custom domain adaptation: Fine-tune on specialized datasets for niche applications

- Benchmarking and evaluation: Compare open models or test new techniques

- Educational purposes: Teach LLM internals by inspecting/training small-scale versions

Target Audience

- AI researchers and academics: Needing transparent, reproducible large models

- Open-source developers: Building local AI tools or extending base models

- Privacy-focused users: Running LLMs offline without cloud dependency

- Students and educators: Learning about transformer architecture and training

- Indie developers: Prototyping AI features with free high-capacity models

- Organizations avoiding vendor lock-in: Preferring fully open alternatives to closed APIs

How To Use

- Install transformers: pip install transformers torch accelerate

- Load model (fp16 weights, spread across available devices): import torch; from transformers import GPTNeoXForCausalLM, GPTNeoXTokenizerFast; model = GPTNeoXForCausalLM.from_pretrained('EleutherAI/gpt-neox-20b', torch_dtype=torch.float16, device_map='auto'); tokenizer = GPTNeoXTokenizerFast.from_pretrained('EleutherAI/gpt-neox-20b')

- Generate text: inputs = tokenizer('The future of AI is', return_tensors='pt').to(model.device); outputs = model.generate(**inputs, max_new_tokens=50); print(tokenizer.decode(outputs[0], skip_special_tokens=True))

- Use pipeline: import torch; from transformers import pipeline; generator = pipeline('text-generation', model='EleutherAI/gpt-neox-20b', torch_dtype=torch.float16, device_map='auto'); print(generator('Hello world', max_length=100)[0]['generated_text'])

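- Quantize for efficiency: Use bitsandbytes (pass quantization_config=BitsAndBytesConfig(load_in_8bit=True) or BitsAndBytesConfig(load_in_4bit=True) to from_pretrained) or auto-gptq for 4-bit/8-bit loading to reduce VRAM use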

- Fine-tune: Use PEFT (for example, LoRA) or the full fine-tuning scripts in EleutherAI's gpt-neox repository for custom datasets

- Run locally: Ensure sufficient GPU memory (around 40-50 GB for fp16; less with quantization)
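The memory figures above follow from simple arithmetic: each fp16 parameter takes 16 bits (2 bytes). A minimal sketch, assuming a nominal 20 billion parameters (the exact count is slightly higher) and counting weights only; activations and the attention key/value cache add more on top, which is why practical estimates run above the raw weight size. The function name is hypothetical.

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9

N_PARAMS = 20e9  # nominal parameter count for GPT-NeoX-20B (assumption)

for label, bits in [("fp32", 32), ("fp16/bf16", 16), ("int8", 8), ("4-bit", 4)]:
    print(f"{label:>9}: ~{weight_memory_gb(N_PARAMS, bits):.0f} GB")
```

This gives roughly 80, 40, 20, and 10 GB, which explains why 4-bit versions can fit on a single 16-24 GB consumer GPU while fp16 generally needs a data-center card or several GPUs.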

How we rated GPT-NeoX

- Performance: 4.2/5

- Accuracy: 4.3/5

- Features: 4.5/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.4/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.2/5

- Integration: 4.6/5

- Overall Score: 4.5/5

GPT-NeoX integration with other tools

- Hugging Face Transformers: Native loading and inference support via the official library

- LangChain / LlamaIndex: Easy chaining for RAG, agents, or tool-use applications

- Local LLM Frontends: Compatible with Oobabooga text-generation-webui, LM Studio, SillyTavern

- VS Code Extensions: Use with Continue.dev or similar for local code assistance

- Research Frameworks: Integrate with EleutherAI's lm-evaluation-harness for benchmarking

Best prompts optimised for GPT-NeoX

- Write a detailed Python function to implement quicksort with comments explaining each step

- Continue this story in a cyberpunk style: The neon rain fell on the empty streets as Jax plugged into the matrix one last time...

- Explain quantum entanglement to a high school student using simple analogies and no equations

- Generate a professional email declining a job offer while maintaining good relationships

- Translate this paragraph from English to formal French for a business proposal: [insert text]
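GPT-NeoX-20B is a base model that was not instruction-tuned, so prompts like these often work better when framed as text to continue, with one or two worked examples, in line with its few-shot strengths. The helper below is a hypothetical sketch of such a template; the `Input:`/`Output:` labels are an arbitrary choice, not a format the model requires.

```python
def few_shot_prompt(task: str, examples: list[tuple[str, str]], query: str) -> str:
    """Frame a task as text to continue: description, worked examples, then the open query."""
    lines = [task.strip(), ""]
    for source, target in examples:
        lines += [f"Input: {source}", f"Output: {target}", ""]
    # End on an open "Output:" so the model's continuation is the answer
    lines += [f"Input: {query}", "Output:"]
    return "\n".join(lines)

prompt = few_shot_prompt(
    "Translate English business sentences into formal French.",
    [("Thank you for your proposal.", "Nous vous remercions de votre proposition.")],
    "We look forward to working with you.",
)
print(prompt)
```

When generating from a prompt like this, it is common to cut the model's output at the next blank line so it does not invent further examples.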
