What is GPT-NeoX?

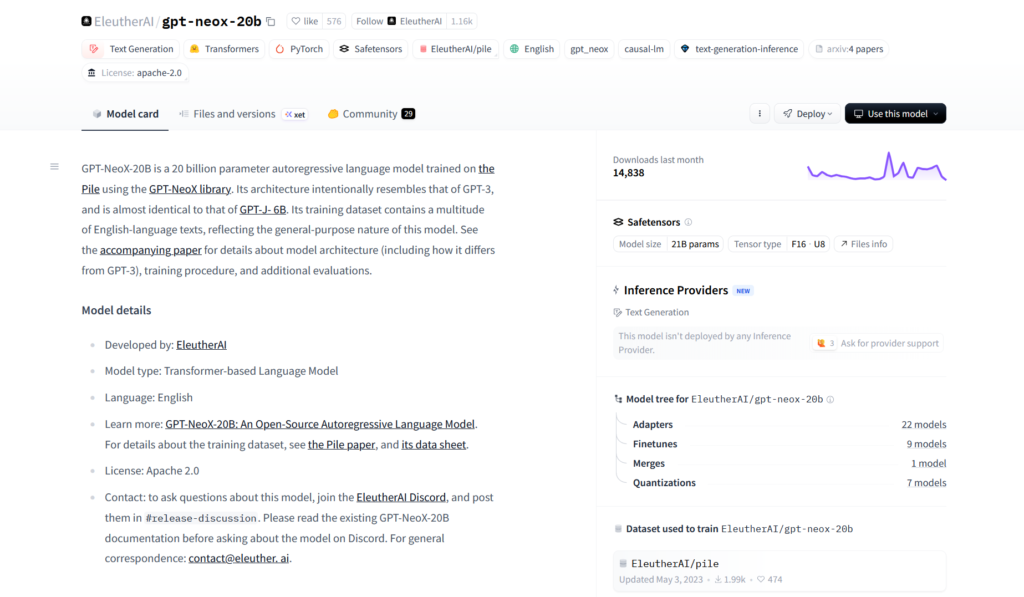

GPT-NeoX is EleutherAI's open-source library for training large language models. The name is most often used for its flagship model, GPT-NeoX-20B, a 20-billion-parameter model released as a transparent alternative to GPT-3.

When was GPT-NeoX released?

EleutherAI announced GPT-NeoX-20B and made its weights publicly available in February 2022. The accompanying paper followed in April 2022.

Is GPT-NeoX free to use?

Yes. It is free and open-source under the Apache 2.0 license, with the full weights and code available on Hugging Face.

What dataset was GPT-NeoX trained on?

It was trained on The Pile, a roughly 800 GB (825 GiB) open-source text dataset that EleutherAI curated from 22 diverse sources.

How do I run GPT-NeoX locally?

Load it in Python with the Hugging Face transformers library. The 20B model needs substantial GPU VRAM, about 40 GB for the weights alone in 16-bit precision; quantization reduces the memory needed.
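The following is a minimal sketch of that loading path, not an official recipe. It assumes the Hugging Face model ID `EleutherAI/gpt-neox-20b`, the `torch`, `transformers`, and `accelerate` packages, and enough GPU memory. The helper names `weight_memory_gb` and `generate` are hypothetical, and the memory figure covers weights only (activations and the attention cache add more).

```python
def weight_memory_gb(n_params: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    return n_params * bits_per_param / 8 / 1e9


def generate(prompt: str, max_new_tokens: int = 40) -> str:
    """Load GPT-NeoX-20B and complete a prompt (downloads weights on first use)."""
    # Imported lazily so the estimate above works without these packages.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model_id = "EleutherAI/gpt-neox-20b"  # assumed Hugging Face model ID
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id,
        torch_dtype=torch.float16,  # half precision: about 2 bytes per parameter
        device_map="auto",          # spreads layers across devices; needs `accelerate`
    )
    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)


if __name__ == "__main__":
    # Rough weight footprint of a 20-billion-parameter model at several precisions.
    for bits in (16, 8, 4):
        print(f"{bits}-bit weights: ~{weight_memory_gb(20e9, bits):.0f} GB")
```

Running the file only prints the estimates. Calling `generate("GPT-NeoX is")` triggers the actual download and load.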

What are the main strengths of GPT-NeoX?

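Its main strengths are strong few-shot performance, code generation, and reasoning for a model of its size, along with full reproducibility: the weights, code, and training details are all open, which makes it well suited to research and local use.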
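Few-shot use means placing a handful of worked examples in the prompt and letting the model continue the pattern. The sketch below shows one way to assemble such a prompt. The `Q:`/`A:` layout and the function name `build_few_shot_prompt` are illustrative assumptions, not a format prescribed by EleutherAI.

```python
from typing import List, Tuple


def build_few_shot_prompt(examples: List[Tuple[str, str]], query: str) -> str:
    """Join solved (question, answer) pairs, then leave the final answer open."""
    parts = [f"Q: {question}\nA: {answer}" for question, answer in examples]
    # The trailing "A:" invites the model to complete the answer for `query`.
    parts.append(f"Q: {query}\nA:")
    return "\n\n".join(parts)


if __name__ == "__main__":
    demo = build_few_shot_prompt(
        [("Capital of France?", "Paris"), ("Capital of Japan?", "Tokyo")],
        "Capital of Italy?",
    )
    print(demo)
```

The resulting string is passed to the model as an ordinary prompt; no fine-tuning is involved.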

Does GPT-NeoX support fine-tuning?

Yes, the full model, tokenizer, and training code are open, enabling fine-tuning on custom datasets.
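A common preprocessing step before fine-tuning a causal language model is to concatenate tokenized documents and cut them into fixed-length blocks. The sketch below illustrates that step only. It assumes the texts have already been converted to token IDs, and `pack_into_blocks`, `block_size`, and the `eos_id` value used in the demo are hypothetical placeholders rather than part of any GPT-NeoX tooling.

```python
from typing import Dict, List


def pack_into_blocks(
    tokenized_docs: List[List[int]], block_size: int, eos_id: int
) -> List[Dict[str, List[int]]]:
    """Concatenate documents (separated by eos_id) and split into equal blocks."""
    stream: List[int] = []
    for ids in tokenized_docs:
        stream.extend(ids)
        stream.append(eos_id)  # mark the document boundary

    # Drop the tail that does not fill a whole block.
    usable = (len(stream) // block_size) * block_size
    blocks = []
    for start in range(0, usable, block_size):
        chunk = stream[start:start + block_size]
        # Labels mirror the inputs; Hugging Face causal LM classes
        # shift them internally when computing the loss.
        blocks.append({"input_ids": chunk, "labels": list(chunk)})
    return blocks


if __name__ == "__main__":
    # Toy token IDs; eos_id=0 is a placeholder value for this demo.
    print(pack_into_blocks([[1, 2, 3], [4, 5]], block_size=3, eos_id=0))
```

Each resulting dictionary has the shape a standard causal language modeling training loop expects for one example.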

How does GPT-NeoX compare to modern models?

Newer open models such as Llama 3 and Mistral outperform it, often with fewer parameters, but it remains valuable for its transparency and can still be deployed locally.