What is HY-Motion 1.0?

HY-Motion 1.0 is Tencent’s open-source series of text-to-3D human motion generation models. It scales a Diffusion Transformer trained with Flow Matching to 1B parameters and generates realistic skeleton animations from text prompts.

When was HY-Motion 1.0 released?

It was open-sourced on December 30, 2025, alongside inference code, pretrained models, a Hugging Face Space demo, and an official site.

Is HY-Motion 1.0 free to use?

Yes. The full weights and code are free to download from Hugging Face under the Tencent Hunyuan Community license, and there are no usage fees.

What are the model sizes in HY-Motion 1.0?

The standard version has 1 billion parameters; the Lite version has 460 million, for lighter deployment while maintaining strong performance.
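As a rough sizing aid, the parameter counts above can be turned into an estimate of weight memory. This is a minimal sketch that counts weights only: it assumes the quoted figures cover just the motion model, and it ignores activations, any text encoder, and framework overhead. The precision names are illustrative and not taken from the official release.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# ASSUMPTION: weights only (no activations, text encoder, or runtime overhead).
MODELS = {"standard": 1_000_000_000, "lite": 460_000_000}
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2}


def weight_memory_gb(num_params, bytes_per_param):
    """Return the weight size in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


for name, params in MODELS.items():
    for precision, nbytes in BYTES_PER_PARAM.items():
        size = weight_memory_gb(params, nbytes)
        print(f"{name:8s} {precision}: {size:.2f} GB")
```

Under these assumptions, the standard model's weights take about 4 GB in fp32 and 2 GB in fp16, and the Lite model's about 1.84 GB and 0.92 GB. Actual GPU memory use during inference will be higher.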

What formats does HY-Motion 1.0 output?

It generates SMPL-H skeleton animations that can be exported as FBX, BVH, or GLB for direct use in Blender, Unity, Unreal Engine, and other 3D tools.

How many motion categories does HY-Motion 1.0 support?

It covers over 200 categories including locomotion, sports, dance, gestures, and complex multi-step actions with high diversity.

Where can I try HY-Motion 1.0 online?

Use the interactive demo on Hugging Face Spaces at huggingface.co/spaces/tencent/HY-Motion-1.0; enter text prompts to generate animations.

Who developed HY-Motion 1.0?

It was developed by Tencent’s Hunyuan 3D Digital Human Team as part of their AI research and open-source efforts.

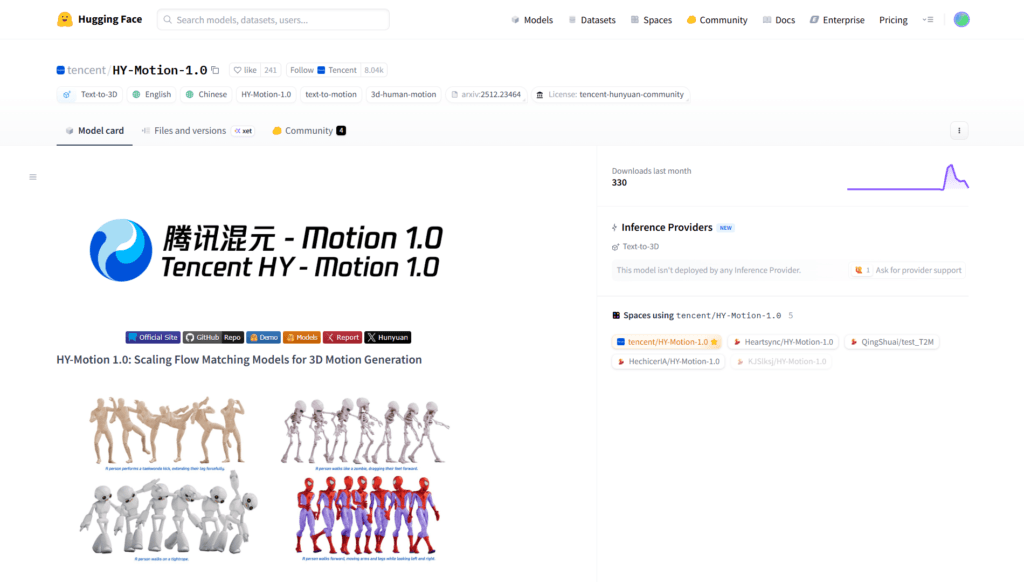

HY-Motion 1.0

About This AI

HY-Motion 1.0 is a series of state-of-the-art text-to-3D human motion generation models from Tencent’s Hunyuan team, released December 30, 2025.

Built on Diffusion Transformer (DiT) architecture with Flow Matching training, it scales to 1 billion parameters (standard version) and 460 million (Lite variant), producing high-quality skeleton-based 3D character animations from natural language prompts.
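To make the Flow Matching idea concrete, here is a toy sketch of how sampling works in that family of models: start from Gaussian noise and integrate a velocity field from t = 0 to t = 1 with Euler steps. Everything here is an illustrative assumption. In HY-Motion the velocity would be predicted by the text-conditioned DiT, whereas this sketch uses the closed-form velocity of a straight-line path toward a single known target, and the source does not say which probability path the model actually uses.

```python
import random


def velocity(x, t, target):
    """Closed-form velocity for the straight-line path x_t = (1 - t) * x0 + t * x1
    when there is a single target x1. A real model would replace this function
    with a neural network conditioned on the text prompt."""
    return [(g - xi) / (1.0 - t) for xi, g in zip(x, target)]


def sample(target, steps=10, seed=0):
    """Integrate dx/dt = velocity(x, t) from t = 0 to t = 1 with Euler steps."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # start from Gaussian noise
    dt = 1.0 / steps
    for k in range(steps):
        t = k * dt  # t stays below 1, so (1 - t) is never zero
        v = velocity(x, t, target)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


# Hypothetical 3-value "pose" standing in for a full motion tensor.
target_pose = [0.5, -1.0, 2.0]
result = sample(target_pose)
print([round(v, 6) for v in result])
```

Because the toy velocity always points straight at the target, the Euler integration lands on `target_pose` up to floating-point error. A trained model learns a velocity field over a whole distribution of motions instead of a single target.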

The model excels in instruction-following, motion diversity, temporal coherence, and physical realism across 200+ motion categories including locomotion, sports actions, dance, gestures, and complex multi-step behaviors.

Outputs are SMPL-H skeleton animations, exportable as FBX, BVH, or GLB, that import directly into Blender, Unity, Unreal Engine, and other 3D pipelines.

Key strengths include significant improvements over prior open-source models in semantic alignment, motion quality, and artifact reduction (e.g., foot sliding) via large-scale data, fine-tuning, and physics-based rewards.

The release is fully open-source under the Tencent Hunyuan Community license and includes inference code, pretrained weights, a Hugging Face Space demo, and an official site for easy testing.

It supports text prompts in English and Chinese, generalizes zero-shot to motions it was not explicitly trained on, and fits into animation workflows without motion capture or manual keyframing.

It is aimed at game developers, animators, VFX artists, researchers, and creators who need fast, high-fidelity 3D motion assets from simple descriptions.

Key Features

- Text-to-3D Motion Generation: Converts natural language prompts into realistic skeleton-based 3D human animations

- Billion-Parameter Scale: 1B standard model for superior quality and instruction following; 460M Lite for efficiency

- Flow Matching DiT Architecture: Combines diffusion transformers with flow matching for coherent, diverse motions

- 200+ Motion Categories: Covers locomotion, sports, dance, gestures, complex actions, and multi-step behaviors

- High Semantic Alignment: Strong prompt adherence with minimal artifacts like foot sliding or unnatural poses

- Standard Output Formats: SMPL-H skeletons exportable to FBX, BVH, GLB for Blender, Unity, Unreal Engine

- Zero-Shot Generalization: Generates novel motions without specific training examples

- Hugging Face Space Demo: Interactive online testing with prompt input and animation preview

- Open-Source Full Stack: Inference code, pretrained weights, and evaluation tools on Hugging Face/GitHub

- Multilingual Prompt Support: Works well with English and Chinese text descriptions

Price Plans

- Free ($0): Fully open-source with pretrained models, inference code, and Hugging Face demo; no usage fees, released under the Tencent Hunyuan Community license

- Enterprise/Cloud (Custom): Potential future hosted options or premium support via Tencent (not available yet)

Pros

- State-of-the-art open-source performance: Outperforms prior models in motion quality, diversity, and prompt adherence

- Completely free and open: No usage fees; full code and weights released under the Tencent Hunyuan Community license

- Easy integration: Direct export to popular 3D engines and pipelines

- High scalability: Billion-parameter power enables complex, realistic animations

- Online demo available: Test via Hugging Face Space without local setup

- Physics-aware training: Reduces common artifacts for more natural results

- Rapid release momentum: Inference code and models available immediately on announcement

Cons

- Requires GPU for local inference: Heavy model needs strong hardware for reasonable speed

- Recent release: Community support, fine-tuning examples, and integrations still emerging

- No hosted API: Fully local/self-hosted; no official cloud service is mentioned

- Limited to skeleton motion: Outputs SMPL-H poses, not full textured/rigged characters

- Prompt sensitivity: Complex or ambiguous descriptions may require rewriting for best results

- No native mobile or web app: The only hosted option is the Hugging Face Space demo; core use is local, developer-driven deployment

- Evaluation ongoing: Human evaluations are strong, but broader benchmarks are still evolving

Use Cases

- Game animation prototyping: Generate character actions for Unity/Unreal without motion capture

- Film and VFX pre-vis: Create realistic motion sequences from script descriptions

- 3D content creation: Quickly produce animations for avatars, metaverse, or AR/VR

- Research in motion generation: Benchmark, fine-tune, or extend for new domains

- Educational tools: Visualize human movements for sports science or dance training

- AI agent simulation: Create training data for embodied AI with diverse motions

- Automated asset pipeline: Script batch generation for large-scale projects
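The batch-generation use case above could start from a simple prompt manifest. The sketch below only prepares the input list. The field names (`id`, `model`, `prompt`) and the JSON Lines layout are hypothetical, and the official inference scripts may expect a different input format, so check the repository README before wiring this in.

```python
import json

# Example prompts; replace with the motions your project needs.
PROMPTS = [
    "a person performing a karate kick",
    "a person running energetically with a natural arm swing and stride",
    "a person demonstrating a slow, controlled deadlift",
]


def build_manifest(prompts, model="standard"):
    """Assign a stable id to each prompt. All field names are hypothetical."""
    return [
        {"id": f"motion_{i:03d}", "model": model, "prompt": p.strip()}
        for i, p in enumerate(prompts, start=1)
    ]


def to_jsonl(records):
    """Serialize records as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


manifest = build_manifest(PROMPTS)
print(to_jsonl(manifest))
```

Keeping prompts in a manifest with stable ids makes it easier to regenerate a single clip or to match exported files back to the text that produced them.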

Target Audience

- Game developers: Needing fast, high-quality character animations

- 3D animators and VFX artists: Accelerating pre-production workflows

- AI researchers: Studying large-scale motion models and flow matching

- Metaverse/AR/VR creators: Building dynamic avatar behaviors

- Developers using Unity/Unreal/Blender: Integrating text-driven motion

- Open-source enthusiasts: Experimenting with billion-parameter generative models

How To Use

- Visit Hugging Face Space: Go to huggingface.co/spaces/tencent/HY-Motion-1.0 for the online demo

- Enter prompt: Type descriptive text (English/Chinese) like 'a person performing a karate kick'

- Generate animation: Click run to produce a preview of the 3D motion sequence

- Download output: Export the skeleton animation in one of the supported formats

- Local setup: Clone the GitHub repo Tencent-Hunyuan/HY-Motion-1.0

- Install dependencies: Follow the README to install PyTorch, transformers, and the other requirements

- Run inference: Use provided scripts with downloaded weights for batch or custom use
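The local setup steps above can be sketched as a short script that prints the shell commands without running them. Several details are assumptions to verify against the README: the model repo id on Hugging Face (`tencent/HY-Motion-1.0` is guessed from the Space URL), the presence of a `requirements.txt` file, and the target directory names. The `git clone` and `huggingface-cli download` commands themselves are standard tools.

```python
import shlex

GITHUB_REPO = "Tencent-Hunyuan/HY-Motion-1.0"  # repo named in the steps above
HF_MODEL_REPO = "tencent/HY-Motion-1.0"        # ASSUMPTION: guessed from the Space URL


def setup_commands(workdir="HY-Motion-1.0", weights_dir="weights"):
    """Return the setup commands as argument lists (nothing is executed)."""
    return [
        ["git", "clone", f"https://github.com/{GITHUB_REPO}.git", workdir],
        # ASSUMPTION: the repo ships a requirements.txt; follow the README if not.
        ["python", "-m", "pip", "install", "-r", f"{workdir}/requirements.txt"],
        ["huggingface-cli", "download", HF_MODEL_REPO, "--local-dir", weights_dir],
    ]


if __name__ == "__main__":
    # Dry run: print the commands so they can be reviewed before use.
    for cmd in setup_commands():
        print(shlex.join(cmd))
```

Printing the commands first is deliberate: the inference entry point and its flags are defined by the repository, so the final "run inference" step is left to the provided scripts.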

How we rated HY-Motion 1.0

- Performance: 4.6/5

- Accuracy: 4.7/5

- Features: 4.8/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.3/5

- Customization: 4.7/5

- Data Privacy: 5.0/5

- Support: 4.4/5

- Integration: 4.6/5

- Overall Score: 4.7/5
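The overall score is consistent with a plain average of the nine category scores. The sketch below checks that arithmetic; equal weighting is an assumption, since the weighting actually used is not stated.

```python
# Category scores copied from the list above.
scores = {
    "Performance": 4.6,
    "Accuracy": 4.7,
    "Features": 4.8,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 4.3,
    "Customization": 4.7,
    "Data Privacy": 5.0,
    "Support": 4.4,
    "Integration": 4.6,
}

# ASSUMPTION: every category carries equal weight.
mean_score = sum(scores.values()) / len(scores)
overall = round(mean_score, 1)
print(f"mean = {mean_score:.3f}, rounded = {overall}")  # mean = 4.678, rounded = 4.7
```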

HY-Motion 1.0 integration with other tools

- Hugging Face: Model weights, inference code, and interactive demo space for testing

- GitHub Repository: Full open-source code and community contributions via Tencent-Hunyuan/HY-Motion-1.0

- 3D Engines: Direct export to Blender, Unity, Unreal Engine via FBX/BVH/GLB formats

- Animation Pipelines: Compatible with Maya, Houdini, or custom tools for motion integration

- Local Development: Runs on PyTorch with GPU acceleration; no external cloud required
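Before importing an exported BVH file into a 3D tool, it can help to sanity-check its joint list and frame count. The sketch below reads the standard BVH text layout (a `HIERARCHY` section with `ROOT`/`JOINT` entries, then a `MOTION` section with `Frames:` and `Frame Time:`). The embedded sample and its joint names are made up for illustration and are not real HY-Motion output.

```python
# Tiny hand-written BVH sample (hypothetical joints, two frames).
SAMPLE_BVH = """HIERARCHY
ROOT Pelvis
{
  OFFSET 0.0 0.0 0.0
  CHANNELS 6 Xposition Yposition Zposition Zrotation Xrotation Yrotation
  JOINT Spine
  {
    OFFSET 0.0 10.0 0.0
    CHANNELS 3 Zrotation Xrotation Yrotation
    End Site
    {
      OFFSET 0.0 5.0 0.0
    }
  }
}
MOTION
Frames: 2
Frame Time: 0.033333
0 0 0 0 0 0 0 0 0
0 1 0 0 0 0 0 5 0
"""


def summarize_bvh(text):
    """Collect joint names, frame count, and frame time from BVH text."""
    joints, frames, frame_time = [], None, None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith(("ROOT ", "JOINT ")):
            joints.append(line.split(None, 1)[1])
        elif line.startswith("Frames:"):
            frames = int(line.split(":", 1)[1])
        elif line.startswith("Frame Time:"):
            frame_time = float(line.split(":", 1)[1])
    return {"joints": joints, "frames": frames, "frame_time": frame_time}


summary = summarize_bvh(SAMPLE_BVH)
duration = summary["frames"] * summary["frame_time"]
print(summary["joints"], summary["frames"], f"{duration:.3f} s")
```

For a real export, read the file with `open(path).read()` and pass the text to `summarize_bvh`. An unexpected joint count or a zero frame count is a quick signal that the export step needs another look.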

Best prompts optimised for HY-Motion 1.0

- A professional basketball player performing a powerful slam dunk on a court, dynamic jump, ball in hand, crowd in background, realistic motion

- A graceful ballerina executing a perfect pirouette in a spotlight on stage, elegant dress flowing, smooth rotation and balance

- A ninja warrior performing a series of high-speed flips and sword strikes in a misty bamboo forest at night

- A person running energetically through a park in autumn, leaves falling, natural arm swing and stride

- A fitness trainer demonstrating a proper deadlift technique in a gym, slow controlled movement with perfect form
