What is HY-Motion 1.0?

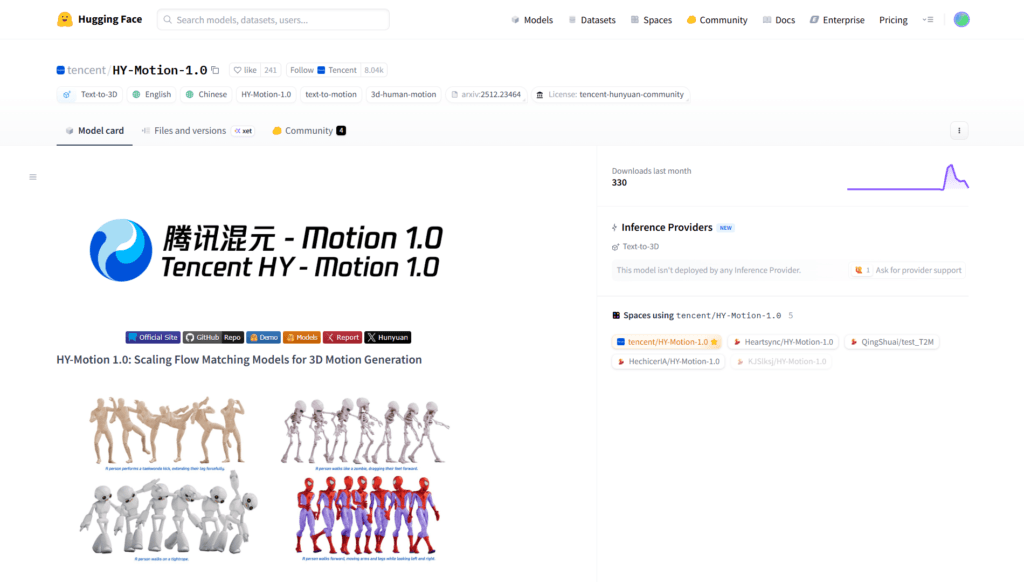

HY-Motion 1.0 is Tencent’s open-source series of text-to-3D human motion generation models. It combines a Diffusion Transformer (DiT) architecture with Flow Matching, scales to 1B parameters, and generates realistic skeleton animations from text prompts.
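To give a feel for what Flow Matching does at generation time, here is a minimal toy sketch, not HY-Motion's actual code. A flow-matching model learns a velocity field, and sampling integrates that field from random noise (t = 0) to a data sample (t = 1). In the sketch, `toy_velocity` is a hypothetical stand-in for the trained network: it returns the exact straight-line velocity toward a known target. The three-value "pose", the Euler integrator, and the step count are all assumptions made for illustration.

```python
import random


def toy_velocity(x, t, target):
    """Hypothetical stand-in for the learned network.

    Returns the velocity that carries x to `target` along a straight
    line, arriving exactly at t = 1. A real model would predict this
    from x, t, and the text prompt.
    """
    return [(g - xi) / (1.0 - t) for xi, g in zip(x, target)]


def euler_sample(target, steps=10, seed=0):
    """Integrate dx/dt = v(x, t) from t = 0 to t = 1 with Euler steps."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]  # start from Gaussian noise
    dt = 1.0 / steps
    for k in range(steps):
        t = k * dt  # t never reaches 1.0, so (1 - t) stays positive
        v = toy_velocity(x, t, target)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


target_pose = [0.5, -1.0, 2.0]  # hypothetical 3-value "pose"
sample = euler_sample(target_pose)
print(sample)
```

In a real system the velocity comes from the text-conditioned Transformer rather than a known target, and the state is a full motion sequence rather than three numbers.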

When was HY-Motion 1.0 released?

It was open-sourced on December 30, 2025. The release included the inference code, pretrained models, a Hugging Face Space demo, and an official site.

Is HY-Motion 1.0 free to use?

Yes. It is free and open-source: the full weights and code are available on Hugging Face under the Tencent Hunyuan Community license, with no usage fees.

What are the model sizes in HY-Motion 1.0?

The standard version has 1 billion parameters. The Lite version has 460 million, for lighter deployment while maintaining strong performance.
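As a rough guide to what those sizes mean in practice, the sketch below estimates the memory needed just to hold the weights at two common precisions. It is back-of-the-envelope arithmetic, not an official requirement: it uses the rounded parameter counts above, assumes 4 bytes per parameter for fp32 and 2 for fp16, and ignores the text encoder, activations, and framework overhead, so real usage will be higher.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2}

# Rounded parameter counts taken from the answer above.
MODEL_PARAMS = {"standard": 1_000_000_000, "lite": 460_000_000}


def weight_memory_gb(num_params, dtype):
    """Weights-only memory in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


for name, num_params in MODEL_PARAMS.items():
    for dtype in BYTES_PER_PARAM:
        gb = weight_memory_gb(num_params, dtype)
        print(f"{name:8s} {dtype}: {gb:.2f} GB")
```

By this estimate the standard model's weights take about 4 GB in fp32 and 2 GB in fp16, and the Lite model's take a little under half of that.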

What formats does HY-Motion 1.0 output?

It generates SMPL-H skeleton animations that can be exported as FBX, BVH, or GLB for direct use in Blender, Unity, Unreal Engine, and other 3D tools.
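Of the three formats, BVH is plain text, so an exported clip can be sanity-checked without opening a 3D tool. The sketch below reads the standard BVH keywords (`ROOT`, `JOINT`, `CHANNELS`, `Frames:`, `Frame Time:`) and reports joint names, channel count, and clip length. The two-joint sample is made up for illustration and is not real HY-Motion output; an actual export would contain the full SMPL-H skeleton.

```python
# Hypothetical two-joint BVH clip, used only to exercise the parser.
SAMPLE_BVH = """\
HIERARCHY
ROOT Pelvis
{
  OFFSET 0.0 0.0 0.0
  CHANNELS 6 Xposition Yposition Zposition Zrotation Xrotation Yrotation
  JOINT Spine
  {
    OFFSET 0.0 10.0 0.0
    CHANNELS 3 Zrotation Xrotation Yrotation
    End Site
    {
      OFFSET 0.0 10.0 0.0
    }
  }
}
MOTION
Frames: 2
Frame Time: 0.05
0 0 0 0 0 0 0 0 0
0 1 0 0 0 0 0 5 0
"""


def summarize_bvh(text):
    """Collect joint names, total channels, and timing from BVH text."""
    joints, channels = [], 0
    frames, frame_time = None, None
    for raw in text.splitlines():
        tokens = raw.split()
        if not tokens:
            continue
        if tokens[0] in ("ROOT", "JOINT"):
            joints.append(tokens[1])
        elif tokens[0] == "CHANNELS":
            channels += int(tokens[1])
        elif tokens[0] == "Frames:":
            frames = int(tokens[1])
        elif tokens[:2] == ["Frame", "Time:"]:
            frame_time = float(tokens[2])
    return {
        "joints": joints,
        "channels": channels,
        "frames": frames,
        "frame_time": frame_time,
        "duration_s": frames * frame_time,
    }


summary = summarize_bvh(SAMPLE_BVH)
print(summary)
```

To check a real export, read the file's contents into a string and pass it to `summarize_bvh` in place of `SAMPLE_BVH`.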

How many motion categories does HY-Motion 1.0 support?

It covers more than 200 motion categories, ranging from locomotion, sports, dance, and gestures to complex multi-step actions.

Where can I try HY-Motion 1.0 online?

Use the interactive demo on Hugging Face Spaces at huggingface.co/spaces/tencent/HY-Motion-1.0; enter text prompts to generate animations.

Who developed HY-Motion 1.0?

It was developed by Tencent’s Hunyuan 3D Digital Human Team as part of their AI research and open-source efforts.