What is IQuest Coder V1?

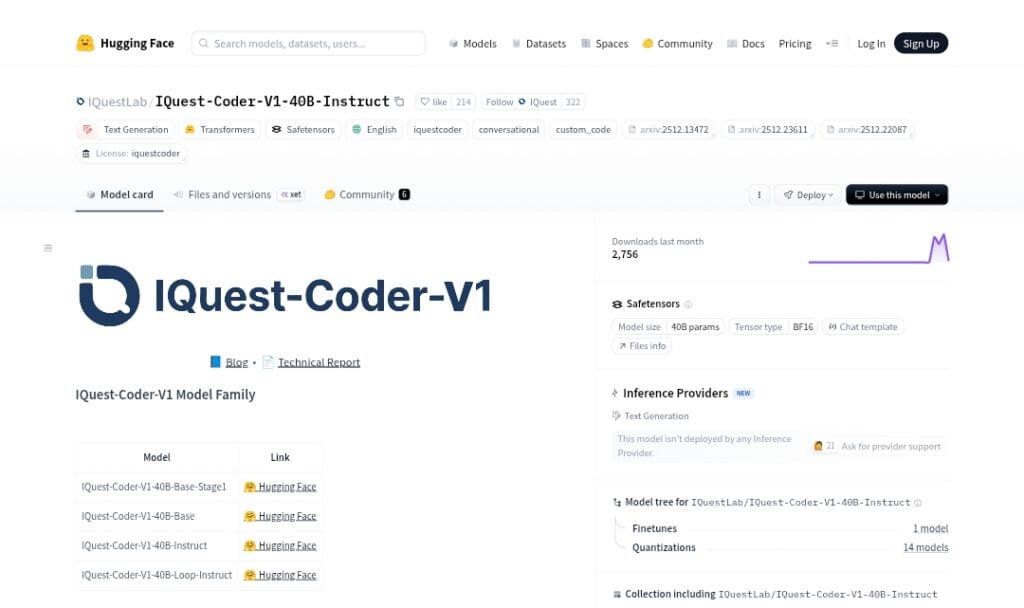

IQuest Coder V1 is a family of open-source code LLMs from IQuestLab (Ubiquant), released on January 1, 2026. It is trained with the Code-Flow paradigm, targets autonomous software engineering, and supports a 128K context.

When was IQuest Coder V1 released?

It was officially released on January 1, 2026, with models available on Hugging Face and GitHub shortly after.

Is IQuest Coder V1 free to use?

Yes, it is completely free and open-source with full weights and code on Hugging Face; no subscriptions or fees required.

What sizes and variants does IQuest Coder V1 have?

Models range from 7B to 40B parameters, including Instruct, Thinking, and Loop-Instruct variants for different use cases.

What benchmarks does IQuest Coder V1 perform well on?

It reports 76.2% on SWE-Bench Verified, 81.1% on LiveCodeBench v6, and 49.9% on BigCodeBench, scores that place it at or near the top among open models.

What is the Code-Flow training paradigm?

Code-Flow learns from real repository evolution, commit transitions, and code changes to improve understanding of dynamic software development.
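To make "commit transitions" concrete, here is a minimal sketch of how one before/after pair from a repository history could be represented as a single record. The `CommitTransition` class, its field names, and the sample data are hypothetical illustrations; the source does not describe IQuestLab's actual training format.

```python
import difflib
from dataclasses import dataclass


@dataclass
class CommitTransition:
    """Hypothetical record: one file before and after a commit."""
    path: str
    message: str
    before: str
    after: str

    def diff(self) -> str:
        # Unified diff between the two snapshots, similar to `git diff` output.
        lines = difflib.unified_diff(
            self.before.splitlines(keepends=True),
            self.after.splitlines(keepends=True),
            fromfile=f"a/{self.path}",
            tofile=f"b/{self.path}",
        )
        return "".join(lines)


# Made-up example data, for illustration only.
sample = CommitTransition(
    path="calc.py",
    message="Guard against division by zero",
    before="def ratio(a, b):\n    return a / b\n",
    after="def ratio(a, b):\n    if b == 0:\n        return 0.0\n    return a / b\n",
)
print(sample.diff())
```

A model trained on records like this sees the change itself (the diff plus the commit message), not just the final state of the file.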

What context length does IQuest Coder V1 support?

All models feature native 128K context length, allowing entire codebases or long logs in a single prompt.
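Whether a given codebase actually fits in one prompt can be estimated before sending it. The sketch below is a rough budget check, assuming about four characters per token and treating 128K as 128,000 tokens; both numbers are assumptions, and the model's own tokenizer gives the exact count. The function names are illustrative.

```python
from pathlib import Path

CONTEXT_TOKENS = 128_000  # assumption: "128K" taken as 128,000 tokens
CHARS_PER_TOKEN = 4       # assumption: rough average for source code


def estimate_tokens(text: str) -> int:
    """Rough token estimate; a real tokenizer gives the exact count."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def fits_in_context(texts, reserve_for_output: int = 4_000) -> bool:
    """True if all texts plus a reserve for the reply fit in the window."""
    used = sum(estimate_tokens(t) for t in texts)
    return used + reserve_for_output <= CONTEXT_TOKENS


def read_sources(root: str, suffix: str = ".py"):
    """Read every file under root that ends with the given suffix."""
    return [p.read_text(errors="ignore") for p in Path(root).rglob(f"*{suffix}")]
```

For example, `fits_in_context(read_sources("my_project"))` gives a quick yes/no before building the prompt; if it returns False, the repository needs to be filtered or split.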

How do I run IQuest Coder V1 locally?

Download the weights from Hugging Face, install the transformers library (v4.52.4 or later), load the model and tokenizer, and generate with a prompt suited to your coding task.

IQuest Coder

About This AI

IQuest Coder V1 is a family of open-source code large language models released on January 1, 2026, by IQuestLab, the AI research arm of the quantitative hedge fund Ubiquant.

It introduces the innovative Code-Flow training paradigm that learns from real-world repository evolution, commit transitions, and dynamic code transformations for superior code understanding and generation.

The series includes variants from 7B to 40B parameters, with Instruct for general coding assistance, Thinking for advanced reasoning-driven tasks, and Loop-Instruct with recurrent mechanisms for optimized capacity.

All models feature native 128K context length to handle entire codebases, long logs, multi-file repos, or tool traces without external hacks.

It reports top open-source results on benchmarks such as SWE-Bench Verified (76.2%), LiveCodeBench v6 (81.1%), and BigCodeBench (49.9%), in some cases rivaling or surpassing closed models like Claude Sonnet 4.5 despite a much smaller size.

Available fully open on Hugging Face and GitHub under a permissive license, it supports local deployment with transformers and is aimed at developers who want high-performance, controllable, and private code AI.

No hosted service or paid API is mentioned; the focus is on self-hosted inference for enterprises needing data autonomy.

Suited for autonomous agentic coding, deep code intelligence, competitive programming, and software engineering tasks.

Key Features

- Code-Flow training paradigm: Learns from commit history and code evolution for realistic understanding

- Native 128K context length: Handles multi-file repositories and long traces in-prompt

- Multiple variants: Instruct for general use, Thinking for reasoning, Loop-Instruct for recurrent efficiency

- High benchmark performance: Top open-source scores on SWE-Bench, LiveCodeBench, BigCodeBench

- Autonomous software engineering: Supports agentic tasks, tool use, and multi-step code generation

- Open-source weights: Full models (7B to 40B) available on Hugging Face for local inference

- Competitive programming strength: Excels in problem-solving and code contests

- Deep code intelligence: Advanced understanding of complex codebases and transformations

Price Plans

- Free ($0): Fully open-source with all model weights, code, and inference support available on Hugging Face and GitHub; no costs or subscriptions required

- Cloud Inference (Custom): Potential third-party hosted options via services like Groq or Together AI (not official)

Pros

- Outstanding open-source performance: Rivals closed models at a fraction of the size and cost

- Long native context: Ideal for real-world large codebases without workarounds

- Completely free and open: Permissive license, no usage fees, full local control

- Specialized for coding: Strong in agentic, reasoning, and evolution-aware tasks

- Deployable locally: Smaller and quantized variants can run on consumer hardware

- Rapid community adoption: Quick GGUF conversions and discussions post-release

- Data privacy focus: Self-hosted nature suits enterprises with sensitive code

Cons

- Requires strong hardware: 40B model needs high VRAM GPUs for full-speed inference

- Setup and inference complexity: Local deployment requires transformers, dependencies, and optimization

- No hosted service: No easy web UI or API; purely self-hosted focus

- Benchmark controversies: Some claims questioned (e.g., SWE-Bench environment issues)

- Early release stage: Limited fine-tuning examples and ecosystem maturity

- Potential reward-hacking risks: As with agentic models, needs careful evaluation

- Limited non-coding utility: Highly specialized for code; weaker on general tasks
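The hardware concern above follows from simple arithmetic: the memory needed for the weights alone is the parameter count times the bytes per parameter. A minimal sketch (the function name is illustrative, and the figures exclude the KV cache and activations, which add to the total):

```python
def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bits_per_param / 8 / 1e9


for bits in (16, 8, 4):
    print(f"40B at {bits}-bit: {weight_memory_gb(40, bits):.0f} GB")
# 40B at 16-bit: 80 GB
# 40B at 8-bit: 40 GB
# 40B at 4-bit: 20 GB
```

By the same formula, a 7B model at 16-bit needs about 14 GB for its weights, which is why the smaller and quantized variants are the realistic option on consumer GPUs.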

Use Cases

- Autonomous coding agents: Build self-improving code generation and debugging systems

- Competitive programming: Solve LeetCode-style problems with high accuracy

- Large codebase analysis: Understand and refactor entire repositories in-context

- Software engineering tasks: Generate, fix, and evolve code with commit-like reasoning

- Developer productivity tools: Integrate into IDEs for real-time code assistance

- Research in code LLMs: Fine-tune or extend for new domains or agents

- Enterprise private AI: Run sensitive code tasks on-prem without data leakage

Target Audience

- Software developers and engineers: Seeking a powerful local coding assistant

- AI researchers in code generation: Experimenting with agentic and long-context models

- Competitive programmers: Using top-tier open models for contests

- Enterprises with code privacy needs: Running self-hosted specialized LLMs

- Open-source AI enthusiasts: Deploying and contributing to cutting-edge code models

- Quantitative finance teams: Leveraging Ubiquant-backed tech for internal tools

How To Use

- Visit Hugging Face: Go to huggingface.co/IQuestLab for model cards and weights

- Clone GitHub repo: Download code and examples from github.com/IQuestLab/IQuest-Coder-V1

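- Install dependencies: Run pip install "transformers>=4.52.4" (the quotes stop the shell from treating >= as a redirect), plus any other requirements listed in the repo

- Load model and tokenizer: from transformers import AutoModelForCausalLM, AutoTokenizer; tokenizer = AutoTokenizer.from_pretrained('IQuestLab/IQuest-Coder-V1-40B-Instruct'); model = AutoModelForCausalLM.from_pretrained('IQuestLab/IQuest-Coder-V1-40B-Instruct')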

- Generate code: Provide prompt with system instructions for coding tasks and generate completions

- Use quantization: Apply GGUF or bitsandbytes for lower VRAM usage on consumer GPUs

- Integrate in IDE: Use with Continue.dev, Cursor, or custom scripts for real-time assistance
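The load, generate, and quantization steps above can be sketched as one script. This is a minimal sketch, not the official example: it assumes the tokenizer ships a chat template, that `torch` and `accelerate` are installed alongside transformers, and that the optional 4-bit path has `bitsandbytes` and a CUDA GPU available. `build_messages` and `generate` are illustrative helper names; check the model card for the recommended settings.

```python
MODEL_ID = "IQuestLab/IQuest-Coder-V1-40B-Instruct"  # model name from the steps above


def build_messages(task: str, system: str = "You are an expert software engineer."):
    """Chat-style message list for a coding task."""
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": task},
    ]


def generate(task: str, model_id: str = MODEL_ID, four_bit: bool = False,
             max_new_tokens: int = 512) -> str:
    # Imported lazily so build_messages works without the heavy dependencies.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

    kwargs = {"device_map": "auto"}  # needs the accelerate package
    if four_bit:
        # Optional 4-bit loading; requires bitsandbytes and a CUDA GPU.
        kwargs["quantization_config"] = BitsAndBytesConfig(
            load_in_4bit=True, bnb_4bit_compute_dtype=torch.bfloat16
        )
    else:
        kwargs["torch_dtype"] = "auto"

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id, **kwargs)

    # Assumption: the tokenizer provides a chat template.
    inputs = tokenizer.apply_chat_template(
        build_messages(task),
        add_generation_prompt=True,
        return_dict=True,
        return_tensors="pt",
    ).to(model.device)
    output = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Decode only the newly generated tokens, not the prompt.
    prompt_len = inputs["input_ids"].shape[-1]
    return tokenizer.decode(output[0][prompt_len:], skip_special_tokens=True)
```

Calling `generate("Write a Python function that reverses a linked list.")` downloads the weights on first use, so expect a long initial wait; pass `four_bit=True` to trade some quality for lower VRAM.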

How we rated IQuest Coder

- Performance: 4.8/5

- Accuracy: 4.7/5

- Features: 4.6/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.3/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.2/5

- Integration: 4.5/5

- Overall Score: 4.7/5

IQuest Coder integration with other tools

- Hugging Face Transformers: Direct loading and inference with official model hub support

- GitHub Repository: Full code examples, technical report, and community contributions

- IDE Plugins (via Continue.dev/Cursor): Community integrations for real-time code completion and editing

- vLLM / Ollama / LM Studio: High-throughput inference servers and local runners for deployment

- GGUF Quantizations: Community-quantized versions for lower-resource hardware compatibility
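Local servers such as vLLM expose an OpenAI-compatible HTTP API, so a script or editor plugin can talk to the model without loading it in-process. The sketch below uses only the standard library; the URL, port, and served model name are assumptions that depend on how the server was started.

```python
import json
import urllib.request

# Assumptions: a local OpenAI-compatible server (for example one started by
# vLLM) listens on this URL and serves the model under this name.
BASE_URL = "http://localhost:8000/v1"
MODEL_NAME = "IQuestLab/IQuest-Coder-V1-40B-Instruct"


def build_request(prompt: str, max_tokens: int = 512) -> urllib.request.Request:
    """Build a POST request for the chat completions endpoint."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(build_request(prompt)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a server running, `ask("Explain this stack trace: ...")` returns the model's reply as a string. Other local runners with an OpenAI-compatible endpoint should work by changing only `BASE_URL` and `MODEL_NAME`.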

Best prompts optimised for IQuest Coder

- You are an expert software engineer. Fix this bug in the provided code and explain the changes step-by-step: [paste buggy code]

- Implement a complete React component for a responsive dashboard with charts, using Tailwind CSS and Recharts library: [detailed spec]

- Refactor this large Python class into smaller, testable functions with proper type hints and docstrings: [paste code]

- Solve this LeetCode hard problem with optimal time/space complexity and provide clean code: [problem description]

- Generate a full FastAPI backend with SQLAlchemy ORM, JWT auth, and CRUD endpoints for a todo app: [requirements]
