What is LTX-Video?

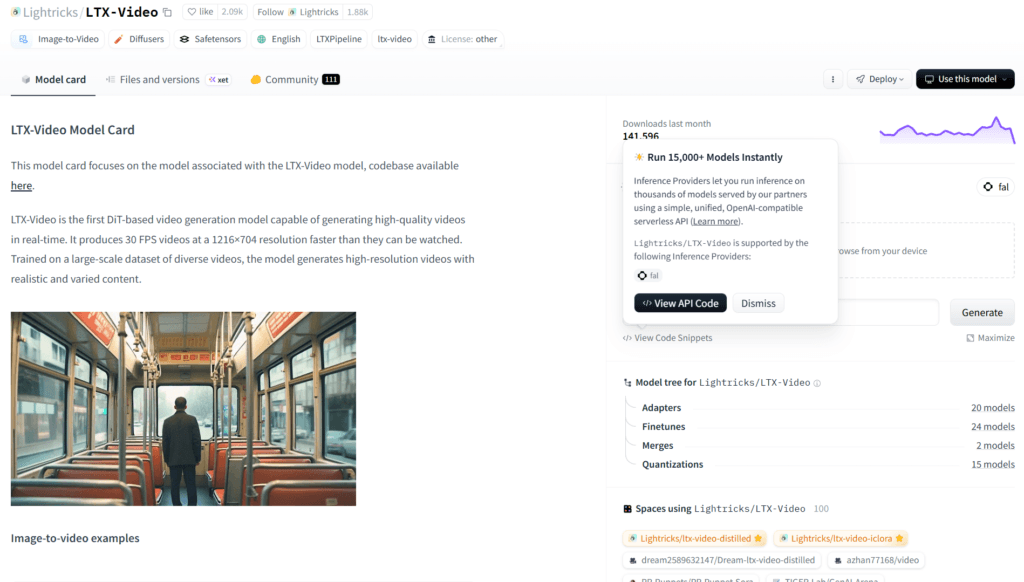

LTX-Video is an open-source, DiT-based video generation model from Lightricks. One efficient model family covers text-to-video, image-to-video, and video extension, and its successor, LTX-2, adds synchronized audio-visual output.

When was LTX-Video released?

It was first released in November 2024 and received major updates through 2025 (v0.9.8 in July 2025). Its successor, LTX-2, followed in October 2025.

Is LTX-Video free to use?

Yes. The code is open-source under Apache-2.0 and the model weights are freely downloadable, but some commercial uses may require a separate license from Lightricks.

What video lengths and resolutions does LTX-Video support?

A single pass generates up to 257 frames (about 8.6 seconds at 30 FPS), and v0.9.8 added long-shot generation of up to 60 seconds. Native output is 1216×704 at 30 FPS, with up to 4K at 50 FPS in LTX-2.

Does LTX-Video generate audio?

Only LTX-2 does: it generates visuals, dialogue, ambience, and music together in one coherent pass. Earlier LTX-Video releases (0.9.x) produce video only.

What hardware is needed for LTX-Video?

It is tested with CUDA 12.2 and later. Distilled and FP8 variants run on GPUs with 8 GB of VRAM (e.g., an RTX 4060); the full models need more memory.

How does LTX-Video integrate with other tools?

Supports Diffusers, ComfyUI (dedicated nodes), Hugging Face, and community trainers for LoRA/fine-tuning.

Who developed LTX-Video?

Developed by Lightricks, creators of apps like Facetune and Videoleap, with a focus on accessible AI video tools.

LTX-Video

About This AI

LTX-Video is an open-source video generation model from Lightricks, built on a DiT (Diffusion Transformer) architecture; its successor, LTX-2, combines synchronized audio and video generation in a single model.

It supports text-to-video, image-to-video, multi-keyframe conditioning, keyframe-based animation, video extension (forward/backward), video-to-video transformations, and hybrid modes.

The model delivers high-fidelity outputs with realistic motion, diverse content, production-ready quality, and multiple performance modes including distilled and quantized variants for faster inference.

Key capabilities include single-pass generation of up to 257 frames (about 8.6 seconds at 30 FPS), long-shot generation of up to 60 seconds (v0.9.8), a native resolution of 1216×704 at 30 FPS (up to 4K at 50 FPS with LTX-2), low-latency previews, and strong prompt adherence.
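The frame and duration figures above are easier to reason about with a little arithmetic. The sketch below assumes, based on our reading of the LTX-Video README, that the model works on widths and heights divisible by 32 and frame counts of the form 8n + 1 (257 = 8 × 32 + 1), with other values padded. The helper names are hypothetical and not part of the project's API.

```python
def snap_resolution(width: int, height: int, multiple: int = 32) -> tuple[int, int]:
    """Round each side down to the nearest multiple (assumed to be 32)."""
    return (width // multiple) * multiple, (height // multiple) * multiple


def snap_frames(num_frames: int) -> int:
    """Round down to the nearest frame count of the form 8n + 1 (assumed constraint)."""
    if num_frames < 1:
        raise ValueError("num_frames must be at least 1")
    return ((num_frames - 1) // 8) * 8 + 1


def clip_seconds(num_frames: int, fps: float) -> float:
    """Playback length in seconds of num_frames frames at the given frame rate."""
    return num_frames / fps


print(snap_resolution(1216, 704))       # (1216, 704): already aligned
print(snap_frames(260))                 # 257
print(round(clip_seconds(257, 30), 2))  # 8.57
```

By the same arithmetic, a 60-second clip at 30 FPS is 1,800 frames, far beyond one 257-frame pass, which is why long clips rely on the separate long-shot mode.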

Released initially in November 2024 with iterative updates through 2025 (v0.9.8 in July 2025), it evolved into LTX-2 in October 2025 for enhanced audio-video coherence.

The code is fully open-source under Apache-2.0, the model weights are hosted on Hugging Face, and the model works with Diffusers, ComfyUI integrations, and community trainers for LoRA fine-tuning.

It is optimized for efficiency: distilled models are reportedly up to 15x faster, FP8 quantization gives about a 3x speedup on consumer GPUs, and low-VRAM variants run on GPUs with as little as 8 GB.
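A rough way to see why quantization matters for VRAM is to estimate how much memory the transformer weights alone occupy. This sketch assumes nominal parameter counts of 2B and 13B and standard byte sizes per format; it deliberately ignores activations, the text encoder, and the VAE, so real usage is higher.

```python
# Bytes per parameter for common weight formats.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp8": 1}


def weight_gib(num_params: float, dtype: str) -> float:
    """Memory for model weights alone, in GiB (no activations, text encoder, or VAE)."""
    return num_params * BYTES_PER_PARAM[dtype] / 2**30


# Nominal parameter counts (assumed) for a small and a large variant.
for name, params in [("2B", 2e9), ("13B", 13e9)]:
    for dtype in ("bf16", "fp8"):
        print(f"{name} {dtype}: {weight_gib(params, dtype):.1f} GiB")
```

By this estimate a 13B model needs roughly 24 GiB for bf16 weights and about 12 GiB in FP8, which still exceeds an 8 GB card, so the 8 GB figures quoted on this page presumably depend on smaller variants, CPU offloading, or similar techniques.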

Ideal for creators, developers, filmmakers, and researchers needing accessible, high-quality generative video without proprietary lock-in.

Key Features

- Text-to-Video Generation: Create videos from detailed prompts with realistic motion (and synchronized audio in LTX-2)

- Image-to-Video Animation: Animate static images or keyframes into dynamic clips

- Multi-Keyframe Conditioning: Use multiple images as control points for precise animation paths

- Video Extension: Extend clips forward or backward while maintaining consistency

- Video-to-Video Transformation: Apply style or content changes to existing videos

- Synchronized Audio-Visual Output: Generate coherent visuals, dialogue, ambience, and music in one pass (LTX-2)

- High Fidelity Modes: Production-ready outputs with strong prompt adherence and diversity

- Performance Variants: Distilled (faster), quantized (FP8 for low VRAM), and mix models

- Long Duration Support: Up to 257 frames in a single pass, and up to 60 seconds with long-shot generation

- Integration Support: Diffusers, ComfyUI, and community trainers for custom LoRAs
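Multi-keyframe conditioning boils down to pinning images to positions on the clip's timeline. The sketch below is a hypothetical data structure for such a schedule (the `Keyframe` class and `build_schedule` helper are illustrative, not LTX-Video's API); the real inference script and Diffusers pipelines accept their own argument formats.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Keyframe:
    """Hypothetical control point: an image pinned to a frame index."""
    image_path: str
    frame_index: int


def build_schedule(keyframes: list[Keyframe], num_frames: int) -> list[Keyframe]:
    """Sort keyframes by position and check that each fits inside the clip."""
    ordered = sorted(keyframes, key=lambda k: k.frame_index)
    seen: set[int] = set()
    for kf in ordered:
        if not 0 <= kf.frame_index < num_frames:
            raise ValueError(f"{kf.image_path}: frame {kf.frame_index} outside 0..{num_frames - 1}")
        if kf.frame_index in seen:
            raise ValueError(f"two keyframes share frame {kf.frame_index}")
        seen.add(kf.frame_index)
    return ordered


schedule = build_schedule(
    [Keyframe("end.png", 96), Keyframe("start.png", 0), Keyframe("mid.png", 48)],
    num_frames=97,  # 8 * 12 + 1
)
print([k.image_path for k in schedule])  # ['start.png', 'mid.png', 'end.png']
```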

Price Plans

- Free ($0): Code (Apache-2.0), model weights (under Lightricks' own model license), and local inference at no cost; no usage fees for personal/research use

- Commercial License (Contact): For unrestricted commercial applications or high-volume use; contact Lightricks for terms

Pros

- High efficiency: Distilled models 15x faster, FP8 3x speedup on consumer GPUs

- Audio-video sync: LTX-2 generates coherent sound and visuals together in one pass

- Versatile conditioning: Strong multi-input support (text, image, keyframe, video)

- Open-source accessibility: Full code Apache-2.0, weights on Hugging Face

- Community ecosystem: ComfyUI workflows, trainers, and third-party demos

- Production quality: High fidelity, up to 4K/50 FPS with LTX-2

- Low VRAM options: Runs on 8GB GPUs with quantization

Cons

- High VRAM for full models: 13B variants need more memory without distillation

- Setup complexity: Requires Python/CUDA environment and dependencies

- Prompt sensitivity: Best results need long descriptive prompts

- Occasional inconsistencies: May not perfectly match complex prompts

- Limited hosted demos: Primarily local; online via third-parties like Fal.ai

- Audio only in LTX-2: Earlier versions (0.9.x) are visual-only

- Hardware dependent: Optimal on NVIDIA GPUs with CUDA 12.2+

Use Cases

- Content creation: Generate short videos for social media, ads, or marketing

- Film prototyping: Storyboard scenes, animate concepts, or pre-vis shots

- Game cinematics: Create trailers or in-game cutscenes from keyframes

- Educational videos: Animate explanations with synchronized narration

- Video editing: Extend clips, apply transformations, or add effects

- AI research: Fine-tune with the community trainer for custom styles

- Professional workflows: Integrate via API or ComfyUI for batch generation

Target Audience

- AI creators and hobbyists: Experimenting with open-source video gen

- Filmmakers and animators: Prototyping scenes without heavy rendering

- Marketers and advertisers: Quick video assets for campaigns

- Game developers: Generating cinematics or procedural clips

- Researchers: Studying or extending video diffusion models

- Developers: Integrating into apps via Diffusers/ComfyUI

How To Use

- Clone repo: git clone https://github.com/Lightricks/LTX-Video.git and cd LTX-Video

- Setup env: python -m venv env; source env/bin/activate; pip install -e ".[inference-script]" (quoted so shells like zsh don't expand the brackets)

- Download weights: Get model from Hugging Face (e.g., ltxv-13b-0.9.8-distilled)

- Run inference: python inference.py --prompt "PROMPT" --input_image_path IMAGE.jpg --height 704 --width 1216 --num_frames 17 --seed 42

- Use ComfyUI: Install ComfyUI-LTXVideo nodes for visual workflow

- Customize: Adjust config yaml for distilled/quantized modes or extensions

- Train LoRA: Use the community trainer repo for fine-tuning on custom data
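If you script the inference step, it helps to build the command as an argument list rather than a single string. This sketch uses only the flags from the `inference.py` command shown above; the divisibility checks encode an assumed constraint (sizes divisible by 32, frame counts of the form 8n + 1), and flag names may differ between repository releases, so check the README of the version you cloned.

```python
import shlex


def build_inference_cmd(prompt: str, image_path: str, height: int = 704,
                        width: int = 1216, num_frames: int = 17, seed: int = 42) -> list[str]:
    """Assemble the inference.py invocation from the steps above as an argv list."""
    if height % 32 or width % 32:
        raise ValueError("height and width should be multiples of 32 (assumed constraint)")
    if (num_frames - 1) % 8:
        raise ValueError("num_frames should have the form 8n + 1 (assumed constraint)")
    return [
        "python", "inference.py",
        "--prompt", prompt,
        "--input_image_path", image_path,
        "--height", str(height),
        "--width", str(width),
        "--num_frames", str(num_frames),
        "--seed", str(seed),
    ]


cmd = build_inference_cmd("A red fox trotting through fresh snow", "IMAGE.jpg")
print(shlex.join(cmd))  # shell-safe rendering of the command
# To run it from inside the LTX-Video checkout:
# import subprocess; subprocess.run(cmd, check=True)
```

Passing a list (instead of one string with `shell=True`) keeps quotes and spaces in the prompt from breaking the command.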

How we rated LTX-Video

- Performance: 4.6/5

- Accuracy: 4.5/5

- Features: 4.8/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.3/5

- Customization: 4.7/5

- Data Privacy: 5.0/5

- Support: 4.4/5

- Integration: 4.7/5

- Overall Score: 4.7/5
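The page does not say how the overall score is derived. Assuming it is an unweighted mean of the nine category scores, the arithmetic reproduces the published 4.7:

```python
# Category scores as listed above.
ratings = {
    "Performance": 4.6, "Accuracy": 4.5, "Features": 4.8,
    "Cost-Efficiency": 5.0, "Ease of Use": 4.3, "Customization": 4.7,
    "Data Privacy": 5.0, "Support": 4.4, "Integration": 4.7,
}

# Unweighted mean (an assumption about the methodology).
overall = sum(ratings.values()) / len(ratings)
print(f"{overall:.2f} -> {overall:.1f}")  # 4.67 -> 4.7
```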

LTX-Video integration with other tools

- Diffusers Library: Official support for seamless inference and pipeline usage in Python scripts

- ComfyUI: Dedicated nodes and workflows via ComfyUI-LTXVideo for visual node-based generation

- Hugging Face: Model weights, spaces, and community demos hosted for easy access and testing

- Third-Party Platforms: Online inference via Fal.ai, Replicate, and LTX Studio for no-setup trials

- LoRA Trainer: Community repo for fine-tuning and custom adapters on personal datasets
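For the Diffusers route, a minimal text-to-video sketch looks roughly like this. It assumes the `LTXPipeline` class, the `Lightricks/LTX-Video` Hub repository, and `diffusers.utils.export_to_video`, with settings modeled on the Diffusers documentation example as we recall it; newer checkpoints may need different pipeline classes, so treat the values as a starting point. The heavy imports live inside `generate()` so the settings helper can be inspected without a GPU stack.

```python
def ltx_settings(prompt: str, width: int = 704, height: int = 480,
                 num_frames: int = 161, steps: int = 50) -> dict:
    """Keyword arguments for an LTX text-to-video call (illustrative values)."""
    return {
        "prompt": prompt,
        "negative_prompt": "worst quality, inconsistent motion, blurry, jittery, distorted",
        "width": width,              # divisible by 32
        "height": height,            # divisible by 32
        "num_frames": num_frames,    # 8n + 1
        "num_inference_steps": steps,
    }


def generate(prompt: str, out_path: str = "output.mp4") -> None:
    """Download the weights, run the pipeline on a CUDA GPU, and save an MP4."""
    # Imported lazily so ltx_settings() works without torch/diffusers installed.
    import torch
    from diffusers import LTXPipeline
    from diffusers.utils import export_to_video

    pipe = LTXPipeline.from_pretrained("Lightricks/LTX-Video", torch_dtype=torch.bfloat16)
    pipe.to("cuda")  # requires an NVIDIA GPU with enough VRAM
    frames = pipe(**ltx_settings(prompt)).frames[0]
    export_to_video(frames, out_path, fps=24)


# generate("A cinematic drone shot over a misty mountain valley at sunrise")
```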

Best prompts optimised for LTX-Video

- A cinematic drone shot flying over a misty mountain valley at golden hour sunrise, dramatic lighting, volumetric fog, realistic physics, high detail, synchronized ambient nature sounds and wind

- Anime-style cyberpunk city chase scene with neon lights and rain, fast camera tracking a speeding motorcycle, dynamic motion blur, dramatic music and rain effects, 30 FPS

- Slow-motion macro shot of water droplets falling on fresh flower petals in morning dew, soft bokeh background, gentle lighting, relaxing ambient rain and nature sounds

- Futuristic robot assembly line in high-tech factory, precise mechanical movements, sparks and lights, industrial camera pan, synchronized factory hum and metallic clanks

- Fantasy wizard casting spell in ancient library, glowing runes and floating books, epic camera orbit, magical chimes and ethereal music, high fantasy style
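Since the best results come from long, descriptive prompts, the examples above share a pattern: subject and action, then lighting, style, and sound cues. The hypothetical helper below (not part of LTX-Video) assembles prompts from those parts; with the right pieces it reproduces the first prompt above verbatim.

```python
def build_prompt(subject: str, camera: str = "", lighting: str = "",
                 style: str = "", audio: str = "") -> str:
    """Join the non-empty prompt components with commas (hypothetical helper)."""
    # Sound cues are only useful with an audio-capable model such as LTX-2.
    parts = [subject, camera, lighting, style, audio]
    return ", ".join(p.strip() for p in parts if p.strip())


prompt = build_prompt(
    subject="A cinematic drone shot flying over a misty mountain valley at golden hour sunrise",
    lighting="dramatic lighting, volumetric fog",
    style="realistic physics, high detail",
    audio="synchronized ambient nature sounds and wind",
)
print(prompt)  # identical to the first example prompt above
```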
