What is MedGemma?

MedGemma is Google’s collection of open generative models based on Gemma 3, optimized for medical text and image comprehension to accelerate healthcare AI development.

When was MedGemma released?

Initial versions launched in May 2025. MedGemma 1.5 4B followed on January 13, 2026, adding support for high-dimensional imaging such as CT, MRI, and histopathology.

Is MedGemma free to use?

Yes, the model weights can be downloaded from Hugging Face at no cost and used for research and commercial purposes, subject to the Health AI Developer Foundations terms that must be accepted before download.

What model sizes are available in MedGemma?

Variants include MedGemma 4B multimodal (efficient), MedGemma 27B text-only, and MedGemma 27B multimodal for complex tasks.

What medical tasks does MedGemma support?

It handles medical image interpretation (radiology, pathology), report generation, EHR understanding, clinical QA, triaging, and structured data extraction.

Can MedGemma be used clinically?

No, it is a developer foundation model requiring validation, adaptation, and verification; not for direct clinical diagnosis or patient care.

How many downloads has MedGemma had?

MedGemma has seen millions of downloads and hundreds of community variants on Hugging Face since release.

Where can I download MedGemma?

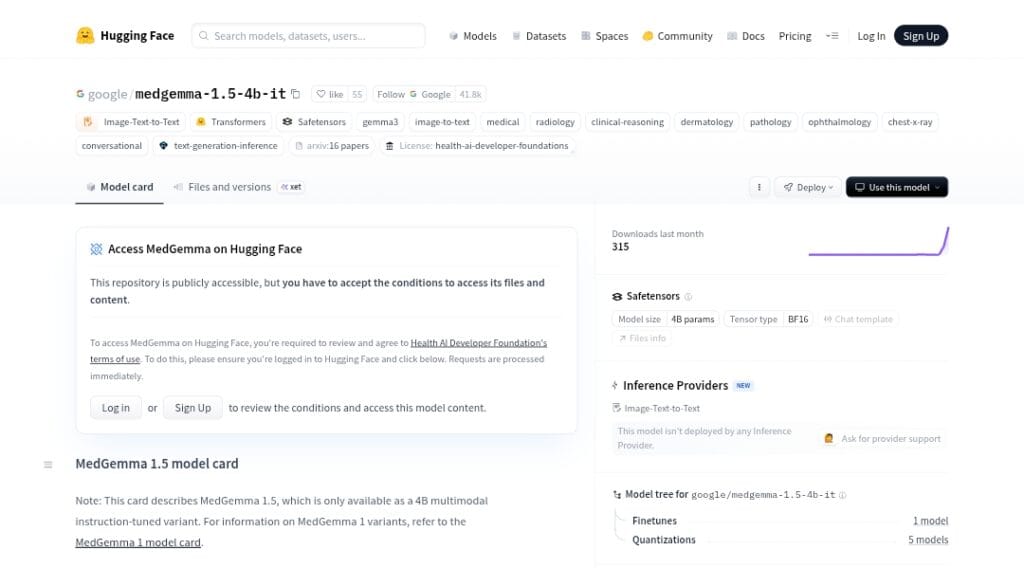

Models are available on Hugging Face (google/medgemma variants) and Google Cloud Vertex AI for scaling.

MedGemma

About This AI

MedGemma is a collection of open generative models from Google DeepMind, built on the Gemma 3 architecture and optimized for medical text and image understanding.

It includes variants like MedGemma 4B multimodal (text and image inputs), MedGemma 27B text-only, and MedGemma 27B multimodal, with updates such as MedGemma 1.5 4B for high-dimensional imaging (CT, MRI, histopathology, longitudinal chest X-rays).

Capabilities cover medical image interpretation (radiology, pathology, dermatology, ophthalmology), report generation, clinical reasoning, EHR understanding, structured data extraction from lab reports, question answering, triaging, summarization, and agentic orchestration.

MedGemma retains general Gemma 3 abilities while excelling at medical tasks through specialized training on de-identified medical data. Its multimodal variants use MedSigLIP, a medically tuned SigLIP vision encoder.

Released in phases starting in May 2025 (initial 4B/27B), with MedGemma 1.5 following in January 2026, it is open for research and commercial use under the Health AI Developer Foundations terms.

Models are available on Hugging Face and Vertex AI for fine-tuning, scaling, and deployment, with millions of downloads and hundreds of community variants reported.

Intended as a developer starting point for healthcare AI applications (not direct clinical use without validation), it supports privacy-preserving development and acceleration of medical research.

Key Features

- Multimodal medical comprehension: Processes text and images (chest X-rays, CT, MRI, histopathology, dermatology) for interpretation and reasoning

- High-dimensional imaging support: Handles 3D/4D scans, longitudinal analysis, and anatomical localization in MedGemma 1.5 4B

- Clinical report generation: Produces structured radiology/pathology reports from images

- EHR and document understanding: Extracts insights from electronic health records, lab reports, and FHIR data

- Medical question answering: Answers preclinical, triaging, and decision-support queries

- Agentic capabilities: Supports orchestration for multi-step medical workflows

- MedSigLIP vision encoder: Medically tuned image encoder for improved understanding of medical images

- General capabilities retention: Maintains non-medical reasoning, instruction-following, and multilingual support

- Fine-tuning ready: Optimized for adaptation on custom medical datasets with low compute

- Open deployment options: Downloadable weights for local use or scaling on Vertex AI

Price Plans

- Free ($0): Open-weight models downloadable from Hugging Face for research and commercial use with no fees, subject to the Health AI Developer Foundations terms; fine-tuning and deployment run on your own hardware

- Vertex AI (Paid, usage-based): Cloud scaling, fine-tuning, and hosting on Google Cloud Vertex AI with pay-per-use pricing

Pros

- Strong medical performance: Outperforms similar-sized models on multimodal medical benchmarks and approaches specialized state-of-the-art models

- Open and accessible: Fully open for research/commercial use with millions of downloads and community variants

- Efficient variants: 4B size enables compute-friendly fine-tuning and deployment

- Privacy control: Developers retain full control over data and infrastructure

- Versatile applications: Supports imaging, EHR, QA, summarization, and agentic tools

- Rapid updates: Iterations like 1.5 add advanced imaging and longitudinal support

- General skills preserved: Handles mixed medical/non-medical tasks effectively

Cons

- Not clinical-grade: Requires validation, adaptation, and verification for real healthcare use

- Potential inaccuracies: Outputs may be preliminary or erroneous without fine-tuning

- Hardware needs: Larger 27B variant requires significant compute for inference

- Validation required: Developers must test rigorously on intended use cases

- Limited direct deployment: Best as foundation; not plug-and-play for production without customization

- Regulatory considerations: Not intended for direct patient management without oversight

- Recent release: Some advanced features still evolving with community feedback
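To put the hardware point in rough numbers, the sketch below estimates memory for the model weights alone as parameter count times bytes per parameter. The 4 and 27 billion figures are the nominal sizes from the variant names, not exact parameter counts, and the estimate ignores activations, the KV cache, and framework overhead, so real requirements will be higher.

```python
# Rough weight-only memory estimate: parameters x bytes per parameter.
# Assumption: nominal sizes (4B, 27B) taken from the variant names.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params_billion: float, dtype: str) -> float:
    """Return approximate weight memory in GB (1 GB = 1e9 bytes)."""
    return params_billion * BYTES_PER_PARAM[dtype]


for name, size in [("MedGemma 4B", 4.0), ("MedGemma 27B", 27.0)]:
    for dtype in ("bf16", "int4"):
        print(f"{name} in {dtype}: ~{weight_memory_gb(size, dtype):.1f} GB")
```

Under these assumptions the 27B weights need about 54 GB in bf16 against about 8 GB for the 4B model, which is why the smaller variant is the practical choice for a single consumer GPU.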

Use Cases

- Medical image analysis: Interpret radiology, pathology, and multi-modal scans for classification and reporting

- Clinical decision support: Assist with triaging, summarization, and question answering from EHRs

- Healthcare app development: Build patient interviewing, pre-visit prep, or radiology explainers

- Research acceleration: Fine-tune for specialized medical tasks or agentic workflows

- Educational tools: Create interactive learning aids for medical students (e.g., CXR interpretation)

- Structured data extraction: Convert unstructured lab reports or notes into JSON/FHIR formats

- Longitudinal monitoring: Analyze serial imaging like chest X-rays over time
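As an illustration of the structured data extraction use case, the sketch below maps one already-extracted lab result to a minimal FHIR Observation resource. The input keys (test_name, value, unit, ref_low, ref_high) are a hypothetical schema chosen for this example, not a format MedGemma is documented to emit, and the result carries no LOINC coding and is not validated against a FHIR server.

```python
import json


def to_fhir_observation(lab: dict) -> dict:
    """Map one extracted lab result to a minimal FHIR Observation dict."""
    unit = lab["unit"]
    observation = {
        "resourceType": "Observation",
        # "preliminary" because model-extracted values are unverified.
        "status": "preliminary",
        "code": {"text": lab["test_name"]},
        "valueQuantity": {"value": float(lab["value"]), "unit": unit},
    }
    # Add a reference range only when at least one bound was extracted.
    reference = {}
    if lab.get("ref_low") is not None:
        reference["low"] = {"value": float(lab["ref_low"]), "unit": unit}
    if lab.get("ref_high") is not None:
        reference["high"] = {"value": float(lab["ref_high"]), "unit": unit}
    if reference:
        observation["referenceRange"] = [reference]
    return observation


# Hypothetical extracted row, for illustration only.
example = {"test_name": "Hemoglobin", "value": "13.5", "unit": "g/dL",
           "ref_low": 12.0, "ref_high": 16.0}
print(json.dumps(to_fhir_observation(example), indent=2))
```

Marking the status as preliminary keeps the resource consistent with the point that model output needs human verification before clinical use.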

Target Audience

- Healthcare AI developers: Building custom medical apps or tools with vision-language models

- Medical researchers: Experimenting with multimodal analysis in radiology, pathology, etc.

- Health tech startups: Creating diagnostic aids, triage systems, or EHR analyzers

- Academic institutions: Studying medical AI, fine-tuning for specific domains

- Enterprises in life sciences: Scaling models on cloud for internal R&D

- Clinicians and educators: Developing training or decision-support prototypes

How To Use

- Access models: Visit deepmind.google/models/gemma/medgemma or Hugging Face for downloads

- Agree to terms: Review and accept Health AI Developer Foundations terms on Hugging Face

- Download weights: Get MedGemma variants (4B/27B) from the Hugging Face repos

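- Load in code: Use the transformers library (e.g., AutoModelForImageTextToText.from_pretrained('google/medgemma-4b-it') with AutoProcessor for the multimodal 4B model; AutoModelForCausalLM suits the text-only 27B variant)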

- Prompt examples: Input medical images/text for interpretation, report generation, or QA

- Fine-tune: Adapt on custom datasets using provided notebooks or Vertex AI

- Deploy: Run locally, on cloud (Vertex AI), or integrate into apps
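The load and prompt steps above can be sketched with the transformers image-text-to-text pipeline. This is a minimal sketch, assuming recent versions of transformers, torch, and accelerate are installed, the terms have been accepted, and the machine is logged in to Hugging Face. The helper names load_pipeline and ask, and the file name in the usage comment, are made up for this example.

```python
MODEL_ID = "google/medgemma-4b-it"  # repo name from the steps above


def load_pipeline(model_id: str = MODEL_ID):
    """Create the pipeline; downloads the gated weights on first use."""
    # Imported lazily so this module loads without the heavy dependencies.
    import torch
    from transformers import pipeline

    return pipeline(
        "image-text-to-text",
        model=model_id,
        torch_dtype=torch.bfloat16,
        device_map="auto",  # requires the accelerate package
    )


def ask(pipe, image, question: str, max_new_tokens: int = 300) -> str:
    """Send one image plus a question and return the model's reply text."""
    messages = [
        {"role": "user", "content": [
            {"type": "image", "image": image},  # e.g. a PIL.Image
            {"type": "text", "text": question},
        ]}
    ]
    output = pipe(text=messages, max_new_tokens=max_new_tokens)
    # The pipeline returns the whole chat; the last turn is the reply.
    return output[0]["generated_text"][-1]["content"]


# Usage (hypothetical file name; needs a GPU and a Hugging Face login):
# from PIL import Image
# pipe = load_pipeline()
# print(ask(pipe, Image.open("chest_xray.png"), "Describe this X-ray."))
```

Keeping the prompt logic in ask, separate from model loading, makes it possible to test the prompt handling with a stub before committing GPU time.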

How we rated MedGemma

- Performance: 4.7/5

- Accuracy: 4.6/5

- Features: 4.8/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.4/5

- Customization: 4.8/5

- Data Privacy: 4.9/5

- Support: 4.5/5

- Integration: 4.7/5

- Overall Score: 4.7/5

MedGemma integration with other tools

- Hugging Face: Model weights and inference pipelines for easy download and community fine-tuning

- Vertex AI (Google Cloud): Scalable fine-tuning, deployment, and hosting for production healthcare apps

- Transformers Library: Direct integration via Hugging Face transformers for Python-based workflows

- Agentic Frameworks: Supports orchestration in LangChain or similar for multi-step medical agents

- Custom EHR Systems: Potential for FHIR-compatible integration in healthcare software

Best prompts optimized for MedGemma

- Interpret this chest X-ray image: describe findings, possible diagnoses, and recommendations in a structured radiology report format

- Analyze the attached MRI scan of the brain: identify abnormalities, localize lesions, and suggest differential diagnoses

- Summarize this de-identified EHR discharge note: extract key diagnoses, medications, follow-ups, and action items

- Extract structured JSON data from this lab report image: include test names, values, units, and reference ranges

- Answer this clinical question based on the provided histopathology slide image: is this consistent with melanoma?
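Prompts that ask for structured JSON, such as the lab report example above, still need defensive parsing on the receiving side. The sketch below assumes the reply may wrap the JSON in a Markdown code fence and that each row should carry the four fields named in the prompt. The key names are hypothetical, since the model's exact output format is not specified here.

```python
import json
import re

# Hypothetical field names matching the lab-report prompt above.
REQUIRED_KEYS = {"test_name", "value", "unit", "reference_range"}
FENCE = "`" * 3  # Markdown code-fence marker


def parse_lab_json(reply: str) -> list:
    """Extract and check a list of lab rows from a model reply."""
    # Use the fenced body if the reply has one, else the whole reply.
    pattern = FENCE + r"(?:json)?\s*(.*?)" + FENCE
    match = re.search(pattern, reply, flags=re.DOTALL)
    payload = match.group(1) if match else reply
    data = json.loads(payload)  # raises json.JSONDecodeError on bad JSON
    rows = data if isinstance(data, list) else [data]
    for row in rows:
        missing = REQUIRED_KEYS - set(row)
        if missing:
            raise ValueError(f"missing keys: {sorted(missing)}")
    return rows


# Made-up reply, for illustration only.
sample = (
    "Here is the data:\n" + FENCE + "json\n"
    '[{"test_name": "Glucose", "value": 92, "unit": "mg/dL", '
    '"reference_range": "70-99"}]\n' + FENCE
)
print(parse_lab_json(sample))
```

Failing loudly on malformed or incomplete rows is deliberate: silently accepting partial output would hide exactly the kind of error that validation is meant to catch.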

