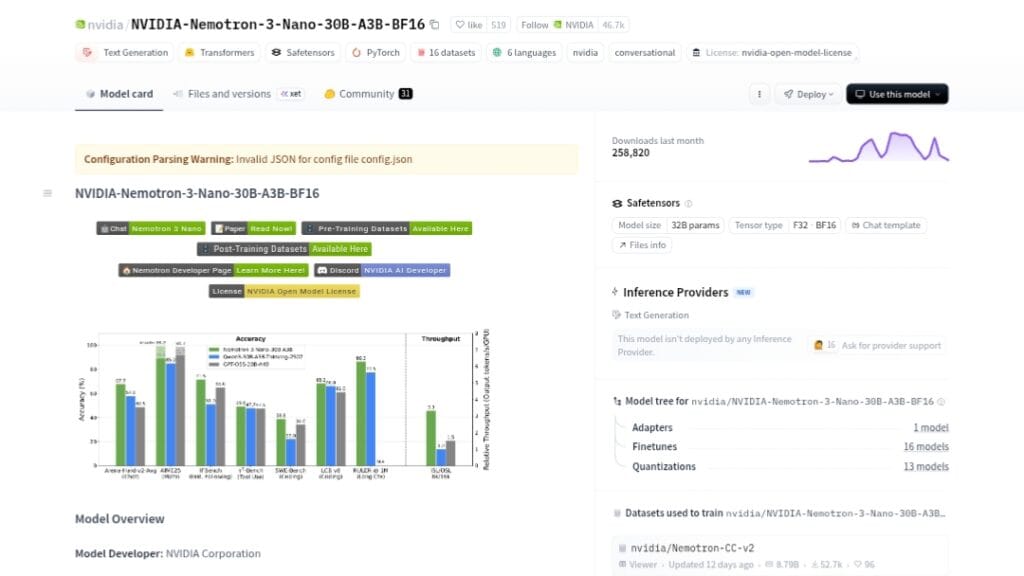

What is Nemotron-3-Nano-30B-A3B?

Nemotron-3-Nano-30B-A3B is NVIDIA’s open-source 31.6B MoE model (3.6B active) optimized for efficient reasoning, agentic tasks, long-context (1M tokens), coding, and chat with hybrid Mamba-Transformer architecture.

When was Nemotron-3-Nano released?

It was released on December 15, 2025, with BF16/FP8 variants; NVFP4 ultra-efficient version followed in late January 2026.

Is Nemotron-3-Nano free to use?

Yes, it’s fully open-source under NVIDIA Open Model License with weights, data, and recipes available on Hugging Face; run locally or via free API tiers.

What are the key benchmarks for Nemotron-3-Nano?

It excels in AIME25 (99.2% with tools), LiveCodeBench (68.3%), GPQA, Arena-Hard-v2, and RULER long-context, often outperforming Qwen3-30B and GPT-OSS-20B.

What hardware is needed for Nemotron-3-Nano?

High-end NVIDIA GPUs (H200/B200 optimal) for real-time/high-throughput; NVFP4 variant boosts efficiency on Blackwell up to 4x.

Does Nemotron-3-Nano support tool calling?

Yes, it features native tool calling, multi-step agentic workflows, and reasoning traces for complex tasks like RAG and automation.

What is the context length of Nemotron-3-Nano?

It supports up to 1 million tokens with strong long-range retention on RULER benchmarks, ideal for massive documents or codebases.

How does Nemotron-3-Nano compare to Qwen3-30B?

It often surpasses Qwen3-30B in reasoning (AIME, LiveCodeBench), agentic performance, long-context, and inference speed while being open-source.