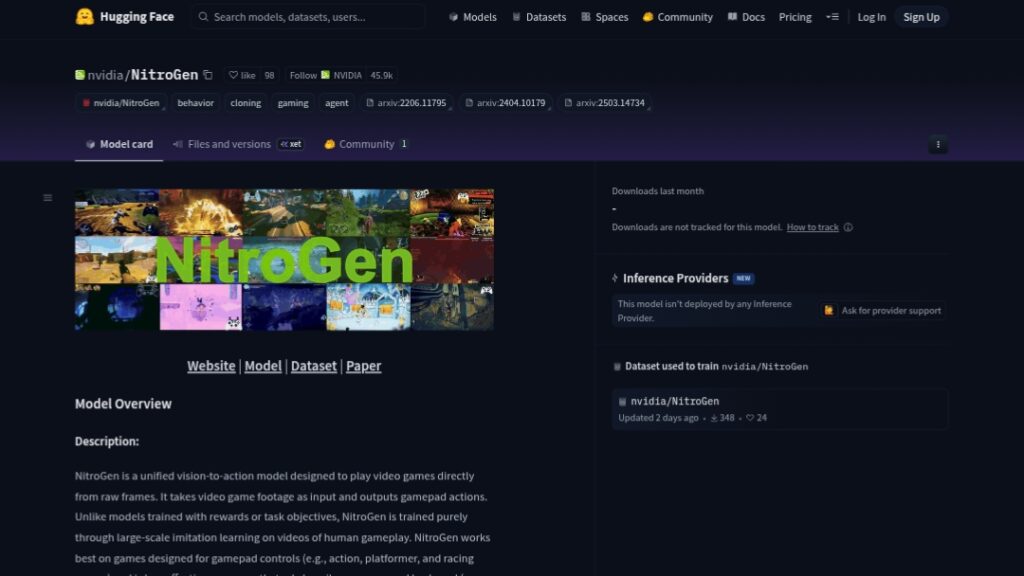

What is NitroGen?

NitroGen is an open-source vision-to-action foundation model from NVIDIA that plays over 1000 diverse video games directly from raw pixel frames by predicting gamepad actions.

When was NitroGen released?

NitroGen was released on December 19, 2025, with model weights, dataset, code, and paper made publicly available.

Is NitroGen free to use?

Yes. NitroGen is free to use for research: the weights are on Hugging Face and the code is on GitHub, both released under NVIDIA’s non-commercial license.

How was NitroGen trained?

It uses large-scale behavior cloning on 40,000 hours of internet gameplay videos across 1000+ games, without rewards or game-specific APIs.

What games does NitroGen play best?

It excels at gamepad-controlled genres such as action games, platformers, racing games, RPGs, and battle royales; it is weaker on mouse-and-keyboard-heavy genres such as RTS games and MOBAs.

How can I run NitroGen?

Clone the GitHub repo, install the dependencies, download the checkpoint from Hugging Face, start the inference server, and run the agent against a Windows game executable.

What license does NitroGen use?

NitroGen is governed by the NVIDIA One-Way Noncommercial License, while the SigLIP 2 backbone is licensed under Apache 2.0. The model is intended strictly for research and other non-commercial use.

What are NitroGen’s implications beyond gaming?

Skills learned from games can transfer to embodied AI, robotics simulation, autonomous systems training, and real-world control tasks.

NitroGen

About This AI

NitroGen is an open-source unified vision-to-action foundation model developed by NVIDIA researchers in collaboration with Stanford, Caltech, UChicago, UT Austin, and others.

It enables generalist gaming agents to play a wide variety of commercial video games directly from raw RGB video frames by predicting gamepad actions through large-scale behavior cloning.

Trained on 40,000 hours of internet-scale gameplay videos spanning more than 1,000 diverse titles, it learns to handle action, platformer, racing, RPG, battle royale, 2D/3D games, and more without game-specific APIs or reward signals.

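The model takes 256×256 RGB inputs and outputs a 21×16 action tensor. Each of the 21 rows is one control value (two continuous 2D joystick vectors, which account for 4 values, plus 17 binary buttons); the 16 columns presumably correspond to a chunk of 16 consecutive timesteps.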
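As a minimal sketch of what consuming such an output might look like, the snippet below splits a 21×16 chunk into per-step gamepad actions. The row order (4 joystick axes first, then 17 buttons), the reading of the 16 columns as timesteps, the 0.5 button threshold, and the names `GamepadAction` and `decode_chunk` are all assumptions made for illustration; they are not taken from the NitroGen code.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

ACTION_DIM = 21    # 2 joysticks x 2 axes + 17 buttons (from the description above)
HORIZON = 16       # assumed: number of timesteps predicted per chunk
NUM_BUTTONS = 17


@dataclass
class GamepadAction:
    """One timestep of gamepad input (hypothetical container)."""
    left_stick: Tuple[float, float]
    right_stick: Tuple[float, float]
    buttons: List[bool]


def decode_chunk(chunk: Sequence[Sequence[float]],
                 threshold: float = 0.5) -> List[GamepadAction]:
    """Split a 21x16 chunk (rows = action dims, columns = timesteps)."""
    if len(chunk) != ACTION_DIM or any(len(row) != HORIZON for row in chunk):
        raise ValueError(f"expected a {ACTION_DIM}x{HORIZON} chunk")
    actions = []
    for t in range(HORIZON):
        # Gather all 21 values that belong to timestep t.
        column = [chunk[d][t] for d in range(ACTION_DIM)]
        actions.append(GamepadAction(
            left_stick=(column[0], column[1]),    # assumed layout
            right_stick=(column[2], column[3]),   # assumed layout
            buttons=[value > threshold for value in column[4:]],
        ))
    return actions


# Usage: an all-zero chunk with one stick push and one button press at t = 0.
chunk = [[0.0] * HORIZON for _ in range(ACTION_DIM)]
chunk[0][0] = 1.0   # left stick, first axis
chunk[4][0] = 0.9   # first button (index is illustrative)
first = decode_chunk(chunk)[0]
print(first.left_stick, sum(first.buttons))
```

Thresholding the button values is one simple way to turn continuous model outputs into binary presses; the real model may already emit discrete values.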

The architecture combines a SigLIP 2 Vision Transformer backbone with a Diffusion Transformer (DiT) for action prediction.

It shows strong zero-shot competence in complex tasks such as combat, navigation, high-precision platforming, and exploration. When fine-tuned on unseen games, it achieves up to a 52 percent relative improvement in task success rate over models trained from scratch.
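A relative improvement is measured against the baseline's own success rate, so it is not the same as a gain of 52 percentage points. The short sketch below shows the arithmetic; the 25% and 38% success rates are made-up numbers chosen only to produce a 52% relative gain, not results from the paper.

```python
def relative_improvement(baseline: float, finetuned: float) -> float:
    """Return the gain of `finetuned` over `baseline` as a fraction of `baseline`."""
    if baseline <= 0:
        raise ValueError("baseline success rate must be positive")
    return (finetuned - baseline) / baseline


# Hypothetical success rates, for illustration only.
scratch_rate = 0.25      # model trained from scratch
finetuned_rate = 0.38    # pretrained model after fine-tuning

gain = relative_improvement(scratch_rate, finetuned_rate)
print(f"relative improvement: {gain:.0%}")
print(f"absolute improvement: {finetuned_rate - scratch_rate:.0%} points")
```

In this illustration the absolute gain is only 13 percentage points, even though the relative gain is 52%.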

The December 2025 release includes the full weights on Hugging Face, the dataset, a universal Gymnasium API simulator for commercial games, an evaluation suite, and the code on GitHub, all under NVIDIA’s non-commercial license (the backbone is Apache 2.0).

Ideal for advancing embodied AI research, game testing automation, NPC behavior training, and transferring skills to robotics or real-world simulation.

As an open model, it promotes progress in generalist agents beyond narrow RL-trained bots.

Key Features

- Vision-to-action prediction: Maps raw 256×256 RGB game frames directly to gamepad actions (joysticks and buttons)

- Large-scale imitation learning: Trained purely via behavior cloning on 40,000 hours of unlabeled internet gameplay videos

- Multi-game generalization: Zero-shot competence across 1000+ titles in diverse genres (action, platformer, racing, RPG, etc.)

- High-precision control: Handles fine movements in 2D platformers and complex decision-making in 3D games

- Strong transfer learning: Up to 52 percent relative success rate improvement when fine-tuned on unseen games

- Universal simulator API: Gymnasium wrapper to run commercial games with agent control for evaluation

- Open dataset and eval suite: 40k-hour action-annotated videos plus multi-task benchmark across 10+ games

- Efficient architecture: SigLIP 2 ViT backbone + Diffusion Transformer (DiT) for scalable inference

- Research-focused release: Full weights, code, dataset, and paper available for community advancement

Price Plans

- Free ($0): Fully open-source model weights, code, dataset, and tools available on Hugging Face and GitHub under NVIDIA non-commercial license; no usage fees for research purposes

- Commercial (Not Available): License restricts commercial use; contact NVIDIA for potential enterprise licensing

Pros

- Groundbreaking generalization: First open model to play 1000+ diverse games from pixels alone

- Internet-scale training: Leverages massive public gameplay data without expensive human demos

- Strong transfer performance: Significant gains when fine-tuned on new titles compared to scratch baselines

- Fully open ecosystem: Weights, dataset, code, simulator, and eval suite released for research

- Embodied AI potential: Skills transferable to robotics and real-world simulation domains

- Non-commercial license clarity: Enables broad academic and open-source experimentation

Cons

- Non-commercial license: Restricted to research/non-commercial use per NVIDIA terms

- Gamepad focus limitation: Best on controller games; weaker on mouse/keyboard-heavy titles (RTS/MOBA)

- Hardware demands: Inference requires capable GPU for real-time or large-scale use

- Setup complexity: Local deployment needs Git clone, pip install, checkpoint download, and game wrappers

- No hosted demo: No simple web interface; requires technical setup to run

- Early-stage maturity: Released December 2025; community integrations and fine-tuning examples are still emerging

- Potential noise in data: Trained on unfiltered internet videos which may include suboptimal play

Use Cases

- Game AI research: Benchmarking generalist agents across diverse titles and tasks

- Game development testing: Automate playtesting, bug hunting, or NPC behavior prototyping

- Embodied AI transfer: Adapt gaming skills to robotics simulation or real-world control

- Autonomous agent training: Fine-tune on specific games for stronger performance

- Educational simulations: Create interactive game-based learning environments

- Procedural content evaluation: Test AI in generated or varied game worlds

Target Audience

- AI researchers in embodied intelligence: Studying generalist agents and imitation learning

- Game developers and studios: Exploring AI for testing, NPCs, or procedural generation

- Robotics and simulation engineers: Transferring pixel-to-action skills to physical domains

- Academic institutions: Using open dataset and model for courses/projects

- Open-source AI community: Fine-tuning or extending the foundation model

How To Use

- Clone repository: git clone https://github.com/MineDojo/NitroGen.git and cd NitroGen

- Install dependencies: pip install -e . to set up environment

- Download checkpoint: hf download nvidia/NitroGen ng.pt (or fetch ng.pt manually from the Hugging Face model page)

- Start inference server: python scripts/serve.py path/to/ng.pt

- Prepare game: Make sure the game is running and its window is visible for frame capture

- Run agent on game: python scripts/play.py --process 'game.exe' (Windows titles)

- Monitor output: Observe agent actions and performance in real time
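The steps above can be sketched as a small Python launcher. The command lines are copied from the list; everything else is an assumption for illustration: the helper names, the `NitroGen` working directory, and running the server in the background while the play script runs. The checkpoint path is left as the `path/to/ng.pt` placeholder because the text does not say where the download ends up.

```python
import shlex
import subprocess
from typing import List, Tuple

REPO_URL = "https://github.com/MineDojo/NitroGen.git"
REPO_DIR = "NitroGen"  # directory created by `git clone` (assumed default name)


def setup_commands() -> List[Tuple[List[str], str]]:
    """One-time setup as (argv, working directory) pairs."""
    return [
        (["git", "clone", REPO_URL], "."),
        (["pip", "install", "-e", "."], REPO_DIR),
        (["hf", "download", "nvidia/NitroGen", "ng.pt"], REPO_DIR),
    ]


def serve_command(checkpoint: str) -> List[str]:
    return ["python", "scripts/serve.py", checkpoint]


def play_command(process_name: str) -> List[str]:
    return ["python", "scripts/play.py", "--process", process_name]


def launch(checkpoint: str, process_name: str, dry_run: bool = True) -> None:
    """Start the inference server, then run the agent against the game."""
    serve, play = serve_command(checkpoint), play_command(process_name)
    if dry_run:
        # Only print what would be executed.
        print(shlex.join(serve))
        print(shlex.join(play))
        return
    # Note: this sketch does not wait for the server to become ready.
    server = subprocess.Popen(serve, cwd=REPO_DIR)
    try:
        subprocess.run(play, cwd=REPO_DIR, check=True)
    finally:
        server.terminate()
        server.wait()


if __name__ == "__main__":
    for argv, cwd in setup_commands():
        print(f"(in {cwd}) {shlex.join(argv)}")
    launch("path/to/ng.pt", "game.exe", dry_run=True)
```

With `dry_run=True` the script only prints the commands, which makes it safe to check the sequence before pointing it at a real game.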

How we rated NitroGen

- Performance: 4.6/5

- Accuracy: 4.5/5

- Features: 4.7/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.0/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.2/5

- Integration: 4.4/5

- Overall Score: 4.6/5

NitroGen integration with other tools

- Hugging Face: Model weights and dataset hosted for easy download and experimentation

- GitHub Repository: Full code, inference scripts, and deployment instructions available

- Gymnasium API: Universal simulator wrapper to run commercial games with agent control

- MineDojo Ecosystem: Ties into broader MineDojo framework for embodied AI research

- Local GPU Setup: Runs natively on NVIDIA hardware with PyTorch/CUDA
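To illustrate what agent control through a Gymnasium-style wrapper could look like, here is a minimal sketch of the interaction loop. It does not import Gymnasium or NitroGen: `DummyGameEnv` is a hypothetical stand-in that only mimics Gymnasium's `reset` and `step` return signatures, and `random_policy` is a placeholder for the model. The 16-step action chunk and the 21-value action layout are assumptions based on the output shape described earlier.

```python
import random
from typing import Dict, List, Tuple

Frame = List[List[Tuple[int, int, int]]]  # H x W grid of RGB pixels
Action = List[float]                      # 21 values: 4 stick axes + 17 buttons


class DummyGameEnv:
    """Hypothetical environment mimicking Gymnasium's reset/step signatures."""

    def __init__(self, max_steps: int = 40, size: int = 256) -> None:
        self.max_steps = max_steps
        self.size = size
        self.steps = 0

    def _frame(self) -> Frame:
        # A black frame stands in for a captured game screenshot.
        return [[(0, 0, 0)] * self.size for _ in range(self.size)]

    def reset(self, seed=None, options=None) -> Tuple[Frame, Dict]:
        self.steps = 0
        return self._frame(), {}

    def step(self, action: Action):
        self.steps += 1
        truncated = self.steps >= self.max_steps  # time limit reached
        return self._frame(), 0.0, False, truncated, {}


def random_policy(frame: Frame, rng: random.Random,
                  horizon: int = 16) -> List[Action]:
    """Placeholder for the model: returns `horizon` random 21-value actions."""
    return [
        [rng.uniform(-1.0, 1.0) for _ in range(4)]
        + [float(rng.random() > 0.5) for _ in range(17)]
        for _ in range(horizon)
    ]


def run_episode(env, policy, seed: int = 0) -> int:
    """Predict a chunk, play it step by step, repeat until the episode ends."""
    rng = random.Random(seed)
    obs, info = env.reset(seed=seed)
    steps, done = 0, False
    while not done:
        for action in policy(obs, rng):
            obs, reward, terminated, truncated, info = env.step(action)
            steps += 1
            done = terminated or truncated
            if done:
                break
    return steps


print(run_episode(DummyGameEnv(max_steps=40), random_policy))
```

The loop ignores the reward value, in keeping with the behavior-cloning setup described on this page; an evaluation harness would typically track task success instead.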

Best prompts optimised for NitroGen

- Not applicable - NitroGen is a vision-to-action gaming agent model that takes raw RGB frames as input and predicts gamepad actions; it does not use text prompts for generation.
