What is NitroGen?

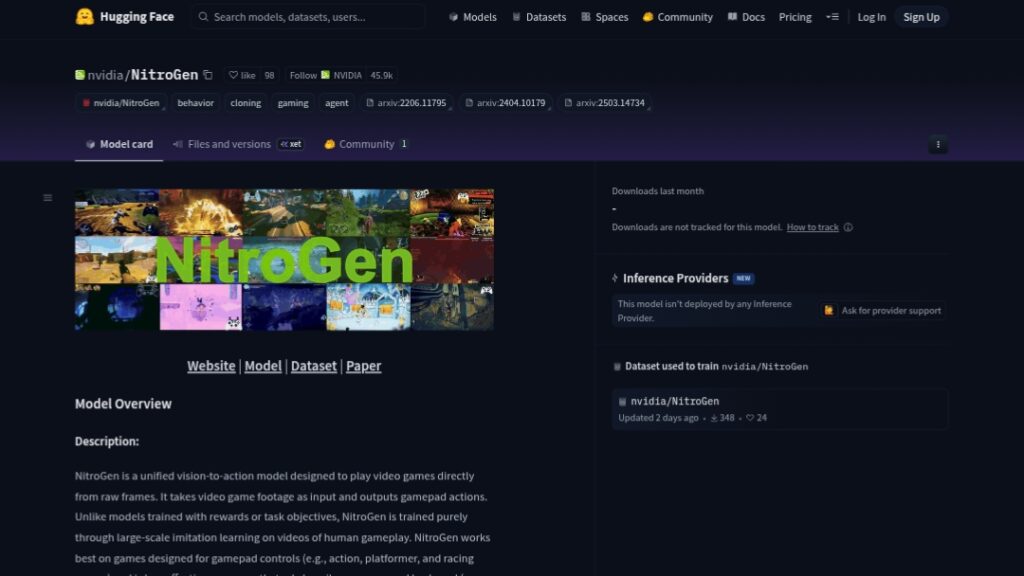

NitroGen is an openly released vision-to-action foundation model from NVIDIA that plays video games directly from raw pixel frames by predicting gamepad actions. It was trained on gameplay from more than 1,000 diverse games.
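
NitroGen outputs controller input rather than keystrokes. The sketch below shows one way such an action could be represented. It assumes a standard controller layout of two analog sticks plus a set of on/off buttons. The button list, the `GamepadAction` class, and the 0.5 button threshold are all hypothetical and are not NitroGen's actual action format. The idea is that each action flattens into a fixed-length vector that a policy network can predict, and a raw model output can be decoded back into a valid action.

```python
from dataclasses import dataclass

# Hypothetical button set for illustration only; NitroGen's real action layout may differ.
BUTTONS = (
    "A", "B", "X", "Y", "LB", "RB", "LT", "RT",
    "START", "BACK", "DPAD_UP", "DPAD_DOWN", "DPAD_LEFT", "DPAD_RIGHT",
)


def _clamp(value: float) -> float:
    """Limit an analog stick axis to the valid range [-1, 1]."""
    return max(-1.0, min(1.0, value))


@dataclass(frozen=True)
class GamepadAction:
    """One controller state: two analog sticks plus the set of held buttons."""
    left_stick: tuple[float, float] = (0.0, 0.0)   # (x, y), each in [-1, 1]
    right_stick: tuple[float, float] = (0.0, 0.0)  # (x, y), each in [-1, 1]
    pressed: frozenset[str] = frozenset()

    def __post_init__(self):
        # Reject out-of-range stick values and buttons not in the layout.
        for axis in (*self.left_stick, *self.right_stick):
            if not -1.0 <= axis <= 1.0:
                raise ValueError(f"stick value {axis} outside [-1, 1]")
        unknown = set(self.pressed) - set(BUTTONS)
        if unknown:
            raise ValueError(f"unknown buttons: {sorted(unknown)}")

    def to_vector(self) -> list[float]:
        """Flatten to 4 stick axes followed by one 0/1 entry per button."""
        sticks = [*self.left_stick, *self.right_stick]
        return sticks + [1.0 if b in self.pressed else 0.0 for b in BUTTONS]

    @classmethod
    def from_vector(cls, vec: list[float], threshold: float = 0.5) -> "GamepadAction":
        """Decode a model output: clamp stick axes and threshold button scores."""
        if len(vec) != 4 + len(BUTTONS):
            raise ValueError(f"expected {4 + len(BUTTONS)} values, got {len(vec)}")
        left = (_clamp(vec[0]), _clamp(vec[1]))
        right = (_clamp(vec[2]), _clamp(vec[3]))
        pressed = frozenset(b for b, s in zip(BUTTONS, vec[4:]) if s >= threshold)
        return cls(left, right, pressed)
```

Clamping and thresholding in `from_vector` let slightly out-of-range network outputs still decode into a legal controller state.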

When was NitroGen released?

NitroGen was released on December 19, 2025, with model weights, dataset, code, and paper made publicly available.

Is NitroGen free to use?

Yes, for research. The weights and code are freely available on Hugging Face and GitHub. However, they are released under NVIDIA's non-commercial license, so use is limited to research and other non-commercial purposes.

How was NitroGen trained?

It was trained with large-scale behavior cloning (imitation learning) on 40,000 hours of publicly available internet gameplay videos covering more than 1,000 games. No reward signals or game-specific APIs were used.
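
Behavior cloning treats imitation as supervised learning. The model sees an observation and is trained to predict the action a human player took, using cross-entropy loss. No reward is involved. The sketch below illustrates this under several assumptions. Observations are small feature vectors rather than video frames. Actions come from a small discrete set. Each observation is already paired with its recorded action; how such labels are obtained from raw videos is outside the sketch. NitroGen itself uses a large vision model. Here a linear softmax classifier (`LinearPolicy`, a hypothetical stand-in) replaces it, and only the training principle is the same.

```python
import math
import random


def softmax(logits: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class LinearPolicy:
    """Hypothetical linear policy: observation features -> probabilities over discrete actions."""

    def __init__(self, n_features: int, n_actions: int):
        self.w = [[0.0] * n_features for _ in range(n_actions)]
        self.b = [0.0] * n_actions

    def probs(self, obs: list[float]) -> list[float]:
        logits = [sum(wi * xi for wi, xi in zip(row, obs)) + bi
                  for row, bi in zip(self.w, self.b)]
        return softmax(logits)

    def act(self, obs: list[float]) -> int:
        """Pick the most probable action."""
        p = self.probs(obs)
        return max(range(len(p)), key=p.__getitem__)


def behavior_cloning(demos, n_features, n_actions, epochs=200, lr=0.1, seed=0):
    """Fit a policy to (observation, recorded_action) pairs with SGD on cross-entropy.
    The recorded action is the only supervision; no reward signal is used."""
    rng = random.Random(seed)
    policy = LinearPolicy(n_features, n_actions)
    data = list(demos)
    for _ in range(epochs):
        rng.shuffle(data)
        for obs, action in data:
            p = policy.probs(obs)
            for k in range(n_actions):
                # Gradient of -log p[action] w.r.t. logit k is p[k] - 1[k == action].
                g = p[k] - (1.0 if k == action else 0.0)
                policy.b[k] -= lr * g
                for j in range(n_features):
                    policy.w[k][j] -= lr * g * obs[j]
    return policy
```

For example, with `demos = [([1.0, 0.0], 0), ([0.0, 1.0], 1)]`, the trained policy learns to choose action 0 when the first feature dominates and action 1 when the second does.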

What games does NitroGen play best?

It performs best on gamepad-controlled genres such as action games, platformers, racing games, RPGs, and battle royales. It is weaker on games that depend heavily on mouse and keyboard input, such as real-time strategy (RTS) games and MOBAs.

How can I run NitroGen?

Clone the GitHub repository and install its dependencies. Then download the model checkpoint from Hugging Face, start the inference server, and run the agent against a Windows game executable.
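
This FAQ does not cover the repository's actual commands, scripts, or server protocol. The sketch below shows only the general shape of the loop these steps set up: the inference server holds the model, and a client on the game side sends each frame and applies the returned action. Everything in it is hypothetical. That includes the JSON message format, `make_policy_server`, `run_agent`, and the toy policy. A real setup would also capture frames from the game window and send inputs through a virtual controller, which this in-process stand-in replaces with plain function calls.

```python
import json
from typing import Callable, Iterable


def make_policy_server(policy: Callable[[list[float]], dict]) -> Callable[[str], str]:
    """Wrap a policy as a request handler: JSON frame in, JSON action out.
    Stands in for a networked inference server (hypothetical protocol)."""
    def handle(request: str) -> str:
        frame = json.loads(request)["frame"]
        return json.dumps({"action": policy(frame)})
    return handle


def run_agent(frames: Iterable[list[float]],
              send: Callable[[str], str],
              apply_action: Callable[[dict], None],
              max_steps: int = 1000) -> int:
    """Observe -> query server -> act, once per frame. Returns the number of steps taken."""
    steps = 0
    for frame in frames:
        if steps >= max_steps:
            break
        reply = json.loads(send(json.dumps({"frame": frame})))
        apply_action(reply["action"])
        steps += 1
    return steps


def toy_policy(frame: list[float]) -> dict:
    """Hypothetical policy: press "A" when the frame's mean brightness exceeds 0.5."""
    bright = sum(frame) / len(frame) > 0.5
    return {"buttons": ["A"] if bright else []}
```

With `applied = []`, calling `run_agent([[0.9, 0.8], [0.1, 0.2]], make_policy_server(toy_policy), applied.append)` takes two steps and records one "A" press followed by an empty action. Keeping the model behind a server boundary means the game-side client stays lightweight, while the model can run in its own environment.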

What license does NitroGen use?

NitroGen is governed by the NVIDIA One-Way Noncommercial License. Its vision backbone, SigLIP 2, is licensed separately under Apache 2.0. The model is intended strictly for research and other non-commercial use.

What are NitroGen’s implications beyond gaming?

Skills learned from games may transfer to embodied AI, robotics simulation, the training of autonomous systems, and real-world control tasks.