What is Olmo 3.1?

Olmo 3.1 is an updated release from the Allen Institute for AI (Ai2). It offers 7B and 32B models in Think (reasoning) and Instruct (chat and tool use) variants, and it is fully open with end-to-end transparency.

When was Olmo 3.1 released?

Olmo 3 launched on November 20, 2025; the Olmo 3.1 update, with new 32B checkpoints, arrived on December 12, 2025.

Is Olmo 3.1 free and open-source?

Yes. The models are completely free under a permissive license, with full weights, data, code, checkpoints, and training details available on Hugging Face.

What are the main variants in Olmo 3.1?

Olmo 3.1 Think 32B (extended RL for reasoning), Olmo 3.1 Instruct 32B (chat and tool use), plus refreshed 7B models and RL Zero variants.

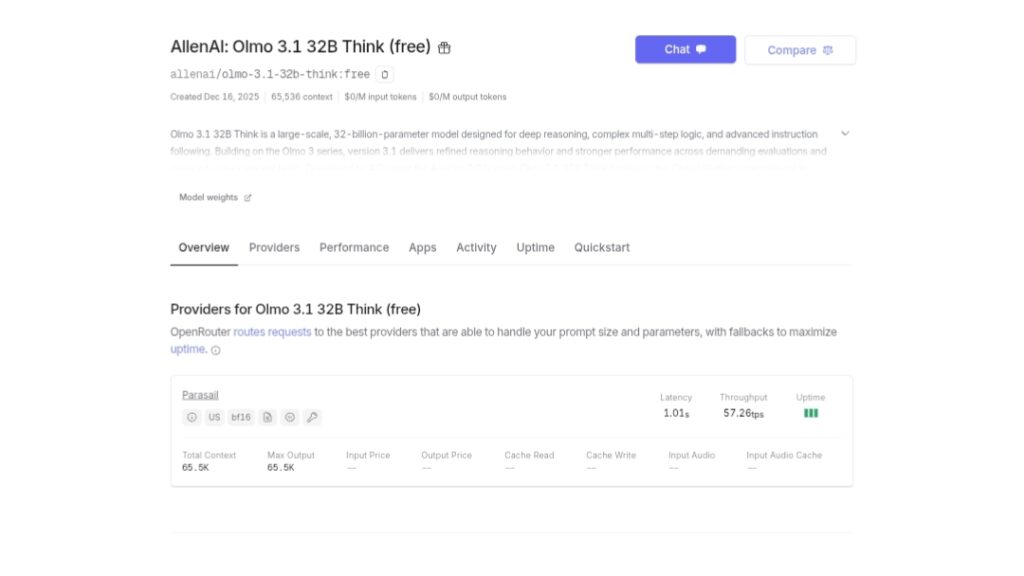

What context length does Olmo 3.1 support?

Up to 65,536 tokens, enabling analysis of long documents or multi-step reasoning without losing context.
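As a rough illustration of working within that window, the sketch below checks whether a prompt plus the planned output fits in 65,536 tokens and splits an over-long token sequence into window-sized chunks. The function names are hypothetical, and token counts are assumed to come from whatever tokenizer you load alongside the model.

```python
CONTEXT_LIMIT = 65_536  # context length stated in the answer above


def fits_in_context(prompt_tokens: int, max_new_tokens: int,
                    limit: int = CONTEXT_LIMIT) -> bool:
    """Return True if the prompt plus the planned output stays within the window."""
    return prompt_tokens + max_new_tokens <= limit


def split_into_windows(token_ids: list[int], window: int) -> list[list[int]]:
    """Split token ids into consecutive chunks of at most `window` tokens."""
    if window <= 0:
        raise ValueError("window must be positive")
    return [token_ids[i:i + window] for i in range(0, len(token_ids), window)]


if __name__ == "__main__":
    print(fits_in_context(prompt_tokens=60_000, max_new_tokens=4_000))
    print([len(chunk) for chunk in split_into_windows(list(range(10)), 4)])
```

Remember that generated tokens count against the same window, so a Think-style model that produces long reasoning traces needs headroom beyond the prompt itself.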

How does Olmo 3.1 compare to other open models?

Olmo 3.1 Think 32B is the strongest fully open reasoning model, and it is competitive with closed systems on math, coding, and instruction-following benchmarks.

Where can I try or download Olmo 3.1?

Download the weights from Hugging Face (the allenai/olmo-3 collection), or test the models in the Ai2 Playground or through API partners such as OpenRouter.

What makes Olmo 3.1 unique?

Full end-to-end openness, including training data provenance, reproducible training flows, and OlmoTrace for tracing outputs back to their training sources.

Olmo 3.1

About This AI

Olmo 3.1 is the updated flagship release from the Allen Institute for AI (Ai2), building on Olmo 3 with enhanced reasoning and instruction-following.

It includes Olmo 3.1 Think 32B (extended RL for superior chain-of-thought reasoning) and Olmo 3.1 Instruct 32B (optimized for chat, tool use, multi-turn dialogue).

The family supports 7B and 32B scales with long-context (up to 65K tokens), strong performance in math, coding, reasoning, function calling, and knowledge recall.

The release is fully open with end-to-end transparency: full training data (the Dolma 3 corpus), code, checkpoints, logs, post-training recipes, and provenance via OlmoTrace.

Olmo 3.1 Think 32B excels in benchmarks like MATH, HumanEvalPlus, IFEval, ZebraLogic, and IFBench; Instruct 32B fills the gap for larger-scale chat models.

Olmo 3.1 was released on December 12, 2025, as an update to the November 20 Olmo 3 launch. The models are available on Hugging Face, in the Ai2 Playground, and through API partners.

It is designed for research, RL experimentation, and real-world applications, with an emphasis on training efficiency (it was trained on fewer tokens than rival models).

There are no usage fees for the core models, which keeps them accessible to developers, researchers, and enterprises seeking trusted, transparent open AI.

Key Features

- 32B and 7B scales: Larger 32B for advanced reasoning; efficient 7B for accessibility

- Think variant: Extended RL for long chain-of-thought, superior math/coding/reasoning

- Instruct variant: Optimized for chat, tool use, multi-turn dialogue, instruction following

- Long context support: Up to 65K tokens for document analysis and complex tasks

- Full transparency: Open data, code, checkpoints, logs, and OlmoTrace for provenance

- Strong benchmarks: Tops fully open models in MATH, HumanEvalPlus, IFEval, ZebraLogic

- Efficient training: Fewer tokens than rivals for cost-effective high performance

- RL research ready: Stable long RL runs and artifacts for experimentation

- Multi-turn capabilities: Handles complex dialogues and function calling

- Deployment options: Hugging Face weights, Ai2 Playground, API inference
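To make the function-calling feature above more concrete, here is a minimal sketch of the application side of a tool-use loop: a registry of Python functions and a dispatcher that runs a tool call emitted by the model. The JSON shape (`name` plus `arguments`) is an assumption borrowed from common function-calling conventions, not a documented Olmo 3.1 format, and the `add` tool is purely illustrative.

```python
import json
from typing import Any, Callable

# Registry of callable tools, keyed by the name the model is told about.
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Decorator that registers a function as an available tool."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def add(a: float, b: float) -> float:
    """Illustrative tool: add two numbers."""
    return a + b


def dispatch(tool_call_json: str) -> dict:
    """Run one tool call and wrap the result as a 'tool' chat message."""
    call = json.loads(tool_call_json)
    name = call.get("name")
    if name not in TOOLS:
        content = {"error": f"unknown tool: {name}"}
    else:
        content = {"result": TOOLS[name](**call.get("arguments", {}))}
    return {"role": "tool", "name": name, "content": json.dumps(content)}


if __name__ == "__main__":
    print(dispatch('{"name": "add", "arguments": {"a": 2, "b": 3}}'))
```

In a multi-turn exchange, the returned message would be appended to the conversation so the model can use the tool result in its next reply.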

Price Plans

- Free ($0): Full open-source models, weights, data, code, and artifacts under permissive license; no cost for download or local use

- API/Inference (Partner-based): Usage-based pricing via OpenRouter, Ai2 partners, or hosted platforms (varies)

Pros

- Leading open reasoning: Olmo 3.1 Think 32B is the strongest fully open thinking model

- End-to-end openness: Complete model flow transparency builds trust and enables reproduction

- High performance efficiency: Outperforms rivals with fewer training tokens

- Versatile variants: Think for deep logic, Instruct for practical chat/tool use

- Research empowerment: Artifacts support RL, fine-tuning, and scientific study

- Easy access: Free weights on Hugging Face; try the models in the Playground or through an API

- Strong benchmarks: Competitive or leading in math, coding, reasoning tasks

Cons

- Compute intensive: 32B models require significant GPU resources for inference

- No guaranteed free hosted tier: Playground and API access may have limits; running locally needs your own hardware

- Recent release: Adoption and community fine-tunes still emerging

- Knowledge cutoff: Trained up to late 2025; no real-time web access built-in

- Setup for local use: Requires technical expertise to download/run models

- Limited 7B scale: The smaller variant is strong but less capable than the 32B flagship

- No native multimodal: Text-focused; vision not included in base release
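The "compute intensive" point above can be made concrete with back-of-the-envelope arithmetic: memory for the weights is roughly the parameter count times the bytes per parameter. The sketch below assumes nominal counts of 32 billion and 7 billion parameters (the exact figures may differ) and ignores the KV cache, activations, and framework overhead, so treat the results as lower bounds.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(n_params: float, dtype: str) -> float:
    """Approximate memory for the model weights alone, in GB (10^9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


if __name__ == "__main__":
    # Nominal parameter counts; an assumption based on the "32B" and "7B" names.
    for label, n_params in [("32B", 32e9), ("7B", 7e9)]:
        for dtype in BYTES_PER_PARAM:
            print(f"{label} {dtype}: ~{weight_memory_gb(n_params, dtype):.0f} GB")
```

Under these assumptions the 32B weights need about 64 GB in bf16, which is why multi-GPU setups or quantized builds are the usual route for local inference at that scale.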

Use Cases

- Advanced reasoning research: Long chain-of-thought experiments, math/science problems

- Coding and development: Complex programming, debugging, code generation

- Instruction following: Chatbots, tool use, multi-turn assistants

- Academic and scientific analysis: Long-document reasoning, knowledge recall

- RL experimentation: Fine-tuning, reinforcement learning on open artifacts

- Enterprise trusted AI: Transparent models for sensitive applications

- Local deployment: Run on-prem for privacy-focused workflows

Target Audience

- AI researchers: Studying open model flows, RL, reasoning transparency

- Developers and engineers: Building agents, tools, or coding assistants

- Academics and scientists: Math, science, long-context analysis

- Enterprises: Needing auditable, open-source LLMs for compliance

- Open-source enthusiasts: Fine-tuning or extending fully transparent models

- Students and educators: Learning advanced AI through reproducible artifacts

How To Use

- Access models: Visit allenai.org/blog/olmo3 or the Hugging Face collection (collections/allenai/olmo-3)

- Try online: Use Ai2 Playground for instant testing of Think/Instruct variants

- Download weights: Get from Hugging Face (e.g., allenai/Olmo-3.1-32B-Think)

- Local setup: Install the transformers library; load the model with AutoModelForCausalLM

- Prompt effectively: Use detailed instructions; request chain-of-thought for reasoning

- API usage: Integrate via OpenRouter or Ai2 partners for hosted inference

- Trace outputs: Leverage OlmoTrace to view training data provenance for responses
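The local setup step above might look roughly like the following. This is a minimal sketch, assuming a recent transformers release that supports the Olmo 3 architecture, that torch and accelerate are installed (the latter is needed for `device_map="auto"`), and that the repository ships a chat template. The model ID is the one quoted in the list; the helper names are hypothetical.

```python
MODEL_ID = "allenai/Olmo-3.1-32B-Think"  # repository name quoted in the steps above


def build_messages(question: str) -> list[dict]:
    """Wrap a user question in the chat-message format used by chat templates."""
    return [{"role": "user", "content": question}]


def generate(question: str, max_new_tokens: int = 512) -> str:
    """Load the model and return its reply. Downloads the weights on first use."""
    # Imported lazily so build_messages works without torch installed.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForCausalLM.from_pretrained(
        MODEL_ID,
        torch_dtype=torch.bfloat16,  # halves memory relative to fp32
        device_map="auto",           # spread layers across available devices
    )
    inputs = tokenizer.apply_chat_template(
        build_messages(question),
        add_generation_prompt=True,
        return_tensors="pt",
        return_dict=True,
    ).to(model.device)
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)
    # Keep only the newly generated tokens, dropping the echoed prompt.
    new_tokens = output_ids[0][inputs["input_ids"].shape[-1]:]
    return tokenizer.decode(new_tokens, skip_special_tokens=True)


if __name__ == "__main__":
    print(generate("Explain step by step why the sum of two odd numbers is even."))
```

On hardware that cannot hold the 32B weights, the same code should work with a 7B repository name substituted for `MODEL_ID`.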

How we rated Olmo 3.1

- Performance: 4.8/5

- Accuracy: 4.7/5

- Features: 4.9/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.5/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.6/5

- Integration: 4.7/5

- Overall Score: 4.8/5

Olmo 3.1 integration with other tools

- Hugging Face: Direct model download, inference pipelines, and community fine-tunes

- Ai2 Playground: Web-based demo for testing Think/Instruct variants instantly

- OpenRouter: Hosted API access for easy integration without local hardware

- Transformers Library: Seamless use in Python scripts with AutoModel/AutoTokenizer

- Ollama/Local Runtimes: Support for local deployment in Ollama and similar tools
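For the hosted route, OpenRouter exposes an OpenAI-style chat completions endpoint, so a request can be built with the Python standard library alone. The sketch below is illustrative: the model slug is a hypothetical placeholder (check OpenRouter's catalog for the actual identifier), and the API key is assumed to be supplied through an `OPENROUTER_API_KEY` environment variable.

```python
import json
import os
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_SLUG = "allenai/olmo-3.1-32b-think"  # hypothetical slug; verify in the catalog


def build_request(prompt: str, api_key: str,
                  model: str = MODEL_SLUG) -> urllib.request.Request:
    """Build (but do not send) a chat completion request."""
    payload = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str, api_key: str) -> str:
    """Send the request and return the text of the first choice."""
    with urllib.request.urlopen(build_request(prompt, api_key), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


if __name__ == "__main__":
    print(ask("Summarize what 'fully open' means for a language model.",
              os.environ["OPENROUTER_API_KEY"]))
```

Separating request construction from sending keeps the payload easy to inspect or log before any usage-based charges are incurred.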

Best prompts optimised for Olmo 3.1

- Solve this advanced math problem step-by-step with detailed chain-of-thought reasoning: [insert complex AIME-style problem]

- You are a helpful coding assistant. Write a complete Python program that [describe task], explain your reasoning in detail, and handle edge cases.

- Analyze this long research paper excerpt [paste text]. Summarize key findings, critique methodology, and suggest improvements using structured thinking.

- Act as an expert tutor. Explain quantum mechanics concepts to a college student, using analogies, step-by-step derivations, and verify understanding with questions.

- You have access to tools. Plan and execute a multi-step task: research current AI ethics debates, summarize top arguments, and propose a balanced policy framework.
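The bracketed placeholders in the prompts above can be filled programmatically. The sketch below stores two of the prompts as templates with named fields and renders them into a chat message; the template keys and field names (`problem`, `task`) are hypothetical choices for illustration.

```python
# Two of the prompts above, with bracketed placeholders turned into named fields.
PROMPTS = {
    "math": (
        "Solve this advanced math problem step-by-step with detailed "
        "chain-of-thought reasoning: {problem}"
    ),
    "code": (
        "You are a helpful coding assistant. Write a complete Python program "
        "that {task}, explain your reasoning in detail, and handle edge cases."
    ),
}


def render(name: str, **fields: str) -> list[dict]:
    """Fill a template and wrap it as a single-turn chat message list."""
    # str.format raises KeyError if a required field is missing.
    text = PROMPTS[name].format(**fields)
    return [{"role": "user", "content": text}]


if __name__ == "__main__":
    print(render("code", task="parses a CSV file and reports column averages"))
```

Keeping the templates in one place makes it easier to compare how the Think and Instruct variants respond to the same wording.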
