What is ProEdit?

ProEdit is an open-source, training-free, inversion-based method for accurate text-driven image and video editing. It addresses the over-injection of source-image information that limits prompt-based editing.

When was ProEdit released?

The paper ‘ProEdit: Inversion-based Editing From Prompts Done Right’ was published on arXiv on December 28, 2025. The image-editing code was expected to follow shortly after.

Is ProEdit free to use?

Yes. It is fully open-source under the MIT license, and the code on GitHub (once released) is free to use in research or other projects.

What does ProEdit edit?

It specializes in precise changes to attributes such as pose, object count, color, and layout, or to whole objects, while preserving the background through Latents-Shift and Attention Fusion.

Does ProEdit support video editing?

Yes, the method extends to videos with temporal consistency, though full video code was pending release as of early 2026.

How do I run ProEdit?

Clone the GitHub repo (once code is available), install dependencies, load a base flow model (e.g., FLUX), and run inference scripts with source/target prompts.

Who created ProEdit?

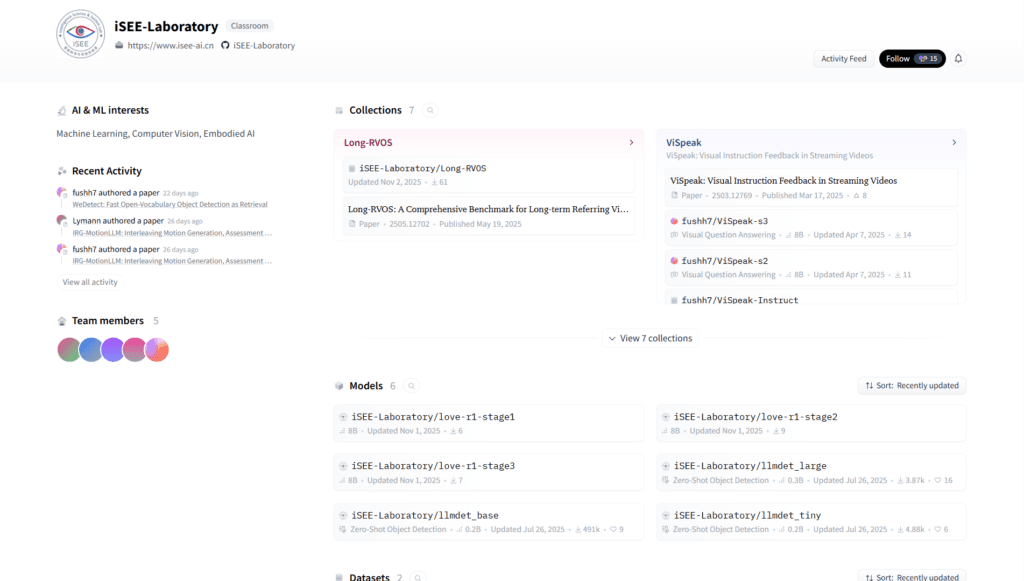

ProEdit was developed by researchers from Sun Yat-sen University, CUHK MMLab, and NTU, led by Zhi Ouyang, Dian Zheng, and others from the iSEE Laboratory.

Is there a demo for ProEdit?

Qualitative results and demos are showcased on the project page (isee-laboratory.github.io/ProEdit); no interactive online demo yet.

ProEdit

About This AI

ProEdit is an open-source, training-free method for high-accuracy text-driven image and video editing based on flow inversion.

Developed by researchers from Sun Yat-sen University and collaborators, it addresses common failures in inversion-based editing where excessive source image information leaks into the edited regions, preventing accurate changes to attributes like pose, object count, color, or layout.

The approach is a plug-and-play pipeline with three stages. First, it inverts the source image and extracts an edit mask by comparing the source and target prompts. Next, Latents-Shift perturbs the latent distribution inside the edited areas to reduce the influence of the source image. Finally, Attention Fusion selectively combines source and target features during sampling.

This preserves background consistency while enabling faithful edits to specified regions.
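
The Latents-Shift stage described above can be pictured with a small NumPy sketch. This is not the authors' implementation: the function name `latents_shift`, the `strength` parameter, and the variance-preserving noise blend are all assumptions chosen for illustration, and the paper defines the actual perturbation.

```python
import numpy as np


def latents_shift(inverted, mask, strength=0.5, seed=0):
    """Blend fresh Gaussian noise into the masked region of an inverted latent.

    inverted: (C, H, W) float array, the latent obtained by inversion.
    mask:     (H, W) array in [0, 1]; 1 marks the region to edit.
    strength: hypothetical knob; 0 keeps the source latent, 1 replaces it with noise.
    """
    rng = np.random.default_rng(seed)
    noise = rng.standard_normal(inverted.shape)
    # Variance-preserving blend (an assumption, not the paper's formula).
    mixed = np.sqrt(1.0 - strength**2) * inverted + strength * noise
    m = mask[None, :, :]  # broadcast the mask over channels
    # Perturb only inside the mask; outside it the source latent is untouched.
    return m * mixed + (1.0 - m) * inverted
```

Outside the mask the latent is returned unchanged, which mirrors the background-preservation goal; inside the mask, a larger `strength` discards more of the source signal.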

It also supports instruction-based editing by leveraging large language models to interpret natural language instructions.

ProEdit builds on prior inversion techniques like PnP-Inversion and on flow models (e.g., FLUX), and its qualitative results for complex edits are presented as state of the art.

ProEdit was released as a research project, with the paper published on December 28, 2025 (arXiv:2512.22118). The code repository (GitHub: iSEE-Laboratory/ProEdit) was announced at the same time, with the image editing implementation expected shortly after and video editing to follow.

Licensed under MIT for free academic and potential commercial use, it is ideal for researchers, developers, and creators seeking precise, controllable AI image/video manipulation without retraining.

As a recent academic release, it emphasizes methodological innovation over production-ready UI, with demos and results showcased on the project page.

Key Features

- Text-driven editing: Edit images/videos using source and target text prompts for precise attribute changes

- Plug-and-play inversion: Works with existing flow models without additional training

- Edit mask extraction: Automatically identifies edited regions during the first inversion step

- Latents-Shift: Perturbs latent distributions in edited areas to minimize unwanted source information

- Attention Fusion: Selectively fuses source and target attention features during sampling

- Background preservation: Maintains non-edited regions with direct source feature injection

- Instruction-based editing: Uses LLM to interpret natural language instructions for guided edits

- Video editing support: Extends method to temporal consistency in videos (code pending full release)

- High-quality results: Achieves superior qualitative edits for pose, color, count, and layout changes
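
The Attention Fusion and background-preservation features listed above can be sketched as a mask-guided merge of per-token features. This is a minimal illustration under stated assumptions: `fuse_features` and its `source_weight` parameter are hypothetical names, and the real method operates on attention features inside the flow model rather than on plain arrays.

```python
import numpy as np


def fuse_features(source_feats, target_feats, edit_mask, source_weight=0.0):
    """Merge source and target features token by token.

    source_feats, target_feats: (N, D) arrays of per-token features.
    edit_mask:     (N,) boolean array; True marks tokens in the edited region.
    source_weight: hypothetical fraction of source features kept inside the
                   edited region (0 means target features only).
    """
    edited = (1.0 - source_weight) * target_feats + source_weight * source_feats
    # Edited tokens take the (mostly) target features; all other tokens get
    # the source features injected directly, preserving the background.
    return np.where(edit_mask[:, None], edited, source_feats)
```

Keeping the source features verbatim outside the mask is what makes the background stable, while the edited tokens are free to follow the target prompt.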

Price Plans

- Free ($0): Fully open-source under MIT license with code and implementation available on GitHub for personal, research, or commercial use (once released)

Pros

- High editing fidelity: Significantly reduces source leakage for accurate attribute modifications

- Training-free and plug-and-play: Easy to integrate with existing diffusion/flow models

- Open-source under MIT: Free for research, academic, and potential commercial use

- Addresses key limitations: Fixes common inversion failures in prompt-based editing

- Supports complex edits: Handles pose, object count, color, layout, and more effectively

- Video extension potential: Method designed with temporal consistency in mind

- Research-backed: Published on arXiv with qualitative demos and project page

Cons

- Code not fully released yet: As of early 2026, the implementation was still pending (the December 2025 announcement estimated about two weeks for the image editing code)

- Academic-focused: No user-friendly GUI; requires technical setup and coding knowledge

- Requires GPU: Inversion and sampling with large flow models such as FLUX need significant compute

- Limited quantitative benchmarks: Primarily qualitative results; fewer direct comparisons

- Dependency on base models: Relies on external tools like FLUX, PnP-Inversion, etc.

- Early-stage project: Potential bugs or incomplete features until full code drop

- No online demo: Must run locally; no hosted service available

Use Cases

- Creative image manipulation: Change poses, colors, object counts, or layouts in photos

- Video content editing: Apply temporal-consistent edits to clips (once code available)

- Research in diffusion models: Study or extend inversion-based editing techniques

- Prototype testing: Experiment with precise text-prompt edits without model fine-tuning

- Art and design: Generate variations or corrections of existing visuals

- Academic experiments: Benchmark or compare with other inversion methods

Target Audience

- AI researchers: Studying diffusion/inversion techniques and text-driven editing

- Computer vision developers: Building or extending image/video manipulation tools

- Advanced AI enthusiasts: Running local open-source models for custom edits

- Academic institutions: Using in papers, theses, or courses on generative AI

- Content creators: Once code matures, for precise AI-assisted editing workflows

How To Use

- Check repo status: Visit https://github.com/iSEE-Laboratory/ProEdit for latest code release

- Clone repository: git clone https://github.com/iSEE-Laboratory/ProEdit.git once available

- Install dependencies: Follow the README (likely pip install -r requirements.txt, with PyTorch, diffusers, etc.)

- Prepare base model: Download a compatible flow model (e.g., FLUX) and set up any inversion method the README calls for (e.g., PnP-Inversion)

- Run inference: Use provided scripts with source image, source prompt, target prompt

- Apply edits: Specify instructions or prompts; output edited image/video

- Customize: Modify latents-shift or fusion parameters for fine control
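
The clone and install steps above can be scripted. The sketch below only assembles the commands; the `requirements.txt` file name is an assumption until the README confirms it, and `setup_commands` and `run_setup` are hypothetical helper names, not part of ProEdit.

```python
import subprocess
import sys
from pathlib import Path

REPO_URL = "https://github.com/iSEE-Laboratory/ProEdit.git"


def setup_commands(dest="ProEdit"):
    """Return the clone and install commands as argv lists, without running them."""
    # Assumed file name; check the repository README once the code is released.
    requirements = Path(dest) / "requirements.txt"
    return [
        ["git", "clone", REPO_URL, dest],
        [sys.executable, "-m", "pip", "install", "-r", str(requirements)],
    ]


def run_setup(dest="ProEdit"):
    """Run each setup command in order, stopping at the first failure."""
    for argv in setup_commands(dest):
        subprocess.run(argv, check=True)
```

Calling `run_setup()` performs the clone and install; printing `setup_commands()` first is a safe way to review what would run.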

How we rated ProEdit

- Performance: 4.5/5

- Accuracy: 4.8/5

- Features: 4.4/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 3.5/5

- Customization: 4.7/5

- Data Privacy: 5.0/5

- Support: 3.8/5

- Integration: 4.2/5

- Overall Score: 4.4/5
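
The overall score is consistent with an unweighted mean of the nine category scores, although the page does not state how it was computed. A quick check under that assumption:

```python
# Category scores as listed above.
scores = {
    "Performance": 4.5,
    "Accuracy": 4.8,
    "Features": 4.4,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 3.5,
    "Customization": 4.7,
    "Data Privacy": 5.0,
    "Support": 3.8,
    "Integration": 4.2,
}


def mean_score(values):
    """Unweighted arithmetic mean (assumed aggregation, not confirmed by the page)."""
    values = list(values)
    return sum(values) / len(values)


overall = round(mean_score(scores.values()), 1)
print(overall)
```

The category scores sum to 39.9, and 39.9 / 9 ≈ 4.43, which rounds to the listed 4.4.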

ProEdit integration with other tools

- Diffusion Frameworks: Compatible with Diffusers, ComfyUI, or custom PyTorch setups via plug-and-play modules

- Base Models: Works with flow-based models like FLUX, and inversion methods such as PnP-Inversion or FireFlow

- Research Tools: Integrates into academic pipelines for benchmarking or extending editing research

- Local GPU Setup: Runs on personal machines with NVIDIA GPUs via Python environment

- Project Page Demos: View qualitative results and potential future code integrations on isee-laboratory.github.io/ProEdit

Best prompts optimised for ProEdit

- N/A: ProEdit is a research method for inversion-based image/video editing, not a prompt-only generative tool like Midjourney or Kling, so there is no single prompt field. The closest equivalent is a source/target text pair (e.g., source: 'a cat on a chair', target: 'a dog on a table') or a natural language instruction such as 'change the cat to a dog while keeping the background'.

- Use the source prompt to describe the original image and the target prompt to describe the desired edit.
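
To see what a source/target pair asks for, it can help to list the words that differ between the two prompts. The sketch below uses Python's standard `difflib` for a word-level comparison; `prompt_diff` is a hypothetical helper for inspecting prompts and is not how ProEdit itself derives its edit mask.

```python
from difflib import SequenceMatcher


def prompt_diff(source_prompt, target_prompt):
    """Return (removed_words, added_words) between two prompts."""
    src, tgt = source_prompt.split(), target_prompt.split()
    removed, added = [], []
    matcher = SequenceMatcher(a=src, b=tgt, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag in ("replace", "delete"):
            removed.extend(src[i1:i2])  # words only in the source prompt
        if tag in ("replace", "insert"):
            added.extend(tgt[j1:j2])  # words only in the target prompt
    return removed, added


print(prompt_diff("a cat on a chair", "a dog on a table"))
# (['cat', 'chair'], ['dog', 'table'])
```

Keeping the two prompts identical except for the words being edited makes the intended change easy to read off.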
