What is ProEdit?

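ProEdit is an open-source, training-free, inversion-based method for accurate text-driven image and video editing. It addresses a common problem in prompt-based editing: too much information from the source image is injected into the result, which holds back the requested edit.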

When was ProEdit released?

The paper ‘ProEdit: Inversion-based Editing From Prompts Done Right’ was published on arXiv on December 28, 2025. The code release for image editing was expected shortly after.

Is ProEdit free to use?

Yes. It is fully open-source under the MIT license, with the code available on GitHub (once released) for free use in research or other projects.

What does ProEdit edit?

It specializes in precise changes to attributes such as pose, object count, color, and layout, as well as changes to the objects themselves, while preserving the background through latents-shift and attention fusion.

Does ProEdit support video editing?

Yes. The method extends to video with temporal consistency, though the full video code was still pending release as of early 2026.

How do I run ProEdit?

Clone the GitHub repo (once the code is available), install the dependencies, load a base flow model (e.g., FLUX), and run the inference scripts with a source prompt and a target prompt.
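
To show what the source/target prompt pair does in an inversion-based workflow, here is a minimal toy sketch in Python. It is not ProEdit's code or API: the `velocity` function is a hypothetical stand-in for a flow model, the "prompts" are plain vectors, and latents-shift and attention fusion are not implemented. It only illustrates the generic pattern of inverting the source under the source condition and then sampling under the target condition.

```python
import numpy as np


def velocity(x, cond):
    """Hypothetical toy velocity field standing in for a flow model's prediction."""
    return x - cond


def invert(image, source_cond, steps=50):
    """Euler-integrate from t=0 (image) to t=1 (latent) under the source condition."""
    dt = 1.0 / steps
    x = image.copy()
    for _ in range(steps):
        x = x + dt * velocity(x, source_cond)
    return x


def sample(latent, cond, steps=50):
    """Euler-integrate back from t=1 (latent) to t=0 (image) under the given condition."""
    dt = 1.0 / steps
    x = latent.copy()
    for _ in range(steps):
        x = x - dt * velocity(x, cond)
    return x


# Toy "image" and "prompt" vectors (assumed values, for illustration only).
source_image = np.array([1.0, 2.0, 3.0])
source_cond = np.array([1.0, 2.0, 2.5])   # stands in for the source prompt
target_cond = np.array([1.0, 2.0, 5.0])   # stands in for the target prompt

latent = invert(source_image, source_cond)

# Sampling with the source condition approximately reconstructs the input.
reconstruction = sample(latent, source_cond)

# Sampling with the target condition moves only the component the prompts disagree on.
edited = sample(latent, target_cond)

print("reconstruction:", reconstruction)
print("edited:", edited)
```

In this toy, the reconstruction is close to the source but not exact, because Euler integration is only approximately reversible. The components on which the two conditions agree stay untouched, and the remaining component moves toward the target.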

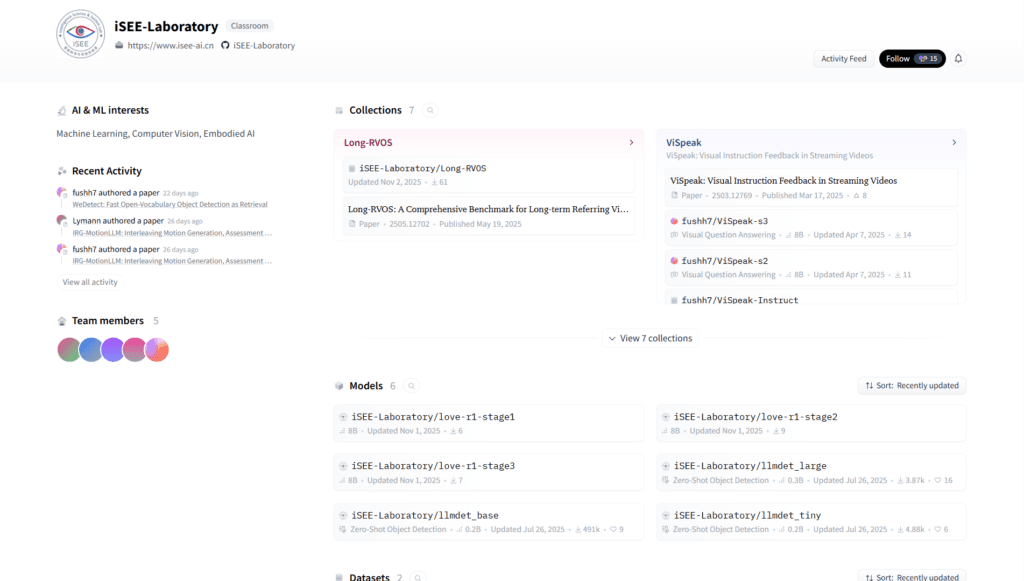

Who created ProEdit?

ProEdit was developed by researchers from Sun Yat-sen University, CUHK MMLab, and NTU, led by Zhi Ouyang, Dian Zheng, and others from the iSEE Laboratory.

Is there a demo for ProEdit?

Qualitative results and demos are showcased on the project page (isee-laboratory.github.io/ProEdit); there is no interactive online demo yet.