What is RealVideo?

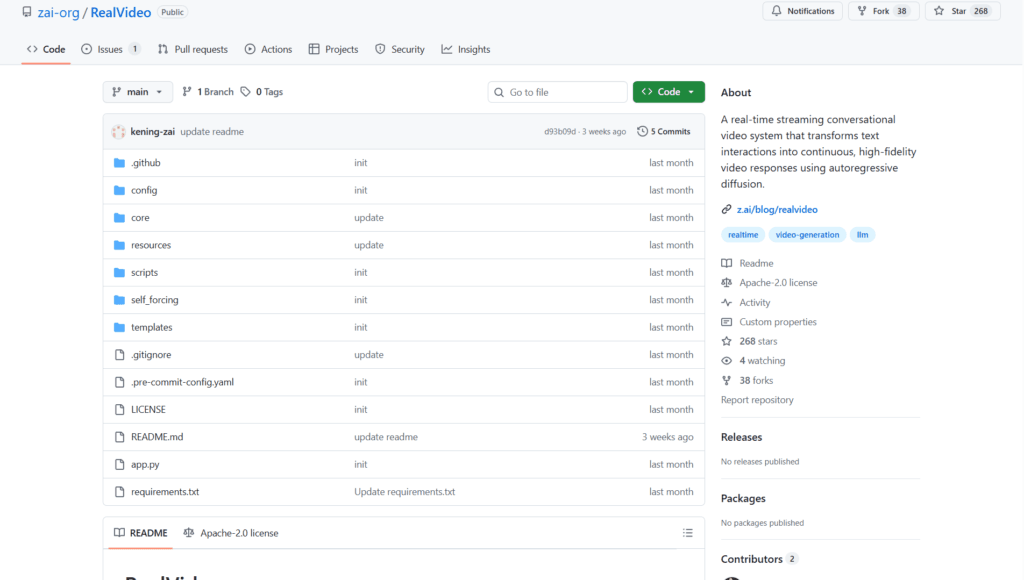

RealVideo is an open-source, real-time streaming conversational video system from Z.ai. It uses autoregressive diffusion to generate lip-synced, high-fidelity video responses from text input.

When was RealVideo released?

It was released in December 2025, with the initial GitHub commits on December 11 and the last README update on December 15.

Is RealVideo free to use?

Yes. It is free and open-source under the Apache-2.0 license, with code and model weights available on GitHub and Hugging Face.

What are the key features of RealVideo?

Features include real-time WebSocket streaming, text-to-video dialogue, lip sync, voice cloning, audio-to-video generation, and a modular architecture.

What hardware is required for RealVideo?

It needs at least two high-end GPUs (e.g., H100 or H200 with 80 GB each) for real-time performance: one GPU runs the VAE (variational autoencoder), and the remaining GPUs run the DiT (diffusion transformer) computation in parallel.
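The GPU split described above can be sketched as a small planning function. This is a hypothetical illustration, not RealVideo's actual launcher: the name `plan_devices` and the choice of placing the VAE on device index 0 are assumptions made for the example.

```python
def plan_devices(num_gpus: int) -> dict:
    """Split GPU indices into one VAE device and one or more DiT devices.

    Assumption: the VAE takes index 0. The description above only says
    that one GPU serves the VAE and the others serve the DiT.
    """
    if num_gpus < 2:
        raise ValueError(
            "at least 2 GPUs are needed: one for the VAE, one or more for the DiT"
        )
    return {"vae": 0, "dit": list(range(1, num_gpus))}


if __name__ == "__main__":
    # With 4 GPUs, one is reserved for the VAE and three are left for the DiT.
    print(plan_devices(4))
```

With the minimum of two GPUs, the plan leaves a single device for the DiT, so no DiT parallelism is available in that configuration.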

How does RealVideo work?

It uses GLM-4.5-AirX and GLM-TTS to produce the audio response, autoregressive diffusion to generate the video frames, and WebSocket for live bidirectional communication.
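The flow of data through these stages can be sketched with stubbed-out components. Everything here is a placeholder rather than RealVideo's code: the function names are hypothetical, the stubs return fake data, and the sketch assumes the language model produces reply text that the TTS model then turns into audio chunks. The `send` argument stands in for a WebSocket send coroutine.

```python
import asyncio
from typing import AsyncIterator, Awaitable, Callable


async def generate_reply(user_text: str) -> str:
    # Placeholder for the language-model step; returns a canned reply.
    return f"reply to: {user_text}"


async def synthesize_audio(reply: str, chunk_chars: int = 8) -> AsyncIterator[bytes]:
    # Placeholder for TTS: yields one fake audio chunk per slice of text.
    for i in range(0, len(reply), chunk_chars):
        yield reply[i:i + chunk_chars].encode("utf-8")


async def generate_frames(audio_chunks: AsyncIterator[bytes]) -> AsyncIterator[dict]:
    # Placeholder for autoregressive generation: each block is driven by the
    # next audio chunk and records the index of the block generated before it.
    previous = None
    index = 0
    async for chunk in audio_chunks:
        yield {"index": index, "audio": chunk, "conditioned_on": previous}
        previous = index
        index += 1


async def respond(user_text: str, send: Callable[[dict], Awaitable[None]]) -> int:
    # Stream each block to the client as soon as it is ready; return the count.
    reply = await generate_reply(user_text)
    sent = 0
    async for block in generate_frames(synthesize_audio(reply)):
        await send(block)
        sent += 1
    return sent
```

The point of the sketch is the streaming shape: each block is sent as soon as it exists instead of waiting for the full response, which is what makes a live conversation feasible.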

Where can I download RealVideo models?

Model weights are available on Hugging Face (zai-org/RealVideo) and ModelScope (ZhipuAI/RealVideo).
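As a minimal sketch, the Hugging Face copy could be fetched with `snapshot_download` from the `huggingface_hub` package. The repository IDs come from the answer above; the helper names `repo_id_for` and `download_from_huggingface` and the target directory are hypothetical, and the project's own README may recommend a different procedure.

```python
# Repository IDs are taken from the text above; everything else is illustrative.
REPOS = {
    "huggingface": "zai-org/RealVideo",
    "modelscope": "ZhipuAI/RealVideo",
}


def repo_id_for(hub: str) -> str:
    """Return the RealVideo repository ID for the given hub name."""
    try:
        return REPOS[hub.lower()]
    except KeyError:
        raise ValueError(f"unknown hub: {hub!r}") from None


def download_from_huggingface(local_dir: str) -> str:
    """Download the full repository snapshot and return its local path.

    Requires `pip install huggingface_hub` and network access.
    """
    # Imported lazily so repo_id_for() works without the package installed.
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=repo_id_for("huggingface"), local_dir=local_dir)
```

Calling `download_from_huggingface("./RealVideo")` would place the files in a local `RealVideo` directory. Expect a large download, since the repository contains model weights.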

What is RealVideo best suited for?

It is best suited for building interactive AI avatars, virtual assistants, and real-time video dialogue systems, and for research in streaming video generation.