What is ShapeR?

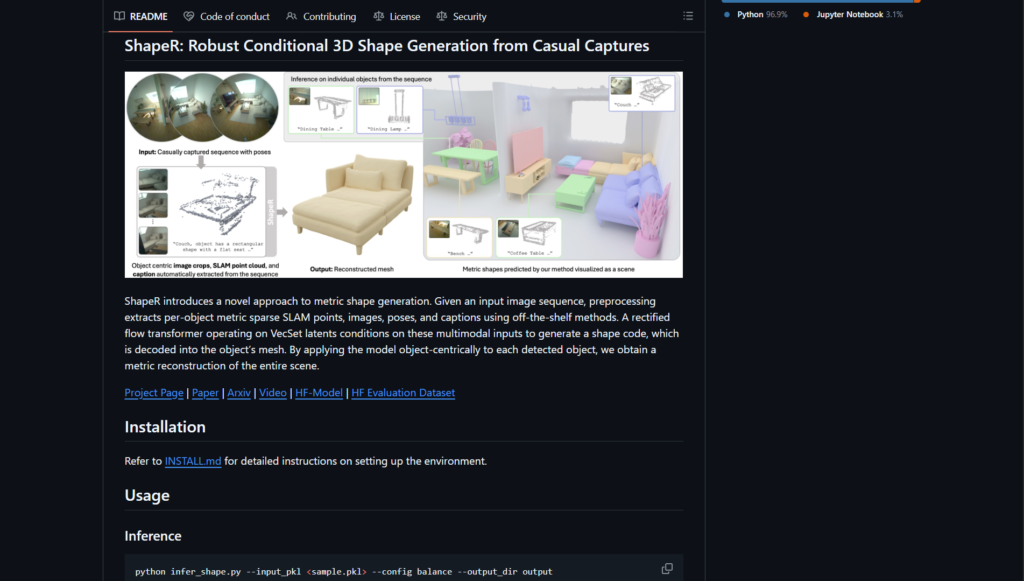

ShapeR is a research project from Meta Reality Labs for robust conditional 3D shape generation and metric reconstruction from casual image sequences. It uses multimodal inputs such as SLAM points and captions.

When was ShapeR released?

The code, models, and paper were publicly released on January 16, 2026. The paper is on arXiv as 2601.11514.

Is ShapeR free to use?

Yes. The code and pretrained models are freely available on GitHub and Hugging Face under a CC-BY-NC license for non-commercial research use.

What license does ShapeR use?

ShapeR is released primarily under CC-BY-NC (Creative Commons Attribution-NonCommercial) for research purposes. Check the NOTICE file for component-specific terms.

What inputs does ShapeR require?

ShapeR takes preprocessed pickle files that contain per-object SLAM point clouds, multi-view images, camera poses, text captions, and optionally ground-truth meshes.
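To check what a given preprocessed file actually contains, a small inspection script can help. The following is a minimal sketch, not ShapeR's own loader: the function names `describe` and `summarize_sample` and every key in `toy_sample` are hypothetical, chosen only to mirror the fields listed above. The real key names and value types should be read from the files and the repository code.

```python
import pickle
import tempfile
from pathlib import Path


def describe(value):
    """Return a short, human-readable description of one field."""
    shape = getattr(value, "shape", None)  # NumPy arrays and tensors expose .shape
    if shape is not None:
        return f"{type(value).__name__} with shape {tuple(shape)}"
    if isinstance(value, (list, tuple)):
        return f"{type(value).__name__} of length {len(value)}"
    if isinstance(value, str):
        return f"str: {value[:40]!r}"
    return type(value).__name__


def summarize_sample(path):
    """Load one pickle file and map each top-level key to a description."""
    with open(path, "rb") as f:
        sample = pickle.load(f)  # only unpickle files from a trusted source
    if not isinstance(sample, dict):
        return {"<root>": describe(sample)}
    return {key: describe(value) for key, value in sample.items()}


# Synthetic stand-in for a per-object sample. The key names are hypothetical
# and only mirror the input types named in the answer above.
toy_sample = {
    "points": [(0.0, 0.0, 0.0), (0.1, 0.2, 0.3)],          # SLAM point cloud
    "images": ["view_0", "view_1", "view_2"],              # multi-view images
    "camera_poses": [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]],  # one pose per view
    "caption": "a wooden chair",                           # text caption
    "gt_mesh": None,                                       # optional ground truth
}

with tempfile.TemporaryDirectory() as tmp:
    toy_path = Path(tmp) / "toy_sample.pkl"
    toy_path.write_bytes(pickle.dumps(toy_sample))
    summary = summarize_sample(toy_path)

for key, text in summary.items():
    print(f"{key}: {text}")
```

Pointing `summarize_sample` at a real preprocessed file would list its top-level fields without assuming their names. Because unpickling can execute arbitrary code, this should only be done with files from a trusted source.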

Does ShapeR support commercial use?

No. The CC-BY-NC license prohibits commercial applications, so ShapeR is suitable only for non-commercial research and academic purposes.

Where can I find the ShapeR paper?

The full paper is available on arXiv (2601.11514) and as a PDF on the project page: https://facebookresearch.github.io/ShapeR.

Is there a demo or evaluation dataset for ShapeR?

Yes. The release provides pretrained models on Hugging Face, a new evaluation dataset with 178 objects, and code for inference and visualization.