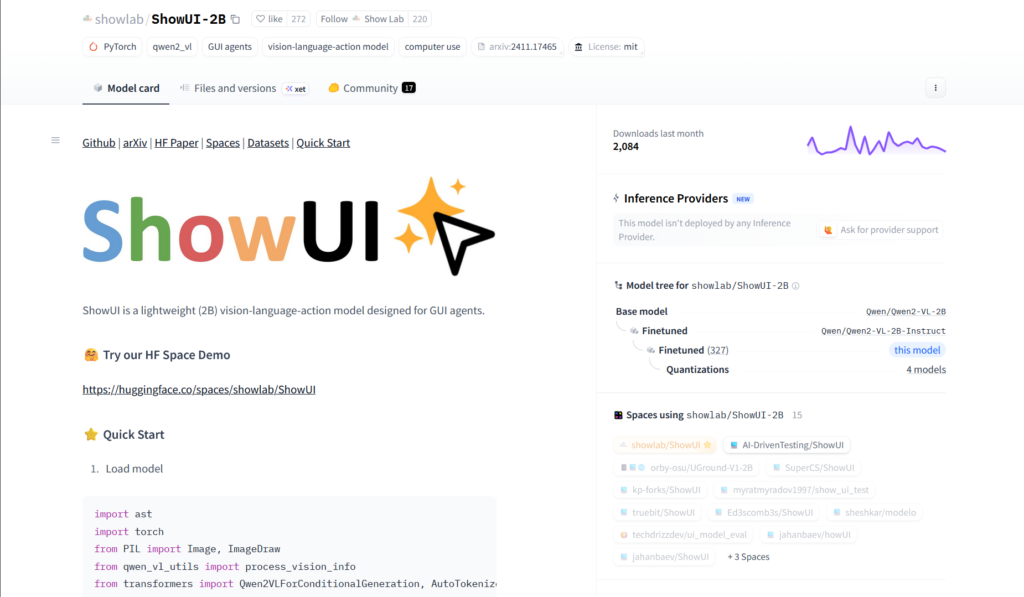

What is ShowUI?

ShowUI is a lightweight, open-source 2B-parameter vision-language-action model for GUI visual agents. It perceives the interface directly from screenshots and predicts actions without relying on text-based metadata or APIs (e.g., HTML or accessibility trees).
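The screenshot-in, action-out idea can be sketched as a simple agent loop. This is a hypothetical illustration, not ShowUI's code: `Action`, `run_episode`, the `"STOP"` action, and the `capture`/`predict`/`execute` callables are all assumed names, and the model call is stubbed out.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Action:
    # Hypothetical action record: a type such as "CLICK" or "TYPE",
    # an optional text value, and an optional relative (x, y) position.
    kind: str
    value: Optional[str] = None
    position: Optional[Tuple[float, float]] = None


def run_episode(
    task: str,
    capture: Callable[[], bytes],
    predict: Callable[[str, bytes, List[Action]], Action],
    execute: Callable[[Action], None],
    max_steps: int = 10,
) -> List[Action]:
    """Screenshot-in, action-out loop; no DOM or accessibility-tree input."""
    history: List[Action] = []
    for _ in range(max_steps):
        screenshot = capture()                       # raw pixels only
        action = predict(task, screenshot, history)  # model call (stubbed here)
        if action.kind == "STOP":                    # hypothetical end-of-task signal
            break
        execute(action)
        history.append(action)
    return history
```

In a real setup, `predict` would wrap a call to the model and `execute` would drive a browser or device; the loop itself never reads HTML or accessibility data.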

When was ShowUI released?

The paper was submitted, and the model released, on November 26, 2024; code and weights are available on GitHub and Hugging Face.

Is ShowUI free to use?

Yes. It is fully open source (MIT/Apache-2.0), and the model weights, code, and demo are all freely available with no usage fees.

What are ShowUI’s main capabilities?

It achieves 75.1% accuracy in zero-shot screenshot grounding, handles navigation tasks on web, mobile, and online benchmarks, and executes GUI actions end to end from visual input alone.
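A grounding prediction has to be mapped back onto the screen before an action can be executed. A minimal sketch, assuming the model returns a click point as a relative `[x, y]` string in the 0 to 1 range (check the model card for the actual output format); `to_pixels` is a hypothetical helper, not part of ShowUI.

```python
import ast
from typing import Tuple


def to_pixels(model_output: str, width: int, height: int) -> Tuple[int, int]:
    """Convert a relative '[x, y]' prediction (assumed 0-1 range) to pixel coordinates."""
    # literal_eval safely parses the list literal without executing code.
    x, y = ast.literal_eval(model_output.strip())
    if not (0.0 <= x <= 1.0 and 0.0 <= y <= 1.0):
        raise ValueError(f"expected relative coordinates in [0, 1], got {(x, y)}")
    # Round to the nearest pixel and clamp to the last valid index.
    px = min(round(x * width), width - 1)
    py = min(round(y * height), height - 1)
    return px, py


print(to_pixels("[0.49, 0.42]", 1920, 1080))
```

Working in relative coordinates keeps the prediction independent of screen resolution; the conversion to pixels happens only at execution time.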

Where can I download or try ShowUI?

Model weights are at huggingface.co/showlab/ShowUI-2B, code is at github.com/showlab/ShowUI, and an interactive demo Space is at huggingface.co/spaces/showlab/ShowUI.
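The weights can also be fetched programmatically. A minimal sketch using `snapshot_download` from the `huggingface_hub` library; the `download_showui` wrapper is a hypothetical name, and calling it requires network access and `huggingface_hub` to be installed.

```python
from typing import Optional

REPO_ID = "showlab/ShowUI-2B"


def download_showui(local_dir: Optional[str] = None) -> str:
    """Download the ShowUI-2B model repository and return its local path."""
    # Imported lazily so this module loads even without huggingface_hub installed.
    from huggingface_hub import snapshot_download

    # Fetches every file in the model repo (weights, configs) into the cache,
    # or into local_dir if one is given.
    return snapshot_download(repo_id=REPO_ID, local_dir=local_dir)
```

Usage: `path = download_showui()` downloads once and reuses the cached copy on later calls.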

What benchmarks does ShowUI perform well on?

It reports strong results on Mind2Web (web), AITW (mobile), and MiniWob (online), along with 75.1% zero-shot grounding accuracy.
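Grounding accuracy figures are commonly computed as the share of predicted click points that land inside the target element's bounding box. A sketch assuming that point-in-box criterion; each benchmark defines its own exact protocol, and `grounding_accuracy` is a hypothetical helper.

```python
from typing import Sequence, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (left, top, right, bottom)


def point_in_box(point: Point, box: Box) -> bool:
    x, y = point
    left, top, right, bottom = box
    return left <= x <= right and top <= y <= bottom


def grounding_accuracy(preds: Sequence[Point], boxes: Sequence[Box]) -> float:
    """Fraction of predicted points that fall inside their target boxes."""
    if len(preds) != len(boxes):
        raise ValueError("need exactly one prediction per target box")
    if not preds:
        return 0.0
    hits = sum(point_in_box(p, b) for p, b in zip(preds, boxes))
    return hits / len(preds)
```

For example, three hits out of four predictions gives an accuracy of 0.75.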

How does ShowUI differ from other GUI agents?

Unlike agents that depend on text or API access, ShowUI works from screenshots visually, as a human would, and uses techniques such as UI-guided token selection to cut computational cost.
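One way to picture UI-guided token selection, assuming it works by grouping neighbouring screenshot patches with (near-)identical colour into connected regions, such as a flat button or page background, and treating most tokens in each region as redundant. This simplified sketch keeps one token per region using union-find; the real model's patch features, similarity test, and selection rule may differ, and `select_tokens` is a hypothetical name.

```python
from typing import List, Sequence, Set, Tuple

RGB = Tuple[int, int, int]


def find(parent: List[int], i: int) -> int:
    # Path-halving find for the union-find structure.
    while parent[i] != i:
        parent[i] = parent[parent[i]]
        i = parent[i]
    return i


def union(parent: List[int], a: int, b: int) -> None:
    ra, rb = find(parent, a), find(parent, b)
    if ra != rb:
        parent[rb] = ra


def select_tokens(patches: Sequence[Sequence[RGB]], tol: int = 0) -> List[int]:
    """Return flat indices of patches kept after merging similar-colour neighbours."""
    rows, cols = len(patches), len(patches[0])
    parent = list(range(rows * cols))

    def similar(p: RGB, q: RGB) -> bool:
        return all(abs(a - b) <= tol for a, b in zip(p, q))

    for r in range(rows):
        for c in range(cols):
            idx = r * cols + c
            if c + 1 < cols and similar(patches[r][c], patches[r][c + 1]):
                union(parent, idx, idx + 1)     # right neighbour
            if r + 1 < rows and similar(patches[r][c], patches[r + 1][c]):
                union(parent, idx, idx + cols)  # bottom neighbour

    # Keep one representative token (the first in raster order) per component.
    kept: List[int] = []
    seen: Set[int] = set()
    for idx in range(rows * cols):
        root = find(parent, idx)
        if root not in seen:
            seen.add(root)
            kept.append(idx)
    return kept
```

On a mostly blank screenshot, large uniform regions collapse to a handful of tokens, which is where the efficiency gain comes from; detailed regions such as text keep most of their tokens.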

What hardware is needed for ShowUI?

The 2B model can run locally on a GPU with PyTorch and Hugging Face transformers. Consumer hardware is sufficient for inference, though high-end cards run it faster.
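A back-of-the-envelope check on the hardware claim: weight memory is roughly parameter count times bytes per parameter. This sketch assumes the nominal 2B parameter count (the exact count may differ slightly) and covers weights only, not activations, visual tokens, or framework overhead.

```python
def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory for model weights alone, in GiB."""
    return num_params * bytes_per_param / 1024**3


# Nominal 2B parameters at two common precisions.
for dtype, nbytes in [("float32", 4), ("bfloat16", 2)]:
    print(f"{dtype:>8}: {weight_memory_gib(2e9, nbytes):.2f} GiB")
```

In bfloat16 the weights come to roughly 3.7 GiB, which is why a single consumer GPU is plausible for inference; real usage is higher once screenshots are encoded and activations are allocated.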