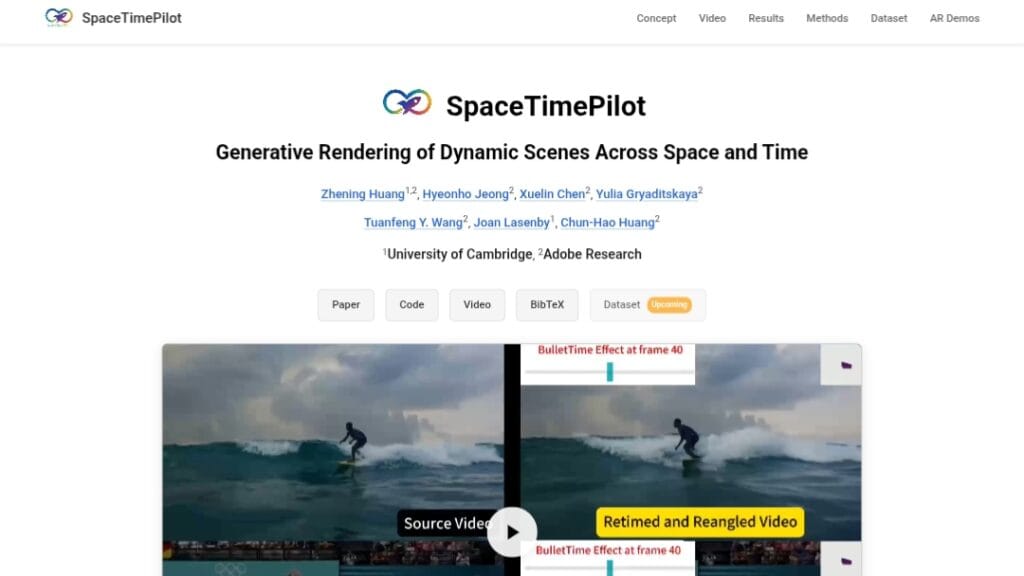

What is SpaceTimePilot?

SpaceTimePilot is an open-source video diffusion model (arXiv, December 2025) that disentangles space and time for controllable generative rendering. Given a single input video, it lets you change the camera viewpoint and the motion sequence independently of each other.

When was SpaceTimePilot released?

The paper was published on December 31, 2025 (arXiv:2512.25075), with code made available around the same time.

Is SpaceTimePilot free to use?

Yes. It is fully open source, with the code on GitHub under standard licensing, and there is no cost for research or personal experimentation.

What does SpaceTimePilot do?

It re-renders dynamic scenes with independent control over the spatial viewpoint (where the camera is) and the temporal motion trajectory (which moment of the scene is shown).
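To make "independent control" concrete, here is a minimal Python sketch of what separate space and time controls could look like. It is not SpaceTimePilot's actual interface: `FrameControl`, `make_controls`, the single azimuth angle standing in for a camera pose, and the normalized `source_time` are all hypothetical names and simplifications chosen for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FrameControl:
    """Hypothetical control for one output frame (not the model's real API)."""
    camera_azimuth_deg: float  # spatial control: simplified one-angle camera
    source_time: float         # temporal control: position in the input clip, 0 to 1


def linspace(start: float, stop: float, n: int) -> List[float]:
    """Return n evenly spaced values from start to stop, inclusive."""
    if n == 1:
        return [start]
    step = (stop - start) / (n - 1)
    return [start + i * step for i in range(n)]


def make_controls(azimuths: List[float], times: List[float]) -> List[FrameControl]:
    """Pair a camera path with a time path; each path is chosen on its own."""
    if len(azimuths) != len(times):
        raise ValueError("camera path and time path must have the same length")
    return [FrameControl(a, t) for a, t in zip(azimuths, times)]


n_frames = 5

# Bullet time: the camera orbits while the scene stays frozen at the clip midpoint.
bullet_time = make_controls(linspace(0.0, 90.0, n_frames), [0.5] * n_frames)

# Fixed view, reversed motion: the camera stays put while time runs backwards.
reverse_replay = make_controls([0.0] * n_frames, linspace(1.0, 0.0, n_frames))
```

The point of the sketch is only that the camera path and the time path are two separate lists, so either can be changed without touching the other.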

How do I access or run SpaceTimePilot?

Clone the GitHub repo at https://github.com/ZheningHuang/spacetimepilot, install dependencies, and follow the provided scripts for inference or training.
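As a rough sketch of the first step, the clone can be scripted from Python with the standard `subprocess` module. Only the repository URL comes from the answer above; the helper names `clone_command` and `clone_repo` and the destination folder are assumptions, and the install and inference commands are left out because they depend on what the repository itself documents.

```python
import subprocess
from pathlib import Path
from typing import List

# Repository URL as given in this FAQ.
REPO_URL = "https://github.com/ZheningHuang/spacetimepilot"


def clone_command(dest: Path) -> List[str]:
    """Build the git command that clones the repo into dest."""
    return ["git", "clone", REPO_URL, str(dest)]


def clone_repo(dest: Path) -> None:
    """Clone the repo unless dest already exists (hypothetical helper)."""
    if dest.exists():
        print(f"{dest} already exists, skipping clone")
        return
    # check=True raises CalledProcessError if git exits with an error.
    subprocess.run(clone_command(dest), check=True)
```

Calling `clone_repo(Path("spacetimepilot"))` would perform the clone, provided `git` is installed and on the `PATH`. After that, follow the repository's own instructions for dependencies and scripts.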

Is there a demo or online version of SpaceTimePilot?

No public hosted demo or Hugging Face Space is mentioned. It is a code-based research model that requires a local GPU setup.

What datasets does SpaceTimePilot use?

It introduces CamxTime, a synthetic dataset with full space-time coverage, and applies temporal warping to existing multi-view datasets for training.
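One way to picture temporal warping is as re-indexing the frames of a clip, so that a second view of the same scene plays back in a different temporal order than the first. The sketch below is an illustration under that assumption, not the authors' training code: the particular warps (`reverse`, `slow_motion`, `freeze`) and the `warp` helper are hypothetical examples.

```python
from typing import List


def reverse(n: int) -> List[int]:
    """Frame indices that play an n-frame clip backwards."""
    return list(range(n - 1, -1, -1))


def slow_motion(n: int, factor: int = 2) -> List[int]:
    """Hold each frame for `factor` steps, keeping the output at n frames."""
    return [i // factor for i in range(n)]


def freeze(n: int, at: int) -> List[int]:
    """Repeat a single frame index n times."""
    return [at] * n


def warp(frames: List[str], indices: List[int]) -> List[str]:
    """Re-order a clip according to a list of frame indices."""
    return [frames[i] for i in indices]


# Frame labels from a second camera view of the same scene (placeholder strings).
view_b = ["b0", "b1", "b2", "b3"]

reversed_clip = warp(view_b, reverse(len(view_b)))
slowed_clip = warp(view_b, slow_motion(len(view_b)))
frozen_clip = warp(view_b, freeze(len(view_b), at=2))
```

Pairing an unwarped clip from one view with a warped clip from another gives an example in which both the camera and the timing differ, which is the kind of variation a multi-view dataset alone does not provide.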

Who created SpaceTimePilot?

It was developed by researchers including Zhening Huang, Hyeonho Jeong, Xuelin Chen, Yulia Gryaditskaya, and others from academic institutions.