What is Step 3.5 Flash?

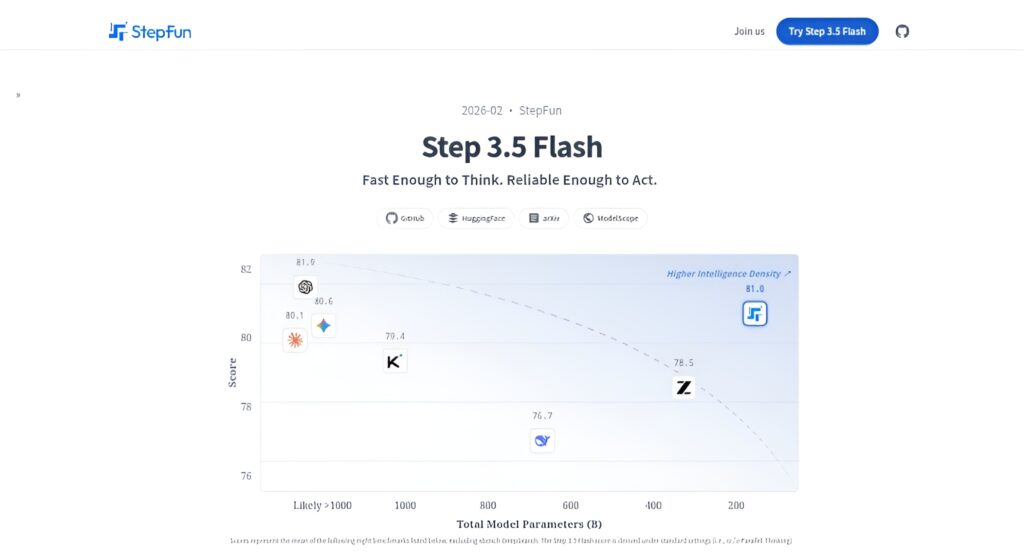

Step 3.5 Flash is StepFun’s open-source sparse mixture-of-experts (MoE) foundation model (196B total parameters, 11B active per token), optimized for fast frontier-level reasoning and agentic tasks, with a 256K-token context window and high throughput.

When was Step 3.5 Flash released?

It was released in early February 2026 (around February 2-3), with weights on Hugging Face and API access shortly after.

Is Step 3.5 Flash free to use?

Yes. The model is open-source under Apache 2.0, so local inference is free; hosted API access through providers such as OpenRouter is billed per token.
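
For hosted access, the sketch below builds a chat request against OpenRouter's OpenAI-compatible endpoint using only the Python standard library. The model slug `stepfun/step-3.5-flash` and the `OPENROUTER_API_KEY` environment variable name are assumptions for illustration; check the provider's model list for the real identifier.

```python
import json
import os
import urllib.request

# OpenRouter's OpenAI-compatible chat completions endpoint.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"

# Hypothetical model slug (assumption): look up the real one in the provider's catalog.
MODEL_ID = "stepfun/step-3.5-flash"


def build_request(prompt: str, api_key: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build, but do not send, a chat completion request."""
    payload = {
        "model": MODEL_ID,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    key = os.environ.get("OPENROUTER_API_KEY")  # assumed variable name
    if key:
        req = build_request("Summarize mixture-of-experts in one sentence.", key)
        with urllib.request.urlopen(req) as resp:
            body = json.load(resp)
        print(body["choices"][0]["message"]["content"])
        # Token counts, when the provider returns them, are what billing is based on.
        print(body.get("usage"))
```

Capping `max_tokens` is a simple way to bound the cost of a single call under token-based pricing.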

What are the key specs of Step 3.5 Flash?

196B total parameters (11B active), 256K-token context, 100-300 tok/s generation (up to 350 tok/s on coding), MTP-3 (multi-token prediction) acceleration, and strong results on math, coding, and agent benchmarks.
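
To make the sparsity concrete, this short calculation uses only the two parameter counts quoted above to show what share of the model participates in each forward pass. It is plain arithmetic, not a description of the routing mechanism.

```python
TOTAL_PARAMS = 196e9   # total parameters, from the specs above
ACTIVE_PARAMS = 11e9   # parameters active per token, from the specs above


def active_fraction(total: float, active: float) -> float:
    """Share of the model's parameters used for a single token."""
    if total <= 0 or not 0 <= active <= total:
        raise ValueError("expected 0 <= active <= total and total > 0")
    return active / total


fraction = active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS)
print(f"Active share per token: {fraction:.1%}")  # Active share per token: 5.6%
```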

How fast is Step 3.5 Flash?

It achieves 100-300 tokens per second in typical use, peaking at 350 tok/s for single-stream coding on high-end hardware.
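
As a rough illustration of what these rates mean in practice, the sketch below converts a decode rate into wall-clock time for a response of a given length. The 2,000-token response length is an arbitrary example, and the estimate ignores prompt processing and network latency.

```python
def generation_seconds(num_tokens: int, tokens_per_second: float) -> float:
    """Time to emit num_tokens at a steady decode rate (prefill not included)."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return num_tokens / tokens_per_second


# Rates taken from the figures quoted above; 2000 tokens is an example length.
for rate in (100, 300, 350):
    seconds = generation_seconds(2000, rate)
    print(f"{rate} tok/s -> {seconds:.1f} s for 2000 tokens")
# 100 tok/s -> 20.0 s for 2000 tokens
# 300 tok/s -> 6.7 s for 2000 tokens
# 350 tok/s -> 5.7 s for 2000 tokens
```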

Can Step 3.5 Flash run locally?

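Yes. With INT4 quantization (GGUF via llama.cpp, or vLLM), it runs on high-memory consumer GPUs and Macs with full 256K context support. Note that at 4 bits per weight the 196B parameters alone occupy roughly 98 GB, so available memory is the main constraint.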
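
Whether a given machine can hold the model comes down mostly to weight memory, which can be estimated from the parameter count and the bits stored per weight. The sketch below is a lower bound under simplifying assumptions: real 4-bit formats store extra scaling data, and the KV cache for long contexts adds more on top.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    if num_params < 0 or bits_per_weight <= 0:
        raise ValueError("num_params must be >= 0 and bits_per_weight > 0")
    return num_params * bits_per_weight / 8 / 1e9


TOTAL_PARAMS = 196e9  # total parameter count quoted in this FAQ

for label, bits in (("16-bit", 16), ("8-bit", 8), ("4-bit", 4)):
    print(f"{label}: ~{weight_memory_gb(TOTAL_PARAMS, bits):.0f} GB")
# 16-bit: ~392 GB
# 8-bit: ~196 GB
# 4-bit: ~98 GB
```

All 196B parameters must be resident even though only 11B are active per token, which is why the total count, not the active count, drives the memory requirement.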

What benchmarks does Step 3.5 Flash excel at?

It leads open models on SWE-bench (74.4%), Terminal-Bench (51.0%), AIME and HMMT math, agentic tasks, and overall reasoning averages.

Who should use Step 3.5 Flash?

Developers building agents and coding tools, researchers working on reasoning models, and enterprises that need efficient local or cloud LLMs for production workflows.