What is StereoSpace?

StereoSpace is a diffusion-based research framework that converts single monocular images into high-quality stereo pairs without using explicit depth maps or warping, relying on viewpoint-conditioned diffusion in a canonical space.

When was StereoSpace released?

The paper was published on arXiv on December 11, 2025, with a Hugging Face demo and GitHub code released around December 16, 2025.

Is StereoSpace free to use?

Yes. The interactive demo on Hugging Face Spaces and the open-source code on GitHub are both free to use; there are no paid tiers or subscriptions.

How does StereoSpace work?

It uses viewpoint-conditioned diffusion to generate stereo geometry end-to-end from a single image, inferring correspondences and filling disocclusions without depth estimation.

Where can I try StereoSpace?

Use the free public demo at huggingface.co/spaces/prs-eth/stereospace_web: upload a photo and the demo generates the stereo views.

Who created StereoSpace?

The paper's authors are Tjark Behrens, Anton Obukhov, Bingxin Ke, Fabio Tosi, Matteo Poggi, and Konrad Schindler; the work is associated with ETH Zurich’s Photogrammetry and Remote Sensing Lab.

What makes StereoSpace better than other methods?

It outperforms warp-and-inpaint, latent-warping, and warped-conditioning approaches on perceptual comfort (iSQoE) and geometric consistency (MEt3R), especially for thin/translucent objects.

Is StereoSpace open-source?

Yes, the code is available on GitHub (prs-eth/stereospace), and the model/demo is hosted on Hugging Face for free use and experimentation.

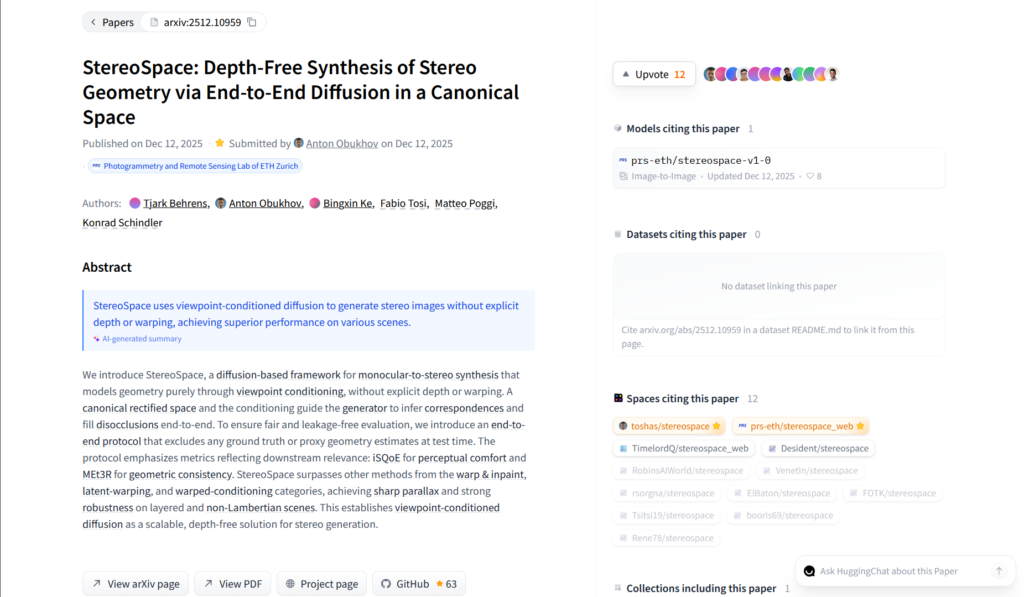

StereoSpace

About This AI

StereoSpace is a diffusion-based framework that converts monocular (single-view) images into stereo pairs, modeling geometry purely through viewpoint conditioning in a canonical rectified space.

It eliminates the need for explicit depth estimation, warping, or ground-truth geometry during inference, achieving end-to-end synthesis of correspondences and disocclusion filling.
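To make "warping" and "disocclusion" concrete, here is a minimal sketch of the kind of warp step that depth-based baselines rely on and that StereoSpace is described as avoiding. It is not StereoSpace's method; the function name, the toy row, and the disparity values are illustrative assumptions.

```python
from typing import List, Optional


def forward_warp_row(row: List[str], disparity: List[int]) -> List[Optional[str]]:
    """Shift each pixel of one rectified image row left by its disparity.

    Pixels with larger disparity are closer to the camera and win conflicts.
    Target positions that no source pixel lands on stay None: these are the
    disoccluded holes that a separate inpainting stage would have to fill.
    """
    width = len(row)
    target: List[Optional[str]] = [None] * width
    best = [-1] * width  # largest disparity written to each target column so far
    for x, (value, d) in enumerate(zip(row, disparity)):
        tx = x - d  # in the right-eye view, content shifts left by its disparity
        if 0 <= tx < width and d > best[tx]:
            target[tx] = value
            best[tx] = d
    return target


# Toy row (hypothetical data): background "b" at disparity 0,
# a foreground object "F" at disparity 2.
row = ["b", "b", "b", "F", "F", "b", "b", "b"]
disparity = [0, 0, 0, 2, 2, 0, 0, 0]

warped = forward_warp_row(row, disparity)
holes = [x for x, value in enumerate(warped) if value is None]
print(warped)
print(holes)
```

The foreground object moves two columns to the left and uncovers two columns of background that the source image never saw. A warp-and-inpaint pipeline needs a depth (disparity) estimate for the shift and a second stage for the holes; according to the description above, StereoSpace produces the target view in a single diffusion pass instead.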

The model excels in challenging scenarios like thin structures, transparencies, layered scenes, and non-Lambertian surfaces, producing sharp parallax and natural stereo effects with superior perceptual comfort and geometric consistency.

The paper (arXiv:2512.10959), by Tjark Behrens, Anton Obukhov, Bingxin Ke, Fabio Tosi, Matteo Poggi, and Konrad Schindler, was published on December 11, 2025. The work is associated with ETH Zurich’s Photogrammetry and Remote Sensing Lab.

It outperforms traditional warp-and-inpaint, latent-warping, and warped-conditioning methods on benchmarks like iSQoE (perceptual stereo comfort) and MEt3R (geometric consistency).

A public interactive demo is available on Hugging Face Spaces, allowing users to upload a single image and generate stereo views.

Code is open-sourced on GitHub (prs-eth/stereospace), and the project page provides additional details and results.

While not a full world model, StereoSpace advances depth-free stereo generation, making it highly relevant for VR/AR content creation, 3D photography, and immersive media from 2D sources.

As a recent research release, it has no published usage figures yet, but it is gaining attention in the computer vision and AI communities for its scalable, geometry-free approach.

Key Features

- Depth-free stereo synthesis: Generates stereo pairs from monocular images without explicit depth maps or warping

- Viewpoint-conditioned diffusion: Models geometry via conditioning in a canonical rectified space for end-to-end inference

- Robust handling of challenges: Excels on thin structures, transparencies, layered scenes, and non-Lambertian surfaces

- Sharp parallax and natural effects: Produces realistic stereo with strong disocclusion filling and correspondence inference

- Perceptual and geometric benchmarks: Superior iSQoE (comfort) and MEt3R (consistency) scores over warp-based methods

- Interactive demo: Upload any image on Hugging Face Spaces to generate a stereo pair

- Open-source code: Full implementation available on GitHub for local running and extension

- Scalable approach: Establishes viewpoint-conditioned diffusion as a viable depth-free alternative for stereo generation

Price Plans

- Free ($0): Completely free research demo on Hugging Face Spaces and open-source code on GitHub; no paid tiers or subscriptions mentioned

- Potential Future (N/A): No commercial or premium plans indicated in paper or project page

Pros

- Eliminates depth dependency: No need for explicit geometry or proxy depth, simplifying pipeline and improving robustness

- High robustness: Handles difficult cases (transparencies, thin objects) better than traditional methods

- Superior quality metrics: Outperforms warp-and-inpaint and latent-warping baselines on perceptual and geometric benchmarks

- Easy demo access: Free interactive web demo on Hugging Face for quick testing

- Open-source availability: Code released on GitHub for research, extension, and local deployment

- Potential VR/AR applications: Enables quick stereo conversion for immersive content from 2D photos

Cons

- Research-focused: Primarily a paper/demo; no production-ready hosted service or API yet

- Limited to stereo pairs: Generates static stereo images, not full 3D models or videos

- Recent release: No widespread adoption or user metrics; still early-stage with potential undiscovered edge cases

- Compute requirements: Diffusion-based inference may be slow on consumer hardware without optimization

- No explicit 3D output: Focuses on stereo views rather than explicit depth maps or meshes

- Demo limitations: Web demo may have queue times or resolution caps during high usage

Use Cases

- 3D photography conversion: Turn 2D photos into stereo pairs for VR/AR viewing or 3D displays

- VR content creation: Quickly generate stereo images from monocular shots for immersive experiences

- Research in stereo vision: Baseline for depth-free geometry synthesis and viewpoint-conditioned diffusion

- Augmented reality prototyping: Create stereo visuals from single images for AR previews

- Creative media: Generate 3D-like effects from 2D artwork or photos for artistic projects

- Film pre-visualization: Test stereo camera setups or depth perception in shots without real stereo capture

Target Audience

- Computer vision researchers: Studying depth-free stereo synthesis and diffusion models

- VR/AR developers: Needing quick stereo conversion for content prototyping

- 3D content creators: Converting 2D images to stereo for immersive media

- Photogrammetry experts: Exploring geometry-free alternatives to traditional stereo matching

- AI enthusiasts: Testing cutting-edge Hugging Face demos for fun or experimentation

- Academic labs: Reproducing or extending the ETH Zurich research

How To Use

- Access demo: Visit huggingface.co/spaces/prs-eth/stereospace_web

- Upload image: Drag and drop or browse a single monocular photo

- Generate stereo: Click process; the model creates left/right views or a side-by-side (SBS) pair

- View result: Download stereo image or view in 3D/VR mode if supported

- Run locally: Clone the GitHub repo (github.com/prs-eth/stereospace), install its dependencies, and load the model

- Custom inputs: Use the scripts provided in the repository for batch or advanced inference

- Experiment: Test on challenging images (transparencies, thin objects) to see robustness
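If you end up with separate left and right views and your viewer expects a side-by-side (SBS) frame, the packing step is simple concatenation. The sketch below is dependency-free and uses nested lists as stand-in images; `pack_side_by_side` is a hypothetical helper, not part of the StereoSpace code, and in practice an image library would do the same job.

```python
from typing import List, Sequence, TypeVar

Pixel = TypeVar("Pixel")


def pack_side_by_side(
    left: Sequence[Sequence[Pixel]],
    right: Sequence[Sequence[Pixel]],
) -> List[List[Pixel]]:
    """Concatenate two equally sized views row by row, left view in the left half."""
    if len(left) != len(right):
        raise ValueError("views must have the same height")
    packed: List[List[Pixel]] = []
    for row_l, row_r in zip(left, right):
        if len(row_l) != len(row_r):
            raise ValueError("views must have the same width")
        packed.append(list(row_l) + list(row_r))
    return packed


# Two tiny 2x2 "images" (hypothetical pixel values).
left_view = [[1, 2], [3, 4]]
right_view = [[5, 6], [7, 8]]

sbs = pack_side_by_side(left_view, right_view)
print(sbs)
```

Which eye belongs in which half depends on the viewer: parallel-view players and most headsets expect the left-eye image on the left, while cross-eyed viewing swaps the two.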

How we rated StereoSpace

- Performance: 4.6/5

- Accuracy: 4.7/5

- Features: 4.5/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.4/5

- Customization: 4.3/5

- Data Privacy: 4.8/5

- Support: 4.2/5

- Integration: 4.4/5

- Overall Score: 4.6/5
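The page does not say how the overall score is derived from the nine category scores. As a quick sanity check, assuming a plain unweighted mean (an assumption, not a stated method), the arithmetic looks like this:

```python
# Category scores copied from the list above.
scores = {
    "Performance": 4.6,
    "Accuracy": 4.7,
    "Features": 4.5,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 4.4,
    "Customization": 4.3,
    "Data Privacy": 4.8,
    "Support": 4.2,
    "Integration": 4.4,
}

# Unweighted mean; the real weighting, if any, is not documented on the page.
mean_score = sum(scores.values()) / len(scores)
print(round(mean_score, 2))
```

The unweighted mean comes to roughly 4.54, slightly below the listed 4.6, so the published figure presumably reflects some weighting or rounding up rather than a simple average.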

StereoSpace integration with other tools

- Hugging Face Spaces: Hosted interactive demo for quick online testing without installation

- GitHub Repository: Full open-source code and implementation for local running and modification

- Potential VR/AR Tools: Stereo outputs can be viewed in side-by-side (SBS) format in VR headsets or stereo viewer apps

- Diffusion Frameworks: Built on standard diffusion pipelines; potentially integrable with ComfyUI or Automatic1111 through extensions

- Research Pipelines: Easily extendable in PyTorch-based computer vision workflows

Best prompts optimised for StereoSpace

- N/A - StereoSpace is an image-to-stereo synthesis tool: it takes a single monocular image as input and generates the stereo pair via viewpoint-conditioned diffusion. No text prompts are required or used; simply provide the input image in the demo or code.

