What is StereoSpace?

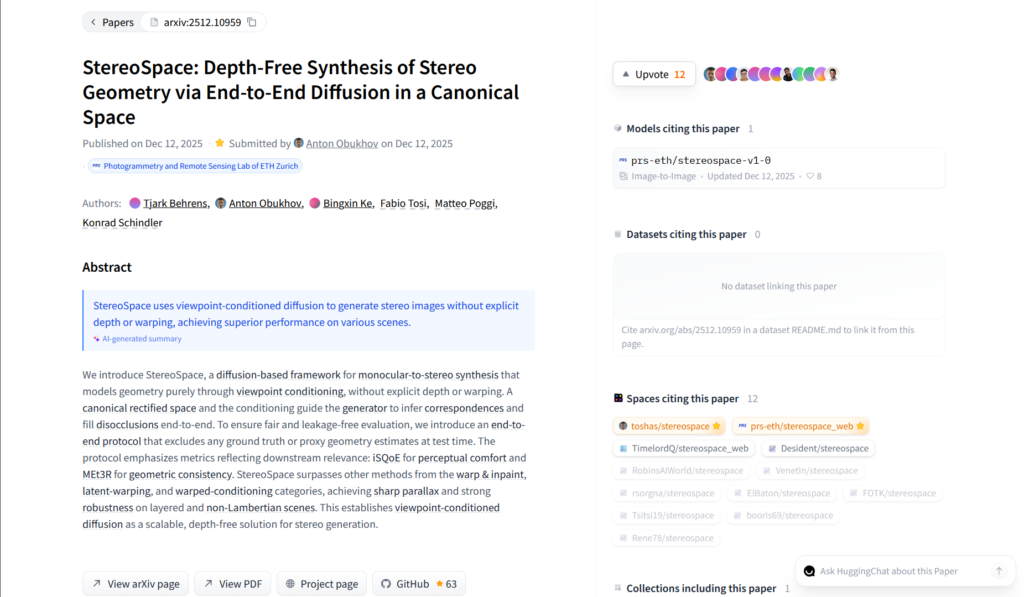

StereoSpace is a diffusion-based research framework that converts a single monocular image into a high-quality stereo pair. It uses no explicit depth maps or warping, relying instead on viewpoint-conditioned diffusion in a canonical space.

When was StereoSpace released?

The paper was published on arXiv on December 11, 2025, with a Hugging Face demo and GitHub code released around December 16, 2025.

Is StereoSpace free to use?

Yes, it is completely free: there is an interactive demo on Hugging Face Spaces and open-source code on GitHub, with no paid tiers or subscriptions.

How does StereoSpace work?

It uses viewpoint-conditioned diffusion to generate stereo geometry end-to-end from a single image, inferring correspondences and filling disocclusions without depth estimation.
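To make "disocclusions" concrete, here is a toy sketch of the depth-based forward warping that StereoSpace avoids. It is not StereoSpace's method and not code from the project; the function name `forward_warp_row`, the one-dimensional scanline, and the integer disparities are all assumptions chosen for illustration. Shifting each pixel by its disparity leaves gaps where the foreground used to hide the background, and a warp-and-inpaint pipeline then has to fill those gaps.

```python
def forward_warp_row(row, disparity):
    """Shift each pixel of one scanline left by its integer disparity.

    Positions that no source pixel lands on stay None. These are the
    disocclusion holes that an inpainting stage would have to fill.
    """
    width = len(row)
    warped = [None] * width
    best_disp = [-1] * width  # simple z-buffer: larger disparity = closer
    for x, (value, d) in enumerate(zip(row, disparity)):
        target = x - d  # in the right-eye view, content shifts left
        if 0 <= target < width and d > best_disp[target]:
            warped[target] = value
            best_disp[target] = d
    return warped


# Toy scanline: background "b" with a near foreground object "F".
left_row = ["b", "b", "b", "F", "F", "b", "b", "b"]
disparity = [0, 0, 0, 2, 2, 0, 0, 0]

right_row = forward_warp_row(left_row, disparity)
print(right_row)
# ['b', 'F', 'F', None, None, 'b', 'b', 'b']
```

The two `None` entries mark background that the left view never saw. An approach that generates the second view end-to-end, as described above, has no separate hole-filling step of this kind.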

Where can I try StereoSpace?

Use the free public demo at huggingface.co/spaces/prs-eth/stereospace_web; upload any photo to generate stereo views instantly.

Who created StereoSpace?

StereoSpace was developed by researchers at ETH Zurich’s Photogrammetry and Remote Sensing Lab: Tjark Behrens, Anton Obukhov, Bingxin Ke, Fabio Tosi, Matteo Poggi, and Konrad Schindler.

What makes StereoSpace better than other methods?

It outperforms warp-and-inpaint, latent-warping, and warped-conditioning approaches on perceptual comfort (iSQoE) and geometric consistency (MEt3R), especially for thin or translucent objects.

Is StereoSpace open-source?

Yes, the code is available on GitHub (prs-eth/stereospace), and the model and demo are hosted on Hugging Face for free use and experimentation.