What is TurboDiffusion?

TurboDiffusion is an open-source framework that accelerates end-to-end video diffusion generation by 100-200x, enabling fast text-to-video and image-to-video generation while maintaining high visual quality.

When was TurboDiffusion released?

It was announced and open-sourced in December 2025; the paper on arXiv is dated December 18, 2025, and the models were updated shortly afterward.

Is TurboDiffusion free to use?

Yes, it is completely free and open-source under Apache 2.0, with code, models, and instructions available on GitHub and Hugging Face.

What hardware is needed for TurboDiffusion?

It runs efficiently on a single high-end GPU such as the RTX 5090; quantized versions are optimized for consumer hardware.

What models does TurboDiffusion support?

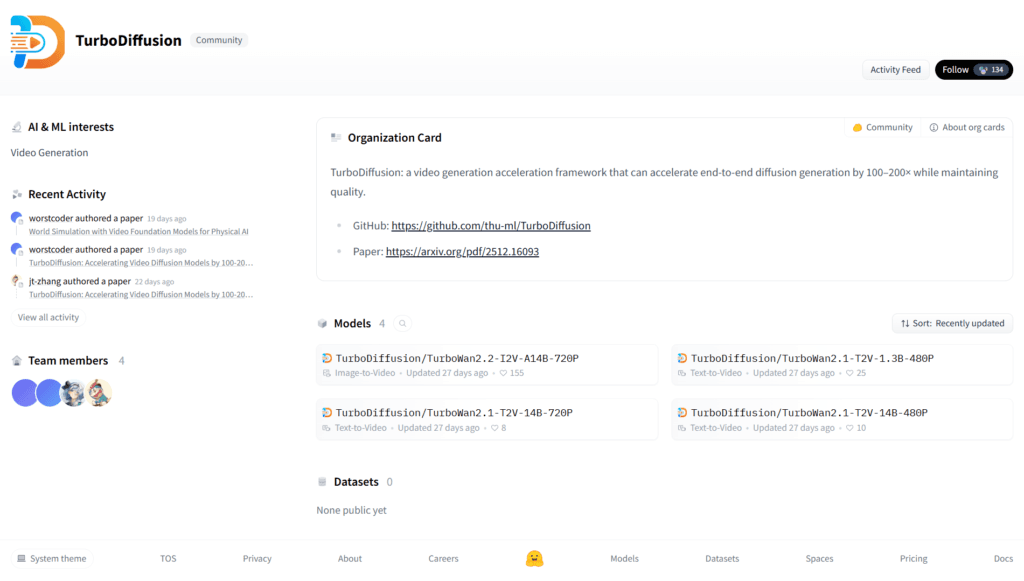

It accelerates Wan-based models: TurboWan2.2-I2V-A14B-720P for image-to-video (I2V), and TurboWan2.1-T2V-1.3B-480P plus 14B variants at 480P and 720P for text-to-video (T2V).

How fast is TurboDiffusion compared to normal video AI?

It achieves a 100-200x speedup on a single RTX 5090, turning minutes-long generation into seconds (e.g., 1.9 s for a 5-second SD clip and 24 s for a 5-second HD clip).
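As a quick consistency check on these figures, the sketch below back-computes the baseline time implied by a given speedup range. It assumes speedup is defined as baseline time divided by accelerated time; the function name is hypothetical, and the outputs are derived only from the numbers quoted above, not measured.

```python
def implied_baseline_range(accelerated_s: float, min_speedup: float,
                           max_speedup: float) -> tuple[float, float]:
    """Return the (low, high) baseline time in seconds implied by a speedup range.

    Assumes speedup = baseline_time / accelerated_time.
    """
    if accelerated_s <= 0 or min_speedup <= 0 or max_speedup < min_speedup:
        raise ValueError("expected positive time and 0 < min_speedup <= max_speedup")
    return accelerated_s * min_speedup, accelerated_s * max_speedup


# Quoted accelerated times for 5-second clips; the 100-200x range is applied to each.
for label, seconds in [("SD clip", 1.9), ("HD clip", 24.0)]:
    low, high = implied_baseline_range(seconds, 100, 200)
    print(f"{label}: {seconds} s accelerated -> roughly {low / 60:.1f} to {high / 60:.1f} min baseline")
```

If the full 100-200x range applied to each case, the quoted times would correspond to baselines of a few minutes (SD) and tens of minutes (HD); the actual per-model speedups may sit anywhere within, or outside, that range.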

Who developed TurboDiffusion?

TurboDiffusion was jointly developed by Tsinghua University’s TSAIL Lab and ShengShu Technology.

Can TurboDiffusion run locally without internet?

Yes, after downloading models and code, it runs fully offline on your GPU for private, uncensored generation.

TurboDiffusion

About This AI

TurboDiffusion is an open-source acceleration framework developed by Tsinghua University’s TSAIL Lab and ShengShu Technology, designed to dramatically speed up end-to-end video diffusion generation by 100 to 200 times while maintaining high visual quality.

It achieves this through a combination of attention acceleration (low-bit SageAttention and trainable Sparse-Linear Attention), step distillation using rCM, W8A8 quantization for linear layers, and various engineering optimizations.
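To make the W8A8 idea concrete, here is a minimal pure-Python sketch of an 8-bit linear layer using symmetric per-tensor quantization: weights and activations are each mapped to int8 with a single scale, multiplied with integer accumulation, and rescaled to floats. This is a generic textbook scheme for illustration only; TurboDiffusion's actual kernels, scale granularity, and calibration are not described here, and the function names are hypothetical.

```python
def quantize_int8(values: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor quantization: map floats to int8 in [-127, 127] with one scale."""
    max_abs = max((abs(v) for v in values), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(v / scale))) for v in values]
    return q, scale


def w8a8_linear(x: list[float], weight: list[list[float]]) -> list[float]:
    """Compute y = W x with int8 weights (W8) and int8 activations (A8).

    weight is a list of rows (out_features x in_features). Products are summed
    as Python ints (standing in for int32 accumulators), then dequantized by
    multiplying with both scales.
    """
    in_features = len(x)
    if any(len(row) != in_features for row in weight):
        raise ValueError("every weight row must have len(x) entries")
    x_q, x_scale = quantize_int8(x)
    w_q_flat, w_scale = quantize_int8([w for row in weight for w in row])
    out = []
    for r in range(len(weight)):
        row_q = w_q_flat[r * in_features:(r + 1) * in_features]
        acc = sum(wq * xq for wq, xq in zip(row_q, x_q))  # integer accumulation
        out.append(acc * w_scale * x_scale)  # dequantize back to float
    return out


def fp_linear(x: list[float], weight: list[list[float]]) -> list[float]:
    """Full-precision reference for comparison."""
    return [sum(w * v for w, v in zip(row, x)) for row in weight]


x = [0.5, -1.2, 0.3, 2.0]
W = [[0.1, -0.4, 0.25, 0.05], [-0.3, 0.2, 0.15, -0.1]]
print("float:", fp_linear(x, W))
print("w8a8: ", w8a8_linear(x, W))
```

The two outputs agree to within a few thousandths here. Production W8A8 implementations typically run the integer matmul on GPU tensor cores and often use finer-grained (per-channel or per-token) scales; this sketch only shows the arithmetic.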

The framework supports text-to-video (T2V) and image-to-video (I2V) generation, with models like TurboWan2.2-I2V-A14B-720P, TurboWan2.1-T2V-1.3B-480P, and larger 14B variants for 480P/720P resolutions.

Tested on a single RTX 5090 GPU, it reduces generation time for 5-second clips from minutes to seconds (e.g., 1.9s for SD, 24s for HD), enabling near-real-time video synthesis.

Released in December 2025 with full code, checkpoints on Hugging Face, and a paper on arXiv, it is licensed under Apache 2.0 for local deployment and research use.

It is aimed at creators, researchers, and developers who need fast, high-quality AI video generation without massive compute resources, and it marks a step toward practical real-time AI video creation.

Key Features

- 100-200x acceleration: Dramatically speeds up end-to-end video diffusion generation

- Attention optimization: Uses low-bit SageAttention and trainable Sparse-Linear Attention for faster computation

- Step distillation: Applies rCM for efficient timestep reduction while preserving quality

- W8A8 quantization: Quantizes linear-layer weights and activations to 8 bits for faster computation and a smaller model

- Text-to-Video support: Generates videos from prompts with models like TurboWan2.1-T2V variants

- Image-to-Video support: Animates images into videos using TurboWan2.2-I2V models

- High-resolution output: Supports 480P and 720P resolutions while retaining quality

- Single-GPU efficiency: Runs accelerated inference on consumer hardware like RTX 5090

- Open-source implementation: Full code, checkpoints, and easy deployment via GitHub/Hugging Face
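The attention-optimization feature can be illustrated with a toy single-query attention that keeps only the top-k highest-scoring keys before the softmax. This is a generic top-k sparse attention sketch, not TurboDiffusion's Sparse-Linear Attention (which, as the name indicates, combines sparse and linear attention and is trainable) nor SageAttention's low-bit kernels; the function and variable names are hypothetical.

```python
import math


def attention(query, keys, values, top_k=None):
    """Single-query scaled dot-product attention.

    If top_k is given, only the top_k highest-scoring keys are kept; the others
    are dropped before the softmax, so their values are never read.
    """
    if top_k is not None and top_k < 1:
        raise ValueError("top_k must be >= 1")
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    indices = range(len(keys))
    if top_k is not None:
        # Keep the indices of the top_k largest scores (descending order).
        indices = sorted(indices, key=lambda i: scores[i], reverse=True)[:top_k]
    m = max(scores[i] for i in indices)  # subtract the max for numerical stability
    weights = {i: math.exp(scores[i] - m) for i in indices}
    total = sum(weights.values())
    dim_v = len(values[0])
    return [sum(weights[i] * values[i][j] for i in indices) / total for j in range(dim_v)]


query = [1.0, 0.0]
keys = [[1.0, 0.0], [0.9, 0.1], [-1.0, 0.0], [0.0, 1.0]]
values = [[1.0, 0.0], [0.8, 0.2], [-1.0, 0.0], [0.0, 1.0]]
dense = attention(query, keys, values)
sparse = attention(query, keys, values, top_k=2)
print("dense: ", dense)
print("sparse:", sparse)
```

This toy still computes every score in order to choose the top k, so it only demonstrates the selection logic; practical sparse-attention kernels typically predict which blocks of keys matter and skip the rest without computing their scores in full.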

Price Plans

- Free ($0): Fully open-source under Apache 2.0 with all code, model checkpoints, and deployment scripts available on GitHub and Hugging Face; no costs or subscriptions required

Pros

- Extreme speed gains: 100-200x faster than baseline diffusion models

- Quality preservation: Maintains visual fidelity comparable to the original models

- Accessible on consumer hardware: Works on a single high-end GPU without requiring large clusters

- Fully open-source: Apache 2.0 license with code, weights, and paper freely available

- Supports multiple resolutions: Handles 480P and 720P video generation efficiently

- Engineering optimizations: Combines multiple techniques for practical real-time use

- Research and creative potential: Enables rapid iteration in video AI workflows

Cons

- Requires strong GPU: Optimal performance needs an RTX 5090 or equivalent

- Setup complexity: Local installation involves dependencies, model downloads, and configuration

- Limited to supported models: Accelerates specific Wan-based variants; not universal

- Early-stage framework: Released late 2025 with potential for bugs or refinements

- No hosted demo: Primarily for local/self-hosted use; no simple online interface

- Video length constraints: Best for short clips; longer generations may still be slower

- Quantization trade-offs: Minor quality drops possible in extreme cases

Use Cases

- Rapid video prototyping: Generate short clips quickly for creative testing or storyboarding

- Research in video AI: Experiment with accelerated diffusion for faster iteration cycles

- Content creation acceleration: Produce social media videos, ads, or animations in seconds

- Simulation and visualization: Create dynamic scenes for games, VFX, or educational demos

- Local AI video generation: Run uncensored, private video synthesis on personal hardware

- High-throughput batch generation: Leverage speed for large-scale video datasets

- Real-time applications exploration: Prototype interactive or near-instant video tools

Target Audience

- AI researchers: Studying fast diffusion models and video generation techniques

- Video content creators: Needing quick, high-quality AI video output

- Game developers: Prototyping animations or procedural content

- Developers and hobbyists: Running local, open-source video AI without cloud costs

- VFX artists: Accelerating pre-vis or short clip generation

- Tech enthusiasts: Experimenting with frontier acceleration frameworks

How To Use

- Clone repo: git clone https://github.com/thu-ml/TurboDiffusion

- Install dependencies: Follow the README for PyTorch, diffusers, and other requirements

- Download models: Get checkpoints from Hugging Face (e.g., TurboDiffusion/TurboWan2.2-I2V-A14B-720P)

- Run inference: Use the provided scripts, supplying a prompt, an input image for I2V, and generation settings

- Generate video: Run the T2V or I2V command; adjust steps and resolution as needed

- Optimize hardware: Use quantized versions on RTX 5090 for maximum speedup

- Experiment: Tweak parameters like guidance scale or add negative prompts
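The clone and checkpoint-download steps above can be scripted. The sketch below only assembles the commands and prints them unless `dry_run=False`; it assumes `git` and the Hugging Face `huggingface-cli` tool (installed with the `huggingface_hub` package) are available, and the helper names and target directory are hypothetical. Dependency installation and the inference commands are deliberately left out because they depend on the scripts documented in the repository README.

```python
import subprocess

REPO_URL = "https://github.com/thu-ml/TurboDiffusion"
MODEL_ID = "TurboDiffusion/TurboWan2.2-I2V-A14B-720P"  # example checkpoint named above


def setup_commands(repo_url: str, model_id: str, checkpoint_dir: str) -> list[list[str]]:
    """Return the clone and checkpoint-download commands as argument lists (hypothetical helper)."""
    return [
        ["git", "clone", repo_url],
        ["huggingface-cli", "download", model_id, "--local-dir", checkpoint_dir],
    ]


def run(commands: list[list[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)  # stop at the first failing step


# Dry run by default: prints the commands without touching the network.
run(setup_commands(REPO_URL, MODEL_ID, "checkpoints/TurboWan2.2-I2V-A14B-720P"))
```

Keeping the commands as argument lists (rather than one shell string) avoids shell-quoting issues when paths contain spaces.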

How we rated TurboDiffusion

- Performance: 4.9/5

- Accuracy: 4.7/5

- Features: 4.6/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.3/5

- Customization: 4.5/5

- Data Privacy: 5.0/5

- Support: 4.2/5

- Integration: 4.4/5

- Overall Score: 4.7/5

TurboDiffusion integration with other tools

- Hugging Face: Model checkpoints and inference pipelines hosted for easy download and use

- GitHub Repository: Full open-source code, examples, and community contributions

- Diffusion Frameworks: Compatible with Diffusers library for custom pipelines

- Local GPU Hardware: Runs on consumer NVIDIA GPUs with CUDA support

- Video Editing Tools: Export generated clips in MP4 for import into Premiere, DaVinci, or CapCut

Best prompts optimised for TurboDiffusion

- A serene mountain lake at dawn with mist rising from the water, golden sunlight breaking over peaks, slow cinematic pan across the landscape, realistic style, 720p

- Futuristic cyberpunk city street at night, neon lights reflecting on wet pavement, flying cars zooming overhead, dynamic camera tracking shot, high detail

- Anime-style magical girl transforming in a burst of colorful energy, sparkles and wind effects, dramatic pose, vibrant colors, smooth animation

- Close-up of a chef expertly slicing fresh vegetables in a modern kitchen, steam rising, macro lens focus, warm lighting, realistic motion

- Epic space battle between starships in a nebula, lasers firing, explosions, sweeping orbital camera movement, cinematic sci-fi style
