What is TwinFlow?

TwinFlow is an open-source framework (ICLR 2026) for training large-scale, few-step image generators with self-adversarial flows, enabling high-quality 1-step generation without external discriminators.

When was TwinFlow released?

The repository and paper appeared around December 2025, and the paper was accepted to ICLR 2026; experimental models such as Z-Image-Turbo were released shortly after.

Is TwinFlow free to use?

Yes. It is fully open-source under the Apache-2.0 license, with code, inference scripts, and models available on GitHub and Hugging Face at no cost.

How many steps does TwinFlow need for generation?

It achieves high-quality results in 1 NFE (number of function evaluations, i.e., one sampling step), with 2–4 steps recommended for optimal diversity and fidelity.
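To make "NFE" concrete, here is a minimal sketch of a generic Euler ODE sampler; it is not TwinFlow's own sampler or API. It assumes a toy 1-D setting in which t = 1 is noise and t = 0 is data, and the names euler_sample and toy_velocity are hypothetical. Each step calls the velocity model once, so NFE equals the step count.

```python
from typing import Callable, List, Tuple


def euler_sample(
    velocity: Callable[[float, float], float],
    x_start: float,
    num_steps: int,
) -> Tuple[float, int]:
    """Integrate dx/dt = velocity(x, t) from t = 1 (noise) to t = 0 (data).

    Each step calls the velocity model exactly once, so the number of
    function evaluations (NFE) equals num_steps.
    """
    # Uniform time grid 1.0 -> 0.0 with num_steps intervals.
    times: List[float] = [1.0 - i / num_steps for i in range(num_steps + 1)]
    x, nfe = x_start, 0
    for t_cur, t_next in zip(times[:-1], times[1:]):
        v = velocity(x, t_cur)         # one network pass = one NFE
        nfe += 1
        x = x + (t_next - t_cur) * v   # Euler update; t_next < t_cur
    return x, nfe


# Hypothetical toy "model": the exact velocity of a straight path that
# ends at the single data point 2.0, i.e. x_t = (1 - t) * 2.0 + t * noise.
def toy_velocity(x: float, t: float) -> float:
    return (x - 2.0) / t


if __name__ == "__main__":
    for steps in (1, 4):
        sample, nfe = euler_sample(toy_velocity, x_start=0.5, num_steps=steps)
        print(f"steps={steps} NFE={nfe} sample={sample:.4f}")
```

On this perfectly straight toy path, both the 1-step and 4-step runs land on 2.0. Learned velocity fields are generally not straight, so a single Euler step from a standard flow model tends to miss; few-step generators such as TwinFlow are trained so that very small step counts still yield good samples.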

What models does TwinFlow support?

Key releases include TwinFlow-Qwen-Image-v1.0 and Z-Image-Turbo; the framework is compatible with SD3.5, OpenUni, and Qwen-Image base models.

Does TwinFlow work with ComfyUI?

Yes. Community custom nodes such as ComfyUI-TwinFlow provide integration for node-based workflows.

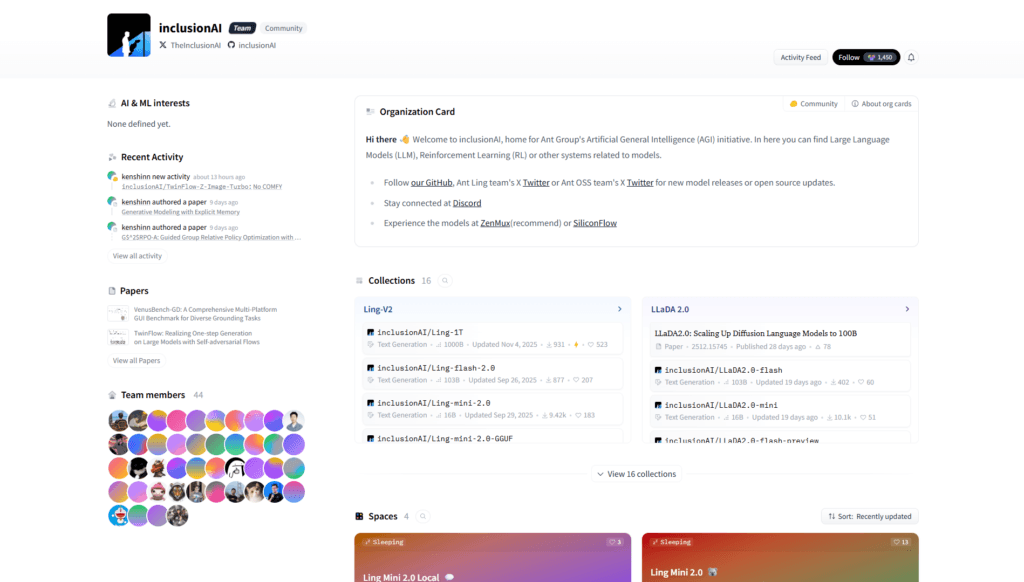

Who created TwinFlow?

It was developed by researchers from LINs Lab and InclusionAI (Zhenglin Cheng, Peng Sun, Jianguo Li, and Tao Lin).

What are TwinFlow’s main advantages?

It simplifies the training pipeline (no teacher models or discriminators), scales to large models, and achieves 1-step generation with strong benchmark results, such as a GenEval score of 0.83.

TwinFlow

About This AI

TwinFlow is an open-source research framework, accepted to ICLR 2026, that enables high-fidelity one-step and few-step image generation on large-scale models without external discriminators or frozen teachers.

It uses a self-adversarial mechanism with twin trajectories in an extended time domain (t in [-1, 1]), where the negative-time branch generates fake data that is used to rectify the flow field through velocity matching.

This simple yet effective approach achieves distribution matching internally, transforming models into efficient few-step generators.
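The velocity matching mentioned above can be illustrated with the generic flow-matching objective that such methods build on. This is a minimal sketch under stated assumptions, not TwinFlow's loss: it uses a straight-line path between data and Gaussian noise, 1-D toy data, and a hypothetical function name (flow_matching_loss), and it omits the negative-time branch that TwinFlow uses to generate fake samples.

```python
import random
from typing import Callable, List


def flow_matching_loss(
    model: Callable[[float, float], float],
    data: List[float],
    rng: random.Random,
) -> float:
    """Monte Carlo estimate of a generic velocity-matching loss.

    For each data point x0: draw noise eps ~ N(0, 1) and t in (0, 1],
    form the straight-line interpolant x_t = (1 - t) * x0 + t * eps,
    and penalize the squared gap between the predicted velocity and the
    path's true velocity eps - x0.
    """
    total = 0.0
    for x0 in data:
        eps = rng.gauss(0.0, 1.0)
        t = 1.0 - rng.random()          # rng.random() is in [0, 1), so t is in (0, 1]
        x_t = (1.0 - t) * x0 + t * eps
        target_v = eps - x0             # d(x_t)/dt along the straight path
        pred_v = model(x_t, t)
        total += (pred_v - target_v) ** 2
    return total / len(data)


def oracle_velocity(x: float, t: float) -> float:
    # Exact velocity when the toy dataset is the single point 2.0.
    return (x - 2.0) / t


def zero_velocity(x: float, t: float) -> float:
    # Untrained baseline that always predicts zero velocity.
    return 0.0


if __name__ == "__main__":
    rng = random.Random(0)
    data = [2.0] * 100                  # toy dataset: one point, repeated
    print(flow_matching_loss(oracle_velocity, data, rng))  # near 0
    print(flow_matching_loss(zero_velocity, data, rng))    # around E[(eps - 2)^2] = 5
```

Each straight conditional path has a constant target velocity, but a model trained this way learns an average over many such paths, and that averaged field is generally curved. Bridging that gap is what few-step methods, including TwinFlow's self-adversarial correction, aim to address.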

Key models include TwinFlow-Qwen-Image-v1.0 and the experimental Z-Image-Turbo for faster inference.

It supports scalable full-parameter training (e.g., on the 20B-parameter Qwen-Image) and inference in 1–4 NFEs with strong quality and diversity.

It is compatible with Hugging Face Diffusers, scores 0.83 on GenEval at 1 NFE, and matches multi-step (100-NFE) baselines at a fraction of the compute.

The repository provides inference scripts, sampler configs, training code for SD3.5 and OpenUni, and tutorials (e.g., an MNIST example).

Community integrations exist for ComfyUI workflows.

Built by LINs Lab and InclusionAI contributors, it is licensed under Apache-2.0 and focuses on simplifying generative pipelines for large models.

Key Features

- One-step and few-step generation: High-quality images in 1–4 NFEs without auxiliary networks

- Self-adversarial training: Internal twin trajectories for fake data generation and flow rectification

- Extended time domain: t in [-1, 1], enabling a negative-time branch that supplies adversarial signals

- Velocity matching loss: Minimizes the difference between real and fake trajectory velocities

- Large-scale scalability: Full-parameter training on models such as the 20B-parameter Qwen-Image

- Diffusers compatibility: Easy inference with Hugging Face Diffusers library

- Custom sampler support: Configurable stochastic/extrapolation ratios and time controls

- Training code release: Available for SD3.5 and OpenUni under the src directory

- ComfyUI integrations: Community workflows for visual node-based usage

- Tutorials and demos: MNIST example and inference scripts provided

Price Plans

- Free ($0): Fully open-source under Apache-2.0; download models/code and run locally at no cost

Pros

- Pipeline simplicity: No external discriminators or teachers required

- High efficiency: 1-NFE generation with quality rivaling multi-step methods

- Strong benchmarks: GenEval 0.83 at 1-NFE, matches 100-NFE baselines at low cost

- Open-source and scalable: Apache-2.0 license with training/inference code

- Community support: ComfyUI nodes and WeChat groups for discussion

- Research impact: Accepted to ICLR 2026, innovative self-adversarial approach

Cons

- Experimental nature: Research-focused; may require tuning for production use

- High compute for training: Full-parameter large-model training needs significant GPUs

- Limited pre-trained models: Few released checkpoints (Qwen-Image and Z-Image-Turbo variants)

- Setup complexity: Requires installing Diffusers from its GitHub source and configuring a custom sampler

- No hosted UI: Local/run-your-own only; no web demo or cloud service

- Early-stage repo: Ongoing updates, no formal releases yet

Use Cases

- Fast image generation research: Experiment with one/few-step diffusion alternatives

- Large-model distillation: Apply self-adversarial flows to scale efficient generators

- ComfyUI workflows: Integrate via community nodes for node-based creative pipelines

- High-diversity sampling: Achieve high quality with minimal NFEs for resource-constrained inference

- Text-to-image acceleration: Speed up models like Qwen-Image or SD3.5 variants

Target Audience

- AI researchers: Studying few-step generation and flow matching

- Generative model developers: Building efficient large-scale diffusion models

- ComfyUI users: Seeking faster inference nodes for image generation

- Open-source contributors: Experimenting with self-adversarial techniques

- Academic labs: Reproducing ICLR 2026 results or extending the framework

How To Use

- Clone repo: git clone https://github.com/inclusionAI/TwinFlow

- Install dependencies: pip install git+https://github.com/huggingface/diffusers

- Download models: Get TwinFlow-Qwen-Image or Z-Image-Turbo from Hugging Face

- Run inference: python inference.py (configure the sampler for 2–4 steps)

- Customize sampler: Edit sampling_steps, stochast_ratio, etc. in the sampler config

- Train your own: Use the training code in src for SD3.5/OpenUni (requires substantial GPU compute)

- ComfyUI: Install community nodes like ComfyUI-TwinFlow for GUI workflow

How we rated TwinFlow

- Performance: 4.7/5

- Accuracy: 4.6/5

- Features: 4.5/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.0/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.2/5

- Integration: 4.4/5

- Overall Score: 4.6/5
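The page does not state how the overall score is computed. Assuming it is an unweighted mean of the nine category scores (an assumption, not a documented method), the arithmetic is consistent with the listed 4.6/5:

```python
# Category scores as listed above (out of 5).
ratings = {
    "Performance": 4.7,
    "Accuracy": 4.6,
    "Features": 4.5,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 4.0,
    "Customization": 4.8,
    "Data Privacy": 5.0,
    "Support": 4.2,
    "Integration": 4.4,
}

# Assumption: the overall score is an unweighted mean (the page does not say).
mean_score = sum(ratings.values()) / len(ratings)
print(f"{mean_score:.3f} -> {round(mean_score, 1)}")  # prints 4.578 -> 4.6
```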

TwinFlow integration with other tools

- Hugging Face Diffusers: Native compatibility for inference and model loading

- ComfyUI Custom Nodes: Community implementations like ComfyUI-TwinFlow for node-based workflows

- Stable Diffusion Ecosystem: Works with SD3.5 and OpenUni-based pipelines

- Python Scripts: Direct inference.py and training code for custom setups

- Research Frameworks: Built on RCGM and UCGM repos for flow-matching extensions

Best prompts optimised for TwinFlow

- A cyberpunk cityscape at night with neon lights reflecting on wet streets, flying cars, detailed architecture, cinematic lighting, high resolution, 8k

- A serene Japanese garden in spring with cherry blossoms falling, koi pond, traditional pagoda, soft sunlight filtering through trees, photorealistic, ultra detailed

- Epic fantasy warrior princess riding a dragon over misty mountains at dawn, dramatic clouds, golden hour lighting, dynamic composition, masterpiece quality

- Minimalist product shot of a sleek modern smartphone on black background with subtle reflections, studio lighting, high detail, commercial photography style

- Surreal dreamscape of floating islands with waterfalls cascading into the sky, vibrant colors, magical atmosphere, intricate details, artstation trending
