What is UniSH?

UniSH is a research AI model and framework for joint metric-scale 3D reconstruction of scenes and humans from monocular video in a single feed-forward pass.

Who created UniSH?

UniSH was developed by researchers at the Hong Kong University of Science and Technology (Murphy Li and team) and released on arXiv in January 2026.

Is UniSH free to use?

Yes. It is an open academic research project: the paper is freely available, code and weights are likely to be released for free, and there is no commercial pricing.

What does UniSH reconstruct?

It jointly recovers high-fidelity scene geometry, human point clouds, camera parameters, and coherent metric-scale SMPL bodies from video.

What input does UniSH take?

It takes monocular video (footage from a single camera) as input; no depth sensors or multi-view setup are needed.

Is UniSH real-time capable?

The feed-forward design enables efficient inference, though real-time performance depends on hardware and optimization.
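Whether a given machine keeps up with live video comes down to simple arithmetic: the average time per frame must fit within the frame budget (about 33.3 ms at 30 fps). The sketch below assumes you wrap inference in a callable, here the hypothetical `run_clip`, that processes a whole clip in one pass; it is not part of any UniSH API.

```python
import time
from typing import Callable, Sequence


def frame_budget_ms(target_fps: float) -> float:
    """Time available per frame, in milliseconds, at a target frame rate."""
    return 1000.0 / target_fps


def ms_per_frame(run_clip: Callable[[Sequence[object]], object],
                 frames: Sequence[object]) -> float:
    """Average wall-clock milliseconds per frame for one pass over a clip."""
    start = time.perf_counter()
    run_clip(frames)  # hypothetical wrapper: one feed-forward pass over all frames
    elapsed = time.perf_counter() - start
    return 1000.0 * elapsed / len(frames)


def is_realtime(avg_ms: float, target_fps: float = 30.0) -> bool:
    """True if the average per-frame time fits within the frame budget."""
    return avg_ms <= frame_budget_ms(target_fps)
```

Note that a good average is not the whole story: if the model consumes a whole clip at once, no output is available until the clip has been captured, so live use would also need a windowed or streaming setup.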

Where can I find UniSH code?

Check the project page (murphylmf.github.io/UniSH/) or the arXiv paper (2601.01222) for the code release; the implementation is likely hosted on GitHub.

What are UniSH’s main applications?

It is suited to AR/VR, robotics simulation, motion capture, VFX, autonomous systems, and computer vision research.

UniSH

About This AI

UniSH is a cutting-edge research project and AI model that unifies scene and human reconstruction in a single feed-forward pass.

It takes monocular video as input to jointly recover high-fidelity metric-scale 3D scene geometry, human point clouds, camera parameters, and coherent SMPL bodies.

The framework bridges strong priors from scene reconstruction and human motion recovery (HMR), using a Reconstruction Branch for per-frame camera extrinsics/confidence/pointmaps and a Human Body Branch for global SMPL shape and per-frame pose parameters.
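The split between the two branches can be pictured as two containers: per-frame scene outputs, and one shape vector with a pose per frame. The class and field names below are hypothetical, and the sizes assume the standard SMPL layout (10 shape coefficients; 24 joints times 3 axis-angle values = 72 pose values). The actual release may organize its outputs differently.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class ReconstructionFrame:
    """Hypothetical per-frame output of the Reconstruction Branch."""
    extrinsics: List[List[float]]    # 4x4 camera pose matrix
    pointmap: List[List[Vec3]]       # H x W grid of 3D points
    confidence: List[List[float]]    # H x W per-pixel confidence


@dataclass
class HumanBody:
    """Hypothetical Human Body Branch output for one person across a clip."""
    betas: List[float]               # global SMPL shape, shared by all frames
    poses: List[List[float]]         # one SMPL pose vector per frame

    def __post_init__(self) -> None:
        # Assumes the common 10-coefficient SMPL shape space.
        if len(self.betas) != 10:
            raise ValueError("expected 10 SMPL shape coefficients")
        # Assumes 24 joints x 3 axis-angle values (global orientation included).
        for pose in self.poses:
            if len(pose) != 72:
                raise ValueError("expected 72 SMPL pose values per frame")
```

Keeping `betas` outside the per-frame list mirrors the description above: body shape is estimated once for the clip, while pose is estimated per frame.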

Features from both branches are fused by AlignNet, which predicts a global scene scale and per-frame SMPL translations to align the humans with the scene.
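Conceptually, the two AlignNet outputs could be applied as follows: one global scale factor turns up-to-scale scene points into metric units, and a per-frame translation places each SMPL body (metric by construction) into that scene. This is a minimal sketch under those assumptions; the function names are hypothetical, and the paper may apply scale and translation differently (for example, in camera rather than world coordinates).

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def to_metric(points: List[Vec3], scale: float) -> List[Vec3]:
    """Rescale up-to-scale scene points by a single global factor."""
    return [(scale * x, scale * y, scale * z) for x, y, z in points]


def place_body(vertices: List[Vec3], translation: Vec3) -> List[Vec3]:
    """Shift body-centred SMPL vertices by one frame's predicted translation."""
    tx, ty, tz = translation
    return [(x + tx, y + ty, z + tz) for x, y, z in vertices]
```

Because one scale is shared by the whole clip, every frame of the scene is rescaled consistently, which is what keeps scene and human sizes from drifting apart over time.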

It refines human surface details through robust distillation from an expert depth model, and it uses a two-stage supervision scheme: coarse training on synthetic data, followed by fine-tuning on real data that optimizes geometric correspondence.
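The listing only says the distillation is "robust". One common way to make a distillation term tolerant of occasional errors in the expert's depth is a Huber (smooth-L1) penalty, sketched below over pixels inside a human mask. Treat this as a generic stand-in: the loss, the `delta` value, and the masking are assumptions, not UniSH's published objective.

```python
from typing import Sequence


def huber(residual: float, delta: float = 0.1) -> float:
    """Quadratic for small residuals, linear beyond delta (limits outlier influence)."""
    a = abs(residual)
    return 0.5 * a * a if a <= delta else delta * (a - 0.5 * delta)


def distill_loss(pred_depth: Sequence[float], expert_depth: Sequence[float],
                 human_mask: Sequence[bool], delta: float = 0.1) -> float:
    """Mean Huber penalty between predicted and expert depth over human pixels.

    Returns 0.0 when the mask selects no pixels.
    """
    terms = [huber(p - e, delta)
             for p, e, m in zip(pred_depth, expert_depth, human_mask) if m]
    return sum(terms) / len(terms) if terms else 0.0
```

The linear tail means a pixel where the expert is badly wrong contributes a bounded gradient instead of dominating the loss, which is the usual motivation for a robust penalty here.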

UniSH achieves state-of-the-art performance on human-centric scene reconstruction and highly competitive results on global human motion estimation.

It handles challenging dynamic scenes with strong spatial-temporal coherence in a single forward pass.

It was released as open research in January 2026 with a project page and an arXiv paper; code is likely to be available on GitHub.

It is aimed primarily at computer vision researchers and 3D reconstruction developers, and at work in AR/VR, robotics, animation, and motion analysis.

There is no commercial pricing or hosted service; the project is fully academic and open-source oriented.

Key Features

- Joint 3D scene and human reconstruction: Recovers scene geometry, human point clouds, cameras, and SMPL bodies together

- Feed-forward single-pass inference: No iterative optimization; processes video in one forward network pass

- Monocular video input: Works with ordinary single-camera footage without depth sensors

- Metric-scale output: Produces coherent reconstructions at real-world scale

- Reconstruction Branch: Predicts per-frame camera extrinsics, confidence maps, and pointmaps

- Human Body Branch: Estimates global SMPL shape and per-frame pose parameters

- AlignNet fusion: Aligns scene and human via global scale and per-frame translations

- Robust human detail distillation: Refines human surfaces by distilling from an expert depth model for high-fidelity humans

- Two-stage supervision: Coarse synthetic pretraining followed by real-data fine-tuning on geometric correspondence

- Strong spatial-temporal coherence: Handles dynamic scenes with consistent geometry and motion
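Because the Reconstruction Branch predicts per-frame camera extrinsics alongside per-frame pointmaps and confidence maps, the frames can be merged into a single scene point cloud. This sketch assumes the extrinsics are camera-to-world 4x4 matrices, that each pointmap is expressed in its own camera's frame and flattened to one list of points, and that 0.5 is a sensible confidence cutoff; all three are illustrative assumptions. If the release stores world-to-camera poses or world-frame pointmaps, invert or skip the transform.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def cam_to_world(points: Sequence[Vec3],
                 pose: Sequence[Sequence[float]]) -> List[Vec3]:
    """Apply a 4x4 camera-to-world matrix [R | t] to camera-frame points."""
    world = []
    for x, y, z in points:
        world.append(tuple(
            pose[i][0] * x + pose[i][1] * y + pose[i][2] * z + pose[i][3]
            for i in range(3)))
    return world


def fuse_frames(pointmaps: Sequence[Sequence[Vec3]],
                poses: Sequence[Sequence[Sequence[float]]],
                confidences: Sequence[Sequence[float]],
                min_conf: float = 0.5) -> List[Vec3]:
    """Merge per-frame points into one world-frame cloud, skipping low-confidence points."""
    fused: List[Vec3] = []
    for points, pose, conf in zip(pointmaps, poses, confidences):
        kept = [p for p, c in zip(points, conf) if c >= min_conf]
        fused.extend(cam_to_world(kept, pose))
    return fused
```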

Price Plans

- Free ($0): Fully open academic/research project with paper, project page, and likely code/weights on GitHub; no cost for use, implementation, or experimentation

Pros

- State-of-the-art human-centric reconstruction: Leads benchmarks for unified scene-human tasks

- Competitive global motion estimation: Strong results without complex post-processing

- Efficient single-pass design: Faster inference than iterative or multi-stage methods

- High-fidelity details: Excellent human surface refinement and scene accuracy

- Handles challenging dynamics: Robust to motion, occlusion, and complex interactions

- Open research impact: Advances toward real-time 3D understanding from video

- Metric-scale coherence: Unified alignment prevents scale drift between scene and humans

Cons

- Research-oriented only: No hosted demo or easy-to-use app; requires local implementation

- Hardware demands: Large model likely needs a strong GPU for practical inference

- No real-time yet: Feed-forward, but the initial release is not optimized for live video

- Limited accessibility: Academic code/weights may require setup and expertise

- No commercial support: Pure research; no enterprise features or API

- Monocular limitations: Performance may degrade on very fast motion or extreme views

- Early-stage release: Published on arXiv in January 2026; community adoption is still emerging

Use Cases

- AR/VR content creation: Generate 3D scene-human models from video for immersive experiences

- Robotics and embodied AI: Reconstruct environments with humans for navigation/training

- Motion capture and animation: Accurate human pose/shape from monocular video

- Autonomous systems simulation: Build realistic dynamic scenes with people

- Film and VFX pre-production: Quick 3D reconstruction from footage for digital doubles

- Computer vision research: Benchmarking or extending unified reconstruction methods

- Sports analysis: Track and reconstruct athlete movements in real scenes

Target Audience

- Computer vision researchers: Advancing 3D reconstruction and human motion

- AR/VR developers: Needing fast scene-human modeling from video

- Robotics engineers: Simulating human-included environments

- Animation and VFX artists: Creating digital humans and scenes from real footage

- Academic institutions: Students and professors in CV/ML labs

- AI enthusiasts in 3D vision: Experimenting with open-source reconstruction models

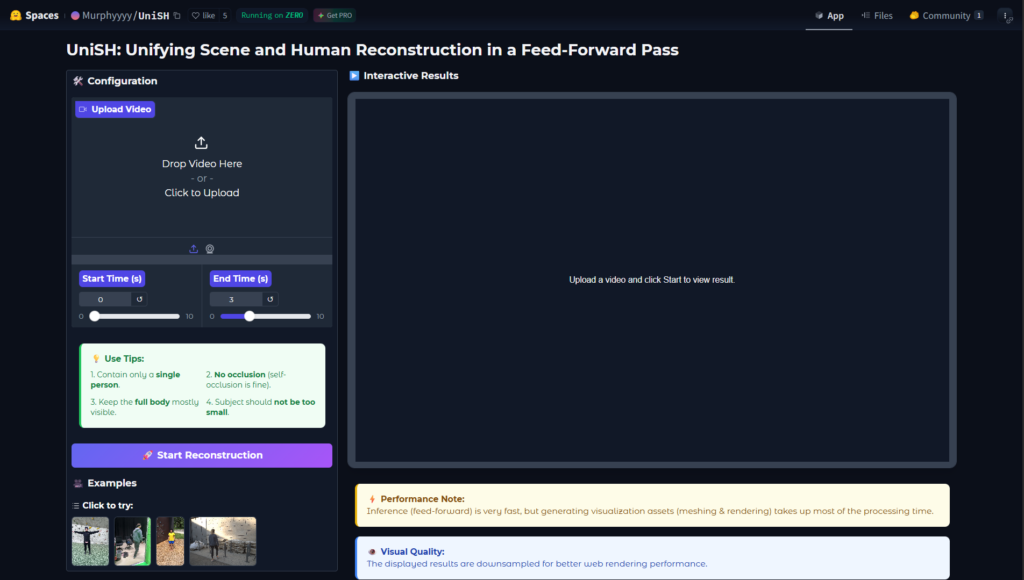

How To Use

- Visit project page: Go to murphylmf.github.io/UniSH for the paper, demos, and code links

- Read arXiv paper: Download arXiv:2601.01222 for architecture and method details

- Clone repository: Once code is released, git clone the GitHub repo (check its issues for a Hugging Face release)

- Install dependencies: Set up PyTorch and the other dependencies listed in the repo's requirements

- Download model: Get pretrained weights if available on Hugging Face or project page

- Run inference: Input monocular video; model outputs scene geometry, SMPL params, point clouds

- Visualize results: Use provided scripts or tools like MeshLab/Blender for 3D viewing
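If the provided scripts don't already export a viewer-friendly file, a point cloud held as a list of xyz tuples (for example, a fused scene or human point cloud) can be written to ASCII PLY, which both MeshLab and Blender import. This is a generic helper, not part of the UniSH code; the function names are hypothetical, and exporting SMPL meshes would additionally require face indices.

```python
from typing import Iterable, Tuple

Vec3 = Tuple[float, float, float]


def to_ascii_ply(points: Iterable[Vec3]) -> str:
    """Serialize xyz points as an ASCII PLY point cloud (no faces, no colors)."""
    pts = list(points)
    header = [
        "ply",
        "format ascii 1.0",
        f"element vertex {len(pts)}",
        "property float x",
        "property float y",
        "property float z",
        "end_header",
    ]
    body = [f"{x:.6f} {y:.6f} {z:.6f}" for x, y, z in pts]
    return "\n".join(header + body) + "\n"


def write_ply(path: str, points: Iterable[Vec3]) -> None:
    """Write the point cloud to disk for viewing in MeshLab or Blender."""
    with open(path, "w", encoding="ascii") as f:
        f.write(to_ascii_ply(points))
```

Usage: `write_ply("scene.ply", cloud)`, then open `scene.ply` in MeshLab or import it into Blender.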

How we rated UniSH

- Performance: 4.8/5

- Accuracy: 4.9/5

- Features: 4.6/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 3.8/5

- Customization: 4.7/5

- Data Privacy: 5.0/5

- Support: 4.0/5

- Integration: 4.4/5

- Overall Score: 4.6/5
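The listing doesn't say how the overall score is derived. Assuming it is the unweighted mean of the nine category scores, the arithmetic checks out: the scores sum to 41.2, and 41.2 / 9 ≈ 4.58, which rounds to 4.6.

```python
from statistics import mean

# Category scores as listed above.
ratings = {
    "Performance": 4.8,
    "Accuracy": 4.9,
    "Features": 4.6,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 3.8,
    "Customization": 4.7,
    "Data Privacy": 5.0,
    "Support": 4.0,
    "Integration": 4.4,
}

# Assumption: the overall score is the unweighted mean, rounded to one decimal.
overall = round(mean(ratings.values()), 1)
print(overall)  # 4.6
```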

UniSH integration with other tools

- PyTorch Ecosystem: Built with PyTorch for easy extension and training

- Hugging Face (Potential): Model weights likely hosted for inference pipelines

- 3D Visualization Tools: Outputs compatible with MeshLab, Blender, Unity/Unreal for rendering

- Computer Vision Libraries: Integrates with Open3D, PyTorch3D for post-processing

- Research Frameworks: Compatible with SMPL/SMPL-X body models and standard CV datasets

Best prompts optimised for UniSH

- N/A - UniSH is a feed-forward computer vision model for 3D scene and human reconstruction that takes monocular video as input, not text prompts. It processes raw video frames directly; it has no text-to-3D or prompt-based generation feature, and manual prompting is neither required nor supported.
