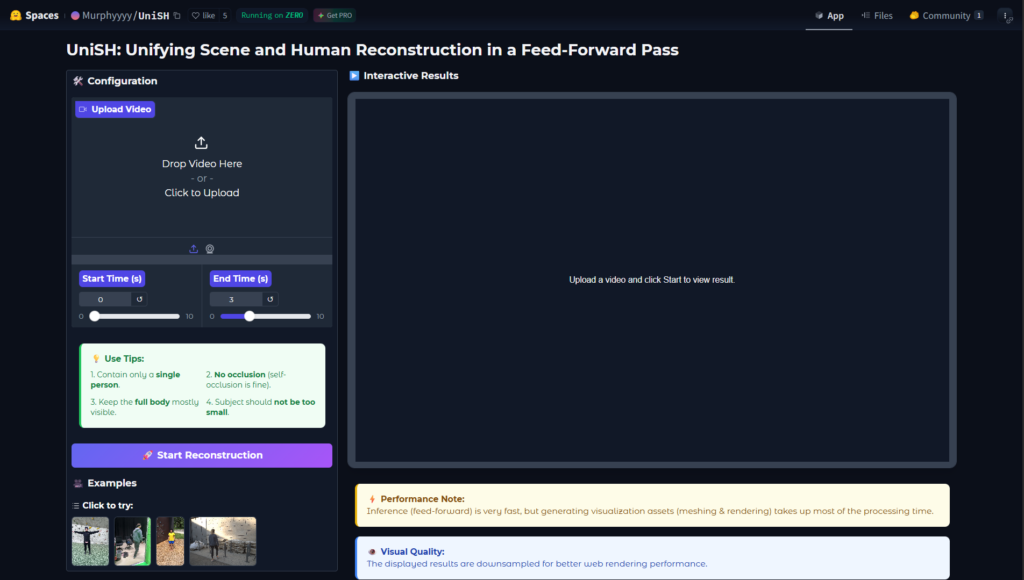

What is UniSH?

UniSH is a research AI model and framework for joint metric-scale 3D reconstruction of scenes and humans from monocular video in a single feed-forward pass.

Who created UniSH?

UniSH was developed by researchers at the Hong Kong University of Science and Technology (Murphy Li and team) and released on arXiv in January 2026.

Is UniSH free to use?

Yes. It is an open academic research project: the paper is freely available, the code and weights are likely to be as well, and there is no commercial pricing.

What does UniSH reconstruct?

It jointly recovers high-fidelity scene geometry, human point clouds, camera parameters, and coherent metric-scale SMPL bodies from video.
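To make these four outputs concrete, here is a minimal sketch of how one frame's result could be held in memory. The class and field names (`SmplBody`, `FrameReconstruction`, `cam_to_world`, and so on) are hypothetical and are not taken from the UniSH code. The sizes follow the widely used SMPL parameterization (72 pose values, 10 shape coefficients), and metres are assumed as the unit.

```python
import math
from dataclasses import dataclass
from typing import List

Vec3 = List[float]

# Widely used SMPL parameterization: 24 joints x 3 axis-angle values, 10 shape coefficients.
SMPL_POSE_DIM = 72
SMPL_SHAPE_DIM = 10


@dataclass
class SmplBody:
    """One person in one frame. Hypothetical container, not UniSH's API."""
    pose: List[float]    # axis-angle joint rotations, length 72
    shape: List[float]   # shape coefficients (betas), length 10
    translation: Vec3    # root position in the scene frame, assumed to be in metres

    def __post_init__(self) -> None:
        if len(self.pose) != SMPL_POSE_DIM:
            raise ValueError(f"expected {SMPL_POSE_DIM} pose values, got {len(self.pose)}")
        if len(self.shape) != SMPL_SHAPE_DIM:
            raise ValueError(f"expected {SMPL_SHAPE_DIM} shape values, got {len(self.shape)}")
        if len(self.translation) != 3:
            raise ValueError("translation must have 3 components")


@dataclass
class FrameReconstruction:
    """Everything recovered for one video frame (hypothetical layout)."""
    scene_points: List[Vec3]          # scene geometry as 3D points
    human_points: List[Vec3]          # 3D points belonging to people
    intrinsics: List[List[float]]     # 3x3 camera matrix
    cam_to_world: List[List[float]]   # 4x4 camera pose
    bodies: List[SmplBody]

    def camera_center(self) -> Vec3:
        """Camera position in the scene frame: the translation column of the pose."""
        return [self.cam_to_world[row][3] for row in range(3)]

    def body_distances_m(self) -> List[float]:
        """Distance from the camera to each body root, in metres."""
        center = self.camera_center()
        return [math.dist(center, body.translation) for body in self.bodies]
```

In a layout like this, the scene, camera, and bodies share one metric frame, so a quantity such as camera-to-person distance can be read off directly without a separate scale-alignment step.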

What input does UniSH take?

UniSH takes monocular video (single-camera footage) as input; it needs no depth sensors or multi-view setup.

Is UniSH real-time capable?

The feed-forward design enables efficient inference, though real-time performance depends on hardware and optimization.
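Whether a particular setup counts as real-time comes down to comparing measured throughput with the video's frame rate. The sketch below shows one way to check that. It assumes a hypothetical zero-argument callable, `run_inference`, standing in for whatever entry point the released code exposes; none of these names come from UniSH.

```python
from time import perf_counter
from typing import Callable


def measure_fps(run_inference: Callable[[], object], num_frames: int) -> float:
    """Time one inference call over a clip and return frames per second.

    `run_inference` is a hypothetical stand-in for the model call. On a GPU it
    should block until results are ready, or the timing will be misleading.
    """
    if num_frames <= 0:
        raise ValueError("num_frames must be positive")
    start = perf_counter()
    run_inference()
    elapsed = perf_counter() - start
    # Guard against a zero reading from a trivially fast callable.
    return num_frames / elapsed if elapsed > 0 else float("inf")


def keeps_up(throughput_fps: float, video_fps: float) -> bool:
    """True when frames are processed at least as fast as the video supplies them."""
    return throughput_fps >= video_fps
```

A fair measurement would discard the first call as warm-up and average several runs, since the first pass often includes one-off setup costs.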

Where can I find UniSH code?

Check the project page (murphylmf.github.io/UniSH/) or arXiv 2601.01222 for the code release; the implementation is likely hosted on GitHub.

What are UniSH’s main applications?

UniSH is suited to AR/VR, robotics simulation, motion capture, VFX, autonomous systems, and computer vision research.