What is Zhipu GLM-4.6V?

GLM-4.6V is Zhipu AI’s open-source series of multimodal vision-language models, released on December 8, 2025. It comes in two variants, a 106B model and a 9B Flash model, and offers native tool calling, a 128K-token context window, and state-of-the-art visual reasoning among open models of similar scale.

When was GLM-4.6V released?

The GLM-4.6V series was officially released and open-sourced on December 8, 2025, with weights available on Hugging Face and ModelScope.

Is GLM-4.6V free to use?

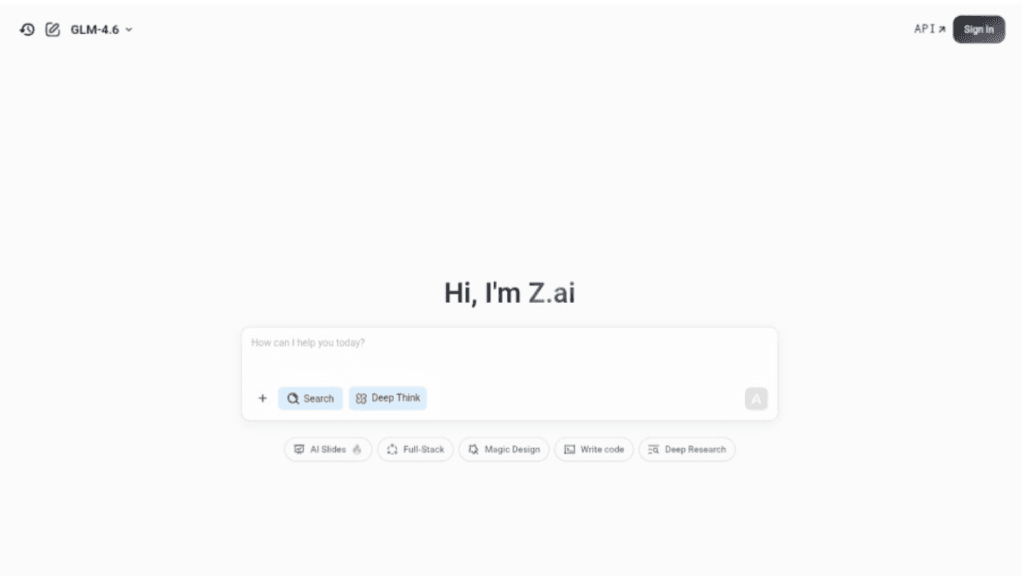

Yes. The full model weights and code are open-source under a permissive license for local or self-hosted use. The hosted API on the Z.ai platform is billed per token.

What are the key features of GLM-4.6V?

Key features include native multimodal function calling driven by image and video inputs, a 128K-token context window for long documents, strong visual understanding (OCR, charts, UI), interleaved image-text generation, and agentic capabilities.
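To make "multimodal function calling" concrete, here is a minimal sketch of a request that pairs an image with a tool definition. It assumes an OpenAI-style chat-completions schema; the model identifier `glm-4.6v`, the `lookup_product` tool, and the `build_request` helper are hypothetical names chosen for illustration, so check the Z.ai API documentation for the actual request format before relying on it. The sketch only builds the payload and does not send it anywhere.

```python
import base64
import json


def build_request(image_bytes: bytes, question: str, model: str = "glm-4.6v") -> dict:
    """Build an OpenAI-style chat payload with one image and one tool.

    The schema and the default model name are assumptions, not a confirmed API.
    """
    image_b64 = base64.b64encode(image_bytes).decode("ascii")

    # Hypothetical tool the model could choose to call after reading the image.
    tools = [{
        "type": "function",
        "function": {
            "name": "lookup_product",
            "description": "Look up a product that appears in the image.",
            "parameters": {
                "type": "object",
                "properties": {"query": {"type": "string"}},
                "required": ["query"],
            },
        },
    }]

    return {
        "model": model,
        "messages": [{
            "role": "user",
            "content": [
                # The image is passed inline as a base64 data URL.
                {"type": "image_url",
                 "image_url": {"url": f"data:image/png;base64,{image_b64}"}},
                {"type": "text", "text": question},
            ],
        }],
        "tools": tools,
    }


if __name__ == "__main__":
    payload = build_request(b"placeholder image bytes", "Which product is shown?")
    print(json.dumps(payload, indent=2))
```

If the model decides a tool is needed, an OpenAI-style response would carry the tool name and JSON arguments, which the caller executes and feeds back as a follow-up message.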

How many parameters does GLM-4.6V have?

GLM-4.6V has 106B total parameters and uses a mixture-of-experts (MoE) architecture with 12B active parameters; GLM-4.6V-Flash has 9B parameters and targets lightweight, local deployment.

What benchmarks does GLM-4.6V excel in?

It achieves state-of-the-art (SoTA) results among open models of similar scale on MMBench, MathVista, OCRBench, and other multimodal benchmarks covering visual understanding and reasoning.

Can GLM-4.6V run locally?

Yes. The 9B Flash variant is optimized for low-latency local and edge use, while the full 106B model is better suited to cloud deployments and high-performance clusters.
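A rough back-of-the-envelope calculation shows why the two variants target different hardware. The sketch below estimates memory for the weights alone from the parameter counts quoted in this FAQ; the bytes-per-parameter figures are standard for each precision, but the helper name `weight_memory_gb` is illustrative, and the result ignores the KV cache, activations, and runtime overhead, so real requirements will be higher. Note that an MoE model typically still needs all of its weights loaded, even though only a fraction is active per token.

```python
# Parameter counts as stated in this FAQ.
PARAMS = {"GLM-4.6V": 106e9, "GLM-4.6V-Flash": 9e9}

# Storage cost per parameter at common precisions.
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(n_params: float, precision: str) -> float:
    """Approximate weight-only memory in GB (1 GB = 1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for name, n_params in PARAMS.items():
        for precision in BYTES_PER_PARAM:
            gb = weight_memory_gb(n_params, precision)
            print(f"{name:15s} {precision:5s} ~{gb:6.1f} GB")
```

Under these assumptions the 9B model needs roughly 18 GB at bf16 and about 4.5 GB at 4-bit, while the 106B model needs about 212 GB at bf16, which is beyond a single consumer GPU.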

Who developed GLM-4.6V?

GLM-4.6V was developed by Zhipu AI (Z.ai), a leading Chinese AI company known for the GLM series of models.