NVIDIA Nemotron 3 Nano Release

I just woke up to what might be the most significant hardware-software optimization news of late 2025. NVIDIA quietly shipped something that changes the game for local AI.

They just released Nemotron 3 Nano, a 30-billion-parameter open model that doesn’t just compete with the giants; it outruns them.

I dove into the technical paper and the weights (which are already up on Hugging Face), and here is the headline: This model runs 2.2x to 3.3x faster than its closest competitors while beating them on critical benchmarks. And yes, you can run it on your home gaming PC.

The “Hybrid” Secret Sauce

The numbers didn’t make sense to me at first. How can a 30B model run this fast? The secret lies in the architecture.

Unlike pure-attention Transformers (like Llama 4 or GPT-OSS), Nemotron 3 Nano uses a Hybrid Mamba-Transformer MoE design.

- Mixture of Experts (MoE): It has 31.6B total parameters, but only activates 3.6B per token. This keeps the compute cost incredibly low.

- Mamba-2 Layers: It replaces most of the standard attention layers with Mamba-2 state-space layers. This is the “Alpha” that explains the speed: Mamba layers process long sequences far more efficiently than attention, whose cost grows with every token already in the context.
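To make the two ideas concrete, here is a minimal Python sketch. It is not NVIDIA's router or layer code: the eight-expert gate, the `top_k_route` and `kv_cache_bytes` helpers, and the layer and head dimensions are all hypothetical, chosen only to illustrate why routing each token to a few experts keeps compute low and why an attention cache grows with context while a state-space layer keeps a fixed-size state.

```python
import math

# Figures quoted in the article.
TOTAL_PARAMS_B = 31.6
ACTIVE_PARAMS_B = 3.6


def top_k_route(gate_logits, k):
    """Toy MoE router (hypothetical, not NVIDIA's): keep the k
    highest-scoring experts and softmax-normalize their weights."""
    top = sorted(range(len(gate_logits)),
                 key=lambda i: gate_logits[i], reverse=True)[:k]
    peak = max(gate_logits[i] for i in top)  # subtract for numerical stability
    exps = {i: math.exp(gate_logits[i] - peak) for i in top}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


def kv_cache_bytes(seq_len, n_layers=32, n_kv_heads=8, head_dim=128,
                   bytes_per_value=2):
    """Attention KV cache size: one key and one value vector per past
    token, per layer. The default dimensions are made up for illustration."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_value


# Share of the weights that actually does work for any single token.
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B

# One token's gate scores over 8 hypothetical experts; only 2 are run.
routing = top_k_route([0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.3, 0.9], k=2)

print(f"active fraction per token: {active_fraction:.1%}")
print(f"experts chosen for this token: {sorted(routing)}")
for n in (8_000, 128_000, 1_000_000):
    print(f"{n:>9} tokens -> {kv_cache_bytes(n) / 1e9:.1f} GB of KV cache")
```

The cache figure scales linearly with context length, which is the cost a state-space layer avoids by carrying a fixed-size state from token to token.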

Benchmarks: Small Model, Big Brain

NVIDIA is positioning this as a “reasoning” model for agents. I checked the benchmark comparison against the two current open-weight kings: OpenAI’s GPT-OSS-20B and Alibaba’s Qwen3-30B.

Key Benchmark Wins:

- Math (AIME25): Nemotron scores a massive 99.2% (with tools), completely dusting Qwen3’s 85.0%. Even without tools, it hits 89.1%.

- Coding (SWE-Bench): It achieves 38.8% on real-world software engineering tasks, significantly higher than Qwen3’s 22.0%.

- Context: It supports a massive 1-million-token context window, making it perfect for digesting entire codebases or books.

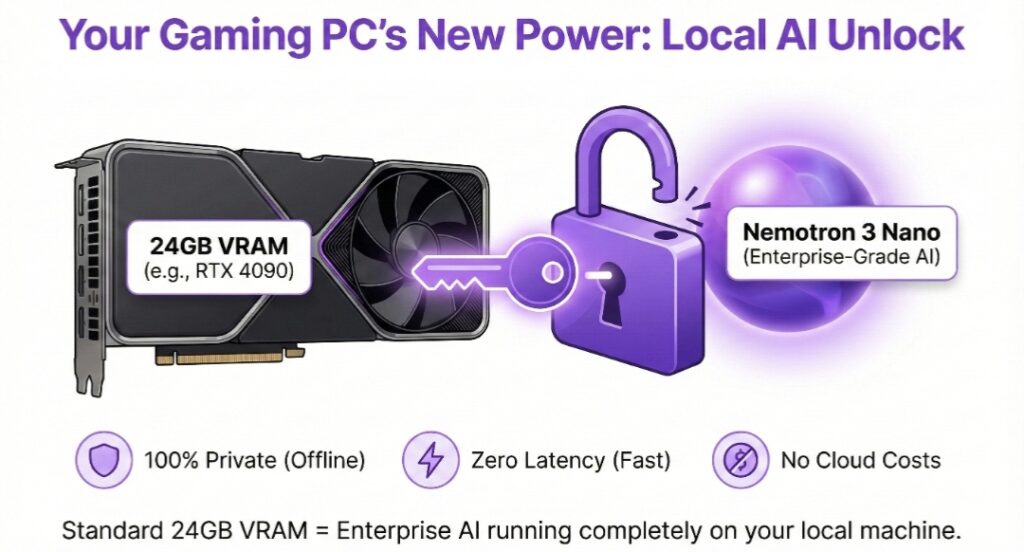

The Local AI Revolution: 24GB is All You Need

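For me, this is the most exciting part. All 31.6B parameters still have to be loaded, so the memory footprint is set by the total size, not by the ~3.6B parameters active at a time. What the sparse activation buys you is far less compute and memory traffic per token.

You can run Nemotron 3 Nano locally on a single GPU with 24GB VRAM (like an RTX 3090 or 4090). At 31.6B parameters that implies a quantized build: at roughly 4 bits per weight, the weights alone come to about 16GB.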

- Throughput: It generates nearly 380 tokens/second on a single H100. Consumer cards will be slower in absolute terms, but that much headroom should still mean snappy performance.

- Why it matters: You now have a model smarter than last year’s GPT-4 running entirely offline, private, and fast enough to power real-time voice agents.
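A quick back-of-the-envelope check of the 24GB claim, as a sketch rather than a measurement. It counts weights only (no KV cache, activations, or runtime overhead), and the bits-per-weight options are assumptions about how one might load the model, not formats confirmed by the release. The `weight_gb` helper is hypothetical.

```python
TOTAL_PARAMS = 31.6e9  # total parameter count quoted in the article
VRAM_GB = 24           # RTX 3090 / 4090 class card


def weight_gb(total_params, bits_per_weight):
    """Weights-only footprint in GB (10^9 bytes) at a given precision."""
    return total_params * bits_per_weight / 8 / 1e9


# Assumed precisions: 16-bit (e.g. BF16), 8-bit, and 4-bit quantization.
footprints = {bits: weight_gb(TOTAL_PARAMS, bits) for bits in (16, 8, 4)}
fits = {bits: gb < VRAM_GB for bits, gb in footprints.items()}

for bits, gb in footprints.items():
    verdict = "fits" if fits[bits] else "does not fit"
    print(f"{bits:>2}-bit weights: {gb:5.1f} GB -> {verdict} in {VRAM_GB} GB")
```

Under these assumptions only the 4-bit case leaves room on a 24GB card, and whatever remains has to cover the context cache and activations.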

Nemotron 3 Nano vs. The World

Here is how the new king stacks up against the open-weight competition:

| Feature | Nemotron 3 Nano | Qwen3-30B Thinking | GPT-OSS-20B |
| --- | --- | --- | --- |
| Active Params | 3.6B (MoE) | ~3B (MoE) | ~3.6B (MoE) |
| Context Window | 1,000,000 Tokens | 128k Tokens | 128k Tokens |
| AIME25 (Math) | 99.2% with tools (89.1% without) | 85.0% | 91.7% |
| Throughput | 3.3x (vs Qwen) | 1x (Baseline) | 1.5x (vs Qwen) |
| Architecture | Hybrid Mamba-MoE | Transformer MoE | Transformer MoE |

If you are building AI agents that need to loop through tasks quickly, like checking code, summarizing logs, or handling customer support, this is likely your new default model. The combination of Mamba speed and MoE efficiency means you are paying significantly less per token (or per watt) for intelligence that rivals the best in the world.
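For a rough feel of what the throughput row means for an agent loop, the sketch below converts the relative multipliers from the table into wall-clock time. The baseline speed of 100 tokens/second and the 10,000-token loop are hypothetical placeholders, not measured figures, and `seconds_for` is an illustrative helper; only the 3.3x, 1x, and 1.5x ratios come from the comparison above.

```python
# Relative throughput from the comparison table (Qwen is the baseline).
RELATIVE_THROUGHPUT = {
    "Nemotron 3 Nano": 3.3,
    "Qwen3-30B Thinking": 1.0,
    "GPT-OSS-20B": 1.5,
}

# Hypothetical baseline speed; substitute a number measured on your own GPU.
BASELINE_TOKENS_PER_SEC = 100.0


def seconds_for(tokens, multiplier, baseline_tps=BASELINE_TOKENS_PER_SEC):
    """Time to generate `tokens` at `multiplier` times the baseline speed."""
    return tokens / (baseline_tps * multiplier)


loop_tokens = 10_000  # hypothetical total output of one agent run
times = {name: seconds_for(loop_tokens, m)
         for name, m in RELATIVE_THROUGHPUT.items()}

for name, secs in sorted(times.items(), key=lambda item: item[1]):
    print(f"{name:<20} {secs:6.1f} s")
```

Because the ratios are fixed, the ranking holds at any baseline speed; only the absolute seconds change.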