Summary Box [In a hurry? Just read this⚡]

- StepFun AI released Step 3.5 Flash, a high-efficiency open-source MoE model with 196B total parameters but only 11B active per token.

- It achieves frontier-level reasoning and agentic performance while delivering extremely fast inference speeds of 100–300 tokens/s (up to 350 tokens/s on coding tasks).

- Supports a massive 256K context window using 3:1 Sliding Window Attention and 3-way Multi-Token Prediction for better throughput and long-context handling.

- Outperforms larger open models like GLM-4.7 and DeepSeek V3.2 on many benchmarks and remains competitive with top proprietary models such as GPT-5.2 and Gemini 3.0 Pro.

- Optimized for local/edge deployment (e.g., high-end Macs, consumer GPUs) with INT4/INT8 quantization, strong agentic tool use, and full open-source availability on Hugging Face and GitHub.

StepFun AI has introduced Step 3.5 Flash, an open-source foundation model that pairs high intelligence density with fast inference.

This sparse Mixture of Experts (MoE) architecture activates just 11 billion of its 196 billion parameters per token, enabling it to deliver frontier-level reasoning and agentic capabilities while achieving inference speeds of 100 to 300 tokens per second, peaking at 350 tokens per second for coding tasks.
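To make the sparse-activation numbers concrete, here is a minimal sketch. The parameter counts come from the article; the `top_k_route` helper, the expert count, and the choice of `k` are illustrative assumptions and do not describe StepFun's actual router.

```python
import math
import random

TOTAL_PARAMS_B = 196   # total parameters in billions (from the article)
ACTIVE_PARAMS_B = 11   # parameters active per token in billions (from the article)


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of the model's weights that take part in one token's forward pass."""
    return active_b / total_b


def top_k_route(router_logits: list[float], k: int) -> dict[int, float]:
    """Generic top-k MoE routing (hypothetical helper, not StepFun's router).

    Keeps the k highest-scoring experts and renormalizes their scores with a
    softmax so the mixing weights sum to 1.
    """
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    peak = max(router_logits[i] for i in top)          # for numerical stability
    exps = {i: math.exp(router_logits[i] - peak) for i in top}
    norm = sum(exps.values())
    return {i: e / norm for i, e in exps.items()}


if __name__ == "__main__":
    print(f"active share per token: {active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B):.1%}")
    rng = random.Random(0)
    logits = [rng.gauss(0.0, 1.0) for _ in range(16)]  # 16 experts is an assumption
    print(top_k_route(logits, k=2))
```

Roughly 5.6% of the weights do work for any given token, which is why per-token compute resembles that of a much smaller dense model even though all 196B parameters still have to be stored.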

Designed for real-time applications, Step 3.5 Flash supports a 256K context window by combining Sliding Window Attention (SWA) with full attention in a 3:1 ratio.
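The sketch below shows one way a 3:1 SWA-to-full-attention layout could look and why it helps with long contexts. It assumes the ratio means three sliding-window layers for every full-attention layer; the layer count, window size, and helper names are hypothetical and are not taken from the model's configuration.

```python
from typing import List, Optional


def layer_schedule(num_layers: int, swa_per_full: int = 3) -> List[str]:
    """Repeat `swa_per_full` sliding-window layers followed by one full-attention layer."""
    period = swa_per_full + 1
    return ["full" if (i + 1) % period == 0 else "swa" for i in range(num_layers)]


def causal_mask(seq_len: int, window: Optional[int] = None) -> List[List[bool]]:
    """mask[q][k] is True when query position q may attend to key position k.

    window=None gives ordinary causal (full) attention; otherwise each query
    sees only itself and the previous `window - 1` positions.
    """
    return [
        [k <= q and (window is None or q - k < window) for k in range(seq_len)]
        for q in range(seq_len)
    ]


def kv_positions_kept(schedule: List[str], seq_len: int, window: int) -> int:
    """Total key/value positions that must stay cached across all layers."""
    return sum(seq_len if kind == "full" else min(seq_len, window) for kind in schedule)


if __name__ == "__main__":
    schedule = layer_schedule(8)                      # 8 layers is an assumption
    print(schedule)
    for row in causal_mask(5, window=2):
        print(["x" if allowed else "." for allowed in row])
    hybrid = kv_positions_kept(schedule, seq_len=256_000, window=512)  # window is an assumption
    dense = kv_positions_kept(["full"] * 8, seq_len=256_000, window=512)
    print(f"cached positions, hybrid vs all-full: {hybrid} vs {dense}")
```

Only the full-attention layers need a cache that grows with the whole context, so under these assumptions the hybrid layout keeps far fewer key/value entries than an all-full-attention stack of the same depth.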

It also incorporates 3-way Multi-Token Prediction (MTP-3) to enhance throughput, making it ideal for local deployment on devices like high-end Macs or NVIDIA GPUs.
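The article does not describe how the MTP-3 heads are used at inference time. A common pattern for multi-token prediction is greedy draft-and-verify decoding, sketched below under that assumption: the extra heads propose three tokens, the main model checks them in one pass, and the longest matching prefix is kept. The function name and token IDs are hypothetical.

```python
from typing import List


def accept_drafts(drafts: List[int], verifier: List[int]) -> List[int]:
    """Greedy draft-and-verify step (generic technique, assumed for illustration).

    drafts   : tokens proposed by the multi-token prediction heads.
    verifier : the main model's own choice at each drafted position, plus one
               extra token for the position after the last draft.
    Returns the tokens emitted by this decoding step.
    """
    if len(verifier) != len(drafts) + 1:
        raise ValueError("verifier must supply one more token than drafts")
    emitted: List[int] = []
    for draft, checked in zip(drafts, verifier):
        if draft != checked:
            emitted.append(checked)      # first mismatch: keep the verifier's token and stop
            return emitted
        emitted.append(draft)            # draft confirmed
    emitted.append(verifier[-1])         # every draft accepted: bonus token from the verifier
    return emitted


if __name__ == "__main__":
    print(accept_drafts([5, 6, 7], [5, 6, 7, 8]))   # all three drafts accepted
    print(accept_drafts([5, 9, 7], [5, 6, 7, 8]))   # second draft rejected
```

With three drafted tokens, a single step can emit between one and four tokens, which is where the throughput gain would come from when drafts are accepted often.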

Fast enough to think. Reliable enough to act.

— Yasmine (@CyouSakura) February 2, 2026

Step-3.5-Flash is here @StepFun_ai⚡

Website: https://t.co/HcGbiBN8po

Blog: https://t.co/xm8Hk6tyP3

Powering the next wave of intelligence—from real-time reasoning to reliable agentic action.

We are so back. 🚀

The model emphasizes reliability in agentic workflows, such as tool integration and multi-agent orchestration, positioning it as a go-to solution for developers building intelligent systems.

Advanced Training Techniques Behind the Model

The development of Step 3.5 Flash leverages a scalable reinforcement learning framework called MIS-PO (Metropolis Independence Sampling Filtered Policy Optimization).

This method ensures stable, on-policy training by incorporating truncation-aware value bootstrapping and routing confidence monitoring to maintain MoE stability.

These techniques allow the model to handle complex reasoning chains and agentic actions with high consistency, reducing errors in practical scenarios.

Benchmark Performance and Comparisons

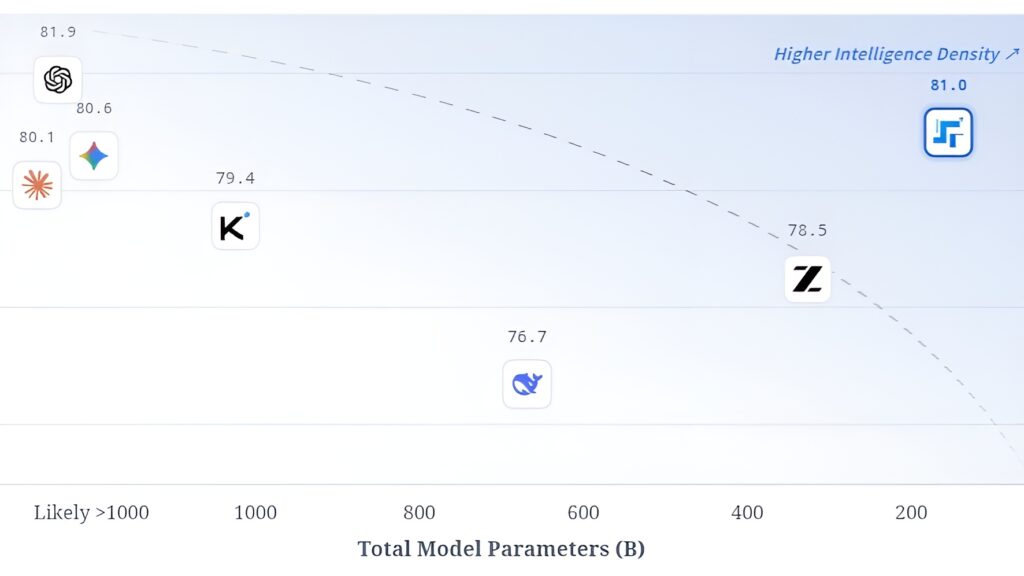

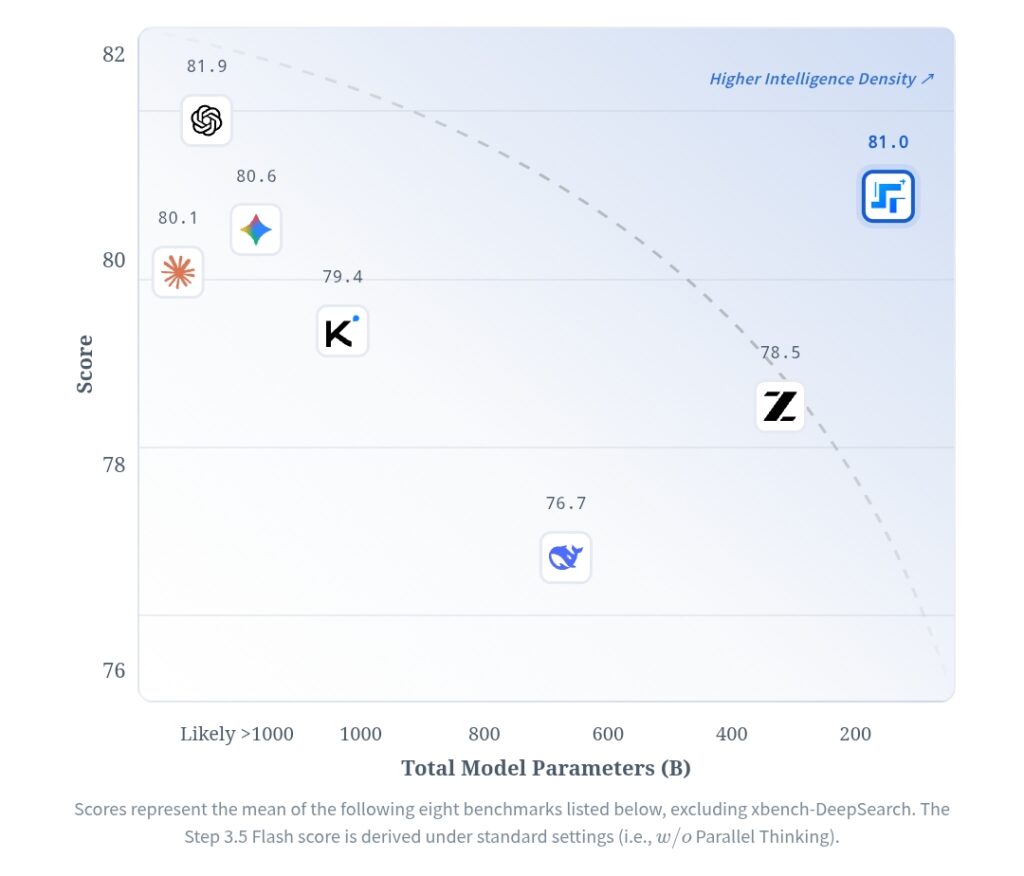

Step 3.5 Flash excels across a range of benchmarks, often outperforming larger open-source models like GLM-4.7 and DeepSeek V3.2, and holding its own against proprietary giants such as GPT-5.2 and Gemini 3.0 Pro.

Its overall mean score on eight key benchmarks (excluding xbench-DeepSearch) stands at 81.0, surpassing GLM-4.7 at 78.5 and DeepSeek V3.2 at 76.7.

Here’s a detailed table of its standout results compared to select competitors:

| Benchmark | Step 3.5 Flash | Gemini 3.0 Pro | Claude Opus 4.5 | DeepSeek V3.2 | GLM-4.7 |
|---|---|---|---|---|---|
| AIME 2025 | 97.3 | 97.3 | 96.1 | 95.0 | 92.8 |
| IMOAnswerBench | 85.4 | 82.0 | 81.8 | 78.3 | 83.3 |
| HMMT 2025 (Avg Feb/Nov) | 98.4/94.0 | 96.2 | 95.4 | 95.3 | 91.4 |
| SWE-Bench Verified | 74.4 | 73.8 | 73.1 | 76.8 | 76.2 |
| Terminal-Bench 2.0 | 51.0 | 41.0 | 46.4 | 54.2 | 50.8 |
| LiveCodeBench-V6 | 86.4 | 84.9 | 83.3 | 85.0 | 84.4 |
| τ²-Bench | 88.2 | 87.4 | 80.3 | 92.5 | 84.1 |
| BrowseComp (w/ Context Manager) | 69.0 | 67.5 | 67.6 | 74.9 | 65.8 |
| xbench-DeepSearch (2025.10) | 54.0 | 35.0 | 40.0 | N/A | N/A |

These scores highlight Step 3.5 Flash's strengths in mathematical reasoning, coding accuracy, and agentic reliability, with enhancements like PaCoRe boosting math tasks to near-perfect levels (e.g., 99.8 on AIME 2025 with Python execution).
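The eight-benchmark mean quoted earlier can be reproduced from the Step 3.5 Flash column of the table. The sketch assumes the HMMT 2025 entry counts as the simple average of its February and November scores; the article does not state how that row was folded into the mean.

```python
from statistics import mean

# Step 3.5 Flash scores as listed in the table above.
STEP_35_FLASH = {
    "AIME 2025": 97.3,
    "IMOAnswerBench": 85.4,
    "HMMT 2025": mean([98.4, 94.0]),   # assumption: average of the Feb and Nov scores
    "SWE-Bench Verified": 74.4,
    "Terminal-Bench 2.0": 51.0,
    "LiveCodeBench-V6": 86.4,
    "τ²-Bench": 88.2,
    "BrowseComp (w/ Context Manager)": 69.0,
    "xbench-DeepSearch (2025.10)": 54.0,
}

# The article excludes xbench-DeepSearch from the headline mean.
EXCLUDED = {"xbench-DeepSearch (2025.10)"}


def headline_mean(scores: dict, excluded: set = EXCLUDED) -> float:
    """Mean over all benchmarks not in `excluded`, rounded to one decimal place."""
    return round(mean(v for name, v in scores.items() if name not in excluded), 1)


if __name__ == "__main__":
    print(headline_mean(STEP_35_FLASH))   # 81.0
```

Under that assumption the result matches the 81.0 figure reported for Step 3.5 Flash.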

Key Features for Practical Use

Step 3.5 Flash is packed with features that make it versatile for edge and cloud environments:

- High-Speed Inference: Optimized for INT4-quantized GGUF weights and INT8 KV cache quantization, supporting extended contexts without a drop in performance.

- Agentic Orchestration: Handles complex workflows like stock analysis or data pipelines, with 62.5% proactive intent clarification and 70.5% average score in advisory tasks.

- Edge-Cloud Synergy: Integrates with Step-GUI for mobile tasks, achieving 57% on AndroidDaily Hard benchmarks versus 40% for edge-only setups.

- Tool Integration: Strong in multi-agent systems and real-world agentic actions, enabling seamless collaboration across devices.

- Open-Source Accessibility: Available on Hugging Face, GitHub, and through StepFun’s API, with support for web, iOS, and Android apps.
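For a rough sense of what INT4 quantization means for local deployment, the back-of-the-envelope sketch below estimates the memory needed for the weights alone. It deliberately ignores quantization scales, the KV cache, and runtime overhead, so real footprints will be somewhat higher; the function name is hypothetical.

```python
TOTAL_PARAMS_B = 196  # total parameters in billions (from the article)

BITS_PER_WEIGHT = {"fp16": 16, "int8": 8, "int4": 4}


def weight_memory_gb(params_billion: float, bits: int) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes), weights only."""
    return params_billion * bits / 8


if __name__ == "__main__":
    for name, bits in BITS_PER_WEIGHT.items():
        print(f"{name}: ~{weight_memory_gb(TOTAL_PARAMS_B, bits):.0f} GB")
    # fp16: ~392 GB
    # int8: ~196 GB
    # int4: ~98 GB
```

All 196B parameters must be resident even though only 11B are active per token, which is why the INT4 figure is the relevant one when sizing a high-memory Mac or a multi-GPU workstation.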

Implications for AI Development

By prioritizing efficiency over sheer scale, StepFun AI demonstrates that compact models can punch above their weight in competitive landscapes.

Step 3.5 Flash lowers barriers for local AI deployment, empowering developers, researchers, and enterprises to build faster, more reliable intelligent applications.