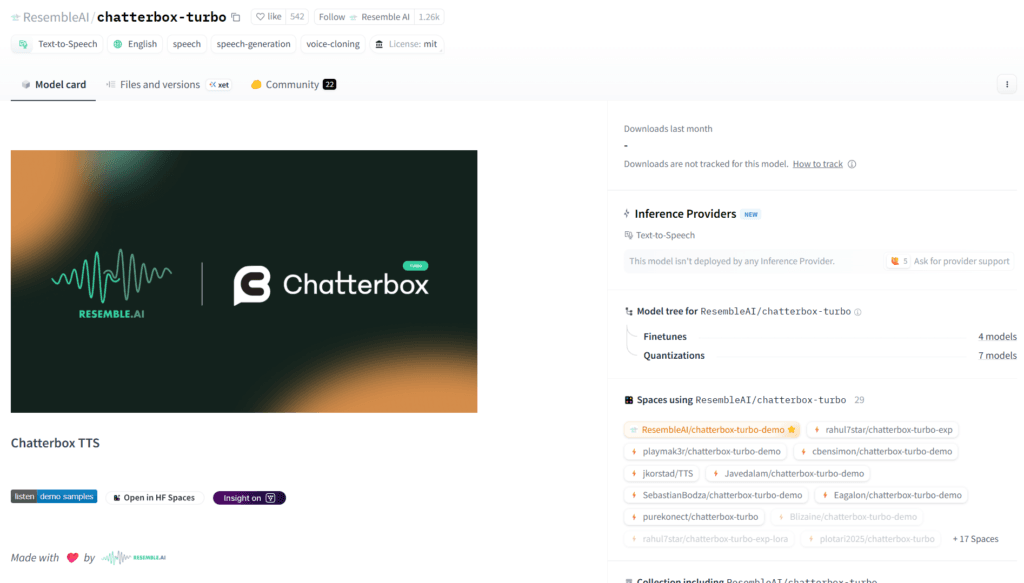

What is Chatterbox Turbo?

Chatterbox Turbo is an open-source text-to-speech model by Resemble AI, optimized for ultra-low latency with one-step generation, zero-shot voice cloning, paralinguistic tags, and built-in watermarking.

When was Chatterbox Turbo released?

It was released on December 15, 2025, according to the Hugging Face model card and Resemble AI's announcements.

Is Chatterbox Turbo free?

Yes. It is free and open source under the MIT license, and it runs locally at no cost. Resemble AI also offers optional paid hosting for production use.

What languages does Chatterbox Turbo support?

English only. The model is optimized for speed and quality in English; multilingual support is provided by the separate Chatterbox-Multilingual model.

How fast is Chatterbox Turbo?

It achieves sub-200 ms end-to-end latency, with a reported time-to-first-sound under 150 ms, which makes it suitable for real-time voice agents.

Does Chatterbox Turbo support voice cloning?

Yes. It performs zero-shot cloning from just 5-10 seconds of reference audio, and the results are reported to be high quality in tests against proprietary models.
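Since the reference clip length matters, it can help to check a clip before passing it to the model. The sketch below uses only Python's standard `wave` module and assumes the clip is an uncompressed PCM WAV file. The 5-10 second bounds come from the answer above and are not, as far as this sketch knows, enforced by the library; the helper names are hypothetical.

```python
import wave

# Range quoted in this FAQ; treated here as a guideline, not a library-enforced limit.
MIN_SECONDS = 5.0
MAX_SECONDS = 10.0


def clip_seconds(path: str) -> float:
    """Return the duration of a PCM WAV file in seconds, read from its header."""
    with wave.open(path, "rb") as wav_file:
        return wav_file.getnframes() / wav_file.getframerate()


def is_suitable_reference(path: str) -> bool:
    """Return True if the clip length falls within the 5-10 second guideline."""
    return MIN_SECONDS <= clip_seconds(path) <= MAX_SECONDS
```

Clips in other formats (MP3, FLAC) would need a different reader, since `wave` only handles WAV.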

What are paralinguistic tags in Chatterbox Turbo?

Tags such as [laugh], [chuckle], [cough], and [sigh] add natural non-speech sounds and expressiveness to the generated audio.
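Tags are written inline in the input text. As a minimal sketch, the helper below scans a script for bracketed tags and reports any that are not in the set named above. The set is only the four tags this FAQ mentions, so treat it as partial; the model card may list more. The function name and the regular expression are illustrative assumptions, not part of the library.

```python
import re

# Only the tags named in this FAQ; the model may support others.
KNOWN_TAGS = {"laugh", "chuckle", "cough", "sigh"}

# Matches lowercase bracketed tags such as [laugh].
TAG_PATTERN = re.compile(r"\[([a-z_]+)\]")


def unknown_tags(text: str) -> list[str]:
    """Return bracketed tags in `text` that are not in KNOWN_TAGS, in order of appearance."""
    return [tag for tag in TAG_PATTERN.findall(text) if tag not in KNOWN_TAGS]


script = "Thanks for waiting [sigh], the update finally finished [chuckle]."
print(unknown_tags(script))                               # []
print(unknown_tags("Oh no [gasp], not again [laugh]."))   # ['gasp']
```

A check like this catches typos in tags before a generation run, where an unrecognized tag might otherwise be read aloud or silently ignored.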

How do I run Chatterbox Turbo locally?

Install the package with `pip install chatterbox-tts`, load the model on a GPU from Python, pass in your text and an optional reference audio clip, then generate the audio and save it as a WAV file.
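The steps above can be sketched roughly as follows. The import path `chatterbox.tts_turbo`, the class name `ChatterboxTurboTTS`, and the `from_pretrained`, `generate`, `audio_prompt_path`, and `sr` names reflect the project README as best recalled, so treat them as assumptions and check them against the current README before relying on them. The `synthesize` and `generate_kwargs` helpers are hypothetical wrappers added for illustration.

```python
def generate_kwargs(reference_clip=None):
    """Build the optional keyword arguments for generation (hypothetical helper)."""
    # The reference clip is only passed when one is supplied.
    return {"audio_prompt_path": reference_clip} if reference_clip else {}


def synthesize(text, output_path, reference_clip=None, device="cuda"):
    """Generate speech for `text` and save it as a WAV file at `output_path`."""
    # Imported lazily so this file can be loaded without the packages installed.
    import torchaudio
    # Assumed import path and class name; verify against the project README.
    from chatterbox.tts_turbo import ChatterboxTurboTTS

    model = ChatterboxTurboTTS.from_pretrained(device=device)
    wav = model.generate(text, **generate_kwargs(reference_clip))
    # model.sr is assumed to hold the model's output sample rate.
    torchaudio.save(output_path, wav, model.sr)
    return output_path
```

A call such as `synthesize("Hi there [chuckle], got a minute?", "out.wav", reference_clip="ref.wav")` would then write the result to `out.wav`, assuming a CUDA-capable GPU and a downloaded model. Whether Turbo can generate without a reference clip is worth confirming in the README; if it cannot, always pass `reference_clip`.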