What is Fun-Audio-Chat?

Fun-Audio-Chat is an open-source Large Audio Language Model (8B parameters) from Alibaba’s Tongyi Fun Team, released December 23, 2025, for natural low-latency speech-to-speech and speech-to-text voice interactions.

Is Fun-Audio-Chat free to use?

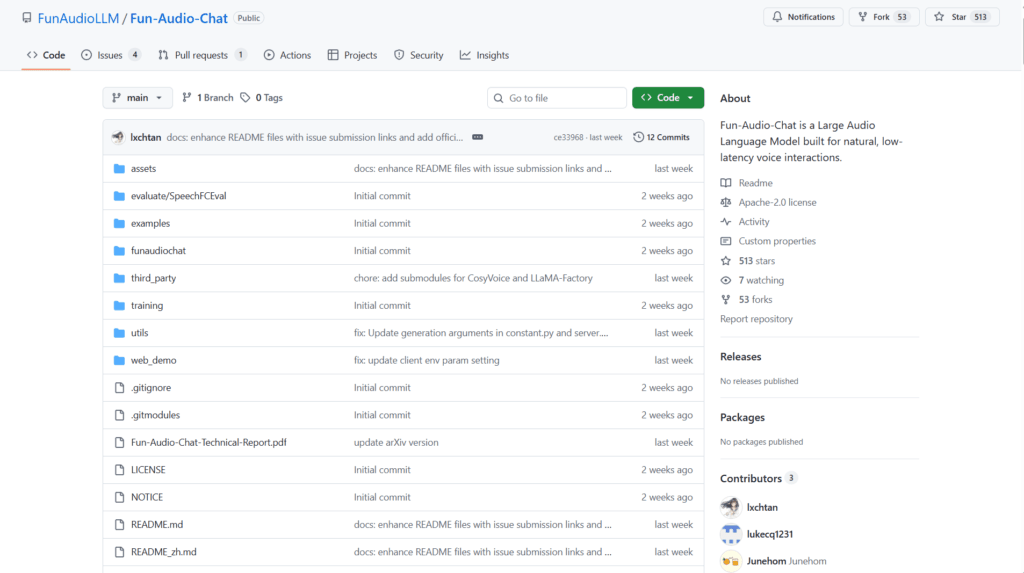

Yes, it is completely open-source under the Apache 2.0 license, with model weights, code, and inference/demo scripts freely available on GitHub and Hugging Face.

When was Fun-Audio-Chat released?

The model was officially released on December 23, 2025, with a technical report on arXiv; the open-source code followed shortly after.

What are the key innovations in Fun-Audio-Chat?

It uses Dual-Resolution Speech Representations (a 5Hz backbone plus a 25Hz head), which cut compute by roughly 50%, and Core-Cocktail training, which preserves text LLM strength alongside audio capabilities.

What hardware is required for Fun-Audio-Chat?

Inference needs approximately 24GB of GPU VRAM, and training requires 4x80GB GPUs. vLLM is supported for significant inference speedups.

Does Fun-Audio-Chat support voice empathy?

Yes, it detects emotional tone, pace, and energy in speech and responds appropriately for more natural conversations.

What benchmarks does Fun-Audio-Chat excel in?

It ranks top among similar-sized models on OpenAudioBench, VoiceBench, UltraEval-Audio, MMAU series, and speech function/instruction benchmarks.

How do I run Fun-Audio-Chat locally?

Clone the GitHub repo, install dependencies (Python 3.12, PyTorch 2.8.0, ffmpeg), download weights from Hugging Face, and run inference scripts like infer_s2t.py or infer_s2s.py.

Fun-Audio-Chat

About This AI

Fun-Audio-Chat is an open-source Large Audio Language Model (LALM) developed by Alibaba’s Tongyi Fun Team, released on December 23, 2025.

The 8B-parameter model (with a 30B MoE variant, Fun-Audio-Chat-30B-A3B) enables natural, low-latency voice conversations. Its Dual-Resolution Speech Representations (an efficient 5Hz backbone plus a refined 25Hz head) reduce compute by nearly 50% while preserving high speech quality.
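As a rough illustration of the dual-resolution idea, the sketch below counts how many frames each stage would handle for a clip of a given length. Only the two frame rates come from the description above; the function name and the use of clip duration as input are assumptions for illustration. It does not reproduce the reported compute saving, which depends on how work is split between backbone and head.

```python
import math

BACKBONE_HZ = 5   # backbone frame rate, as stated in the description
HEAD_HZ = 25      # head frame rate, as stated in the description


def frame_counts(duration_s: float) -> dict:
    """Count frames per stage for a clip of `duration_s` seconds.

    Hypothetical helper: it only applies the two frame rates and says
    nothing about the model's actual internals.
    """
    return {
        "backbone_frames": math.ceil(duration_s * BACKBONE_HZ),
        "head_frames": math.ceil(duration_s * HEAD_HZ),
        # How many head-rate frames correspond to one backbone-rate frame.
        "head_frames_per_backbone_frame": HEAD_HZ // BACKBONE_HZ,
    }


if __name__ == "__main__":
    # One minute of audio: the backbone sees 5x fewer positions than the head.
    print(frame_counts(60))
```

The point of the comparison is sequence length: the large backbone runs over one fifth as many positions as a 25Hz representation would require, while only the smaller head works at the finer rate.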

It incorporates Core-Cocktail training to retain strong text LLM capabilities alongside audio understanding, reasoning, and generation.

Key strengths include state-of-the-art performance on spoken QA, audio understanding, speech function calling, speech instruction-following, and voice empathy benchmarks (top rankings among similar-sized models on OpenAudioBench, VoiceBench, UltraEval-Audio, MMAU, MMAU-Pro, MMSU, Speech-ACEBench, Speech-BFCL, Speech-SmartInteract, VStyle).

It supports speech-to-text (S2T), speech-to-speech (S2S), full-duplex two-way communication (the Fun-Audio-Chat-Duplex variant), emotional tone detection and response, and tool/function calling via spoken prompts.

It is fully open-source under Apache 2.0, with model weights on Hugging Face and ModelScope, training and inference code, a web demo, and vLLM integration for up to 50x speedup on long audio.

It requires Python 3.12, PyTorch 2.8.0, and a GPU with ~24GB of VRAM for inference. It is well suited to building real-time voice assistants, interactive agents, and multimodal speech applications.
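To put the ~24GB figure in context, here is a back-of-the-envelope estimate of the memory taken by the weights alone. It assumes 16-bit weights (2 bytes per parameter), which is not stated above, and it ignores the speech components, activations, and KV cache, so it is a lower bound rather than a measured requirement.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes.

    Assumption: 2 bytes per parameter (16-bit weights). The real footprint
    also includes activations, cache, and any auxiliary audio modules.
    """
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    weights = weight_memory_gb(8e9)      # 8B parameters
    print(f"weights only: {weights:.1f} GB")
    print(f"headroom on a 24 GB card: {24 - weights:.1f} GB")
```

Under that assumption the weights take about 16 GB, leaving roughly 8 GB on a 24 GB card for everything else.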

Key Features

- Dual-Resolution Speech Representations: 5Hz backbone for efficiency plus 25Hz head for high-quality speech output

- Core-Cocktail Training: Preserves text LLM knowledge while gaining strong audio capabilities

- Low-Latency Voice Interaction: Natural real-time speech-to-speech conversations with minimal delay

- Voice Empathy: Detects and responds to emotional tone, pace, and energy in speech

- Spoken Function Calling: Executes tools and instructions via voice prompts

- Full-Duplex Support: Simultaneous two-way communication in Duplex variant

- Multimodal Audio Understanding: Excels at spoken QA, audio analysis, and instruction-following

- vLLM Inference Acceleration: Up to 20x speedup for short audio clips and 50x for long ones

- Open-Source Ecosystem: Full code, weights, web demo, and evaluation scripts available

- High Benchmark Performance: Tops leaderboards for 8B-scale models across audio tasks
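The spoken function calling feature listed above implies an application-side step that runs the tool the model asks for. The sketch below shows one minimal way to do that, assuming the model's tool request arrives as a JSON string with `name` and `arguments` fields. That format, the `get_weather` stub, and the `dispatch_tool_call` helper are all hypothetical and not taken from the Fun-Audio-Chat code.

```python
import json


def get_weather(city: str) -> str:
    """Stub tool for illustration; a real tool would call a weather service."""
    return f"(stub) weather for {city}"


# Hypothetical registry mapping tool names to Python callables.
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(raw_call: str) -> str:
    """Parse an assumed JSON tool call and run the matching registered tool."""
    call = json.loads(raw_call)
    name = call["name"]
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


if __name__ == "__main__":
    # Pretend the model turned a spoken request into this structured call.
    example = '{"name": "get_weather", "arguments": {"city": "Hangzhou"}}'
    print(dispatch_tool_call(example))
```

Keeping tools in an explicit registry means an unexpected tool name fails loudly instead of executing arbitrary code.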

Price Plans

- Free ($0): Fully open-source under Apache 2.0; model weights, code, demo, and inference scripts available at no cost for local use and modification

Pros

- State-of-the-art efficiency: Nearly 50% less compute with the dual-resolution design while preserving speech quality

- Top benchmark rankings: Leads in spoken QA, audio understanding, function calling, and empathy

- Fully open-source: Apache 2.0 license with complete training/inference code and weights

- Real-time low-latency: Enables natural voice conversations suitable for interactive agents

- Emotional intelligence: Unique voice empathy for more human-like responses

- Acceleration support: vLLM integration dramatically speeds up inference

- Community resources: Hugging Face/ModelScope hosting, interactive demo, and paper

Cons

- High hardware requirements: Needs ~24GB GPU VRAM for inference

- Setup complexity: Requires specific Python/PyTorch versions and dependencies

- Limited languages: Primarily English and Chinese (based on LLM backbone)

- No hosted service: Local deployment only; no cloud API mentioned

- Recent release: Adoption and community integrations still growing

- Potential latency variance: Depends on hardware and audio length

- Evaluation-focused: Strong on benchmarks, but behavior on real-world edge cases may vary

Use Cases

- Voice assistants and chatbots: Build natural spoken dialogue systems with emotion awareness

- Interactive AI agents: Real-time voice interaction for gaming, virtual companions, or customer service

- Spoken question answering: Handle audio-based queries with high accuracy

- Speech instruction-following: Execute complex voice commands and function calls

- Audio understanding tasks: Analyze spoken content for insights or summarization

- Research in LALMs: Fine-tune or extend for new audio-language applications

- Accessibility tools: Voice interfaces for hands-free computing

Target Audience

- AI researchers and developers: Experimenting with audio LLMs and voice AI

- Voice application builders: Creating real-time speech-to-speech systems

- Open-source enthusiasts: Deploying and extending large audio models

- Game and virtual agent creators: Adding natural voice interactions

- Multimodal AI teams: Integrating speech with LLM reasoning

- Alibaba/Tongyi ecosystem users: Leveraging related models like CosyVoice

How To Use

- Clone repo: git clone --recurse-submodules https://github.com/FunAudioLLM/Fun-Audio-Chat

- Install dependencies: Use Python 3.12, PyTorch 2.8.0 (CUDA), and ffmpeg, then run pip install -r requirements.txt

- Download models: From Hugging Face (FunAudioLLM/Fun-Audio-Chat-8B) or ModelScope

- Run inference: python examples/infer_s2t.py for speech-to-text or infer_s2s.py for speech-to-speech

- Launch web demo: Run server (python -m web_demo.server.server) and client (npm run dev)

- Evaluate: Use provided scripts for benchmarks like VoiceBench or OpenAudioBench

- Customize: Modify configs or fine-tune with LLaMA-Factory on your data
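The first steps above can be sketched as a small Python helper that checks the local environment and lists the commands in order without running them. The repository URL, model ID, and script path come from the steps above. The `huggingface-cli download` invocation and the `WEIGHTS_DIR` location are assumptions, since the steps only say to download the weights from Hugging Face or ModelScope.

```python
import shutil
import sys

REPO_URL = "https://github.com/FunAudioLLM/Fun-Audio-Chat"
MODEL_ID = "FunAudioLLM/Fun-Audio-Chat-8B"
# Hypothetical target directory; check the repository README for the real one.
WEIGHTS_DIR = "pretrained_models/Fun-Audio-Chat-8B"


def preflight() -> list:
    """Return a list of environment problems (empty when none are found)."""
    problems = []
    if sys.version_info[:2] != (3, 12):
        found = f"{sys.version_info.major}.{sys.version_info.minor}"
        problems.append(f"Python 3.12 expected, found {found}")
    for tool in ("git", "ffmpeg"):
        if shutil.which(tool) is None:
            problems.append(f"{tool} not found on PATH")
    return problems


def setup_commands() -> list:
    """Commands for the steps above, in order.

    Everything after the clone is meant to run inside the cloned directory.
    """
    return [
        ["git", "clone", "--recurse-submodules", REPO_URL],
        [sys.executable, "-m", "pip", "install", "-r", "requirements.txt"],
        # Assumed download method; ModelScope is the stated alternative.
        ["huggingface-cli", "download", MODEL_ID, "--local-dir", WEIGHTS_DIR],
        [sys.executable, "examples/infer_s2t.py"],
    ]


if __name__ == "__main__":
    for problem in preflight():
        print("warning:", problem)
    for cmd in setup_commands():
        print(" ".join(cmd))
```

Printing the commands rather than executing them keeps the sketch safe to run anywhere; each line can be passed to `subprocess.run` once the environment checks come back clean.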

How we rated Fun-Audio-Chat

- Performance: 4.8/5

- Accuracy: 4.7/5

- Features: 4.6/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.3/5

- Customization: 4.7/5

- Data Privacy: 5.0/5

- Support: 4.4/5

- Integration: 4.5/5

- Overall Score: 4.7/5
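The overall score is consistent with a plain average of the nine category ratings. The check below assumes equal weighting, which the ratings above do not state explicitly.

```python
# Category ratings as listed above.
SCORES = {
    "Performance": 4.8,
    "Accuracy": 4.7,
    "Features": 4.6,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 4.3,
    "Customization": 4.7,
    "Data Privacy": 5.0,
    "Support": 4.4,
    "Integration": 4.5,
}


def overall_score(scores: dict) -> float:
    """Unweighted mean of the category ratings, rounded to one decimal."""
    return round(sum(scores.values()) / len(scores), 1)


if __name__ == "__main__":
    print(overall_score(SCORES))
```

The nine ratings sum to 42.0, and 42.0 / 9 ≈ 4.67, which rounds to the listed 4.7.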

Fun-Audio-Chat integration with other tools

- Hugging Face: Model weights and pipelines for easy download and inference

- ModelScope: Alternative hosting for weights and community access

- vLLM: Inference acceleration backend for significant speedups

- LLaMA-Factory: Training/fine-tuning framework used in development

- CosyVoice: Integrated speech synthesis component for output generation

Best prompts optimised for Fun-Audio-Chat

- N/A: Fun-Audio-Chat is a speech-to-speech audio language model that processes spoken input directly, so core voice interaction needs no text prompts (transcription and response generation are automatic).

- Use spoken questions or commands in real-time voice mode via the demo or the inference scripts.

- For evaluation or custom use, provide audio files or live microphone input instead of text prompts.
