What is Fun-Audio-Chat?

Fun-Audio-Chat is an open-source Large Audio Language Model (8B parameters) from Alibaba’s Tongyi Fun Team, released December 23, 2025, for natural low-latency speech-to-speech and speech-to-text voice interactions.

Is Fun-Audio-Chat free to use?

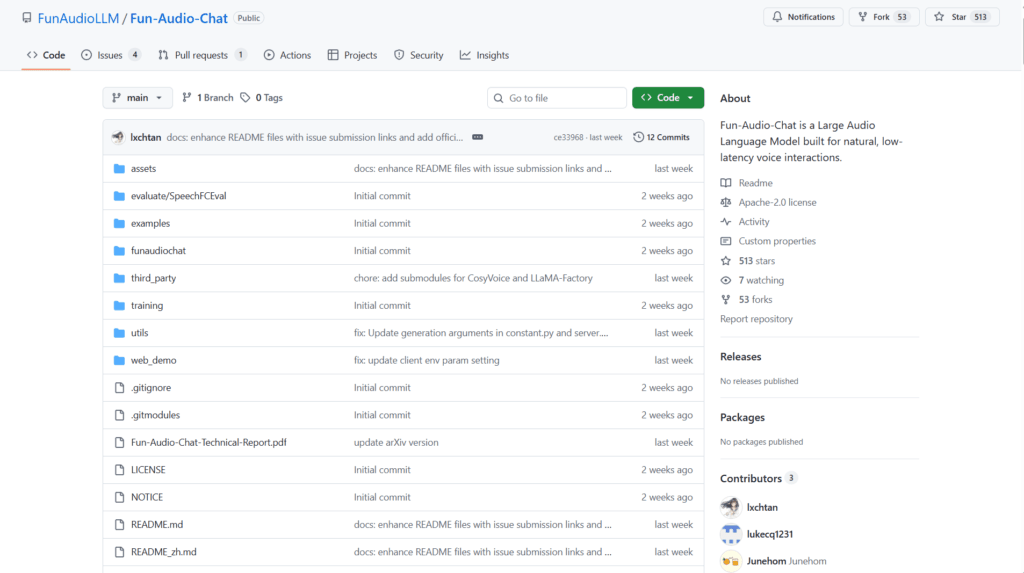

Yes. It is fully open source under the Apache 2.0 license, with model weights, code, and inference/demo scripts freely available on GitHub and Hugging Face.

When was Fun-Audio-Chat released?

The model was officially released on December 23, 2025, with a technical report on arXiv and the open-source code following shortly after.

What are the key innovations in Fun-Audio-Chat?

It uses Dual-Resolution Speech Representations (a 5 Hz backbone plus a 25 Hz head), which cut compute by roughly 50%, and Core-Cocktail training, which preserves the strength of the underlying text LLM alongside the new audio capabilities.
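
To make the two frame rates concrete, here is a minimal sketch of the sequence-length arithmetic. It assumes the 25 Hz head tokens are grouped in fixed blocks to produce the 5 Hz backbone frames; that grouping, and the function name `frame_counts`, are illustrative assumptions and not the model's actual implementation. The ~50% compute figure is the project's own claim and is not derived here.

```python
import math

HEAD_RATE_HZ = 25      # token rate of the speech head, as stated above
BACKBONE_RATE_HZ = 5   # frame rate seen by the backbone, as stated above


def frame_counts(duration_s: float) -> dict:
    """Count head tokens and backbone frames for a clip of the given length.

    Assumption: head tokens are grouped in fixed-size blocks, one block per
    backbone frame. This only illustrates the ratio between the two rates.
    """
    head_tokens = math.ceil(duration_s * HEAD_RATE_HZ)
    group_size = HEAD_RATE_HZ // BACKBONE_RATE_HZ
    backbone_frames = math.ceil(head_tokens / group_size)
    return {
        "head_tokens": head_tokens,
        "group_size": group_size,
        "backbone_frames": backbone_frames,
    }


print(frame_counts(10.0))
# {'head_tokens': 250, 'group_size': 5, 'backbone_frames': 50}
```

Under this assumption, a 10-second clip occupies 50 backbone positions instead of 250, which is where a shorter backbone sequence would save compute.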

What hardware is required for Fun-Audio-Chat?

Inference needs approximately 24 GB of GPU VRAM, and training requires four 80 GB GPUs. The model also supports vLLM for significant inference speedups.
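
As a quick pre-flight check, the sketch below reads total GPU memory from `nvidia-smi` and lists the GPUs that meet the inference figure quoted above. The threshold of 24,000 MiB is an assumption chosen to approximate "about 24 GB" (cards sold as 24 GB often report slightly less than 24,576 MiB), and the helper names are hypothetical. It says nothing about actual peak usage, which depends on batch size and context length.

```python
import shutil
import subprocess

# Assumed threshold approximating the ~24 GB inference figure quoted above.
REQUIRED_MIB = 24_000


def parse_memory_mib(smi_output: str) -> list[int]:
    """Parse one total-memory value (MiB) per line of nvidia-smi CSV output."""
    return [int(line.strip()) for line in smi_output.splitlines() if line.strip()]


def gpus_meeting_requirement(memory_mib: list[int],
                             required_mib: int = REQUIRED_MIB) -> list[int]:
    """Return the indices of GPUs whose total memory meets the threshold."""
    return [i for i, mib in enumerate(memory_mib) if mib >= required_mib]


def query_gpu_memory() -> list[int]:
    """Ask nvidia-smi for total memory per GPU; return [] if unavailable."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(
        ["nvidia-smi", "--query-gpu=memory.total",
         "--format=csv,noheader,nounits"],
        capture_output=True, text=True, check=False,
    )
    if result.returncode != 0:
        return []
    return parse_memory_mib(result.stdout)


if __name__ == "__main__":
    memory = query_gpu_memory()
    print("GPU memory (MiB):", memory)
    print("GPUs meeting the inference threshold:", gpus_meeting_requirement(memory))
```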

Does Fun-Audio-Chat support voice empathy?

Yes, it detects emotional tone, pace, and energy in speech and responds appropriately for more natural conversations.

What benchmarks does Fun-Audio-Chat excel in?

It ranks top among similar-sized models on OpenAudioBench, VoiceBench, UltraEval-Audio, MMAU series, and speech function/instruction benchmarks.

How do I run Fun-Audio-Chat locally?

Clone the GitHub repo, install dependencies (Python 3.12, PyTorch 2.8.0, ffmpeg), download weights from Hugging Face, and run inference scripts like infer_s2t.py or infer_s2s.py.
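
Before running the inference scripts, it can help to confirm that the prerequisites listed above are in place. The following is a minimal sketch, not part of the project: the function name `check_environment` is hypothetical, and it assumes PyTorch is installed under the distribution name `torch`. It only inspects the local environment and does not download or run anything.

```python
import shutil
import sys
from importlib import metadata


def check_environment(expected_python=(3, 12), expected_torch="2.8.0") -> dict:
    """Report whether the prerequisites named in the answer above are present."""
    report = {}

    # Python major.minor version of the current interpreter.
    report["python_ok"] = tuple(sys.version_info[:2]) == tuple(expected_python)

    # ffmpeg must be discoverable on PATH.
    report["ffmpeg_on_path"] = shutil.which("ffmpeg") is not None

    # Installed PyTorch version, if any. Local build tags such as "+cu128"
    # are ignored when comparing against the expected version.
    try:
        torch_version = metadata.version("torch")
    except metadata.PackageNotFoundError:
        torch_version = None
    report["torch_version"] = torch_version
    report["torch_ok"] = (
        torch_version is not None
        and torch_version.split("+")[0] == expected_torch
    )
    return report


if __name__ == "__main__":
    for name, value in check_environment().items():
        print(f"{name}: {value}")
```

If any entry comes back `False` or `None`, fix that dependency first and then move on to downloading the weights and running `infer_s2t.py` or `infer_s2s.py`.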