What is FunASR?

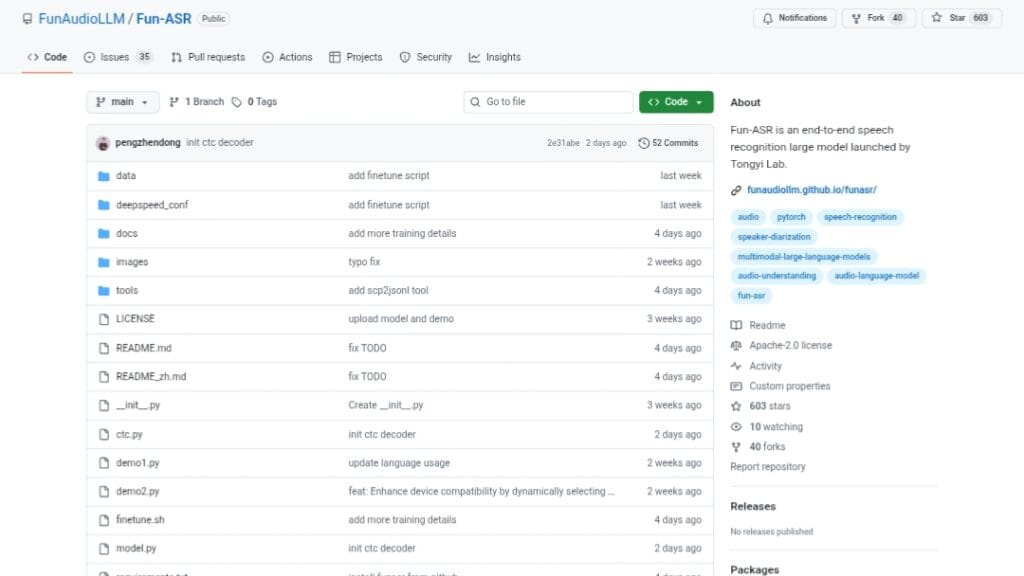

FunASR is an open-source, end-to-end speech recognition toolkit from Alibaba/ModelScope. Alongside ASR, it supports voice activity detection (VAD), punctuation restoration, speaker diarization, emotion recognition, and more, with state-of-the-art (SOTA) pretrained models.

Is FunASR free to use?

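Yes. The code is free and open-source under the MIT license, and the full source, pretrained models, and deployment tools are available on GitHub and ModelScope. The pretrained models are distributed under a separate model license, so review its terms before commercial use.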

What languages does FunASR support?

It performs best on Chinese (Mandarin plus dialects and accents). It also handles English, Japanese, Korean, and other languages through multilingual models such as SenseVoice and Whisper, and Fun-ASR-Nano covers 31 languages.

When was FunASR released or last updated?

It was first released around 2023, and major updates have continued into 2026. Fun-ASR-Nano-2512 arrived in December 2025, and fixes were still landing in January 2026.

What are the key models in FunASR?

Popular ones include Paraformer-large (high accuracy/efficiency), Fun-ASR-Nano-2512 (31 languages, real-time), SenseVoiceSmall (multi-task), and Whisper variants.

Does FunASR support real-time transcription?

Yes, it offers low-latency streaming ASR with configurable chunks, VAD for segmentation, and runtime services for real-time deployment.
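Here is a rough illustration of chunk-based streaming. FunASR's published paraformer-zh-streaming example uses chunk_size = [0, 10, 5]. The middle value counts 60 ms frames, which are 960 samples each at 16 kHz, so each chunk is 600 ms long. The sketch below assumes 16 kHz mono audio and uses hypothetical helper names (chunk_stride, iter_chunks). It only shows how audio is cut into chunks and how the last chunk is flagged as final. It does not call FunASR.

```python
from typing import Iterator, Sequence, Tuple

SAMPLES_PER_FRAME = 960  # 60 ms at an assumed 16 kHz sample rate


def chunk_stride(chunk_size: Sequence[int]) -> int:
    """Samples per streaming chunk; the middle entry counts 60 ms frames."""
    return chunk_size[1] * SAMPLES_PER_FRAME


def iter_chunks(samples: Sequence[float], stride: int) -> Iterator[Tuple[Sequence[float], bool]]:
    """Yield (chunk, is_final) pairs; the last chunk may be shorter than stride."""
    total = max(1, -(-len(samples) // stride))  # ceiling division, at least one chunk
    for i in range(total):
        yield samples[i * stride:(i + 1) * stride], i == total - 1
```

With [0, 10, 5], the stride is 9,600 samples, so 2 s of audio yields four chunks. In a real streaming loop, each chunk would be passed to model.generate() together with a shared cache dict, the is_final flag, and chunk_size. Check the current FunASR README for the exact arguments.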

How popular is FunASR?

It has over 14.8k GitHub stars and 1.6k forks, and it is widely used in research and industry as one of the leading open-source ASR toolkits.

How do I install and use FunASR?

Install it with pip install -U funasr, load a model with AutoModel, and call model.generate() on an audio file to transcribe it. For production servers, set up the runtime services instead.

FunASR

About This AI

FunASR is a comprehensive open-source speech recognition toolkit developed by Alibaba’s DAMO Academy and ModelScope team, providing an end-to-end pipeline for automatic speech recognition (ASR) and related tasks.

It supports both non-streaming and streaming ASR with high accuracy, low latency, and efficient deployment for industrial applications.

Core capabilities include voice activity detection (VAD), punctuation restoration, speaker diarization, timestamp prediction, keyword spotting, emotion recognition, and multilingual transcription.
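Here is an example of post-processing diarized output. When a speaker model such as CAM++ is attached, FunASR can return sentence-level results tagged with a speaker id. The sketch below assumes each sentence is a dict with spk, start, end (in ms), and text keys. Check that shape against your version's actual output. merge_speaker_turns is a hypothetical helper that collapses consecutive sentences from the same speaker into one turn, which is the form call-center transcripts are usually read in.

```python
from typing import Dict, List


def merge_speaker_turns(sentences: List[Dict]) -> List[Dict]:
    """Merge consecutive sentences from the same speaker into one turn.

    Each sentence is assumed to be a dict with 'spk', 'start', 'end' (ms), and 'text'.
    """
    turns: List[Dict] = []
    for sentence in sentences:
        if turns and turns[-1]["spk"] == sentence["spk"]:
            # Same speaker as the previous turn: extend its end time and text.
            turns[-1]["end"] = sentence["end"]
            turns[-1]["text"] += " " + sentence["text"]
        else:
            turns.append(dict(sentence))  # copy so the caller's list is not mutated
    return turns
```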

The toolkit features SOTA pretrained models such as Paraformer (non-autoregressive, high efficiency), Conformer, Whisper variants, SenseVoice (a multi-task speech foundation model), and the latest Fun-ASR-Nano-2512. Fun-ASR-Nano-2512 has 800M parameters, was trained on tens of millions of hours of audio, and covers 31 languages with low-latency real-time transcription.

It excels in noisy environments, real-world enterprise scenarios (conferences, call centers, in-car speech), and supports Chinese (with dialects/accents), English, Japanese, Korean, and more via multilingual models.

FunASR offers runtime services for offline file transcription, optimized for both GPU and CPU. The GPU service reports a single-thread real-time factor (RTF) as low as 0.0076. It also offers real-time streaming (chunk-based, low latency) and ONNX export for edge deployment.
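RTF (real-time factor) is processing time divided by audio duration, so lower is faster and anything below 1.0 is faster than real time. The helpers below are our own and serve as a quick sanity check of the quoted figure. At RTF 0.0076, one hour (3,600 s) of audio needs about 0.0076 × 3,600 ≈ 27.4 s of processing. Actual throughput depends on hardware, batch settings, and the audio itself, so treat this as arithmetic, not a benchmark.

```python
def real_time_factor(processing_seconds: float, audio_seconds: float) -> float:
    """RTF = processing time / audio duration; below 1.0 is faster than real time."""
    if audio_seconds <= 0:
        raise ValueError("audio duration must be positive")
    return processing_seconds / audio_seconds


def expected_processing_seconds(rtf: float, audio_seconds: float) -> float:
    """Invert the RTF definition to estimate compute time for a given duration."""
    return rtf * audio_seconds
```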

FunASR was first released around 2023 and has received major updates through 2025-2026 (Fun-ASR-Nano in December 2025, ongoing fixes in January 2026). Its code is open-source under the MIT license, while pretrained models carry a separate model license. The project has over 14.8k GitHub stars, an active community, and a model zoo on ModelScope and Hugging Face.

Installation is simple via pip, with tutorials for inference, training, fine-tuning, and production deployment.

Ideal for developers building ASR applications, researchers in speech AI, enterprises needing robust transcription services, and anyone requiring customizable, high-performance speech-to-text without proprietary dependencies.

Key Features

- End-to-End ASR Pipeline: Integrates VAD, ASR, punctuation, speaker diarization, timestamps, and inverse text normalization (ITN) in one toolkit

- Streaming and Non-Streaming Support: Real-time low-latency transcription with configurable chunk sizes

- Multilingual and Multi-Dialect: Covers Chinese (7 dialects, 26 accents), English, Japanese, Korean, and more via SenseVoice/Whisper

- High-Accuracy Models: Paraformer-large, Fun-ASR-Nano-2512 (31 languages), Conformer, Whisper-large-v3

- Voice Activity Detection: FSMN-VAD for accurate audio segmentation

- Punctuation Restoration: CT-Punc model restores natural punctuation in recognized text (ITN is a separate processing step)

- Speaker Diarization: CAM++ speaker embeddings to attribute speech segments to individual speakers

- Emotion Recognition: Emotion2vec+large for detecting angry, happy, neutral, sad emotions

- Keyword Spotting: FSMN-KWS for real-time keyword detection

- Runtime Services: Optimized servers for offline file transcription (GPU single-thread RTF 0.0076) and real-time transcription

- ONNX Export: Deploy models on edge devices or non-PyTorch environments
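The sketch below shows how the features above chain together: VAD first, then ASR on each speech segment, then punctuation. FSMN-VAD is documented to return segments as [[begin_ms, end_ms], ...], and this code assumes that format. The vad, asr, and punc callables are hypothetical stand-ins, and run_pipeline is our own helper. In FunASR itself, this chaining happens inside AutoModel when vad_model and punc_model are passed.

```python
from typing import Callable, List, Sequence

Segment = List[int]  # [begin_ms, end_ms], the format FSMN-VAD is documented to return


def ms_to_samples(ms: int, sample_rate: int = 16_000) -> int:
    """Convert a millisecond offset into a sample index."""
    return ms * sample_rate // 1000


def run_pipeline(
    audio: Sequence[float],
    vad: Callable[[Sequence[float]], List[Segment]],
    asr: Callable[[Sequence[float]], str],
    punc: Callable[[str], str],
    sample_rate: int = 16_000,
) -> str:
    """VAD -> per-segment ASR -> punctuation over the joined text."""
    pieces = []
    for begin_ms, end_ms in vad(audio):
        start = ms_to_samples(begin_ms, sample_rate)
        stop = ms_to_samples(end_ms, sample_rate)
        text = asr(audio[start:stop])  # recognize only the detected speech span
        if text:
            pieces.append(text)
    return punc(" ".join(pieces))
```

Running punctuation once over the joined text, rather than per segment, lets the punctuation model see sentence context that crosses VAD boundaries.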

Price Plans

- Free ($0): Fully open-source toolkit, runtime services, and inference code under the MIT license, plus pretrained models under their own model license; no usage fees

- Cloud (Alibaba Cloud ModelScope): Optional hosted inference/API via Alibaba Cloud (pay-per-use, not part of core toolkit)

- Enterprise Support (Custom): Potential premium consulting or deployment support from Alibaba (not specified in repo)

Pros

- Industrial-Grade Performance: Optimized for real-world noisy scenarios with high accuracy and low RTF

- Extensive Model Zoo: Over 100 pretrained models on ModelScope/Hugging Face for various tasks

- Active Development: Frequent updates (latest in Jan 2026) and strong community (14.8k GitHub stars)

- Full Open-Source: MIT license for code, a separate model license for pretrained weights, and easy pip install and deployment

- Multilingual Excellence: Strong support for Chinese dialects/accents and global languages

- Efficient Deployment: Streaming, ONNX, GPU/CPU runtime services for production use

- Comprehensive Tasks: Beyond ASR, includes VAD, punctuation, diarization, emotion recognition, and KWS

Cons

- Hardware Requirements: Large models (e.g., 800M+ parameters) need a capable GPU for real-time or high-throughput use

- Setup for Advanced Use: Runtime services and fine-tuning require additional configuration

- Primary Chinese Focus: Best performance on Mandarin and Chinese dialects; support for some other languages is less mature

- Dependency Heavy: Relies on PyTorch, torchaudio, and other libs; no one-click installer

- Documentation Language: Many resources in Chinese alongside English

- No Hosted Service: Self-hosted only; no cloud API from toolkit (Alibaba Cloud separate)

- Edge Cases: Very noisy audio or strong accents may still require fine-tuning

Use Cases

- Real-time transcription: Live captioning for meetings, calls, or broadcasts with low latency

- Offline batch processing: Transcribe long audio/video files (podcasts, lectures, interviews) with punctuation and timestamps

- Call center analytics: Speaker diarization, emotion detection, and keyword spotting for customer service

- Multilingual applications: ASR for global products supporting Chinese, English, Japanese, Korean

- Research and development: Fine-tune models or benchmark new speech techniques

- Edge device deployment: Use ONNX exports for mobile or embedded ASR

- Content creation: Subtitle generation, lyric/rap recognition, emotion-aware voice analysis
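For the subtitle use cases, here is a minimal sketch that turns timed transcript segments into SRT cues. It assumes you have already collected (start_ms, end_ms, text) triples, for example from sentence-level timestamps in FunASR's output. That input shape and the helper names srt_time and to_srt are our own, not a FunASR API.

```python
from typing import Iterable, Tuple


def srt_time(ms: int) -> str:
    """Format milliseconds as an SRT timestamp HH:MM:SS,mmm."""
    hours, rem = divmod(ms, 3_600_000)
    minutes, rem = divmod(rem, 60_000)
    seconds, millis = divmod(rem, 1000)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d},{millis:03d}"


def to_srt(segments: Iterable[Tuple[int, int, str]]) -> str:
    """Build numbered SRT cues separated by blank lines."""
    cues = []
    for index, (start, end, text) in enumerate(segments, start=1):
        cues.append(f"{index}\n{srt_time(start)} --> {srt_time(end)}\n{text}\n")
    return "\n".join(cues)
```

For example, srt_time(3_723_004) gives "01:02:03,004".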

Target Audience

- Developers and AI engineers: Building custom ASR pipelines or integrating speech features

- Researchers in speech AI: Experimenting with SOTA models and multilingual ASR

- Enterprises and startups: Needing robust, deployable transcription for products/services

- Call center operators: Real-time monitoring and analytics with diarization/emotion

- Content platforms: Auto-subtitling videos/podcasts in multiple languages

- Open-source contributors: Extending or fine-tuning the toolkit

How To Use

- Install toolkit: Run pip install -U funasr (or from source: git clone and pip install -e .)

- Load model: Use AutoModel with pretrained name (e.g., paraformer-zh, SenseVoiceSmall)

- Transcribe audio: Call model.generate(input=audio_file) for offline or streaming inference

- Streaming example: Use paraformer-zh-streaming with chunk_size for real-time

- Add VAD/Punc: Pass fsmn-vad and ct-punc to AutoModel as vad_model and punc_model to chain them into one pipeline

- Deploy runtime: Follow runtime/readme for offline file or real-time server setup

- Export ONNX: Use funasr-export command for edge deployment
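Here is a minimal sketch of the offline steps above in Python. It assumes funasr and its PyTorch dependencies are installed. It also assumes the model names paraformer-zh, fsmn-vad, and ct-punc resolve from the model hub on first use. transcribe and join_texts are our own hypothetical helpers, not part of FunASR. The funasr import is deferred into the function so the module still loads where funasr is absent.

```python
from typing import Any, Dict, List


def transcribe(path: str, device: str = "cpu") -> str:
    """Offline transcription with VAD and punctuation (requires `pip install -U funasr`)."""
    from funasr import AutoModel  # deferred import: only needed when actually transcribing

    model = AutoModel(
        model="paraformer-zh",  # ASR model name from the model zoo
        vad_model="fsmn-vad",   # segments long audio before recognition
        punc_model="ct-punc",   # restores punctuation on the recognized text
        device=device,          # e.g. "cuda:0" when a GPU is available
    )
    results = model.generate(input=path, batch_size_s=300)
    return join_texts(results)


def join_texts(results: List[Dict[str, Any]]) -> str:
    """Concatenate the 'text' field of each result dict, skipping entries without one."""
    return "".join(r.get("text", "") for r in results)
```

Calling transcribe("meeting.wav"), where "meeting.wav" is a placeholder path, would typically download the three models on the first run and return the punctuated transcript.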

How we rated FunASR

- Performance: 4.8/5

- Accuracy: 4.7/5

- Features: 4.9/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.4/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.5/5

- Integration: 4.6/5

- Overall Score: 4.8/5

FunASR integration with other tools

- ModelScope and Hugging Face: Direct model downloads and inference pipelines from both hubs

- PyTorch Ecosystem: Built on PyTorch for training, fine-tuning, and custom model development

- ONNX Runtime: Export models to ONNX for cross-platform/edge deployment (CPU/GPU/Web)

- Runtime Services: CPU/GPU servers for offline file and real-time streaming transcription

- Third-Party Frameworks: Supports Whisper and Qwen-Audio models in its model zoo alongside its own models

Best prompts optimised for FunASR

- Not applicable: FunASR is a speech recognition toolkit. You provide audio input (WAV, MP3, etc.) directly to its models for transcription; it does not take text prompts the way LLM or image/video generation tools do.
