What is FunASR?

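FunASR is an open-source, end-to-end speech recognition toolkit from Alibaba/ModelScope. It supports automatic speech recognition (ASR), voice activity detection (VAD), punctuation restoration, speaker diarization, emotion recognition, and more, and it ships with state-of-the-art pretrained models.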

Is FunASR free to use?

Yes. It is free and open-source under the MIT License, with the full code, pretrained models, and deployment tools available on GitHub and ModelScope.

What languages does FunASR support?

It is strongest in Chinese (Mandarin plus dialects and accents), English, Japanese, and Korean, and it handles further languages through multilingual models such as SenseVoice and Whisper. Fun-ASR-Nano covers 31 languages.

When was FunASR released or last updated?

FunASR was first released around 2023, and major updates continue into 2026, including Fun-ASR-Nano-2512 in December 2025 and fixes as recent as January 2026.

What are the key models in FunASR?

Popular ones include Paraformer-large (high accuracy/efficiency), Fun-ASR-Nano-2512 (31 languages, real-time), SenseVoiceSmall (multi-task), and Whisper variants.

Does FunASR support real-time transcription?

Yes, it offers low-latency streaming ASR with configurable chunks, VAD for segmentation, and runtime services for real-time deployment.
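To make "configurable chunks" concrete, here is a minimal sketch of a chunked streaming loop. It assumes the pattern used in FunASR's published streaming example: `chunk_size = [0, 10, 5]`, 960 samples per unit at 16 kHz (about 600 ms per chunk), and a model whose `generate` accepts `input`, `cache`, `is_final`, and `chunk_size` and returns a list of dicts with a `"text"` key. The helper names `iter_chunks` and `stream_transcribe` are hypothetical and not part of FunASR; check the parameters against the current FunASR documentation before relying on them.

```python
from typing import Any, Dict, Iterator, List, Sequence, Tuple

# Assumed values, modeled on FunASR's streaming example:
# [0, 10, 5] with 960 samples per unit gives 9600 samples (600 ms at 16 kHz).
CHUNK_SIZE = [0, 10, 5]
CHUNK_STRIDE = CHUNK_SIZE[1] * 960


def iter_chunks(
    samples: Sequence[float], stride: int = CHUNK_STRIDE
) -> Iterator[Tuple[Sequence[float], bool]]:
    """Yield (chunk, is_final) pairs that cover `samples` in order."""
    if stride <= 0:
        raise ValueError("stride must be positive")
    # Ceiling division; always emit at least one (possibly empty) final chunk.
    total = max(1, (len(samples) + stride - 1) // stride)
    for i in range(total):
        yield samples[i * stride:(i + 1) * stride], i == total - 1


def stream_transcribe(model: Any, samples: Sequence[float]) -> List[str]:
    """Feed chunks to a streaming model and collect the partial texts.

    `model` is assumed to expose a FunASR-style generate() method.
    """
    cache: Dict[str, Any] = {}  # shared across calls so the model keeps its state
    partials: List[str] = []
    for chunk, is_final in iter_chunks(samples):
        results = model.generate(
            input=chunk, cache=cache, is_final=is_final, chunk_size=CHUNK_SIZE
        )
        partials.extend(r.get("text", "") for r in results)
    return partials
```

With FunASR installed, `model` would typically come from `AutoModel(model="paraformer-zh-streaming")` and `samples` from a 16 kHz mono audio file. Smaller chunks reduce latency at some cost in accuracy, so the chunk size is worth tuning per deployment.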

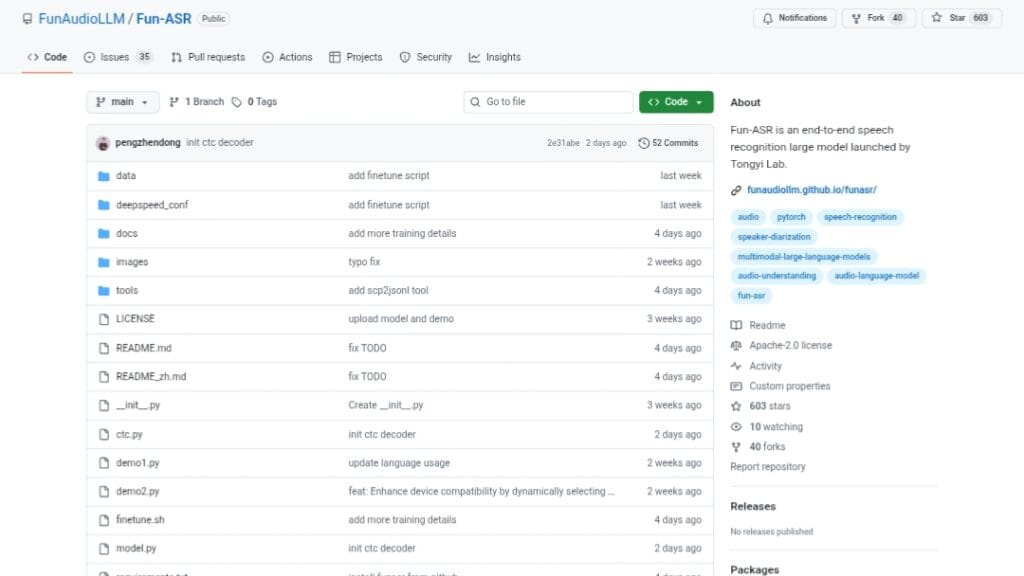

How popular is FunASR?

It has over 14.8k GitHub stars and 1.6k forks, and it is widely used in research and industry as one of the leading open-source ASR toolkits.

How do I install and use FunASR?

Install it with `pip install -U funasr`, load a model with `AutoModel`, and run inference on audio files to get transcriptions. For production servers, set up the FunASR runtime services.
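The load-and-transcribe flow might look like the following sketch. It assumes `AutoModel(model=...)` and `generate(input=...)` behave as in FunASR's published examples, where the result is a list of dicts carrying a `"text"` field. The wrapper functions `load_model`, `join_text`, and `transcribe` are hypothetical helpers for illustration, and the default model name `paraformer-zh` should be verified against the current model list.

```python
from typing import Any, Dict, List


def load_model(name: str = "paraformer-zh", **kwargs: Any) -> Any:
    """Load a FunASR model by name; requires `pip install -U funasr`."""
    # Imported lazily so the helpers below work without funasr installed.
    from funasr import AutoModel
    return AutoModel(model=name, **kwargs)


def join_text(results: List[Dict[str, Any]]) -> str:
    """Join the non-empty "text" fields of a generate() result list."""
    return " ".join(r["text"].strip() for r in results if r.get("text"))


def transcribe(model: Any, audio_path: str) -> str:
    """Run offline inference on one audio file and return the transcript."""
    return join_text(model.generate(input=audio_path))
```

A typical call would be `transcribe(load_model(), "meeting.wav")`, where `meeting.wav` is a placeholder path. FunASR's examples also pass extra keyword arguments such as `vad_model="fsmn-vad"` and `punc_model="ct-punc"` to `AutoModel` to add segmentation and punctuation; treat those names as assumptions to confirm for the installed version.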