What is GLM-4-Voice?

GLM-4-Voice is an end-to-end open-source voice model from Zhipu AI that directly understands and generates Chinese and English speech for real-time conversations with emotion control.

When was GLM-4-Voice released?

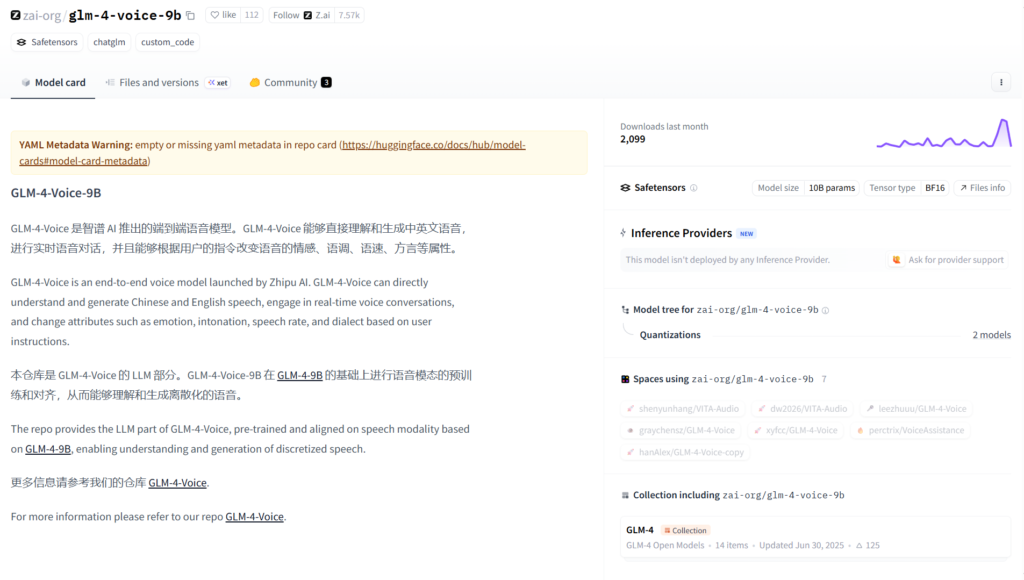

It was open-sourced in October 2024 as part of the GLM-4 series, with weights hosted on Hugging Face.

Is GLM-4-Voice free to use?

Yes. It is fully open-source: the model weights and code are available on Hugging Face under a permissive license, and there are no usage fees.

What languages does GLM-4-Voice support?

It primarily supports Chinese and English, including mixed bilingual conversations.

Can GLM-4-Voice change voice emotion?

Yes, users control emotion (happy, sad, angry, calm, etc.) through natural language instructions during generation.

What hardware is needed for GLM-4-Voice?

The 9B model requires a capable GPU for real-time inference. It can be deployed locally using the Hugging Face transformers library.
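As a rough guide to what "capable GPU" might mean, the sketch below estimates the memory needed just to hold 9 billion parameters at a few common numeric precisions. The parameter count is taken at face value from the "9B" label, and the list of precisions is an assumption for illustration, not something stated for this model. The estimate covers weights only and ignores activations, caches, and any other components loaded alongside the model.

```python
# Back-of-the-envelope weight-memory estimate for a "9B" model.
# Assumption: exactly 9e9 parameters; real checkpoints may differ slightly.
PARAMS = 9_000_000_000

# Bytes used per parameter at each precision (illustrative choices).
BYTES_PER_PARAM = {"float32": 4.0, "bfloat16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params: int, bytes_per_param: float) -> float:
    """Memory in GiB needed to store the weights alone."""
    return num_params * bytes_per_param / 1024**3


def estimate_all(num_params: int = PARAMS) -> dict:
    """Weight memory in GiB, rounded to one decimal, for each precision."""
    return {
        name: round(weight_memory_gib(num_params, size), 1)
        for name, size in BYTES_PER_PARAM.items()
    }


print(estimate_all())
# {'float32': 33.5, 'bfloat16': 16.8, 'int8': 8.4, 'int4': 4.2}
```

Under these assumptions, half-precision weights alone would occupy roughly 17 GiB, so actual headroom needs to be larger than the figure shown.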

How does GLM-4-Voice work?

It processes audio input directly to generate audio output, enabling real-time voice dialogue without separate ASR/TTS.

Where can I try GLM-4-Voice?

Use the Hugging Face Spaces demo for quick online testing, or download the weights and code to run it locally.

GLM-4-Voice

About This AI

GLM-4-Voice is an end-to-end voice model developed by Zhipu AI (Z.ai), capable of directly understanding and generating speech in Chinese and English.

It supports real-time voice conversations with natural flow, low latency, and high expressiveness.

Users can control voice emotion, tone, and style through natural language instructions during generation, enabling expressive outputs like happy, sad, angry, or calm speech.

The model handles bilingual mixed conversations seamlessly, maintaining context across languages.

Key strengths include realistic prosody, accurate pronunciation for Chinese/English, and robust performance on noisy or accented inputs.

With 9B parameters, it achieves fast inference suitable for interactive applications.

It is released as open source on Hugging Face under a permissive license, with inference code and weights for local deployment.

It is well suited to voice assistants, real-time translation and dubbing, interactive storytelling, language learning tools, voiced gaming NPCs, and accessibility applications.

It builds on the GLM-4 series foundation, extending it to audio understanding and generation without separate ASR/TTS modules.

The project is community-driven, with demos and examples available for quick testing.

Key Features

- End-to-end speech processing: Direct audio input to audio output without intermediate text steps

- Real-time voice dialogue: Low-latency conversational speech in Chinese and English

- Emotion and style control: Modify voice tone via natural language instructions (e.g., speak happily, angrily, calmly)

- Bilingual support: Seamless handling of mixed Chinese-English conversations

- Natural prosody and pronunciation: High-quality intonation, rhythm, and accent accuracy

- Robust to noise/accents: Performs well on varied input conditions

- Open-source inference: Full code and weights for local running

- GLM-4 foundation: Builds on the GLM-4 series base model, extended to audio understanding and generation

- Interactive demos: Available on Hugging Face Spaces for quick testing

- Expressive generation: Supports dynamic emotional adjustments mid-conversation

Price Plans

- Free ($0): Fully open-source model with weights, code, and inference scripts available on Hugging Face; no usage fees

- Cloud/Enterprise (Custom): Hosted options may be offered through the Zhipu AI platform in the future (none specified for this model)

Pros

- Fully open-source: Permissive license with weights and code freely available

- Strong bilingual performance: Excellent for Chinese-English real-time voice applications

- Emotional expressiveness: Unique instruction-based control over voice style and mood

- Low-latency inference: Suitable for live conversations and interactive use

- End-to-end simplicity: No need for separate ASR/TTS pipelines

- Community support: Hugging Face hosting with discussions and examples

- High-quality output: Natural-sounding speech with good prosody

Cons

- Limited to Chinese-English: Primary focus on bilingual support; other languages not emphasized

- Hardware requirements: The 9B model needs a capable GPU for real-time performance

- Setup for local use: Requires installing dependencies and downloading large weights

- No hosted API: Primarily self-hosted; no official cloud inference mentioned

- Early-stage model: Still maturing, with ongoing community improvements

- Potential latency on low-end hardware: Real-time performance may vary without optimization

- Limited benchmarks: Fewer public metrics compared to text-only models

Use Cases

- Real-time voice assistants: Build bilingual chatbots with emotional responses

- Language learning tools: Practice conversations with an expressive AI tutor

- Interactive storytelling: Generate narrated stories with dynamic voice changes

- Gaming NPCs: Create expressive voice characters in games

- Accessibility applications: Voice interfaces for visually impaired users

- Dubbing and translation: Real-time speech-to-speech conversion

- Customer service bots: Emotional voice support in Chinese/English

Target Audience

- AI developers and researchers: Experimenting with open-source voice models

- App builders: Creating voice-enabled products in Chinese/English markets

- Language educators: Developing interactive learning tools

- Game developers: Adding expressive NPCs

- Open-source enthusiasts: Fine-tuning or deploying locally

- Accessibility advocates: Building inclusive voice interfaces

How To Use

- Visit Hugging Face: Go to huggingface.co/zai-org/glm-4-voice-9b for model card and files

- Install dependencies: Use pip to install the requirements listed in the Hugging Face or GitHub repository

- Download model: Load the weights via the transformers library

- Run inference: Use provided scripts for audio input/output

- Control emotion: Add instructions like 'speak happily' in prompts

- Test demos: Try Spaces demo for quick online experience

- Deploy locally: Integrate into apps with microphone/speaker support
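The download step above could be sketched as follows. This is a minimal illustration, not the project's official setup script: it assumes the `huggingface_hub` package is installed and uses its `snapshot_download` function to fetch the repository named in the first step. The helper names `local_weights_dir` and `download_weights`, and the `models` folder, are hypothetical choices made for this example.

```python
from pathlib import Path

# Repository id taken from the "Visit Hugging Face" step above.
REPO_ID = "zai-org/glm-4-voice-9b"


def local_weights_dir(base: Path, repo_id: str = REPO_ID) -> Path:
    """Folder under `base` where this sketch stores the weights (hypothetical layout)."""
    return base / repo_id.split("/")[-1]


def download_weights(base: Path, repo_id: str = REPO_ID) -> Path:
    """Download the whole model repository and return its local path."""
    # Imported lazily so the path helper works even without the package installed.
    from huggingface_hub import snapshot_download

    target = local_weights_dir(base, repo_id)
    # Fetches every file in the repository; expect a multi-gigabyte download.
    snapshot_download(repo_id=repo_id, local_dir=str(target))
    return target
```

Calling `download_weights(Path("models"))` would place the files under `models/glm-4-voice-9b`. Running inference afterwards still depends on the scripts shipped with the repository.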

How we rated GLM-4-Voice

- Performance: 4.5/5

- Accuracy: 4.6/5

- Features: 4.7/5

- Cost-Efficiency: 5.0/5

- Ease of Use: 4.3/5

- Customization: 4.8/5

- Data Privacy: 5.0/5

- Support: 4.4/5

- Integration: 4.5/5

- Overall Score: 4.6/5
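The page does not say how the overall score is derived. As a quick check, the sketch below shows that it is consistent with an unweighted mean of the nine category scores, rounded to one decimal. The equal weighting is an assumption, not a documented method.

```python
from statistics import mean

# Category scores copied from the list above.
SCORES = {
    "Performance": 4.5,
    "Accuracy": 4.6,
    "Features": 4.7,
    "Cost-Efficiency": 5.0,
    "Ease of Use": 4.3,
    "Customization": 4.8,
    "Data Privacy": 5.0,
    "Support": 4.4,
    "Integration": 4.5,
}

# Assumption: every category carries equal weight.
overall = round(mean(SCORES.values()), 1)
print(overall)  # 4.6
```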

GLM-4-Voice integration with other tools

- Hugging Face Transformers: Direct loading and inference via official library

- GitHub Repository: Full code examples and community contributions

- Web Demos (Spaces): Online testing without local setup

- Voice Frameworks: Compatible with Gradio, Streamlit, or custom apps for UI

- Local Hardware: Runs on GPUs with CUDA for real-time performance

Best prompts optimised for GLM-4-Voice

- Generate a happy and enthusiastic response in Chinese: Hello, how are you today?

- Speak in a calm and soothing English voice: Take a deep breath and relax, everything will be okay.

- Respond angrily in bilingual mode: Why did you do that? 这太令人失望了!

- Use a professional and neutral tone for customer service: Thank you for your call. How may I assist you today?

- Speak excitedly like a storyteller in English: Once upon a time in a faraway land...
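The prompts above share one pattern: a style instruction, a colon, then the text to be spoken. A small helper like the one below could keep that pattern consistent in an application. The `StyledPrompt` class is hypothetical and only builds the instruction string. How that string is passed to the model depends on the inference scripts in the repository.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StyledPrompt:
    """A style instruction paired with the text to speak (hypothetical helper)."""

    style: str  # e.g. "Speak in a calm and soothing English voice"
    text: str   # the sentence the model should say

    def render(self) -> str:
        """Join instruction and text in the 'instruction: text' form used above."""
        return f"{self.style.strip()}: {self.text.strip()}"


prompt = StyledPrompt(
    style="Speak in a calm and soothing English voice",
    text="Take a deep breath and relax, everything will be okay.",
)
print(prompt.render())
```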
