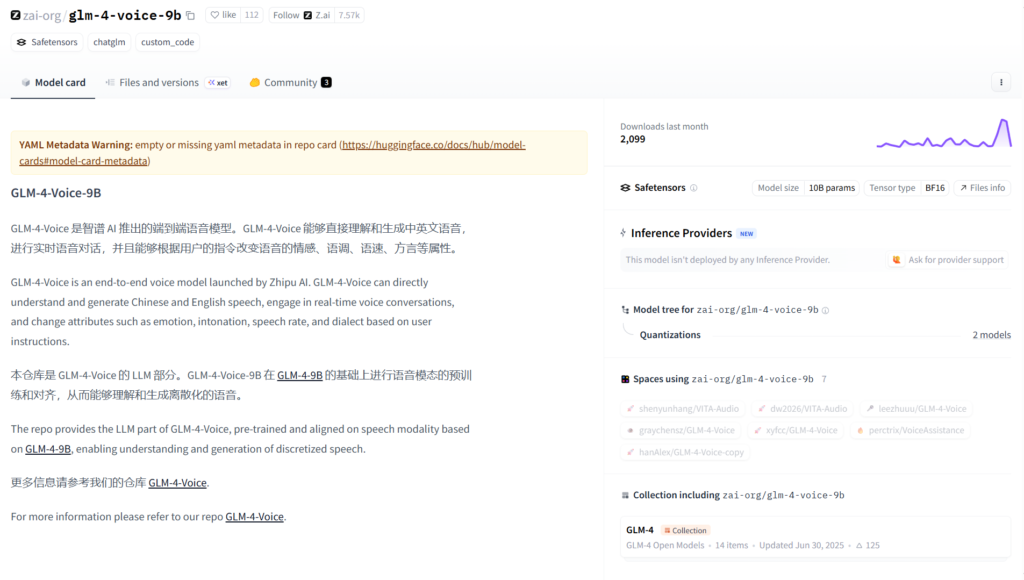

What is GLM-4-Voice?

GLM-4-Voice is an end-to-end open-source voice model from Zhipu AI that directly understands and generates Chinese and English speech for real-time conversations with emotion control.

When was GLM-4-Voice released?

It was released in October 2024 as part of the GLM-4 series, with weights hosted on Hugging Face.

Is GLM-4-Voice free to use?

Yes. It is open-source: the model weights and code are available on Hugging Face under a permissive license, and there are no usage fees. Check the license files in the repository for the exact terms.

What languages does GLM-4-Voice support?

It primarily supports Chinese and English, including mixed bilingual conversations.

Can GLM-4-Voice change voice emotion?

Yes, users control emotion (happy, sad, angry, calm, etc.) through natural language instructions during generation.

What hardware is needed for GLM-4-Voice?

The 9B-parameter model needs a capable GPU for real-time inference. It can be deployed locally with the Hugging Face transformers library.
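As a rough guide to what "capable" means, the sketch below estimates the memory needed just to hold 9 billion weights at common precisions. It takes "9B" at face value and ignores activations, the KV cache, and any audio components loaded alongside the language model, so real usage will be higher. The function name `weight_memory_gib` is made up for this example.

```python
def weight_memory_gib(num_params: float, bytes_per_param: float) -> float:
    """Memory needed to hold the weights alone, in GiB."""
    return num_params * bytes_per_param / 2**30


# "9B" taken at face value; the exact parameter count may differ.
PARAMS = 9e9

# Bytes per parameter for common storage precisions.
PRECISIONS = [("fp32", 4), ("fp16/bf16", 2), ("int8", 1), ("int4", 0.5)]

for name, nbytes in PRECISIONS:
    print(f"{name:>9}: {weight_memory_gib(PARAMS, nbytes):.1f} GiB")
```

At 16-bit precision this comes to roughly 17 GiB for the weights, which is why a GPU with 24 GB or more of memory is a comfortable starting point under these assumptions.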

How does GLM-4-Voice work?

It processes audio input directly and generates audio output, which enables real-time voice dialogue without a separate speech recognition (ASR) or text-to-speech (TTS) stage.

Where can I try GLM-4-Voice?

Use the Hugging Face Spaces demo for quick online testing, or download the weights and code to run it locally.
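For the local route, here is a minimal sketch of fetching the weights with the `huggingface_hub` package. The repository ID `THUDM/glm-4-voice-9b` is an assumption, so confirm the current name on the model's Hugging Face page. The helper names `local_dir_for` and `download_weights` are made up for this example.

```python
from pathlib import Path

# Assumed repository ID; confirm it on the model's Hugging Face page.
REPO_ID = "THUDM/glm-4-voice-9b"


def local_dir_for(repo_id: str, root: str = "models") -> Path:
    """Map 'org/name' to a local folder such as models/name."""
    return Path(root) / repo_id.split("/")[-1]


def download_weights(repo_id: str = REPO_ID) -> str:
    """Download every file in the repository and return the local path."""
    # Imported here so the helper above works without the package installed.
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=repo_id, local_dir=local_dir_for(repo_id))


# Uncomment to fetch the weights (a download of many gigabytes):
# print(download_weights())
```

Running the model afterwards also needs the inference code from the project's repository. This sketch only covers the download step.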